mirror of

https://github.com/alibaba/higress.git

synced 2026-03-22 12:07:39 +08:00

Compare commits

69 Commits

v2.0.1

...

wasm-go-ai

| Author | SHA1 | Date |
|---|---|---|
| | e68a8ac25f | |
| | 96575b982e | |
| | c2d405b2a7 | |
| | 6efb3109f2 | |
| | 1b1c08afb7 | |
| | d24123a55f | |
| | f2a5df3949 | |
| | ebc5b2987e | |
| | ca97cbd75a | |
| | a787e237ce | |
| | 6a1bf90d42 | |
| | 60e476da87 | |
| | 2cb8558cda | |
| | 4d1a037942 | |
| | 39b6eac9d0 | |
| | 7697af9d2b | |
| | 3660715506 | |
| | 7bd438877b | |
| | 0fbeb39cac | |
| | d02c974af4 | |
| | 8ad4970231 | |
| | aee37c5e22 | |
| | 73cf32aadd | |
| | 1ab69fcf82 | |
| | 9b995321bb | |
| | 00cac813e3 | |
| | 548cf2f081 | |
| | c1f2504e87 | |
| | 7e8b0445ad | |
| | 63d5422da6 | |
| | 035e81a5ca | |
| | 9a1edcd4c8 | |
| | 2219a17898 | |
| | 93c1e5c2bb | |
| | 7c2d2b2855 | |
| | b1550e91ab | |
| | 0b42836e85 | |
| | 7c33ebf6ea | |
| | acec48ed8b | |
| | d309bf2e25 | |
| | 496d365a95 | |
| | d952fa562b | |
| | e7561c30e5 | |
| | cdd71155a9 | |
| | a5ccb90b28 | |
| | d76f574ab3 | |
| | bb6c43c767 | |
| | b8f5826a32 | |
| | 0d79386ce2 | |
| | 871ae179c3 | |
| | f8d62a8ac3 | |
| | badf4b7101 | |
| | fc6902ded2 | |
| | d96994767c | |
| | 32e5a59ae0 | |
| | 49bb5ec2b9 | |
| | 11ff2d1d31 | |
| | c67f494b49 | |
| | 299621476f | |
| | 7e6168a644 | |
| | e923cbaecc | |
| | 6f86c31bac | |
| | 51c956f0b3 | |
| | d0693d8c4b | |
| | e298078065 | |
| | 85f8eb5166 | |
| | 0a112d1a1e | |
| | 04ce776f14 | |
| | 952c9ec5dc | |

@@ -3,9 +3,16 @@ name: Build and Push Wasm Plugin Image

|

||||

on:

|

||||

push:

|

||||

tags:

|

||||

- "wasm-go-*-v*.*.*" # matches tags of the form wasm-go-{pluginName}-vX.Y.Z

|

||||

- "wasm-*-*-v*.*.*" # matches tags of the form wasm-{go|rust}-{pluginName}-vX.Y.Z

|

||||

workflow_dispatch:

|

||||

inputs:

|

||||

plugin_type:

|

||||

description: 'Type of the plugin'

|

||||

required: true

|

||||

type: choice

|

||||

options:

|

||||

- go

|

||||

- rust

|

||||

plugin_name:

|

||||

description: 'Name of the plugin'

|

||||

required: true

|

||||

@@ -23,32 +30,42 @@ jobs:

|

||||

env:

|

||||

IMAGE_REGISTRY_SERVICE: ${{ vars.IMAGE_REGISTRY || 'higress-registry.cn-hangzhou.cr.aliyuncs.com' }}

|

||||

IMAGE_REPOSITORY: ${{ vars.PLUGIN_IMAGE_REPOSITORY || 'plugins' }}

|

||||

RUST_VERSION: 1.82

|

||||

GO_VERSION: 1.19

|

||||

TINYGO_VERSION: 0.28.1

|

||||

ORAS_VERSION: 1.0.0

|

||||

steps:

|

||||

- name: Set plugin_name and version from inputs or ref_name

|

||||

- name: Set plugin_type, plugin_name and version from inputs or ref_name

|

||||

id: set_vars

|

||||

run: |

|

||||

if [[ "${{ github.event_name }}" == "workflow_dispatch" ]]; then

|

||||

plugin_type="${{ github.event.inputs.plugin_type }}"

|

||||

plugin_name="${{ github.event.inputs.plugin_name }}"

|

||||

version="${{ github.event.inputs.version }}"

|

||||

else

|

||||

ref_name=${{ github.ref_name }}

|

||||

plugin_type=${ref_name#*-} # strip the field before the plugin type (wasm-)

|

||||

plugin_type=${plugin_type%%-*} # strip the fields after the plugin type (-{plugin_name}-vX.Y.Z)

|

||||

plugin_name=${ref_name#*-*-} # strip the fields before the plugin name (wasm-go-)

|

||||

plugin_name=${plugin_name%-*} # strip the field after the plugin name (-vX.Y.Z)

|

||||

version=$(echo "$ref_name" | awk -F'v' '{print $2}')

|

||||

fi

|

||||

|

||||

if [[ "$plugin_type" == "rust" ]]; then

|

||||

builder_image="higress-registry.cn-hangzhou.cr.aliyuncs.com/plugins/wasm-rust-builder:rust${{ env.RUST_VERSION }}-oras${{ env.ORAS_VERSION }}"

|

||||

else

|

||||

builder_image="higress-registry.cn-hangzhou.cr.aliyuncs.com/plugins/wasm-go-builder:go${{ env.GO_VERSION }}-tinygo${{ env.TINYGO_VERSION }}-oras${{ env.ORAS_VERSION }}"

|

||||

fi

|

||||

echo "PLUGIN_TYPE=$plugin_type" >> $GITHUB_ENV

|

||||

echo "PLUGIN_NAME=$plugin_name" >> $GITHUB_ENV

|

||||

echo "VERSION=$version" >> $GITHUB_ENV

|

||||

echo "BUILDER_IMAGE=$builder_image" >> $GITHUB_ENV

|

||||

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v3

|

||||

|

||||

- name: File Check

|

||||

run: |

|

||||

workspace=${{ github.workspace }}/plugins/wasm-go/extensions/${PLUGIN_NAME}

|

||||

workspace=${{ github.workspace }}/plugins/wasm-${PLUGIN_TYPE}/extensions/${PLUGIN_NAME}

|

||||

push_command="./plugin.tar.gz:application/vnd.oci.image.layer.v1.tar+gzip"

|

||||

|

||||

# Look for spec.yaml

|

||||
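The tag-parsing step in this hunk relies on shell parameter expansion. As a minimal sketch, assuming a tag shaped like `wasm-{go|rust}-{pluginName}-vX.Y.Z`, the same splits can be tried locally. `parse_plugin_tag` is a hypothetical helper, not part of the workflow, and the sample tag is made up. The version line uses `${ref_name##*-v}` instead of the workflow's `awk -F'v'` call, because splitting on every `v` would return the wrong field for a plugin name that itself contains a `v`.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not in the workflow): splits a tag of the form
# wasm-{go|rust}-{pluginName}-vX.Y.Z into type, name, and version.
parse_plugin_tag() {
  local ref_name="$1"
  plugin_type=${ref_name#*-}       # drop the leading "wasm-"
  plugin_type=${plugin_type%%-*}   # drop everything from the next "-" onward
  plugin_name=${ref_name#*-*-}     # drop "wasm-{type}-"
  plugin_name=${plugin_name%-*}    # drop the trailing "-vX.Y.Z"
  version=${ref_name##*-v}         # assumption: keep what follows the last "-v"
}

# Made-up sample tag, for illustration only.
parse_plugin_tag "wasm-go-ai-proxy-v1.0.0"
echo "type=$plugin_type name=$plugin_name version=$version"
# prints: type=go name=ai-proxy version=1.0.0
```

The `#` and `%` forms remove the shortest match, while `##` and `%%` remove the longest, which is why the type uses `%%-*` and the name uses `%-*`.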

@@ -75,10 +92,10 @@ jobs:

|

||||

|

||||

echo "PUSH_COMMAND=\"$push_command\"" >> $GITHUB_ENV

|

||||

|

||||

- name: Run a wasm-go-builder

|

||||

- name: Run a wasm-builder

|

||||

env:

|

||||

PLUGIN_NAME: ${{ env.PLUGIN_NAME }}

|

||||

BUILDER_IMAGE: higress-registry.cn-hangzhou.cr.aliyuncs.com/plugins/wasm-go-builder:go${{ env.GO_VERSION }}-tinygo${{ env.TINYGO_VERSION }}-oras${{ env.ORAS_VERSION }}

|

||||

BUILDER_IMAGE: ${{ env.BUILDER_IMAGE }}

|

||||

run: |

|

||||

docker run -itd --name builder -v ${{ github.workspace }}:/workspace -e PLUGIN_NAME=${{ env.PLUGIN_NAME }} --rm ${{ env.BUILDER_IMAGE }} /bin/bash

|

||||

|

||||

@@ -93,7 +110,7 @@ jobs:

|

||||

echo "TargetImage=${target_image}"

|

||||

echo "TargetImageLatest=${target_image_latest}"

|

||||

|

||||

cd ${{ github.workspace }}/plugins/wasm-go/extensions/${PLUGIN_NAME}

|

||||

cd ${{ github.workspace }}/plugins/wasm-${PLUGIN_TYPE}/extensions/${PLUGIN_NAME}

|

||||

if [ -f ./.buildrc ]; then

|

||||

echo 'Found .buildrc file, sourcing it...'

|

||||

. ./.buildrc

|

||||

@@ -101,7 +118,7 @@ jobs:

|

||||

echo '.buildrc file not found'

|

||||

fi

|

||||

echo "EXTRA_TAGS=${EXTRA_TAGS}"

|

||||

|

||||

if [ "${PLUGIN_TYPE}" == "go" ]; then

|

||||

command="

|

||||

set -e

|

||||

cd /workspace/plugins/wasm-go/extensions/${PLUGIN_NAME}

|

||||

@@ -112,4 +129,21 @@ jobs:

|

||||

oras push ${target_image} ${push_command}

|

||||

oras push ${target_image_latest} ${push_command}

|

||||

"

|

||||

elif [ "${PLUGIN_TYPE}" == "rust" ]; then

|

||||

command="

|

||||

set -e

|

||||

cd /workspace/plugins/wasm-rust/extensions/${PLUGIN_NAME}

|

||||

cargo build --target wasm32-wasi --release

|

||||

cp target/wasm32-wasi/release/*.wasm plugin.wasm

|

||||

tar czvf plugin.tar.gz plugin.wasm

|

||||

echo ${{ secrets.REGISTRY_PASSWORD }} | oras login -u ${{ secrets.REGISTRY_USERNAME }} --password-stdin ${{ env.IMAGE_REGISTRY_SERVICE }}

|

||||

oras push ${target_image} ${push_command}

|

||||

oras push ${target_image_latest} ${push_command}

|

||||

"

|

||||

else

|

||||

|

||||

command="

|

||||

echo \"unknown type ${PLUGIN_TYPE}\"

|

||||

"

|

||||

fi

|

||||

docker exec builder bash -c "$command"

|

||||

|

||||
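The per-type branching in the build step can also be written as a `case` dispatch that fails on an unknown plugin type rather than handing a placeholder command to the container. This is only a sketch: `build_commands` is a hypothetical function, the Rust commands are copied from the hunk above, and the Go branch prints only the `cd` line because the rest of the Go build commands are not visible in this diff.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints the in-container commands for a plugin type,
# or returns non-zero when the type is not recognised.
build_commands() {
  local plugin_type="$1" plugin_name="$2"
  case "$plugin_type" in
    go)
      # Remaining Go build steps are elided in the diff and not reproduced here.
      printf 'cd /workspace/plugins/wasm-go/extensions/%s\n' "$plugin_name"
      ;;
    rust)
      printf 'cd /workspace/plugins/wasm-rust/extensions/%s\n' "$plugin_name"
      printf '%s\n' 'cargo build --target wasm32-wasi --release'
      printf '%s\n' 'cp target/wasm32-wasi/release/*.wasm plugin.wasm'
      printf '%s\n' 'tar czvf plugin.tar.gz plugin.wasm'
      ;;
    *)
      echo "unknown type ${plugin_type}" >&2
      return 1
      ;;
  esac
}

# Usage with a made-up plugin name: only run the commands if the type is known.
if command=$(build_commands rust demo-wasm); then
  echo "$command"
fi
```

Returning a non-zero status lets the calling step stop before `docker exec` runs, which is one way to keep a mistyped plugin type from producing a green build.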

35

.github/workflows/helm-docs.yaml

vendored

Normal file

@@ -0,0 +1,35 @@

|

||||

name: "Helm Docs"

|

||||

|

||||

on:

|

||||

pull_request:

|

||||

branches:

|

||||

- "*"

|

||||

|

||||

push:

|

||||

|

||||

jobs:

|

||||

|

||||

helm:

|

||||

name: Helm Docs

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- name: Checkout

|

||||

uses: actions/checkout@v4

|

||||

with:

|

||||

fetch-depth: 1

|

||||

|

||||

- name: Setup Go

|

||||

uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version: '1.22.9'

|

||||

|

||||

- name: Run helm-docs

|

||||

run: |

|

||||

GOBIN=$PWD GO111MODULE=on go install github.com/norwoodj/helm-docs/cmd/helm-docs@v1.14.2

|

||||

./helm-docs -c ${GITHUB_WORKSPACE}/helm/higress -f ../core/values.yaml

|

||||

DIFF=$(git diff ${GITHUB_WORKSPACE}/helm/higress/*md)

|

||||

if [ ! -z "$DIFF" ]; then

|

||||

echo "Please run helm-docs in your clone of your fork of the project, and commit an updated README.md for the chart."

|

||||

fi

|

||||

git diff --exit-code

|

||||

rm -f ./helm-docs

|

||||

24

.github/workflows/release-crd.yaml

vendored

Normal file

@@ -0,0 +1,24 @@

|

||||

name: Release CRD to GitHub

|

||||

|

||||

on:

|

||||

push:

|

||||

tags:

|

||||

- "v*.*.*"

|

||||

workflow_dispatch: ~

|

||||

|

||||

jobs:

|

||||

release-crd:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v4

|

||||

|

||||

- name: generate crds

|

||||

run: |

|

||||

cat helm/core/crds/customresourcedefinitions.gen.yaml helm/core/crds/istio-envoyfilter.yaml > crd.yaml

|

||||

|

||||

- name: Upload CRD to the GitHub release

|

||||

uses: softprops/action-gh-release@v2

|

||||

if: startsWith(github.ref, 'refs/tags/')

|

||||

with:

|

||||

files: |

|

||||

crd.yaml

|

||||
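The `generate crds` step joins two YAML files with plain `cat`. Whether that yields a valid multi-document stream depends on the files already carrying `---` separators, which this diff does not show. A minimal sketch, assuming they might not: `join_yaml` is a hypothetical helper that writes a `---` line between inputs and guarantees each input ends with a newline.

```shell
#!/usr/bin/env bash
# Hypothetical helper: concatenates YAML files into one multi-document stream,
# placing a "---" separator between consecutive inputs.
join_yaml() {
  local first=1 f
  for f in "$@"; do
    if [ "$first" -eq 0 ]; then
      printf '%s\n' '---'   # '%s' avoids printf treating "---" as an option
    fi
    awk 1 "$f"              # prints every line and adds a final newline if missing
    first=0
  done
}

# Example call using the paths from the workflow step (not executed here):
#   join_yaml helm/core/crds/customresourcedefinitions.gen.yaml \
#             helm/core/crds/istio-envoyfilter.yaml > crd.yaml
```

If the input files already begin with `---`, the extra separator only produces an empty document, which most YAML consumers skip.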

3

.gitignore

vendored

@@ -16,4 +16,5 @@ helm/**/charts/**.tgz

|

||||

target/

|

||||

tools/hack/cluster.conf

|

||||

envoy/1.20

|

||||

istio/1.12

|

||||

istio/1.12

|

||||

Cargo.lock

|

||||

|

||||

@@ -3,7 +3,7 @@

|

||||

/istio @SpecialYang @johnlanni

|

||||

/pkg @SpecialYang @johnlanni @CH3CHO

|

||||

/plugins @johnlanni @WeixinX @CH3CHO

|

||||

/plugins/wasm-rust @007gzs

|

||||

/plugins/wasm-rust @007gzs @jizhuozhi

|

||||

/registry @NameHaibinZhang @2456868764 @johnlanni

|

||||

/test @Xunzhuo @2456868764 @CH3CHO

|

||||

/tools @johnlanni @Xunzhuo @2456868764

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

# Contributing to Higress

|

||||

|

||||

It is warmly welcomed if you have interest to hack on Higress. First, we encourage this kind of willing very much. And here is a list of contributing guide for you.

|

||||

Your interest in contributing to Higress is warmly welcomed. We strongly encourage this kind of willingness, and the following contributing guide is here to help you get started.

|

||||

|

||||

[[中文贡献文档](./CONTRIBUTING_CN.md)]

|

||||

|

||||

|

||||

195

CONTRIBUTING_JP.md

Normal file

@@ -0,0 +1,195 @@

|

||||

# Higress への貢献

|

||||

|

||||

Higress のハッキングに興味がある場合は、温かく歓迎します。まず、このような意欲を非常に奨励します。そして、以下は貢献ガイドのリストです。

|

||||

|

||||

[[中文](./CONTRIBUTING.md)] | [[English Contributing Document](./CONTRIBUTING_EN.md)]

|

||||

|

||||

## トピック

|

||||

|

||||

- [Higress への貢献](#higress-への貢献)

|

||||

- [トピック](#トピック)

|

||||

- [セキュリティ問題の報告](#セキュリティ問題の報告)

|

||||

- [一般的な問題の報告](#一般的な問題の報告)

|

||||

- [コードとドキュメントの貢献](#コードとドキュメントの貢献)

|

||||

- [ワークスペースの準備](#ワークスペースの準備)

|

||||

- [ブランチの定義](#ブランチの定義)

|

||||

- [コミットルール](#コミットルール)

|

||||

- [コミットメッセージ](#コミットメッセージ)

|

||||

- [コミット内容](#コミット内容)

|

||||

- [PR 説明](#pr-説明)

|

||||

- [テストケースの貢献](#テストケースの貢献)

|

||||

- [何かを手伝うための参加](#何かを手伝うための参加)

|

||||

- [コードスタイル](#コードスタイル)

|

||||

|

||||

## セキュリティ問題の報告

|

||||

|

||||

セキュリティ問題は常に真剣に扱われます。通常の原則として、セキュリティ問題を広めることは推奨しません。Higress のセキュリティ問題を発見した場合は、公開で議論せず、公開の問題を開かないでください。代わりに、[higress@googlegroups.com](mailto:higress@googlegroups.com) にプライベートなメールを送信して報告することをお勧めします。

|

||||

|

||||

## 一般的な問題の報告

|

||||

|

||||

正直なところ、Higress のすべてのユーザーを非常に親切な貢献者と見なしています。Higress を体験した後、プロジェクトに対するフィードバックがあるかもしれません。その場合は、[NEW ISSUE](https://github.com/alibaba/higress/issues/new/choose) を通じて問題を開くことを自由に行ってください。

|

||||

|

||||

Higress プロジェクトを分散型で協力しているため、**よく書かれた**、**詳細な**、**明確な**問題報告を高く評価します。コミュニケーションをより効率的にするために、問題が検索リストに存在するかどうかを検索することを希望します。存在する場合は、新しい問題を開くのではなく、既存の問題のコメントに詳細を追加してください。

|

||||

|

||||

問題の詳細をできるだけ標準化するために、問題報告者のために [ISSUE TEMPLATE](./.github/ISSUE_TEMPLATE) を設定しました。テンプレートのフィールドに従って指示に従って記入してください。

|

||||

|

||||

問題を開く場合は多くのケースがあります:

|

||||

|

||||

* バグ報告

|

||||

* 機能要求

|

||||

* パフォーマンス問題

|

||||

* 機能提案

|

||||

* 機能設計

|

||||

* 助けが必要

|

||||

* ドキュメントが不完全

|

||||

* テストの改善

|

||||

* プロジェクトに関する質問

|

||||

* その他

|

||||

|

||||

また、新しい問題を記入する際には、投稿から機密データを削除することを忘れないでください。機密データには、パスワード、秘密鍵、ネットワークの場所、プライベートなビジネスデータなどが含まれる可能性があります。

|

||||

|

||||

## コードとドキュメントの貢献

|

||||

|

||||

Higress プロジェクトをより良くするためのすべての行動が奨励されます。GitHub では、Higress のすべての改善は PR(プルリクエストの略)を通じて行うことができます。

|

||||

|

||||

* タイプミスを見つけた場合は、修正してみてください!

|

||||

* バグを見つけた場合は、修正してみてください!

|

||||

* 冗長なコードを見つけた場合は、削除してみてください!

|

||||

* 欠落しているテストケースを見つけた場合は、追加してみてください!

|

||||

* 機能を強化できる場合は、**ためらわないでください**!

|

||||

* コードが不明瞭な場合は、コメントを追加して明確にしてください!

|

||||

* コードが醜い場合は、リファクタリングしてみてください!

|

||||

* ドキュメントの改善に役立つ場合は、さらに良いです!

|

||||

* ドキュメントが不正確な場合は、修正してください!

|

||||

* ...

|

||||

|

||||

実際には、それらを完全にリストすることは不可能です。1つの原則を覚えておいてください:

|

||||

|

||||

> あなたからの PR を楽しみにしています。

|

||||

|

||||

Higress を PR で改善する準備ができたら、ここで PR ルールを確認することをお勧めします。

|

||||

|

||||

* [ワークスペースの準備](#ワークスペースの準備)

|

||||

* [ブランチの定義](#ブランチの定義)

|

||||

* [コミットルール](#コミットルール)

|

||||

* [PR 説明](#pr-説明)

|

||||

|

||||

### ワークスペースの準備

|

||||

|

||||

PR を提出するために、GitHub ID に登録していることを前提とします。その後、以下の手順で準備を完了できます:

|

||||

|

||||

1. Higress を自分のリポジトリに **FORK** します。この作業を行うには、[alibaba/higress](https://github.com/alibaba/higress) のメインページの右上にある Fork ボタンをクリックするだけです。その後、`https://github.com/<your-username>/higress` に自分のリポジトリが作成されます。ここで、`your-username` はあなたの GitHub ユーザー名です。

|

||||

|

||||

2. 自分のリポジトリをローカルに **CLONE** します。`git clone git@github.com:<your-username>/higress.git` を使用してリポジトリをローカルマシンにクローンします。その後、新しいブランチを作成して、行いたい変更を完了できます。

|

||||

|

||||

3. リモートを `git@github.com:alibaba/higress.git` に設定します。以下の2つのコマンドを使用します:

|

||||

|

||||

```bash

|

||||

git remote add upstream git@github.com:alibaba/higress.git

|

||||

git remote set-url --push upstream no-pushing

|

||||

```

|

||||

|

||||

このリモート設定を使用すると、git リモート設定を次のように確認できます:

|

||||

|

||||

```shell

|

||||

$ git remote -v

|

||||

origin git@github.com:<your-username>/higress.git (fetch)

|

||||

origin git@github.com:<your-username>/higress.git (push)

|

||||

upstream git@github.com:alibaba/higress.git (fetch)

|

||||

upstream no-pushing (push)

|

||||

```

|

||||

|

||||

これを追加すると、ローカルブランチを上流ブランチと簡単に同期できます。

|

||||

|

||||

### ブランチの定義

|

||||

|

||||

現在、プルリクエストを通じたすべての貢献は Higress の [main ブランチ](https://github.com/alibaba/higress/tree/main) に対するものであると仮定します。貢献する前に、ブランチの定義を理解することは非常に役立ちます。

|

||||

|

||||

貢献者として、プルリクエストを通じたすべての貢献は main ブランチに対するものであることを再度覚えておいてください。Higress プロジェクトには、リリースブランチ(例:0.6.0、0.6.1)、機能ブランチ、ホットフィックスブランチなど、いくつかの他のブランチがあります。

|

||||

|

||||

正式にバージョンをリリースする際には、リリースブランチが作成され、バージョン番号で命名されます。

|

||||

|

||||

リリース後、リリースブランチのコミットを main ブランチにマージします。

|

||||

|

||||

特定のバージョンにバグがある場合、後のバージョンで修正するか、特定のホットフィックスバージョンで修正するかを決定します。ホットフィックスバージョンで修正することを決定した場合、対応するリリースブランチに基づいてホットフィックスブランチをチェックアウトし、コード修正と検証を行い、main ブランチにマージします。

|

||||

|

||||

大きな機能については、開発と検証のために機能ブランチを引き出します。

|

||||

|

||||

### コミットルール

|

||||

|

||||

実際には、Higress ではコミット時に2つのルールを真剣に考えています:

|

||||

|

||||

* [コミットメッセージ](#コミットメッセージ)

|

||||

* [コミット内容](#コミット内容)

|

||||

|

||||

#### コミットメッセージ

|

||||

|

||||

コミットメッセージは、提出された PR の目的をレビュアーがよりよく理解するのに役立ちます。また、コードレビューの手続きを加速するのにも役立ちます。貢献者には、曖昧なメッセージではなく、**明確な**コミットメッセージを使用することを奨励します。一般的に、以下のコミットメッセージタイプを推奨します:

|

||||

|

||||

* docs: xxxx. 例:"docs: add docs about Higress cluster installation".

|

||||

* feature: xxxx. 例:"feature: use higress config instead of istio config".

|

||||

* bugfix: xxxx. 例:"bugfix: fix panic when input nil parameter".

|

||||

* refactor: xxxx. 例:"refactor: simplify to make codes more readable".

|

||||

* test: xxx. 例:"test: add unit test case for func InsertIntoArray".

|

||||

* その他の読みやすく明確な表現方法。

|

||||

|

||||

一方で、以下のような方法でのコミットメッセージは推奨しません:

|

||||

|

||||

* ~~バグ修正~~

|

||||

* ~~更新~~

|

||||

* ~~ドキュメント追加~~

|

||||

|

||||

迷った場合は、[Git コミットメッセージの書き方](http://chris.beams.io/posts/git-commit/) を参照してください。

|

||||

|

||||

#### コミット内容

|

||||

|

||||

コミット内容は、1つのコミットに含まれるすべての内容の変更を表します。1つのコミットに、他のコミットの助けを借りずにレビュアーが完全にレビューできる内容を含めるのが最善です。言い換えれば、1つのコミットの内容は CI を通過でき、コードの混乱を避けることができます。簡単に言えば、次の3つの小さなルールを覚えておく必要があります:

|

||||

|

||||

* コミットで非常に大きな変更を避ける;

|

||||

* 各コミットが完全でレビュー可能であること。

|

||||

* コミット時に git config(`user.name`、`user.email`)を確認して、それが GitHub ID に関連付けられていることを確認します。

|

||||

|

||||

```bash

|

||||

git config --get user.name

|

||||

git config --get user.email

|

||||

```

|

||||

|

||||

* pr を提出する際には、'changes/' フォルダーの下の XXX.md ファイルに現在の変更の簡単な説明を追加してください。

|

||||

|

||||

さらに、コード変更部分では、すべての貢献者が Higress の [コードスタイル](#コードスタイル) を読むことをお勧めします。

|

||||

|

||||

コミットメッセージやコミット内容に関係なく、コードレビューに重点を置いています。

|

||||

|

||||

### PR 説明

|

||||

|

||||

PR は Higress プロジェクトファイルを変更する唯一の方法です。レビュアーが目的をよりよく理解できるようにするために、PR 説明は詳細すぎることはありません。貢献者には、[PR テンプレート](./.github/PULL_REQUEST_TEMPLATE.md) に従ってプルリクエストを完了することを奨励します。

|

||||

|

||||

### 開発前の準備

|

||||

|

||||

```shell

|

||||

make prebuild && go mod tidy

|

||||

```

|

||||

|

||||

## テストケースの貢献

|

||||

|

||||

テストケースは歓迎されます。現在、Higress の機能テストケースが高優先度です。

|

||||

|

||||

* 単体テストの場合、同じモジュールの test ディレクトリに xxxTest.go という名前のテストファイルを作成する必要があります。

|

||||

* 統合テストの場合、統合テストを test ディレクトリに配置できます。

|

||||

//TBD

|

||||

|

||||

## 何かを手伝うための参加

|

||||

|

||||

GitHub を Higress の協力の主要な場所として選択しました。したがって、Higress の最新の更新は常にここにあります。PR を通じた貢献は明確な助けの方法ですが、他の方法も呼びかけています。

|

||||

|

||||

* 可能であれば、他の人の質問に返信する;

|

||||

* 他のユーザーの問題を解決するのを手伝う;

|

||||

* 他の人の PR 設計をレビューするのを手伝う;

|

||||

* 他の人の PR のコードをレビューするのを手伝う;

|

||||

* Higress について議論して、物事を明確にする;

|

||||

* GitHub 以外で Higress 技術を宣伝する;

|

||||

* Higress に関するブログを書くなど。

|

||||

|

||||

## コードスタイル

|

||||

//TBD

|

||||

要するに、**どんな助けも貢献です。**

|

||||

@@ -72,17 +72,17 @@ go.test.coverage: prebuild

|

||||

|

||||

.PHONY: build

|

||||

build: prebuild $(OUT)

|

||||

GOPROXY=$(GOPROXY) GOOS=$(GOOS_LOCAL) GOARCH=$(GOARCH_LOCAL) LDFLAGS=$(RELEASE_LDFLAGS) tools/hack/gobuild.sh $(OUT)/ $(HIGRESS_BINARIES)

|

||||

GOPROXY="$(GOPROXY)" GOOS=$(GOOS_LOCAL) GOARCH=$(GOARCH_LOCAL) LDFLAGS=$(RELEASE_LDFLAGS) tools/hack/gobuild.sh $(OUT)/ $(HIGRESS_BINARIES)

|

||||

|

||||

.PHONY: build-linux

|

||||

build-linux: prebuild $(OUT)

|

||||

GOPROXY=$(GOPROXY) GOOS=linux GOARCH=$(GOARCH_LOCAL) LDFLAGS=$(RELEASE_LDFLAGS) tools/hack/gobuild.sh $(OUT_LINUX)/ $(HIGRESS_BINARIES)

|

||||

GOPROXY="$(GOPROXY)" GOOS=linux GOARCH=$(GOARCH_LOCAL) LDFLAGS=$(RELEASE_LDFLAGS) tools/hack/gobuild.sh $(OUT_LINUX)/ $(HIGRESS_BINARIES)

|

||||

|

||||

$(AMD64_OUT_LINUX)/higress:

|

||||

GOPROXY=$(GOPROXY) GOOS=linux GOARCH=amd64 LDFLAGS=$(RELEASE_LDFLAGS) tools/hack/gobuild.sh ./out/linux_amd64/ $(HIGRESS_BINARIES)

|

||||

GOPROXY="$(GOPROXY)" GOOS=linux GOARCH=amd64 LDFLAGS=$(RELEASE_LDFLAGS) tools/hack/gobuild.sh ./out/linux_amd64/ $(HIGRESS_BINARIES)

|

||||

|

||||

$(ARM64_OUT_LINUX)/higress:

|

||||

GOPROXY=$(GOPROXY) GOOS=linux GOARCH=arm64 LDFLAGS=$(RELEASE_LDFLAGS) tools/hack/gobuild.sh ./out/linux_arm64/ $(HIGRESS_BINARIES)

|

||||

GOPROXY="$(GOPROXY)" GOOS=linux GOARCH=arm64 LDFLAGS=$(RELEASE_LDFLAGS) tools/hack/gobuild.sh ./out/linux_arm64/ $(HIGRESS_BINARIES)

|

||||

|

||||

.PHONY: build-hgctl

|

||||

build-hgctl: prebuild $(OUT)

|

||||
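The diff does not state why `$(GOPROXY)` is now quoted. One plausible reason is that a Go proxy list may use `|` as a separator, and make pastes the value into the recipe before the shell parses it. The sketch below simulates that with `sh -c`; the proxy value and the `run_recipe` helper are assumptions chosen for illustration.

```shell
#!/usr/bin/env bash
# Assumed example value; GOPROXY accepts both "," and "|" between entries.
proxy='https://goproxy.cn|direct'

# Hypothetical helper: runs a recipe line the way make would hand it to the shell.
run_recipe() {
  sh -c "$1" 2>/dev/null
}

# Unquoted: the shell treats "|" as a pipe and looks for a command named "direct",
# so on a system without such a command the recipe exits non-zero.
run_recipe "GOPROXY=$proxy printenv GOPROXY" || echo "unquoted recipe failed"

# Quoted: the whole value reaches the child process as a single variable.
run_recipe "GOPROXY=\"$proxy\" printenv GOPROXY"
```

With a comma-separated value both forms behave the same, so the quoting only matters for values that contain shell metacharacters.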

@@ -221,11 +221,15 @@ clean-higress: ## Cleans all the intermediate files and folders previously gener

|

||||

rm -rf $(DIRS_TO_CLEAN)

|

||||

|

||||

clean-istio:

|

||||

rm -rf external/api

|

||||

rm -rf external/client-go

|

||||

rm -rf external/istio

|

||||

rm -rf external/pkg

|

||||

|

||||

clean-gateway: clean-istio

|

||||

rm -rf external/envoy

|

||||

rm -rf external/proxy

|

||||

rm -rf external/go-control-plane

|

||||

rm -rf external/package/envoy.tar.gz

|

||||

|

||||

clean-env:

|

||||

@@ -284,6 +288,8 @@ delete-cluster: $(tools/kind) ## Delete kind cluster.

|

||||

.PHONY: kube-load-image

|

||||

kube-load-image: $(tools/kind) ## Install the Higress image to a kind cluster using the provided $IMAGE and $TAG.

|

||||

tools/hack/kind-load-image.sh higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/higress $(TAG)

|

||||

tools/hack/docker-pull-image.sh higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/pilot $(ISTIO_LATEST_IMAGE_TAG)

|

||||

tools/hack/docker-pull-image.sh higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/gateway $(ENVOY_LATEST_IMAGE_TAG)

|

||||

tools/hack/docker-pull-image.sh higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/dubbo-provider-demo 0.0.3-x86

|

||||

tools/hack/docker-pull-image.sh higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/nacos-standlone-rc3 1.0.0-RC3

|

||||

tools/hack/docker-pull-image.sh docker.io/hashicorp/consul 1.16.0

|

||||

@@ -294,6 +300,7 @@ kube-load-image: $(tools/kind) ## Install the Higress image to a kind cluster us

|

||||

tools/hack/docker-pull-image.sh higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/echo-server v1.0

|

||||

tools/hack/docker-pull-image.sh higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/echo-body 1.0.0

|

||||

tools/hack/docker-pull-image.sh openpolicyagent/opa latest

|

||||

tools/hack/docker-pull-image.sh curlimages/curl latest

|

||||

tools/hack/docker-pull-image.sh registry.cn-hangzhou.aliyuncs.com/2456868764/httpbin 1.0.2

|

||||

tools/hack/docker-pull-image.sh registry.cn-hangzhou.aliyuncs.com/hinsteny/nacos-standlone-rc3 1.0.0-RC3

|

||||

tools/hack/kind-load-image.sh higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/dubbo-provider-demo 0.0.3-x86

|

||||

@@ -306,6 +313,7 @@ kube-load-image: $(tools/kind) ## Install the Higress image to a kind cluster us

|

||||

tools/hack/kind-load-image.sh higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/echo-server v1.0

|

||||

tools/hack/kind-load-image.sh higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/echo-body 1.0.0

|

||||

tools/hack/kind-load-image.sh openpolicyagent/opa latest

|

||||

tools/hack/kind-load-image.sh curlimages/curl latest

|

||||

tools/hack/kind-load-image.sh registry.cn-hangzhou.aliyuncs.com/2456868764/httpbin 1.0.2

|

||||

tools/hack/kind-load-image.sh registry.cn-hangzhou.aliyuncs.com/hinsteny/nacos-standlone-rc3 1.0.0-RC3

|

||||

|

||||

|

||||

53

README.md

@@ -1,3 +1,4 @@

|

||||

<a name="readme-top"></a>

|

||||

<h1 align="center">

|

||||

<img src="https://img.alicdn.com/imgextra/i2/O1CN01NwxLDd20nxfGBjxmZ_!!6000000006895-2-tps-960-290.png" alt="Higress" width="240" height="72.5">

|

||||

<br>

|

||||

@@ -9,7 +10,7 @@

|

||||

[](https://www.apache.org/licenses/LICENSE-2.0.html)

|

||||

|

||||

[**官网**](https://higress.cn/) |

|

||||

[**文档**](https://higress.cn/docs/latest/user/quickstart/) |

|

||||

[**文档**](https://higress.cn/docs/latest/overview/what-is-higress/) |

|

||||

[**博客**](https://higress.cn/blog/) |

|

||||

[**电子书**](https://higress.cn/docs/ebook/wasm14/) |

|

||||

[**开发指引**](https://higress.cn/docs/latest/dev/architecture/) |

|

||||

@@ -17,18 +18,19 @@

|

||||

|

||||

|

||||

<p>

|

||||

<a href="README_EN.md"> English <a/> | 中文

|

||||

<a href="README_EN.md"> English <a/>| 中文 | <a href="README_JP.md"> 日本語 <a/>

|

||||

</p>

|

||||

|

||||

|

||||

Higress 是基于阿里内部多年的 Envoy Gateway 实践沉淀,以开源 [Istio](https://github.com/istio/istio) 与 [Envoy](https://github.com/envoyproxy/envoy) 为核心构建的云原生 API 网关。

|

||||

Higress 是一款云原生 API 网关,内核基于 Istio 和 Envoy,可以用 Go/Rust/JS 等编写 Wasm 插件,提供了数十个现成的通用插件,以及开箱即用的控制台(demo 点[这里](http://demo.higress.io/))

|

||||

|

||||

Higress 在阿里内部作为 AI 网关,承载了通义千问 APP、百炼大模型 API、机器学习 PAI 平台等 AI 业务的流量。

|

||||

Higress 在阿里内部为解决 Tengine reload 对长连接业务有损,以及 gRPC/Dubbo 负载均衡能力不足而诞生。

|

||||

|

||||

Higress 能够用统一的协议对接国内外所有 LLM 模型厂商,同时具备丰富的 AI 可观测、多模型负载均衡/fallback、AI token 流控、AI 缓存等能力:

|

||||

阿里云基于 Higress 构建了云原生 API 网关产品,为大量企业客户提供 99.99% 的网关高可用保障服务能力。

|

||||

|

||||

|

||||

Higress 基于 AI 网关能力,支撑了通义千问 APP、百炼大模型 API、机器学习 PAI 平台等 AI 业务。同时服务国内头部的 AIGC 企业(如零一万物),以及 AI 产品(如 FastGPT)

|

||||

|

||||

|

||||

|

||||

|

||||

## Summary

|

||||

@@ -58,32 +60,45 @@ docker run -d --rm --name higress-ai -v ${PWD}:/data \

|

||||

- 8080 端口:网关 HTTP 协议入口

|

||||

- 8443 端口:网关 HTTPS 协议入口

|

||||

|

||||

**Higress 的所有 Docker 镜像都一直使用自己独享的仓库,不受 Docker Hub 境内不可访问的影响**

|

||||

**Higress 的所有 Docker 镜像都一直使用自己独享的仓库,不受 Docker Hub 境内访问受限的影响**

|

||||

|

||||

K8s 下使用 Helm 部署等其他安装方式可以参考官网 [Quick Start 文档](https://higress.cn/docs/latest/user/quickstart/)。

|

||||

|

||||

如果您是在云上部署,生产环境推荐使用[企业版](https://higress.io/cloud/),开发测试可以使用下面一键部署社区版:

|

||||

|

||||

[](https://computenest.console.aliyun.com/service/instance/create/default?type=user&ServiceName=Higress社区版)

|

||||

|

||||

|

||||

## 使用场景

|

||||

|

||||

- **AI 网关**:

|

||||

|

||||

Higress 提供了一站式的 AI 插件集,可以增强依赖 AI 能力业务的稳定性、灵活性、可观测性,使得业务与 AI 的集成更加便捷和高效。

|

||||

Higress 能够用统一的协议对接国内外所有 LLM 模型厂商,同时具备丰富的 AI 可观测、多模型负载均衡/fallback、AI token 流控、AI 缓存等能力:

|

||||

|

||||

|

||||

|

||||

- **Kubernetes Ingress 网关**:

|

||||

|

||||

Higress 可以作为 K8s 集群的 Ingress 入口网关, 并且兼容了大量 K8s Nginx Ingress 的注解,可以从 K8s Nginx Ingress 快速平滑迁移到 Higress。

|

||||

|

||||

支持 [Gateway API](https://gateway-api.sigs.k8s.io/) 标准,支持用户从 Ingress API 平滑迁移到 Gateway API。

|

||||

|

||||

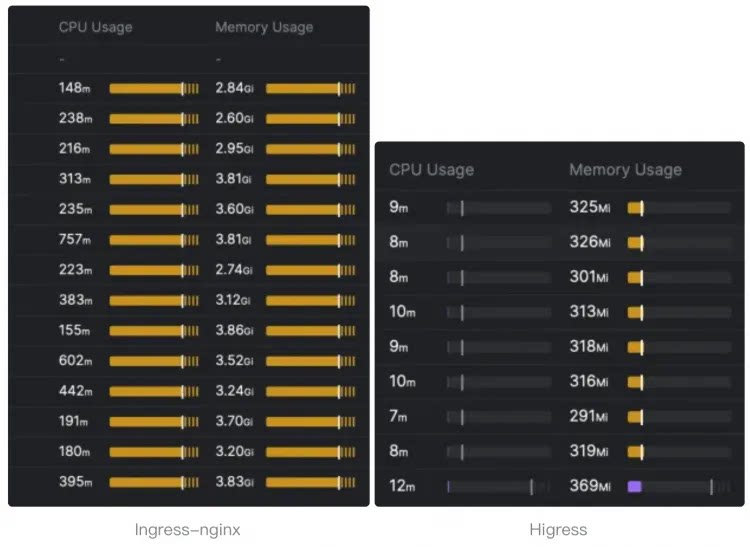

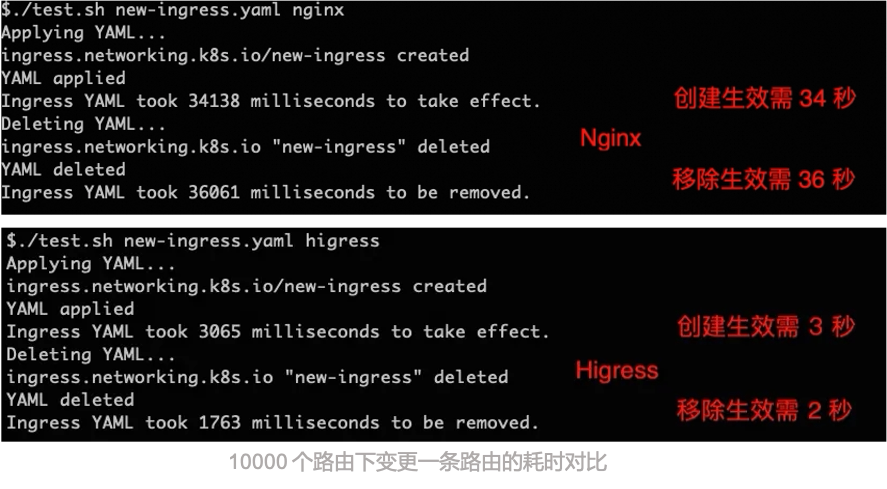

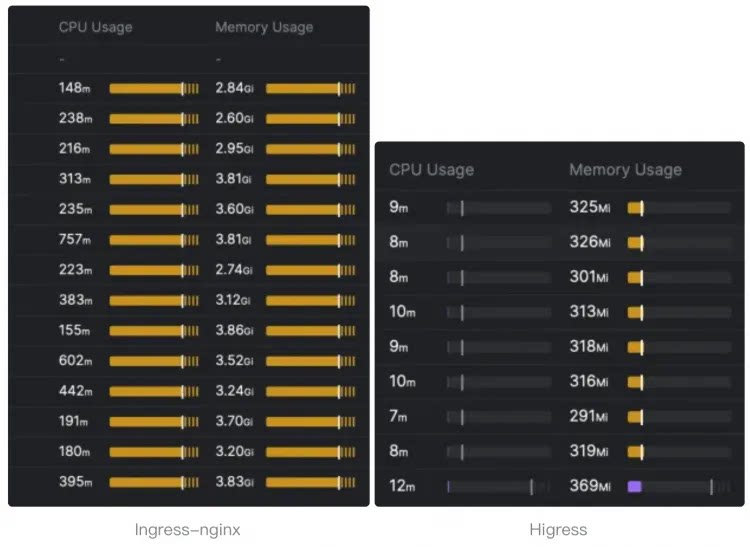

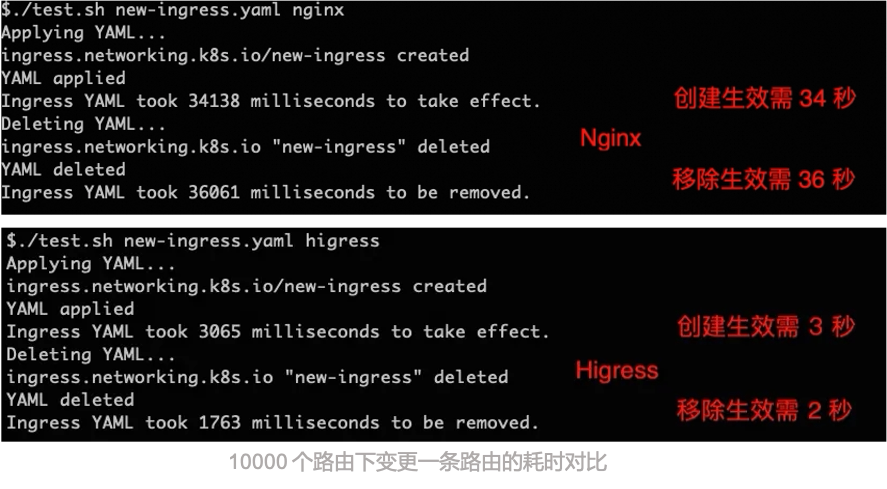

相比 ingress-nginx,资源开销大幅下降,路由变更生效速度有十倍提升:

|

||||

|

||||

|

||||

|

||||

|

||||

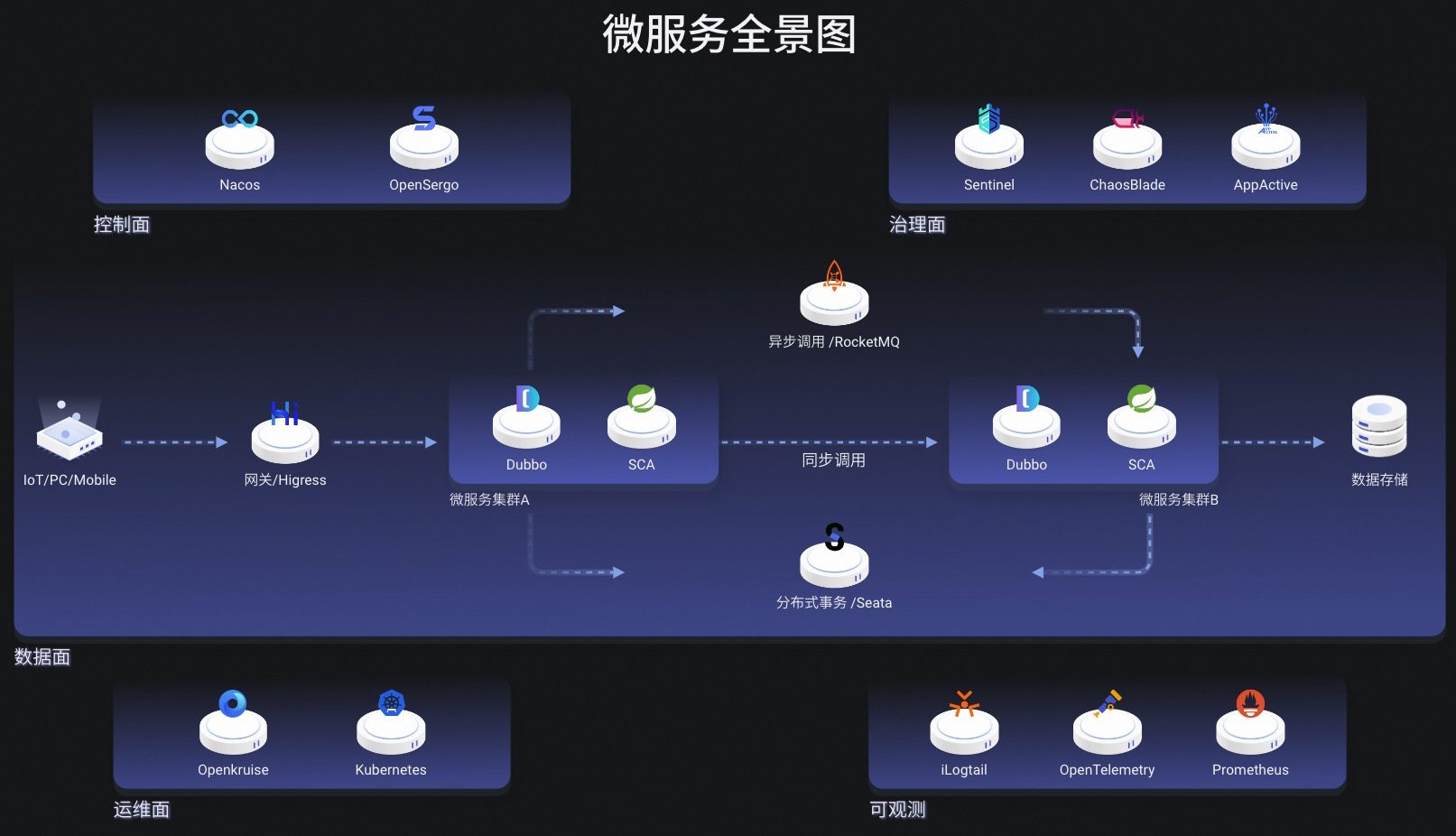

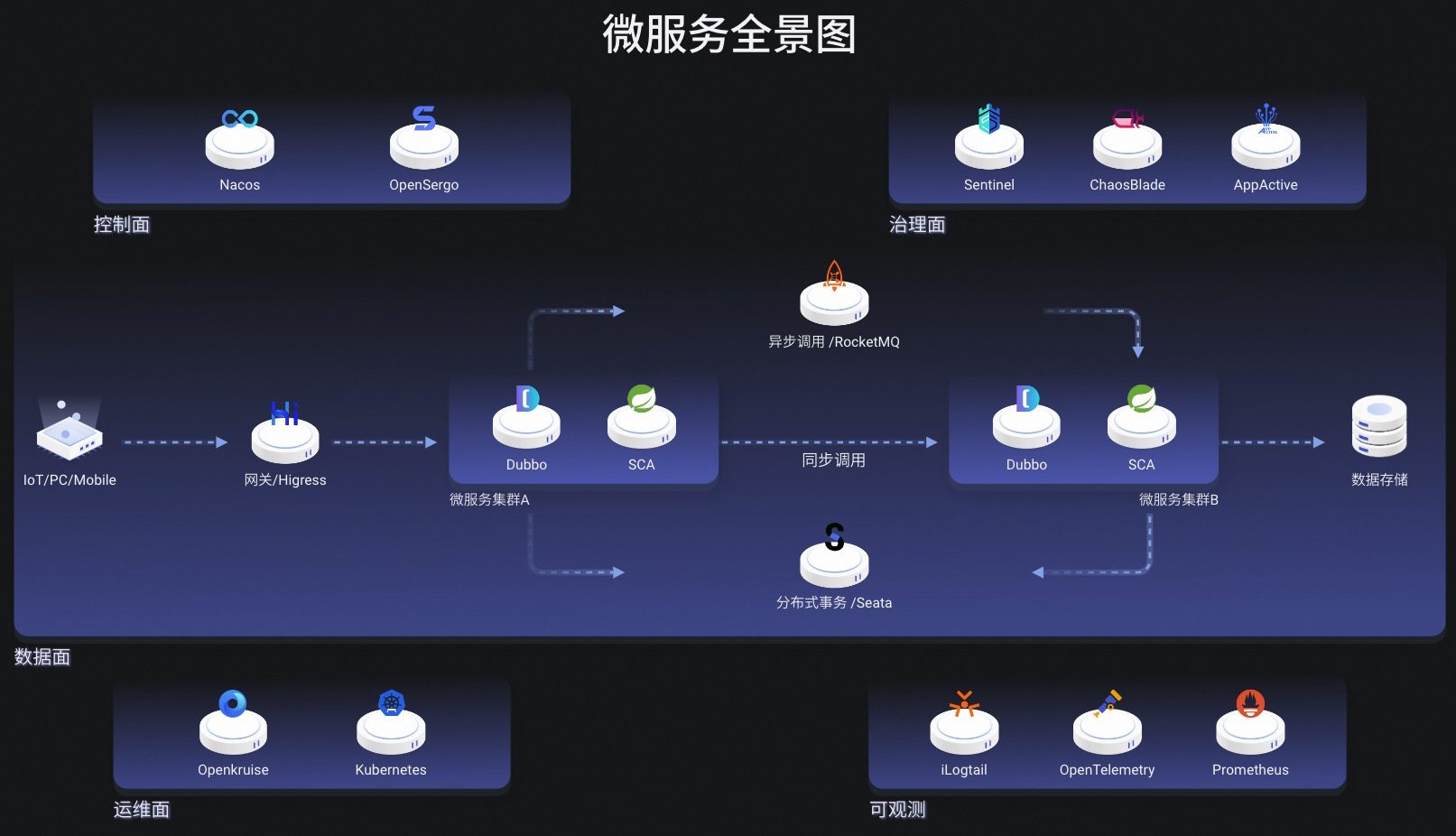

- **微服务网关**:

|

||||

|

||||

Higress 可以作为微服务网关, 能够对接多种类型的注册中心发现服务配置路由,例如 Nacos, ZooKeeper, Consul, Eureka 等。

|

||||

|

||||

并且深度集成了 [Dubbo](https://github.com/apache/dubbo), [Nacos](https://github.com/alibaba/nacos), [Sentinel](https://github.com/alibaba/Sentinel) 等微服务技术栈,基于 Envoy C++ 网关内核的出色性能,相比传统 Java 类微服务网关,可以显著降低资源使用率,减少成本。

|

||||

|

||||

|

||||

|

||||

- **安全防护网关**:

|

||||

|

||||

Higress 可以作为安全防护网关, 提供 WAF 的能力,并且支持多种认证鉴权策略,例如 key-auth, hmac-auth, jwt-auth, basic-auth, oidc 等。

|

||||

Higress 可以作为安全防护网关, 提供 WAF 的能力,并且支持多种认证鉴权策略,例如 key-auth, hmac-auth, jwt-auth, basic-auth, oidc 等。

|

||||

|

||||

## 核心优势

|

||||

|

||||

@@ -165,7 +180,7 @@ K8s 下使用 Helm 部署等其他安装方式可以参考官网 [Quick Start

|

||||

|

||||

### 交流群

|

||||

|

||||

|

||||

|

||||

|

||||

### 技术分享

|

||||

|

||||

@@ -176,4 +191,20 @@ K8s 下使用 Helm 部署等其他安装方式可以参考官网 [Quick Start

|

||||

### 关联仓库

|

||||

|

||||

- Higress 控制台:https://github.com/higress-group/higress-console

|

||||

- Higress(独立运行版):https://github.com/higress-group/higress-standalone

|

||||

- Higress(独立运行版):https://github.com/higress-group/higress-standalone

|

||||

|

||||

### 贡献者

|

||||

|

||||

<a href="https://github.com/alibaba/higress/graphs/contributors">

|

||||

<img alt="contributors" src="https://contrib.rocks/image?repo=alibaba/higress"/>

|

||||

</a>

|

||||

|

||||

### Star History

|

||||

|

||||

[](https://star-history.com/#alibaba/higress&Date)

|

||||

|

||||

<p align="right" style="font-size: 14px; color: #555; margin-top: 20px;">

|

||||

<a href="#readme-top" style="text-decoration: none; color: #007bff; font-weight: bold;">

|

||||

↑ 返回顶部 ↑

|

||||

</a>

|

||||

</p>

|

||||

|

||||

29

README_EN.md

@@ -1,3 +1,4 @@

|

||||

<a name="readme-top"></a>

|

||||

<h1 align="center">

|

||||

<img src="https://img.alicdn.com/imgextra/i2/O1CN01NwxLDd20nxfGBjxmZ_!!6000000006895-2-tps-960-290.png" alt="Higress" width="240" height="72.5">

|

||||

<br>

|

||||

@@ -15,7 +16,7 @@

|

||||

|

||||

|

||||

<p>

|

||||

English | <a href="README.md">中文<a/>

|

||||

English | <a href="README.md">中文<a/> | <a href="README_JP.md">日本語<a/>

|

||||

</p>

|

||||

|

||||

Higress is a cloud-native API gateway based on Alibaba's internal gateway practices.

|

||||

@@ -47,7 +48,7 @@ Powered by [Istio](https://github.com/istio/istio) and [Envoy](https://github.co

|

||||

|

||||

Higress can function as a microservice gateway that discovers microservices from various service registries, such as Nacos, ZooKeeper, Consul, and Eureka.

|

||||

|

||||

It deeply integrates of [Dubbo](https://github.com/apache/dubbo), [Nacos](https://github.com/alibaba/nacos), [Sentinel](https://github.com/alibaba/Sentinel) and other microservice technology stacks.

|

||||

It deeply integrates with [Dubbo](https://github.com/apache/dubbo), [Nacos](https://github.com/alibaba/nacos), [Sentinel](https://github.com/alibaba/Sentinel) and other microservice technology stacks.

|

||||

|

||||

- **Security gateway**:

|

||||

|

||||

@@ -57,7 +58,7 @@ Powered by [Istio](https://github.com/istio/istio) and [Envoy](https://github.co

|

||||

|

||||

- **Easy to use**

|

||||

|

||||

Provide one-stop gateway solutions for traffic scheduling, service management, and security protection, support Console, K8s Ingress, and Gateway API configuration methods, and also support HTTP to Dubbo protocol conversion, and easily complete protocol mapping configuration.

|

||||

Provides one-stop gateway solutions for traffic scheduling, service management, and security protection. It supports Console, K8s Ingress, and Gateway API configuration methods, as well as HTTP-to-Dubbo protocol conversion with straightforward protocol mapping configuration.

|

||||

|

||||

- **Easy to expand**

|

||||

|

||||

@@ -73,7 +74,7 @@ Powered by [Istio](https://github.com/istio/istio) and [Envoy](https://github.co

|

||||

|

||||

- **Security**

|

||||

|

||||

Provides JWT, OIDC, custom authentication and authentication, deeply integrates open source web application firewall.

|

||||

Provides JWT, OIDC, and custom authentication and authorization, and deeply integrates an open-source web application firewall.

|

||||

|

||||

## Community

|

||||

|

||||

@@ -81,9 +82,25 @@ Powered by [Istio](https://github.com/istio/istio) and [Envoy](https://github.co

|

||||

|

||||

### Thanks

|

||||

|

||||

Higress would not be possible without the valuable open-source work of projects in the community. We would like to extend a special thank-you to Envoy and Istio.

|

||||

Higress would not be possible without the valuable open-source work of projects in the community. We would like to extend a special thank you to Envoy and Istio.

|

||||

|

||||

### Related Repositories

|

||||

|

||||

- Higress Console: https://github.com/higress-group/higress-console

|

||||

- Higress Standalone: https://github.com/higress-group/higress-standalone

|

||||

- Higress Standalone: https://github.com/higress-group/higress-standalone

|

||||

|

||||

### Contributors

|

||||

|

||||

<a href="https://github.com/alibaba/higress/graphs/contributors">

|

||||

<img alt="contributors" src="https://contrib.rocks/image?repo=alibaba/higress"/>

|

||||

</a>

|

||||

|

||||

### Star History

|

||||

|

||||

[](https://star-history.com/#alibaba/higress&Date)

|

||||

|

||||

<p align="right" style="font-size: 14px; color: #555; margin-top: 20px;">

|

||||

<a href="#readme-top" style="text-decoration: none; color: #007bff; font-weight: bold;">

|

||||

↑ Back to Top ↑

|

||||

</a>

|

||||

</p>

|

||||

206

README_JP.md

Normal file

@@ -0,0 +1,206 @@

|

||||

<a name="readme-top"></a>
<h1 align="center">
    <img src="https://img.alicdn.com/imgextra/i2/O1CN01NwxLDd20nxfGBjxmZ_!!6000000006895-2-tps-960-290.png" alt="Higress" width="240" height="72.5">
  <br>
  AI Gateway
</h1>
<h4 align="center"> AI Native API Gateway </h4>

[Build Status](https://github.com/alibaba/higress/actions)
[License](https://www.apache.org/licenses/LICENSE-2.0.html)

[**Official Site**](https://higress.cn/) |
[**Documentation**](https://higress.cn/docs/latest/overview/what-is-higress/) |
[**Blog**](https://higress.cn/blog/) |
[**E-book**](https://higress.cn/docs/ebook/wasm14/) |
[**Developer Guide**](https://higress.cn/docs/latest/dev/architecture/) |
[**AI Plugins**](https://higress.cn/plugin/)


<p>
   <a href="README_EN.md">English</a> | <a href="README.md">中文</a> | 日本語
</p>

|

||||

|

||||

|

||||

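Higress is a cloud-native API gateway built on Istio and Envoy. It can be extended with Wasm plugins written in Go, Rust, JS, and other languages, and it ships with dozens of ready-to-use general-purpose plugins and an out-of-the-box console (try the [demo](http://demo.higress.io/)).

Higress was born inside Alibaba to solve two problems: Tengine reloads disrupting workloads that rely on long-lived connections, and insufficient load-balancing capabilities for gRPC/Dubbo.

Alibaba Cloud has built its cloud-native API gateway product on Higress, providing many enterprise customers with a 99.99% gateway high-availability guarantee.

Building on its AI gateway capabilities, Higress supports AI businesses such as the Tongyi Qianwen app, the Bailian large-model API, and the PAI machine learning platform. It also serves leading AIGC companies in China (e.g., ZeroOne) and AI products (e.g., FastGPT).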

|

||||

|

||||

|

||||

|

||||

|

||||

## Table of Contents

- [**Quick Start**](#クイックスタート)
- [**Feature Showcase**](#機能紹介)
- [**Use Cases**](#使用シナリオ)
- [**Core Advantages**](#主な利点)
- [**Community**](#コミュニティ)

|

||||

|

||||

<a name="クイックスタート"></a>
## Quick Start

Higress can be started with Docker alone, which makes it convenient for individual developers to set up a local environment for learning or to build a simple site.

```bash
# Create a working directory
mkdir higress; cd higress
# Start Higress; configuration files are written to the working directory
docker run -d --rm --name higress-ai -v ${PWD}:/data \
        -p 8001:8001 -p 8080:8080 -p 8443:8443 \
        higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/all-in-one:latest
```

|

||||

|

||||

The listening ports are as follows:

- Port 8001: entry point for the Higress UI console
- Port 8080: gateway entry point for HTTP
- Port 8443: gateway entry point for HTTPS
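
The same container can also be described as a Compose file for repeatable local setups. This is a minimal sketch rather than an official Higress artifact: the file name `docker-compose.yaml` is an assumption, the service name simply mirrors the container name used in the `docker run` command, and Compose has no direct equivalent of `--rm`, so the stopped container is kept until it is removed explicitly.

```yaml
# docker-compose.yaml (hypothetical file name; not part of the Higress repository)
services:
  higress-ai:
    image: higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/all-in-one:latest
    container_name: higress-ai
    volumes:
      - ./:/data          # configuration files are written to the current directory
    ports:
      - "8001:8001"       # Higress UI console
      - "8080:8080"       # gateway HTTP entry point
      - "8443:8443"       # gateway HTTPS entry point
```

Running `docker compose up -d` from the working directory should then start the gateway with the same port mapping.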

|

||||

|

||||

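**All Higress Docker images are hosted in a dedicated registry, so they are unaffected by Docker Hub being inaccessible from within China.**

For other installation methods, such as Helm deployment on K8s, see the [Quick Start documentation](https://higress.cn/docs/latest/user/quickstart/) on the official site.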

|

||||

|

||||

|

||||

<a name="使用シナリオ"></a>
## Use Cases

- **AI Gateway**:

  Higress can connect to all LLM providers, inside and outside China, through a unified protocol, and offers rich AI observability, multi-model load balancing and fallback, AI token rate limiting, AI caching, and more.

- **Kubernetes Ingress Gateway**:

  Higress can serve as the Ingress entry gateway for a K8s cluster and is compatible with many K8s Nginx Ingress annotations, allowing a smooth migration from K8s Nginx Ingress to Higress.

  It supports the [Gateway API](https://gateway-api.sigs.k8s.io/) standard, so users can migrate smoothly from the Ingress API to the Gateway API.

  Compared with ingress-nginx, resource consumption drops significantly and route changes take effect ten times faster.

- **Microservice Gateway**:

  Higress can act as a microservice gateway, discovering services from registries such as Nacos, ZooKeeper, Consul, and Eureka and configuring routes to them.

  It also integrates deeply with microservice stacks such as [Dubbo](https://github.com/apache/dubbo), [Nacos](https://github.com/alibaba/nacos), and [Sentinel](https://github.com/alibaba/Sentinel). Thanks to the performance of the Envoy C++ gateway core, it can significantly reduce resource usage and cost compared with traditional Java-based microservice gateways.

- **Security Gateway**:

  Higress can act as a security gateway, providing WAF capabilities and supporting authentication strategies such as key-auth, hmac-auth, jwt-auth, basic-auth, and oidc.
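
For the Kubernetes Ingress gateway scenario, a standard `networking.k8s.io/v1` Ingress that selects the `higress` ingress class (the chart's default class) is enough to route traffic through the gateway. The following is a minimal sketch; the resource name, host, and backend Service are hypothetical placeholders, not values taken from the Higress repository.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: demo-ingress            # hypothetical name
  namespace: default
spec:
  ingressClassName: higress     # default ingress class of the Higress Helm chart
  rules:
    - host: demo.example.com    # hypothetical host
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: demo-service   # hypothetical backend Service
                port:
                  number: 80
```

An existing Nginx Ingress manifest would typically need only its ingress class changed to be picked up by Higress, provided the annotations it relies on are among those Higress supports.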

|

||||

|

||||

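<a name="主な利点"></a>
## Core Advantages

- **Production Grade**

  Derived from an internal Alibaba product that has been validated in production for more than two years, Higress supports large-scale scenarios handling hundreds of thousands of requests per second.

  It completely eliminates the traffic jitter caused by Nginx reloads: configuration changes take effect within milliseconds and are transparent to the business. This is especially well suited to long-lived-connection scenarios such as AI workloads.

- **Streaming Processing**

  Higress supports fully streaming processing of request and response bodies, and Wasm plugins can implement custom handling of messages in streaming protocols such as SSE (Server-Sent Events).

  In high-bandwidth scenarios such as AI workloads, this can significantly reduce memory usage.

- **Extensibility**

  A rich official plugin library covers common capabilities such as AI, traffic management, and security protection, meeting more than 90% of business scenario requirements.

  Extension is centered on Wasm plugins: sandbox isolation ensures memory safety, multiple programming languages are supported, plugin versions can be upgraded independently, and gateway logic can be hot-updated without affecting traffic.

- **Secure and Easy to Use**

  Based on the Ingress API and Gateway API standards, Higress provides an out-of-the-box UI console, along with ready-to-use WAF protection and IP/Cookie CC protection plugins.

  It supports automatic certificate issuance and renewal through Let's Encrypt, and it can be deployed without K8s: a single Docker command starts it, which is convenient for individual developers.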

|

||||

|

||||

|

||||

<a name="機能紹介"></a>
## Feature Showcase

### AI Gateway Demo

[Migrate from OpenAI to other large models in 30 seconds](https://www.bilibili.com/video/BV1dT421a7w7/?spm_id_from=333.788.recommend_more_video.14)

### Higress UI Console

- **Rich Observability**

  Out-of-the-box observability is provided. You can use the built-in Grafana and Prometheus or connect your own.

- **Plugin Extension Mechanism**

  A variety of official plugins are available, and users can [develop their own plugins](./plugins/wasm-go), build them as Docker/OCI images, and configure them in the console. Plugin logic can be changed in real time and hot-updated without affecting traffic.

- **Multiple Service Discovery Options**

  K8s Service discovery is provided by default. Through configuration, Higress can also discover services from registries such as Nacos and ZooKeeper, or from static IPs or DNS.

- **Domains and Certificates**

  You can create and manage TLS certificates and configure HTTP/HTTPS behavior for domains. Domain policies let you apply plugins to specific domains.

- **Rich Routing Capabilities**

  Services found through the discovery mechanisms above appear in the service list. When creating a route, you select a domain, define the route matching rules, and choose the target service. Route policies let you apply plugins to specific routes.

|

||||

|

||||

|

||||

|

||||

|

||||

<a name="コミュニティ"></a>
## Community

### Acknowledgments

Higress would not be possible without the open-source work of Envoy and Istio. We offer these projects our sincerest respect.

### Discussion Groups

### Technical Sharing

WeChat official account:

### Related Repositories

- Higress Console: https://github.com/higress-group/higress-console
- Higress Standalone: https://github.com/higress-group/higress-standalone

### Contributors

<a href="https://github.com/alibaba/higress/graphs/contributors">
  <img alt="contributors" src="https://contrib.rocks/image?repo=alibaba/higress"/>
</a>

### Star History

[Star History Chart](https://star-history.com/#alibaba/higress&Date)

<p align="right" style="font-size: 14px; color: #555; margin-top: 20px;">
    <a href="#readme-top" style="text-decoration: none; color: #007bff; font-weight: bold;">
        ↑ Back to Top ↑
    </a>
</p>

|

||||

@@ -4,6 +4,7 @@

|

||||

|

||||

| Version | Supported          |
| ------- | ------------------ |
| 2.x.x   | :white_check_mark: |
| 1.x.x   | :white_check_mark: |
| < 1.0.0 | :x:                |

|

||||

|

||||

|

||||

Submodule envoy/envoy updated: b3541845c1...e9302f5574

@@ -1,5 +1,5 @@

|

||||

apiVersion: v2

|

||||

appVersion: 2.0.1

|

||||

appVersion: 2.0.3

|

||||

description: Helm chart for deploying higress gateways

|

||||

icon: https://higress.io/img/higress_logo_small.png

|

||||

home: http://higress.io/

|

||||

@@ -10,4 +10,4 @@ name: higress-core

|

||||

sources:

|

||||

- http://github.com/alibaba/higress

|

||||

type: application

|

||||

version: 2.0.1

|

||||

version: 2.0.3

|

||||

|

||||

@@ -1,3 +1,4 @@

|

||||

---

|

||||

apiVersion: apiextensions.k8s.io/v1

|

||||

kind: CustomResourceDefinition

|

||||

metadata:

|

||||

|

||||

@@ -116,6 +116,12 @@ data:

|

||||

{{- $existingData = index $existingConfig.data "higress" | default "{}" | fromYaml }}

|

||||

{{- end }}

|

||||

{{- $newData := dict }}

|

||||

{{- if hasKey .Values "upstream" }}

|

||||

{{- $_ := set $newData "upstream" .Values.upstream }}

|

||||

{{- end }}

|

||||

{{- if hasKey .Values "downstream" }}

|

||||

{{- $_ := set $newData "downstream" .Values.downstream }}

|

||||

{{- end }}

|

||||

{{- if and (hasKey .Values "tracing") .Values.tracing.enable }}

|

||||

{{- $_ := set $newData "tracing" .Values.tracing }}

|

||||

{{- end }}

|

||||

|

||||

@@ -129,3 +129,10 @@ rules:

|

||||

- apiGroups: ["networking.internal.knative.dev"]

|

||||

resources: ["ingresses/status"]

|

||||

verbs: ["get","patch","update"]

|

||||

# gateway api need

|

||||

- apiGroups: ["apps"]

|

||||

verbs: [ "get", "watch", "list", "update", "patch", "create", "delete" ]

|

||||

resources: [ "deployments" ]

|

||||

- apiGroups: [""]

|

||||

verbs: [ "get", "watch", "list", "update", "patch", "create", "delete" ]

|

||||

resources: [ "serviceaccounts"]

|

||||

|

||||

@@ -69,6 +69,12 @@ spec:

|

||||

fieldPath: spec.serviceAccountName

|

||||

- name: DOMAIN_SUFFIX

|

||||

value: {{ .Values.global.proxy.clusterDomain }}

|

||||

- name: GATEWAY_NAME

|

||||

value: {{ include "gateway.name" . }}

|

||||

- name: PILOT_ENABLE_GATEWAY_API

|

||||

value: "{{ .Values.global.enableGatewayAPI }}"

|

||||

- name: PILOT_ENABLE_ALPHA_GATEWAY_API

|

||||

value: "{{ .Values.global.enableGatewayAPI }}"

|

||||

{{- if .Values.controller.env }}

|

||||

{{- range $key, $val := .Values.controller.env }}

|

||||

- name: {{ $key }}

|

||||

@@ -215,14 +221,14 @@ spec:

|

||||

- name: HIGRESS_ENABLE_ISTIO_API

|

||||

value: "true"

|

||||

{{- end }}

|

||||

{{- if .Values.global.enableGatewayAPI }}

|

||||

- name: PILOT_ENABLE_GATEWAY_API

|

||||

value: "true"

|

||||

value: "false"

|

||||

- name: PILOT_ENABLE_ALPHA_GATEWAY_API

|

||||

value: "false"

|

||||

- name: PILOT_ENABLE_GATEWAY_API_STATUS

|

||||

value: "true"

|

||||

value: "false"

|

||||

- name: PILOT_ENABLE_GATEWAY_API_DEPLOYMENT_CONTROLLER

|

||||

value: "false"

|

||||

{{- end }}

|

||||

{{- if not .Values.global.enableHigressIstio }}

|

||||

- name: CUSTOM_CA_CERT_NAME

|

||||

value: "higress-ca-root-cert"

|

||||

|

||||

22

helm/core/templates/fallback-envoyfilter.yaml

Normal file

@@ -0,0 +1,22 @@

|

||||

apiVersion: networking.istio.io/v1alpha3

|

||||

kind: EnvoyFilter

|

||||

metadata:

|

||||

name: {{ include "gateway.name" . }}-global-custom-response

|

||||

namespace: {{ .Release.Namespace }}

|

||||

labels:

|

||||

{{- include "gateway.labels" . | nindent 4}}

|

||||

spec:

|

||||

configPatches:

|

||||

- applyTo: HTTP_FILTER

|

||||

match:

|

||||

context: GATEWAY

|

||||

listener:

|

||||

filterChain:

|

||||

filter:

|

||||

name: envoy.filters.network.http_connection_manager

|

||||

patch:

|

||||

operation: INSERT_FIRST

|

||||

value:

|

||||

name: envoy.filters.http.custom_response

|

||||

typed_config:

|

||||

"@type": type.googleapis.com/envoy.extensions.filters.http.custom_response.v3.CustomResponse

|

||||

@@ -1,6 +1,8 @@

|

||||

{{- if .Values.global.ingressClass }}

|

||||

apiVersion: networking.k8s.io/v1

|

||||

kind: IngressClass

|

||||

metadata:

|

||||

name: {{ .Values.global.ingressClass }}

|

||||

spec:

|

||||

controller: higress.io/higress-controller

|

||||

controller: higress.io/higress-controller

|

||||

{{- end }}
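
Because the `IngressClass` resource in this template is rendered only when `global.ingressClass` is non-empty, the class can be changed or left out through a values override. The following is a minimal sketch that uses only keys present in this chart's `values.yaml`; the file name `my-values.yaml` is hypothetical.

```yaml
# my-values.yaml (hypothetical file name)
global:
  # Watch only Ingress resources of this class; an IngressClass with this
  # name is rendered. An empty string makes the controller watch all Ingress
  # resources and skips rendering the IngressClass.
  ingressClass: "higress"
  # Confine the controller to a single namespace; empty means all namespaces.
  watchNamespace: ""
  # Also monitor Gateway API resources.
  enableGatewayAPI: true
```

Such a file would be passed to Helm with `-f my-values.yaml` at install or upgrade time.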

|

||||

|

||||

@@ -10,7 +10,7 @@ global:

|

||||

onDemandRDS: false

|

||||

hostRDSMergeSubset: false

|

||||

onlyPushRouteCluster: true

|

||||

# IngressClass filters which ingress resources the higress controller watches.

|

||||

# -- IngressClass filters which ingress resources the higress controller watches.

|

||||

# The default ingress class is higress.

|

||||

# There are some special cases for special ingress class.

|

||||

# 1. When the ingress class is set as nginx, the higress controller will watch ingress

|

||||

@@ -18,28 +18,40 @@ global:

|

||||

# 2. When the ingress class is set empty, the higress controller will watch all ingress

|

||||

# resources in the k8s cluster.

|

||||

ingressClass: "higress"

|

||||

# -- If not empty, Higress Controller will only watch resources in the specified namespace.

|

||||

# When isolating different business systems using K8s namespace,

|

||||

# if each namespace requires a standalone gateway instance,

|

||||

# this parameter can be used to confine the Ingress watching of Higress within the given namespace.

|

||||

watchNamespace: ""

|

||||

# -- Whether to disable HTTP/2 in ALPN

|

||||

disableAlpnH2: false

|

||||

# -- If true, Higress Controller will update the status field of Ingress resources.

|

||||

# When migrating from Nginx Ingress, in order to avoid status field of Ingress objects being overwritten,

|

||||

# this parameter needs to be set to false,

|

||||

# so Higress won't write the entry IP to the status field of the corresponding Ingress object.

|

||||

enableStatus: true

|

||||

# whether to use autoscaling/v2 template for HPA settings

|

||||

# -- whether to use autoscaling/v2 template for HPA settings

|

||||

# for internal usage only, not to be configured by users.

|

||||

autoscalingv2API: true

|

||||

local: false # When deploying to a local cluster (e.g.: kind cluster), set this to true.

|

||||

# -- When deploying to a local cluster (e.g.: kind cluster), set this to true.

|

||||

local: false

|

||||

kind: false # Deprecated. Please use "global.local" instead. Will be removed later.

|

||||

enableIstioAPI: false

|

||||

# -- If true, Higress Controller will monitor istio resources as well

|

||||

enableIstioAPI: true

|

||||

# -- If true, Higress Controller will monitor Gateway API resources as well

|

||||

enableGatewayAPI: false

|

||||

# Deprecated

|

||||

enableHigressIstio: false

|

||||

# Used to locate istiod.

|

||||

# -- Used to locate istiod.

|

||||

istioNamespace: istio-system

|

||||

# enable pod disruption budget for the control plane, which is used to

|

||||

# -- enable pod disruption budget for the control plane, which is used to

|

||||

# ensure Istio control plane components are gradually upgraded or recovered.

|

||||

defaultPodDisruptionBudget:

|

||||

enabled: false

|

||||

# The values aren't mutable due to a current PodDisruptionBudget limitation

|

||||

# minAvailable: 1

|

||||

|

||||

# A minimal set of requested resources to applied to all deployments so that

|

||||

# -- A minimal set of requested resources to applied to all deployments so that

|

||||

# Horizontal Pod Autoscaler will be able to function (if set).

|

||||

# Each component can overwrite these default values by adding its own resources

|

||||

# block in the relevant section below and setting the desired resources values.

|

||||

@@ -51,16 +63,16 @@ global:

|

||||

# cpu: 100m

|

||||

# memory: 128Mi

|

||||

|

||||

# Default hub for Istio images.

|

||||

# -- Default hub for Istio images.

|

||||

# Releases are published to docker hub under 'istio' project.

|

||||

# Dev builds from prow are on gcr.io

|

||||

hub: higress-registry.cn-hangzhou.cr.aliyuncs.com/higress

|

||||

|

||||

# Specify image pull policy if default behavior isn't desired.

|

||||

# -- Specify image pull policy if default behavior isn't desired.

|

||||

# Default behavior: latest images will be Always else IfNotPresent.

|

||||

imagePullPolicy: ""

|

||||

|

||||

# ImagePullSecrets for all ServiceAccount, list of secrets in the same namespace

|

||||

# -- ImagePullSecrets for all ServiceAccount, list of secrets in the same namespace

|

||||

# to use for pulling any images in pods that reference this ServiceAccount.

|

||||

# For components that don't use ServiceAccounts (i.e. grafana, servicegraph, tracing)

|

||||

# ImagePullSecrets will be added to the corresponding Deployment(StatefulSet) objects.

|

||||

@@ -68,14 +80,14 @@ global:

|

||||

imagePullSecrets: []

|

||||

# - private-registry-key

|

||||

|

||||

# Enabled by default in master for maximising testing.

|

||||

# -- Enabled by default in master for maximising testing.

|

||||

istiod:

|

||||

enableAnalysis: false

|

||||

|

||||

# To output all istio components logs in json format by adding --log_as_json argument to each container argument

|

||||

# -- To output all istio components logs in json format by adding --log_as_json argument to each container argument

|

||||

logAsJson: false

|

||||

|

||||

# Comma-separated minimum per-scope logging level of messages to output, in the form of <scope>:<level>,<scope>:<level>

|

||||

# -- Comma-separated minimum per-scope logging level of messages to output, in the form of <scope>:<level>,<scope>:<level>

|

||||

# The control plane has different scopes depending on component, but can configure default log level across all components

|

||||

# If empty, default scope and level will be used as configured in code

|

||||

logging:

|

||||

@@ -83,11 +95,11 @@ global:

|

||||

|

||||

omitSidecarInjectorConfigMap: false

|

||||

|

||||

# Whether to restrict the applications namespace the controller manages;

|

||||

# -- Whether to restrict the applications namespace the controller manages;

|

||||

# If not set, controller watches all namespaces

|

||||

oneNamespace: false

|

||||

|

||||

# Configure whether Operator manages webhook configurations. The current behavior

|

||||

# -- Configure whether Operator manages webhook configurations. The current behavior

|

||||

# of Istiod is to manage its own webhook configurations.

|

||||

# When this option is set as true, Istio Operator, instead of webhooks, manages the

|

||||

# webhook configurations. When this option is set as false, webhooks manage their

|

||||

@@ -106,7 +118,7 @@ global:

|

||||

#- global

|

||||

#- "{{ valueOrDefault .DeploymentMeta.Namespace \"default\" }}.global"

|

||||

|

||||

# Kubernetes >=v1.11.0 will create two PriorityClass, including system-cluster-critical and

|

||||

# -- Kubernetes >=v1.11.0 will create two PriorityClass, including system-cluster-critical and

|

||||

# system-node-critical, it is better to configure this in order to make sure your Istio pods

|

||||

# will not be killed because of low priority class.

|

||||

# Refer to https://kubernetes.io/docs/concepts/configuration/pod-priority-preemption/#priorityclass

|

||||

@@ -116,18 +128,18 @@ global:

|

||||

proxy:

|

||||

image: proxyv2

|

||||

|

||||

# This controls the 'policy' in the sidecar injector.

|

||||

# -- This controls the 'policy' in the sidecar injector.

|

||||

autoInject: enabled

|

||||

|

||||

# CAUTION: It is important to ensure that all Istio helm charts specify the same clusterDomain value

|

||||

# -- CAUTION: It is important to ensure that all Istio helm charts specify the same clusterDomain value

|

||||

# cluster domain. Default value is "cluster.local".

|

||||

clusterDomain: "cluster.local"

|

||||

|

||||

# Per Component log level for proxy, applies to gateways and sidecars. If a component level is

|

||||

# -- Per Component log level for proxy, applies to gateways and sidecars. If a component level is

|

||||

# not set, then the global "logLevel" will be used.

|

||||

componentLogLevel: "misc:error"

|

||||

|

||||

# If set, newly injected sidecars will have core dumps enabled.

|

||||

# -- If set, newly injected sidecars will have core dumps enabled.

|

||||

enableCoreDump: false

|

||||

|

||||

# istio ingress capture allowlist

|

||||

@@ -136,7 +148,7 @@ global:

|

||||

excludeInboundPorts: ""

|

||||

includeInboundPorts: "*"

|

||||

|

||||

# istio egress capture allowlist

|

||||

# -- istio egress capture allowlist

|

||||

# https://istio.io/docs/tasks/traffic-management/egress.html#calling-external-services-directly

|

||||

# example: includeIPRanges: "172.30.0.0/16,172.20.0.0/16"

|

||||

# would only capture egress traffic on those two IP Ranges, all other outbound traffic would

|

||||

@@ -146,29 +158,29 @@ global:

|

||||

includeOutboundPorts: ""

|

||||

excludeOutboundPorts: ""

|

||||

|

||||

# Log level for proxy, applies to gateways and sidecars.

|

||||

# -- Log level for proxy, applies to gateways and sidecars.

|

||||

# Expected values are: trace|debug|info|warning|error|critical|off

|

||||

logLevel: warning

|

||||

|

||||

#If set to true, istio-proxy container will have privileged securityContext

|

||||

# -- If set to true, istio-proxy container will have privileged securityContext

|

||||

privileged: false

|

||||

|

||||

# The number of successive failed probes before indicating readiness failure.

|

||||

# -- The number of successive failed probes before indicating readiness failure.

|

||||

readinessFailureThreshold: 30

|

||||

|

||||

# The number of successive successed probes before indicating readiness success.

|

||||

# -- The number of successive successed probes before indicating readiness success.

|

||||

readinessSuccessThreshold: 30

|

||||

|

||||

# The initial delay for readiness probes in seconds.

|

||||

# -- The initial delay for readiness probes in seconds.

|

||||

readinessInitialDelaySeconds: 1

|

||||

|

||||

# The period between readiness probes.

|

||||

# -- The period between readiness probes.

|

||||

readinessPeriodSeconds: 2

|

||||

|

||||

# The readiness timeout seconds

|

||||

# -- The readiness timeout seconds

|

||||

readinessTimeoutSeconds: 3

|

||||

|

||||

# Resources for the sidecar.

|

||||

# -- Resources for the sidecar.

|

||||

resources:

|

||||

requests:

|

||||

cpu: 100m

|

||||

@@ -177,18 +189,18 @@ global:

|

||||

cpu: 2000m

|

||||

memory: 1024Mi

|

||||

|

||||

# Default port for Pilot agent health checks. A value of 0 will disable health checking.

|

||||

# -- Default port for Pilot agent health checks. A value of 0 will disable health checking.

|

||||

statusPort: 15020

|

||||

|

||||

# Specify which tracer to use. One of: lightstep, datadog, stackdriver.

|

||||

# -- Specify which tracer to use. One of: lightstep, datadog, stackdriver.

|

||||

# If using stackdriver tracer outside GCP, set env GOOGLE_APPLICATION_CREDENTIALS to the GCP credential file.

|

||||

tracer: ""

|

||||

|

||||

# Controls if sidecar is injected at the front of the container list and blocks the start of the other containers until the proxy is ready

|

||||

# -- Controls if sidecar is injected at the front of the container list and blocks the start of the other containers until the proxy is ready

|

||||

holdApplicationUntilProxyStarts: false

|

||||

|

||||

proxy_init:

|

||||

# Base name for the proxy_init container, used to configure iptables.

|

||||

# -- Base name for the proxy_init container, used to configure iptables.

|

||||

image: proxyv2

|

||||

resources:

|

||||

limits:

|

||||

@@ -198,7 +210,7 @@ global:

|

||||

cpu: 10m

|

||||

memory: 10Mi

|

||||

|

||||

# configure remote pilot and istiod service and endpoint

|

||||

# -- configure remote pilot and istiod service and endpoint

|

||||

remotePilotAddress: ""

|

||||

|

||||

##############################################################################################

|

||||

@@ -206,20 +218,20 @@ global:

|

||||

# make sure they are consistent across your Istio helm charts #

|

||||

##############################################################################################

|

||||

|

||||

# The customized CA address to retrieve certificates for the pods in the cluster.

|

||||

# -- The customized CA address to retrieve certificates for the pods in the cluster.

|

||||

# CSR clients such as the Istio Agent and ingress gateways can use this to specify the CA endpoint.

|

||||

# If not set explicitly, default to the Istio discovery address.

|

||||

caAddress: ""

|

||||

|

||||

# Configure a remote cluster data plane controlled by an external istiod.

|

||||

# -- Configure a remote cluster data plane controlled by an external istiod.

|

||||

# When set to true, istiod is not deployed locally and only a subset of the other

|

||||

# discovery charts are enabled.

|

||||

externalIstiod: false

|

||||

|

||||

# Configure a remote cluster as the config cluster for an external istiod.

|

||||

# -- Configure a remote cluster as the config cluster for an external istiod.

|

||||

configCluster: false

|

||||

|

||||

# Configure the policy for validating JWT.

|

||||

# -- Configure the policy for validating JWT.

|

||||

# Currently, two options are supported: "third-party-jwt" and "first-party-jwt".

|

||||

jwtPolicy: "third-party-jwt"

|

||||

|

||||

@@ -241,7 +253,7 @@ global:

|

||||

# of migration TBD, and it may be a disruptive operation to change the Mesh

|

||||

# ID post-install.

|

||||

#

|

||||

# If the mesh admin does not specify a value, Istio will use the value of the

|

||||

# -- If the mesh admin does not specify a value, Istio will use the value of the

|

||||

# mesh's Trust Domain. The best practice is to select a proper Trust Domain

|

||||

# value.

|

||||

meshID: ""

|

||||

@@ -275,68 +287,69 @@ global:

|

||||

#

|

||||

meshNetworks: {}

|

||||

|

||||

# Use the user-specified, secret volume mounted key and certs for Pilot and workloads.

|

||||

# -- Use the user-specified, secret volume mounted key and certs for Pilot and workloads.

|

||||

mountMtlsCerts: false

|

||||

|

||||

multiCluster:

|

||||

# Set to true to connect two kubernetes clusters via their respective

|

||||

# -- Set to true to connect two kubernetes clusters via their respective

|

||||

# ingressgateway services when pods in each cluster cannot directly

|

||||

# talk to one another. All clusters should be using Istio mTLS and must

|

||||

# have a shared root CA for this model to work.

|

||||

enabled: true

|

||||

# Should be set to the name of the cluster this installation will run in. This is required for sidecar injection

|

||||

# -- Should be set to the name of the cluster this installation will run in. This is required for sidecar injection

|

||||

# to properly label proxies

|

||||

clusterName: ""

|

||||

|

||||

# Network defines the network this cluster belong to. This name

|

||||

# -- Network defines the network this cluster belong to. This name

|

||||

# corresponds to the networks in the map of mesh networks.

|

||||

network: ""

|

||||

|

||||

# Configure the certificate provider for control plane communication.

|

||||

# -- Configure the certificate provider for control plane communication.

|

||||

# Currently, two providers are supported: "kubernetes" and "istiod".

|

||||

# As some platforms may not have kubernetes signing APIs,

|

||||

# Istiod is the default

|

||||

pilotCertProvider: istiod

|

||||

|

||||

sds:

|

||||