mirror of

https://github.com/alibaba/higress.git

synced 2026-05-09 13:27:27 +08:00

AI Agent plugin adds JSON formatting output feature (#1374)


@@ -5,7 +5,7 @@ description: AI Agent插件配置参考

|

||||

---

|

||||

|

||||

## 功能说明

|

||||

一个可定制化的 API AI Agent,支持配置 http method 类型为 GET 与 POST 的 API,支持多轮对话,支持流式与非流式模式。

|

||||

一个可定制化的 API AI Agent,支持配置 http method 类型为 GET 与 POST 的 API,支持多轮对话,支持流式与非流式模式,支持将结果格式化为自定义的 json。

|

||||

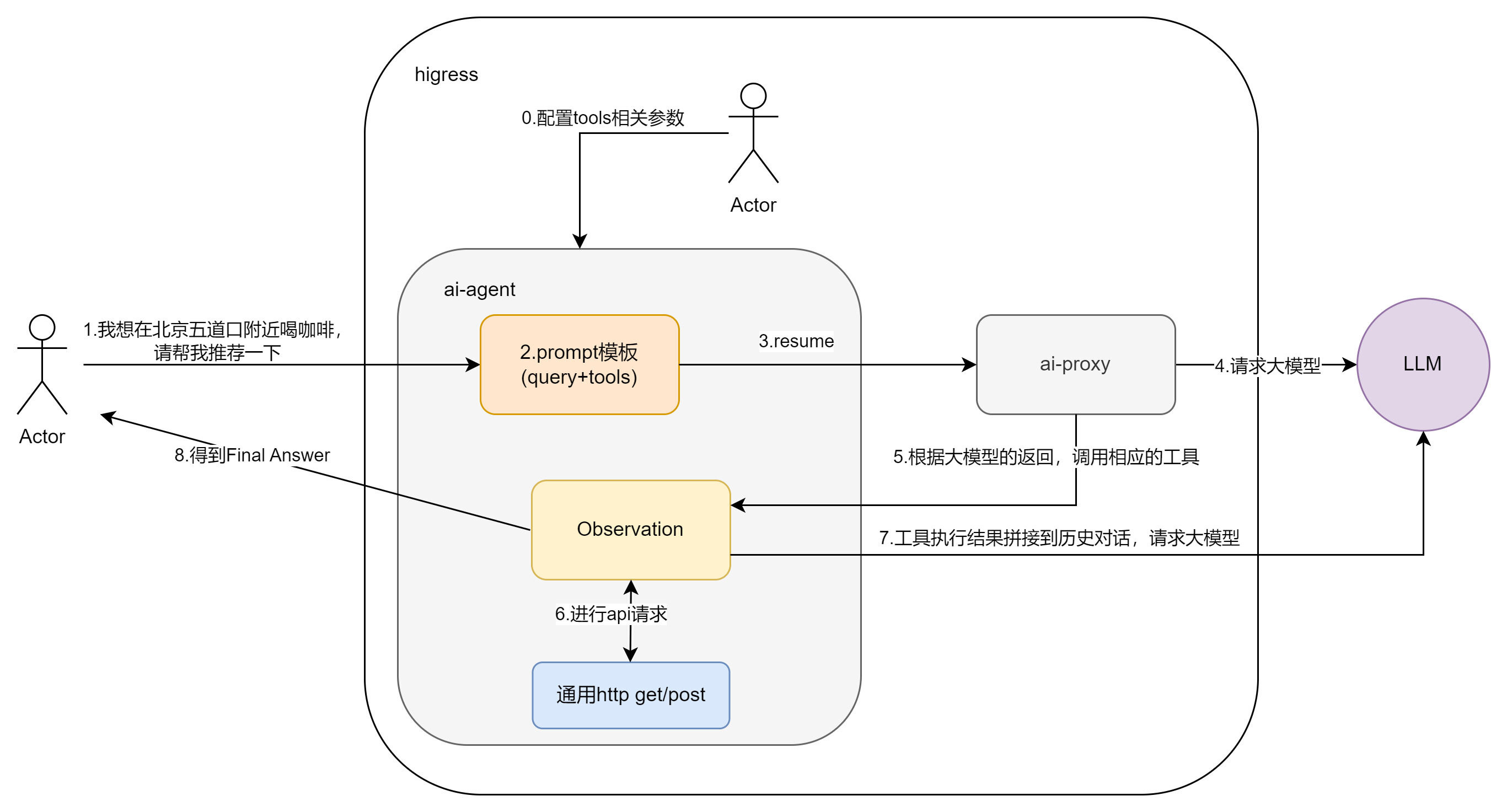

agent流程图如下:

|

||||

|

||||

|

||||

@@ -21,6 +21,7 @@ agent流程图如下:

|

||||

| `llm` | object | 必填 | - | 配置 AI 服务提供商的信息 |

|

||||

| `apis` | object | 必填 | - | 配置外部 API 服务提供商的信息 |

|

||||

| `promptTemplate` | object | 非必填 | - | 配置 Agent ReAct 模板的信息 |

|

||||

| `jsonResp` | object | 非必填 | - | 配置 json 格式化的相关信息 |

|

||||

|

||||

`llm`的配置字段说明如下:

|

||||

|

||||

@@ -78,7 +79,14 @@ agent流程图如下:

|

||||

| `observation` | string | 非必填 | - | Agent ReAct 模板的 observation 部分 |

|

||||

| `thought2` | string | 非必填 | - | Agent ReAct 模板的 thought2 部分 |

|

||||

|

||||

## 用法示例

|

||||

`jsonResp`的配置字段说明如下:

|

||||

|

||||

| 名称 | 数据类型 | 填写要求 | 默认值 | 描述 |

|

||||

|--------------------|-----------|---------|--------|-----------------------------------|

|

||||

| `enable` | bool | 非必填 | false | 是否开启 json 格式化。 |

|

||||

| `jsonSchema` | string | 非必填 | - | 自定义 json schema |

|

||||

|

||||

## 用法示例-不开启 json 格式化

|

||||

|

||||

**配置信息**

|

||||

|

||||

@@ -293,7 +301,7 @@ deepl provides a tool for translating a given sentence, with multi-language support.
 **Request Example**

 ```shell
-curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
+curl 'http://<replace with gateway address>/api/openai/v1/chat/completions' \
 -H 'Accept: application/json, text/event-stream' \
 -H 'Content-Type: application/json' \
 --data-raw '{"model":"qwen","frequency_penalty":0,"max_tokens":800,"stream":false,"messages":[{"role":"user","content":"I want to have coffee near Xinsheng Building in Jinan. Please recommend a few places."}],"presence_penalty":0,"temperature":0,"top_p":0}'
@@ -308,7 +316,7 @@ curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
 **Request Example**

 ```shell
-curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
+curl 'http://<replace with gateway address>/api/openai/v1/chat/completions' \
 -H 'Accept: application/json, text/event-stream' \
 -H 'Content-Type: application/json' \
 --data-raw '{"model":"qwen","frequency_penalty":0,"max_tokens":800,"stream":false,"messages":[{"role":"user","content":"What is the current weather in Jinan?"}],"presence_penalty":0,"temperature":0,"top_p":0}'
@@ -323,7 +331,7 @@ curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
 **Request Example**

 ```shell
-curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
+curl 'http://<replace with gateway address>/api/openai/v1/chat/completions' \
 -H 'Accept: application/json, text/event-stream' \
 -H 'Content-Type: application/json' \
 --data-raw '{"model":"qwen","frequency_penalty":0,"max_tokens":800,"stream":false,"messages":[{"role": "user","content": "What is the weather like in Jinan?"},{ "role": "assistant","content": "Currently, the weather in Jinan is cloudy with a temperature of 24℃. The data was last updated at 21:50:14 on September 12, 2024."},{"role": "user","content": "What about Beijing?"}],"presence_penalty":0,"temperature":0,"top_p":0}'
@@ -338,7 +346,7 @@ curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
 **Request Example**

 ```shell
-curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
+curl 'http://<replace with gateway address>/api/openai/v1/chat/completions' \
 -H 'Accept: application/json, text/event-stream' \
 -H 'Content-Type: application/json' \
 --data-raw '{"model":"qwen","frequency_penalty":0,"max_tokens":800,"stream":false,"messages":[{"role":"user","content":"What is the current weather in Jinan? Express the temperature in Fahrenheit and answer in Japanese."}],"presence_penalty":0,"temperature":0,"top_p":0}'
@@ -353,7 +361,7 @@ curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
 **Request Example**

 ```shell
-curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
+curl 'http://<replace with gateway address>/api/openai/v1/chat/completions' \
 -H 'Accept: application/json, text/event-stream' \
 -H 'Content-Type: application/json' \
 --data-raw '{"model":"qwen","frequency_penalty":0,"max_tokens":800,"stream":false,"messages":[{"role":"user","content":"Please translate the following sentence into German: Hail Hydra!"}],"presence_penalty":0,"temperature":0,"top_p":0}'

@@ -364,3 +372,71 @@ curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
 ```json
 {"id":"65dcf12c-61ff-9e68-bffa-44fc9e6070d5","choices":[{"index":0,"message":{"role":"assistant","content":" The German translation of \"Hail Hydra!\" is \"Hoch lebe Hydra!\"."},"finish_reason":"stop"}],"created":1724043865,"model":"qwen-max-0403","object":"chat.completion","usage":{"prompt_tokens":908,"completion_tokens":52,"total_tokens":960}}
 ```
+
+## Usage Example - JSON formatting enabled
+
+**Configuration**
+Add the jsonResp configuration on top of the configuration above.
+```yaml
+jsonResp:
+  enable: true
+```
+
+**Request Example**
+
+```shell
+curl 'http://<replace with gateway address>/api/openai/v1/chat/completions' \
+-H 'Accept: application/json, text/event-stream' \
+-H 'Content-Type: application/json' \
+--data-raw '{"model":"qwen","frequency_penalty":0,"max_tokens":800,"stream":false,"messages":[{"role":"user","content":"What is the current weather in Beijing?"}],"presence_penalty":0,"temperature":0,"top_p":0}'
+```
+
+**Response Example**
+
+```json
+{"id":"ebd6ea91-8e38-9e14-9a5b-90178d2edea4","choices":[{"index":0,"message":{"role":"assistant","content": "{\"city\": \"Beijing\", \"weather_condition\": \"cloudy\", \"temperature\": \"19℃\", \"data_update_time\": \"16:37:53 on October 9, 2024\"}"},"finish_reason":"stop"}],"created":1723187991,"model":"qwen-max-0403","object":"chat.completion","usage":{"prompt_tokens":890,"completion_tokens":56,"total_tokens":946}}
+```
+If no custom JSON schema is configured, the LLM generates a JSON format automatically.
+
+**Configuration**
+Add a custom JSON schema configuration.
+```yaml
+jsonResp:
+  enable: true
+  jsonSchema: |
+    title: WeatherSchema
+    type: object
+    properties:
+      location:
+        type: string
+        description: city name.
+      weather:
+        type: string
+        description: weather conditions.
+      temperature:
+        type: string
+        description: temperature.
+      update_time:
+        type: string
+        description: data update time.
+    required:
+    - location
+    - weather
+    - temperature
+    additionalProperties: false
+```
+
+**Request Example**
+
+```shell
+curl 'http://<replace with gateway address>/api/openai/v1/chat/completions' \
+-H 'Accept: application/json, text/event-stream' \
+-H 'Content-Type: application/json' \
+--data-raw '{"model":"qwen","frequency_penalty":0,"max_tokens":800,"stream":false,"messages":[{"role":"user","content":"What is the current weather in Beijing?"}],"presence_penalty":0,"temperature":0,"top_p":0}'
+```
+
+**Response Example**
+
+```json
+{"id":"ebd6ea91-8e38-9e14-9a5b-90178d2edea4","choices":[{"index":0,"message":{"role":"assistant","content": "{\"location\": \"Beijing\", \"weather\": \"cloudy\", \"temperature\": \"19℃\", \"update_time\": \"16:37:53 on October 9, 2024\"}"},"finish_reason":"stop"}],"created":1723187991,"model":"qwen-max-0403","object":"chat.completion","usage":{"prompt_tokens":890,"completion_tokens":56,"total_tokens":946}}
+```

@@ -4,7 +4,7 @@ keywords: [ AI Gateway, AI Agent ]
 description: AI Agent plugin configuration reference
 ---
 ## Functional Description
-A customizable API AI Agent that supports configuring HTTP method types as GET and POST APIs. Supports multiple dialogue rounds, streaming and non-streaming modes.
+A customizable API AI Agent that supports configuring APIs with the GET and POST HTTP methods. Supports multi-turn conversations, streaming and non-streaming modes, and formatting results as custom JSON.
 The agent flow chart is as follows:


@@ -20,6 +20,7 @@ Plugin execution priority: `200`
 | `llm` | object | Required | - | Configuration information for AI service provider |
 | `apis` | object | Required | - | Configuration information for external API service provider |
 | `promptTemplate` | object | Optional | - | Configuration information for Agent ReAct template |
+| `jsonResp` | object | Optional | - | Configuration information for JSON formatting |

 The configuration fields for `llm` are as follows:
 | Name | Data Type | Requirement | Default Value | Description |
@@ -71,7 +72,13 @@ The configuration fields for `chTemplate` and `enTemplate` are as follows:
 | `observation` | string | Optional | - | The observation part of the Agent ReAct template |
 | `thought2` | string | Optional | - | The thought2 part of the Agent ReAct template |

-## Usage Example
+The configuration fields for `jsonResp` are as follows:
+| Name | Data Type | Requirement | Default Value | Description |
+|--------------------|-----------|-------------|---------------|------------------------------------|
+| `enable` | bool | Optional | false | Whether to enable JSON formatting. |
+| `jsonSchema` | string | Optional | - | Custom JSON schema |
+
+## Usage Example - JSON formatting disabled
 **Configuration Information**
 ```yaml
 llm:

@@ -335,3 +342,68 @@ curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
 {"id":"65dcf12c-61ff-9e68-bffa-44fc9e6070d5","choices":[{"index":0,"message":{"role":"assistant","content":" The German translation of \"Hail Hydra!\" is \"Hoch lebe Hydra!\"."},"finish_reason":"stop"}],"created":1724043865,"model":"qwen-max-0403","object":"chat.completion","usage":{"prompt_tokens":908,"completion_tokens":52,"total_tokens":960}}
 ```

+## Usage Example - JSON formatting enabled
+**Configuration Information**
+Add the jsonResp configuration to the configuration above.
+```yaml
+jsonResp:
+  enable: true
+```
+
+**Request Example**
+```shell
+curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
+-H 'Accept: application/json, text/event-stream' \
+-H 'Content-Type: application/json' \
+--data-raw '{"model":"qwen","frequency_penalty":0,"max_tokens":800,"stream":false,"messages":[{"role":"user","content":"What is the current weather in Beijing?"}],"presence_penalty":0,"temperature":0,"top_p":0}'
+```
+
+**Response Example**
+
+```json
+{"id":"ebd6ea91-8e38-9e14-9a5b-90178d2edea4","choices":[{"index":0,"message":{"role":"assistant","content": "{\"city\": \"Beijing\", \"weather_condition\": \"cloudy\", \"temperature\": \"19℃\", \"data_update_time\": \"Oct 9, 2024, at 16:37\"}"},"finish_reason":"stop"}],"created":1723187991,"model":"qwen-max-0403","object":"chat.completion","usage":{"prompt_tokens":890,"completion_tokens":56,"total_tokens":946}}
+```
+If you do not customize the JSON schema, the LLM generates a JSON format automatically.
+
+**Configuration Information**
+Add a custom JSON schema configuration.
+```yaml
+jsonResp:
+  enable: true
+  jsonSchema:
+    title: WeatherSchema
+    type: object
+    properties:
+      location:
+        type: string
+        description: city name.
+      weather:
+        type: string
+        description: weather conditions.
+      temperature:
+        type: string
+        description: temperature.
+      update_time:
+        type: string
+        description: the update time of data.
+    required:
+    - location
+    - weather
+    - temperature
+    additionalProperties: false
+```
+
+**Request Example**
+
+```shell
+curl 'http://<replace with gateway public IP>/api/openai/v1/chat/completions' \
+-H 'Accept: application/json, text/event-stream' \
+-H 'Content-Type: application/json' \
+--data-raw '{"model":"qwen","frequency_penalty":0,"max_tokens":800,"stream":false,"messages":[{"role":"user","content":"What is the current weather in Beijing?"}],"presence_penalty":0,"temperature":0,"top_p":0}'
+```
+
+**Response Example**
+
+```json
+{"id":"ebd6ea91-8e38-9e14-9a5b-90178d2edea4","choices":[{"index":0,"message":{"role":"assistant","content": "{\"location\": \"Beijing\", \"weather\": \"cloudy\", \"temperature\": \"19℃\", \"update_time\": \"Oct 9, 2024, at 16:37\"}"},"finish_reason":"stop"}],"created":1723187991,"model":"qwen-max-0403","object":"chat.completion","usage":{"prompt_tokens":890,"completion_tokens":56,"total_tokens":946}}
+```
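
In the response examples above, the formatted result arrives as a JSON string inside `choices[0].message.content`, so a client has to decode twice. A minimal sketch of that, assuming the `WeatherSchema` fields from the configuration example; the `chatResponse`, `weather`, and `parseWeather` names are hypothetical and not part of the plugin:

```go
package main

import (
	"encoding/json"
	"fmt"
)

// chatResponse models only the response fields this sketch reads.
type chatResponse struct {
	Choices []struct {
		Message struct {
			Role    string `json:"role"`
			Content string `json:"content"`
		} `json:"message"`
	} `json:"choices"`
}

// weather mirrors the WeatherSchema properties from the README example.
type weather struct {
	Location    string `json:"location"`
	Weather     string `json:"weather"`
	Temperature string `json:"temperature"`
	UpdateTime  string `json:"update_time"`
}

// parseWeather decodes the outer response, then the JSON text held in content.
func parseWeather(body []byte) (weather, error) {
	var resp chatResponse
	var w weather
	if err := json.Unmarshal(body, &resp); err != nil {
		return w, fmt.Errorf("decode response: %w", err)
	}
	if len(resp.Choices) == 0 {
		return w, fmt.Errorf("response has no choices")
	}
	if err := json.Unmarshal([]byte(resp.Choices[0].Message.Content), &w); err != nil {
		return w, fmt.Errorf("decode content: %w", err)
	}
	return w, nil
}

func main() {
	body := []byte(`{"choices":[{"index":0,"message":{"role":"assistant","content":"{\"location\": \"Beijing\", \"weather\": \"cloudy\", \"temperature\": \"19℃\"}"},"finish_reason":"stop"}]}`)
	w, err := parseWeather(body)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(w.Location, w.Weather, w.Temperature)
}
```

Because `update_time` is not listed under `required`, the sketch leaves it empty when the model omits it.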

@@ -211,6 +211,15 @@ type LLMInfo struct {
 	MaxTokens int64 `yaml:"maxToken" json:"maxTokens"`
 }

+type JsonResp struct {
+	// @Title zh-CN Enable
+	// @Description zh-CN 是否要启用json格式化输出
+	Enable bool `yaml:"enable" json:"enable"`
+	// @Title zh-CN Json Schema
+	// @Description zh-CN 用以验证响应json的Json Schema, 为空则只验证返回的响应是否为合法json
+	JsonSchema map[string]interface{} `required:"false" json:"jsonSchema" yaml:"jsonSchema"`
+}
+
 type PluginConfig struct {
 	// @Title zh-CN 返回 HTTP 响应的模版
 	// @Description zh-CN 用 %s 标记需要被 cache value 替换的部分
@@ -225,6 +234,7 @@ type PluginConfig struct {
 	LLMClient wrapper.HttpClient `yaml:"-" json:"-"`
 	APIsParam []APIsParam `yaml:"-" json:"-"`
 	PromptTemplate PromptTemplate `yaml:"promptTemplate" json:"promptTemplate"`
+	JsonResp JsonResp `yaml:"jsonResp" json:"jsonResp"`
 }

 func initResponsePromptTpl(gjson gjson.Result, c *PluginConfig) {

@@ -402,3 +412,15 @@ func initLLMClient(gjson gjson.Result, c *PluginConfig) {
 		Host: c.LLMInfo.Domain,
 	})
 }
+
+func initJsonResp(gjson gjson.Result, c *PluginConfig) {
+	c.JsonResp.Enable = false
+	if c.JsonResp.Enable = gjson.Get("jsonResp.enable").Bool(); c.JsonResp.Enable {
+		c.JsonResp.JsonSchema = nil
+		if jsonSchemaValue := gjson.Get("jsonResp.jsonSchema"); jsonSchemaValue.Exists() {
+			if schemaValue, ok := jsonSchemaValue.Value().(map[string]interface{}); ok {
+				c.JsonResp.JsonSchema = schemaValue
+			}
+		}
+	}
+}
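
`initJsonResp` keeps the schema only when the configured value asserts to `map[string]interface{}`. The README tables list `jsonSchema` as a `string`, so it may be worth checking what happens to a schema supplied as a string. The sketch below reproduces the same type assertion with `encoding/json` instead of gjson (an assumption made to keep it self-contained); `schemaFromConfig` is a hypothetical helper, not plugin code:

```go
package main

import (
	"encoding/json"
	"fmt"
)

// schemaFromConfig returns jsonResp.jsonSchema only when it decodes to a JSON
// object, mirroring the type assertion in initJsonResp with the standard library.
func schemaFromConfig(raw []byte) (map[string]interface{}, bool) {
	var cfg struct {
		JsonResp struct {
			Enable     bool        `json:"enable"`
			JsonSchema interface{} `json:"jsonSchema"`
		} `json:"jsonResp"`
	}
	if err := json.Unmarshal(raw, &cfg); err != nil || !cfg.JsonResp.Enable {
		return nil, false
	}
	schema, ok := cfg.JsonResp.JsonSchema.(map[string]interface{})
	return schema, ok
}

func main() {
	asObject := []byte(`{"jsonResp":{"enable":true,"jsonSchema":{"title":"WeatherSchema","type":"object"}}}`)
	asString := []byte(`{"jsonResp":{"enable":true,"jsonSchema":"title: WeatherSchema\ntype: object\n"}}`)

	_, ok := schemaFromConfig(asObject)
	fmt.Println("object schema kept:", ok)
	_, ok = schemaFromConfig(asString)
	fmt.Println("string schema kept:", ok)
}
```

Under these assumptions a string-valued schema is dropped without an error, and formatting proceeds as if no schema had been configured.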

@@ -2,8 +2,10 @@ package main

 import (
 	"encoding/json"
+	"errors"
 	"fmt"
 	"net/http"
+	"net/url"
 	"regexp"
 	"strings"

@@ -47,6 +49,8 @@ func parseConfig(gjson gjson.Result, c *PluginConfig, log wrapper.Log) error {

 	initLLMClient(gjson, c)

+	initJsonResp(gjson, c)
+
 	return nil
 }

@@ -76,10 +80,10 @@ func firstReq(ctx wrapper.HttpContext, config PluginConfig, prompt string, rawRe
 		log.Debugf("[onHttpRequestBody] newRequestBody: %s", string(newbody))
 		err := proxywasm.ReplaceHttpRequestBody(newbody)
 		if err != nil {
-			log.Debug("替换失败")
+			log.Debugf("failed replace err: %s", err.Error())
 			proxywasm.SendHttpResponse(200, [][2]string{{"content-type", "application/json; charset=utf-8"}}, []byte(fmt.Sprintf(config.ReturnResponseTemplate, "替换失败"+err.Error())), -1)
 		}
-		log.Debug("[onHttpRequestBody] request替换成功")
+		log.Debug("[onHttpRequestBody] replace request success")
 		return types.ActionContinue
 	}
 }

@@ -175,11 +179,103 @@ func onHttpResponseHeaders(ctx wrapper.HttpContext, config PluginConfig, log wra
 	return types.ActionContinue
 }

-func toolsCallResult(ctx wrapper.HttpContext, config PluginConfig, content string, rawResponse Response, log wrapper.Log, statusCode int, responseBody []byte) {
+func extractJson(bodyStr string) (string, error) {
+	// simply extract json from response body string
+	startIndex := strings.Index(bodyStr, "{")
+	endIndex := strings.LastIndex(bodyStr, "}") + 1
+
+	// if not found
+	if startIndex == -1 || startIndex >= endIndex {
+		return "", errors.New("cannot find json in the response body")
+	}
+
+	jsonStr := bodyStr[startIndex:endIndex]
+
+	// attempt to parse the JSON
+	var result map[string]interface{}
+	err := json.Unmarshal([]byte(jsonStr), &result)
+	if err != nil {
+		return "", err
+	}
+	return jsonStr, nil
+}
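
To see how `extractJson` behaves on typical model output, the function can be run on its own. The sketch below copies it unchanged from the hunk above and feeds it three made-up inputs (the sample strings are illustrative, not taken from real responses):

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
	"strings"
)

// extractJson is copied from the diff: it takes the span from the first "{"
// to the last "}" and accepts it only if it parses as a JSON object.
func extractJson(bodyStr string) (string, error) {
	startIndex := strings.Index(bodyStr, "{")
	endIndex := strings.LastIndex(bodyStr, "}") + 1

	if startIndex == -1 || startIndex >= endIndex {
		return "", errors.New("cannot find json in the response body")
	}

	jsonStr := bodyStr[startIndex:endIndex]

	var result map[string]interface{}
	if err := json.Unmarshal([]byte(jsonStr), &result); err != nil {
		return "", err
	}
	return jsonStr, nil
}

func main() {
	inputs := []string{
		"Sure, here it is: {\"city\": \"Beijing\"} Hope that helps.", // JSON wrapped in prose
		"no json here",              // no braces at all
		"{\"a\": 1} and {\"b\": 2}", // two objects: the span between the outer braces is not valid JSON
	}
	for _, in := range inputs {
		out, err := extractJson(in)
		fmt.Printf("%q -> %q, err=%v\n", in, out, err)
	}
}
```

The third input shows a limit of the first-brace-to-last-brace approach: when the text holds more than one object, extraction fails and `jsonFormat` falls back to the raw content.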

+
+func jsonFormat(llmClient wrapper.HttpClient, llmInfo LLMInfo, jsonSchema map[string]interface{}, assistantMessage Message, actionInput string, headers [][2]string, streamMode bool, rawResponse Response, log wrapper.Log) string {
+	prompt := fmt.Sprintf(prompttpl.Json_Resp_Template, jsonSchema, actionInput)
+
+	messages := []dashscope.Message{{Role: "user", Content: prompt}}
+
+	completion := dashscope.Completion{
+		Model: llmInfo.Model,
+		Messages: messages,
+	}
+
+	completionSerialized, _ := json.Marshal(completion)
+	var content string
+	err := llmClient.Post(
+		llmInfo.Path,
+		headers,
+		completionSerialized,
+		func(statusCode int, responseHeaders http.Header, responseBody []byte) {
+			// get the result returned by gpt
+			var responseCompletion dashscope.CompletionResponse
+			_ = json.Unmarshal(responseBody, &responseCompletion)
+			log.Infof("[jsonFormat] content: %s", responseCompletion.Choices[0].Message.Content)
+			content = responseCompletion.Choices[0].Message.Content
+			jsonStr, err := extractJson(content)
+			if err != nil {
+				log.Debugf("[onHttpRequestBody] extractJson err: %s", err.Error())
+				jsonStr = content
+			}
+
+			if streamMode {
+				stream(jsonStr, rawResponse, log)
+			} else {
+				noneStream(assistantMessage, jsonStr, rawResponse, log)
+			}
+		}, uint32(llmInfo.MaxExecutionTime))
+	if err != nil {
+		log.Debugf("[onHttpRequestBody] completion err: %s", err.Error())
+		proxywasm.ResumeHttpRequest()
+	}
+	return content
+}
+
+func noneStream(assistantMessage Message, actionInput string, rawResponse Response, log wrapper.Log) {
+	assistantMessage.Role = "assistant"
+	assistantMessage.Content = actionInput
+	rawResponse.Choices[0].Message = assistantMessage
+	newbody, err := json.Marshal(rawResponse)
+	if err != nil {
+		proxywasm.ResumeHttpResponse()
+		return
+	} else {
+		proxywasm.ReplaceHttpResponseBody(newbody)
+
+		log.Debug("[onHttpResponseBody] replace response success")
+		proxywasm.ResumeHttpResponse()
+	}
+}
+
+func stream(actionInput string, rawResponse Response, log wrapper.Log) {
+	headers := [][2]string{{"content-type", "text/event-stream; charset=utf-8"}}
+	proxywasm.ReplaceHttpResponseHeaders(headers)
+	// Remove quotes from actionInput
+	actionInput = strings.Trim(actionInput, "\"")
+	returnStreamResponseTemplate := `data:{"id":"%s","choices":[{"index":0,"delta":{"role":"assistant","content":"%s"},"finish_reason":"stop"}],"model":"%s","object":"chat.completion","usage":{"prompt_tokens":%d,"completion_tokens":%d,"total_tokens":%d}}` + "\n\ndata:[DONE]\n\n"
+	newbody := fmt.Sprintf(returnStreamResponseTemplate, rawResponse.ID, actionInput, rawResponse.Model, rawResponse.Usage.PromptTokens, rawResponse.Usage.CompletionTokens, rawResponse.Usage.TotalTokens)
+	log.Infof("[onHttpResponseBody] newResponseBody: ", newbody)
+	proxywasm.ReplaceHttpResponseBody([]byte(newbody))
+
+	log.Debug("[onHttpResponseBody] replace response success")
+	proxywasm.ResumeHttpResponse()
+}

+
+func toolsCallResult(ctx wrapper.HttpContext, llmClient wrapper.HttpClient, llmInfo LLMInfo, jsonResp JsonResp, aPIsParam []APIsParam, aPIClient []wrapper.HttpClient, content string, rawResponse Response, log wrapper.Log, statusCode int, responseBody []byte) {
 	if statusCode != http.StatusOK {
 		log.Debugf("statusCode: %d", statusCode)
 	}
-	log.Info("========函数返回结果========")
+	log.Info("========function result========")
 	log.Infof(string(responseBody))

 	observation := "Observation: " + string(responseBody)
@@ -187,15 +283,15 @@ func toolsCallResult(ctx wrapper.HttpContext, config PluginConfig, content strin
 	dashscope.MessageStore.AddForUser(observation)

 	completion := dashscope.Completion{
-		Model: config.LLMInfo.Model,
+		Model: llmInfo.Model,
 		Messages: dashscope.MessageStore,
-		MaxTokens: config.LLMInfo.MaxTokens,
+		MaxTokens: llmInfo.MaxTokens,
 	}

-	headers := [][2]string{{"Content-Type", "application/json"}, {"Authorization", "Bearer " + config.LLMInfo.APIKey}}
+	headers := [][2]string{{"Content-Type", "application/json"}, {"Authorization", "Bearer " + llmInfo.APIKey}}
 	completionSerialized, _ := json.Marshal(completion)
-	err := config.LLMClient.Post(
-		config.LLMInfo.Path,
+	err := llmClient.Post(
+		llmInfo.Path,
 		headers,
 		completionSerialized,
 		func(statusCode int, responseHeaders http.Header, responseBody []byte) {
@@ -205,42 +301,31 @@ func toolsCallResult(ctx wrapper.HttpContext, config PluginConfig, content strin
 			log.Infof("[toolsCall] content: %s", responseCompletion.Choices[0].Message.Content)

 			if responseCompletion.Choices[0].Message.Content != "" {
-				retType, actionInput := toolsCall(ctx, config, responseCompletion.Choices[0].Message.Content, rawResponse, log)
+				retType, actionInput := toolsCall(ctx, llmClient, llmInfo, jsonResp, aPIsParam, aPIClient, responseCompletion.Choices[0].Message.Content, rawResponse, log)
 				if retType == types.ActionContinue {
 					// got the Final Answer
 					var assistantMessage Message
+					var streamMode bool
 					if ctx.GetContext(StreamContextKey) == nil {
-						assistantMessage.Role = "assistant"
-						assistantMessage.Content = actionInput
-						rawResponse.Choices[0].Message = assistantMessage
-						newbody, err := json.Marshal(rawResponse)
-						if err != nil {
-							proxywasm.ResumeHttpResponse()
-							return
+						streamMode = false
+						if jsonResp.Enable {
+							jsonFormat(llmClient, llmInfo, jsonResp.JsonSchema, assistantMessage, actionInput, headers, streamMode, rawResponse, log)
 						} else {
-							proxywasm.ReplaceHttpResponseBody(newbody)
-
-							log.Debug("[onHttpResponseBody] response替换成功")
-							proxywasm.ResumeHttpResponse()
+							noneStream(assistantMessage, actionInput, rawResponse, log)
 						}
 					} else {
-						headers := [][2]string{{"content-type", "text/event-stream; charset=utf-8"}}
-						proxywasm.ReplaceHttpResponseHeaders(headers)
-						// Remove quotes from actionInput
-						actionInput = strings.Trim(actionInput, "\"")
-						returnStreamResponseTemplate := `data:{"id":"%s","choices":[{"index":0,"delta":{"role":"assistant","content":"%s"},"finish_reason":"stop"}],"model":"%s","object":"chat.completion","usage":{"prompt_tokens":%d,"completion_tokens":%d,"total_tokens":%d}}` + "\n\ndata:[DONE]\n\n"
-						newbody := fmt.Sprintf(returnStreamResponseTemplate, rawResponse.ID, actionInput, rawResponse.Model, rawResponse.Usage.PromptTokens, rawResponse.Usage.CompletionTokens, rawResponse.Usage.TotalTokens)
-						log.Infof("[onHttpResponseBody] newResponseBody: ", newbody)
-						proxywasm.ReplaceHttpResponseBody([]byte(newbody))
-
-						log.Debug("[onHttpResponseBody] response替换成功")
-						proxywasm.ResumeHttpResponse()
+						streamMode = true
+						if jsonResp.Enable {
+							jsonFormat(llmClient, llmInfo, jsonResp.JsonSchema, assistantMessage, actionInput, headers, streamMode, rawResponse, log)
+						} else {
+							stream(actionInput, rawResponse, log)
+						}
 					}
 				}
 			} else {
 				proxywasm.ResumeHttpRequest()
 			}
-		}, uint32(config.LLMInfo.MaxExecutionTime))
+		}, uint32(llmInfo.MaxExecutionTime))
 	if err != nil {
 		log.Debugf("[onHttpRequestBody] completion err: %s", err.Error())
 		proxywasm.ResumeHttpRequest()

@@ -294,7 +379,7 @@ func outputParser(response string, log wrapper.Log) (string, string) {
 	return "", ""
 }

-func toolsCall(ctx wrapper.HttpContext, config PluginConfig, content string, rawResponse Response, log wrapper.Log) (types.Action, string) {
+func toolsCall(ctx wrapper.HttpContext, llmClient wrapper.HttpClient, llmInfo LLMInfo, jsonResp JsonResp, aPIsParam []APIsParam, aPIClient []wrapper.HttpClient, content string, rawResponse Response, log wrapper.Log) (types.Action, string) {
 	dashscope.MessageStore.AddForAssistant(content)

 	action, actionInput := outputParser(content, log)
@@ -305,9 +390,9 @@ func toolsCall(ctx wrapper.HttpContext, config PluginConfig, content string, raw
 	}
 	count := ctx.GetContext(ToolCallsCount).(int)
 	count++
-	log.Debugf("toolCallsCount:%d, config.LLMInfo.MaxIterations=%d", count, config.LLMInfo.MaxIterations)
+	log.Debugf("toolCallsCount:%d, config.LLMInfo.MaxIterations=%d", count, llmInfo.MaxIterations)
 	// the number of recursive calls has reached the preset iteration limit; force termination
-	if int64(count) > config.LLMInfo.MaxIterations {
+	if int64(count) > llmInfo.MaxIterations {
 		ctx.SetContext(ToolCallsCount, 0)
 		return types.ActionContinue, ""
 	} else {
@@ -316,15 +401,14 @@ func toolsCall(ctx wrapper.HttpContext, config PluginConfig, content string, raw

 		// no final answer yet

-		var url string
+		var urlStr string
 		var headers [][2]string
 		var apiClient wrapper.HttpClient
 		var method string
 		var reqBody []byte
-		var key string
 		var maxExecutionTime int64

-		for i, apisParam := range config.APIsParam {
+		for i, apisParam := range aPIsParam {
 			maxExecutionTime = apisParam.MaxExecutionTime
 			for _, tools_param := range apisParam.ToolsParam {
 				if action == tools_param.ToolName {

@@ -340,28 +424,37 @@ func toolsCall(ctx wrapper.HttpContext, config PluginConfig, content string, raw

 					method = tools_param.Method

-					// assemble headers and key
-					headers = [][2]string{{"Content-Type", "application/json"}}
-					if apisParam.APIKey.Name != "" {
-						if apisParam.APIKey.In == "query" {
-							key = "?" + apisParam.APIKey.Name + "=" + apisParam.APIKey.Value
-						} else if apisParam.APIKey.In == "header" {
-							headers = append(headers, [2]string{"Authorization", apisParam.APIKey.Name + " " + apisParam.APIKey.Value})
+					// assemble the URL and request body
+					urlStr = apisParam.URL + tools_param.Path
+
+					// parse the URL template to find path parameters
+					urlParts := strings.Split(urlStr, "/")
+					for i, part := range urlParts {
+						if strings.Contains(part, "{") && strings.Contains(part, "}") {
+							for _, param := range tools_param.ParamName {
+								paramNameInPath := part[1 : len(part)-1]
+								if paramNameInPath == param {
+									if value, ok := data[param]; ok {
+										// delete parameters that have already been used
+										delete(data, param)
+										// replace the placeholder in the template
+										urlParts[i] = url.QueryEscape(value.(string))
+									}
+								}
+							}
 						}
 					}

-					// assemble the URL and request body
-					url = apisParam.URL + tools_param.Path + key
+					// reassemble the URL
+					urlStr = strings.Join(urlParts, "/")
+
+					queryParams := make([][2]string, 0)
 					if method == "GET" {
-						queryParams := make([]string, 0, len(tools_param.ParamName))
 						for _, param := range tools_param.ParamName {
 							if value, ok := data[param]; ok {
-								queryParams = append(queryParams, fmt.Sprintf("%s=%v", param, value))
+								queryParams = append(queryParams, [2]string{param, fmt.Sprintf("%v", value)})
 							}
 						}
-						if len(queryParams) > 0 {
-							url += "&" + strings.Join(queryParams, "&")
-						}
 					} else if method == "POST" {
 						var err error
 						reqBody, err = json.Marshal(data)
@@ -371,9 +464,30 @@ func toolsCall(ctx wrapper.HttpContext, config PluginConfig, content string, raw
 						}
 					}

-					log.Infof("url: %s", url)
+					// assemble headers and key
+					headers = [][2]string{{"Content-Type", "application/json"}}
+					if apisParam.APIKey.Name != "" {
+						if apisParam.APIKey.In == "query" {
+							queryParams = append(queryParams, [2]string{apisParam.APIKey.Name, apisParam.APIKey.Value})
+						} else if apisParam.APIKey.In == "header" {
+							headers = append(headers, [2]string{"Authorization", apisParam.APIKey.Name + " " + apisParam.APIKey.Value})
+						}
+					}

-					apiClient = config.APIClient[i]
+					if len(queryParams) > 0 {
+						// append the key to the url
+						urlStr += "?"
+						for i, param := range queryParams {
+							if i != 0 {
+								urlStr += "&"
+							}
+							urlStr += url.QueryEscape(param[0]) + "=" + url.QueryEscape(param[1])
+						}
+					}
+
+					log.Debugf("url: %s", urlStr)
+
+					apiClient = aPIClient[i]
 					break
 				}
 			}
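
The URL assembly in the two hunks above (fill `{name}` path segments, then append escaped query pairs) can be tried in isolation. The sketch below is a simplified stand-in, not the plugin code: `buildURL` and the example host are hypothetical, it does not remove used parameters from a shared map, and it uses `url.PathEscape` for path segments where the diff uses `url.QueryEscape`. That swap is deliberate, because `QueryEscape` encodes a space as `+`, which is only conventional in query strings.

```go
package main

import (
	"fmt"
	"net/url"
	"strings"
)

// buildURL fills {name} path segments from params and appends the entries of
// query as an escaped query string.
func buildURL(base, pathTemplate string, params map[string]string, query [][2]string) string {
	parts := strings.Split(base+pathTemplate, "/")
	for i, part := range parts {
		if strings.HasPrefix(part, "{") && strings.HasSuffix(part, "}") {
			name := part[1 : len(part)-1]
			if value, ok := params[name]; ok {
				// Assumption: PathEscape, so a space in the path becomes %20.
				parts[i] = url.PathEscape(value)
			}
		}
	}
	result := strings.Join(parts, "/")
	for i, kv := range query {
		sep := "&"
		if i == 0 {
			sep = "?"
		}
		result += sep + url.QueryEscape(kv[0]) + "=" + url.QueryEscape(kv[1])
	}
	return result
}

func main() {
	u := buildURL(
		"https://api.example.com",
		"/v1/cities/{city}/weather",
		map[string]string{"city": "New York"},
		[][2]string{{"key", "a b&c"}, {"lang", "en"}},
	)
	fmt.Println(u)
}
```

Collecting the API key and the tool parameters in one list before joining, as the new code does, avoids the old behavior of starting the query with `&` when no key was configured.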

@@ -382,11 +496,11 @@ func toolsCall(ctx wrapper.HttpContext, config PluginConfig, content string, raw
 		if apiClient != nil {
 			err := apiClient.Call(
 				method,
-				url,
+				urlStr,
 				headers,
 				reqBody,
 				func(statusCode int, responseHeaders http.Header, responseBody []byte) {
-					toolsCallResult(ctx, config, content, rawResponse, log, statusCode, responseBody)
+					toolsCallResult(ctx, llmClient, llmInfo, jsonResp, aPIsParam, aPIClient, content, rawResponse, log, statusCode, responseBody)
 				}, uint32(maxExecutionTime))
 			if err != nil {
 				log.Debugf("tool calls error: %s", err.Error())
@@ -415,7 +529,7 @@ func onHttpResponseBody(ctx wrapper.HttpContext, config PluginConfig, body []byt
 	// if the content returned by gpt is not empty
 	if rawResponse.Choices[0].Message.Content != "" {
 		// enter the agent's loop of thinking and tool calls
-		retType, _ := toolsCall(ctx, config, rawResponse.Choices[0].Message.Content, rawResponse, log)
+		retType, _ := toolsCall(ctx, config.LLMClient, config.LLMInfo, config.JsonResp, config.APIsParam, config.APIClient, rawResponse.Choices[0].Message.Content, rawResponse, log)
 		return retType
 	} else {
 		return types.ActionContinue


@@ -167,3 +167,7 @@ Action:` + "```" + `
 %s
 Question: %s
 `
+const Json_Resp_Template = `
+Given the Json Schema: %s, please help me convert the following content to a pure json: %s
+Do not respond other content except the pure json!!!!
+`
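
`jsonFormat` passes the schema, a `map[string]interface{}`, straight to the first `%s` of this template. `fmt` renders a map in Go's `map[key:value]` notation, and non-string values such as `additionalProperties: false` come out as formatting errors, so the model never sees the schema as JSON. A minimal sketch of one alternative, serializing the schema first; `buildPrompt` and the lower-case `jsonRespTemplate` constant are hypothetical names used only for this illustration:

```go
package main

import (
	"encoding/json"
	"fmt"
)

// jsonRespTemplate repeats the template text from the diff.
const jsonRespTemplate = `
Given the Json Schema: %s, please help me convert the following content to a pure json: %s
Do not respond other content except the pure json!!!!
`

// buildPrompt marshals the schema to JSON text before substituting it.
func buildPrompt(schema map[string]interface{}, content string) (string, error) {
	b, err := json.Marshal(schema)
	if err != nil {
		return "", err
	}
	// %s with a []byte argument prints the bytes as a string.
	return fmt.Sprintf(jsonRespTemplate, b, content), nil
}

func main() {
	schema := map[string]interface{}{
		"title":                "WeatherSchema",
		"type":                 "object",
		"additionalProperties": false,
	}
	prompt, err := buildPrompt(schema, "Beijing is cloudy, 19℃.")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Print(prompt)
	// For comparison, the direct substitution used in jsonFormat:
	fmt.Printf("direct: %s\n", schema)
}
```

When no schema is configured the map is nil and `json.Marshal` yields `null`, which a caller may want to special-case.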
