Mirror of https://github.com/alibaba/higress.git (synced 2026-03-10 03:30:48 +08:00)

Compare commits: update-hel...f2fcd68ef8 (24 commits)

| Author | SHA1 | Date | |
|---|---|---|---|
| | f2fcd68ef8 | | |
| | cbcc3ecf43 | | |
| | a92c89ce61 | | |
| | 819f773297 | | |
| | 255f0bde76 | | |
| | a2eb599eff | | |
| | 3a28a9b6a7 | | |
| | 399d2f372e | | |
| | ac69eb5b27 | | |
| | 9d8a1c2e95 | | |
| | fb71d7b33d | | |
| | eb7b22d2b9 | | |
| | f1a5f18c78 | | |
| | e7010256fe | | |
| | 5e787b3258 | | |
| | 23fbe0e9e9 | | |
| | 72c87b3e15 | | |
| | 78d4b33424 | | |
| | b09793c3d4 | | |
| | 5d7a30783f | | |
| | b98b51ef06 | | |
| | 9c11c5406f | | |
| | 10ca6d9515 | | |
| | 08a7204085 | | |

13

README.md


@@ -45,7 +45,7 @@ Higress was born within Alibaba to solve the issues of Tengine reload affecting

|

||||

|

||||

You can click the button below to install the enterprise version of Higress:

|

||||

|

||||

[](https://www.aliyun.com/product/apigateway?spm=higress-github.topbar.0.0.0)

|

||||

[](https://www.aliyun.com/product/api-gateway?spm=higress-github.topbar.0.0.0)

|

||||

|

||||

|

||||

If you use open-source Higress and want enterprise-level support, you can contact the project maintainer johnlanni by email (**zty98751@alibaba-inc.com**) or through social media (WeChat ID: **nomadao**, DingTalk ID: **chengtanzty**). Please mention **Higress** when adding as a friend :)

|

||||

@@ -119,7 +119,16 @@ If you are deploying on the cloud, it is recommended to use the [Enterprise Edit

|

||||

|

||||

Higress can function as a feature-rich ingress controller, which is compatible with many annotations of K8s' nginx ingress controller.

|

||||

|

||||

[Gateway API](https://gateway-api.sigs.k8s.io/) support is coming soon and will support smooth migration from Ingress API to Gateway API.

|

||||

[Gateway API](https://gateway-api.sigs.k8s.io/) is already supported, including a smooth migration from the Ingress API to the Gateway API.

|

||||

|

||||

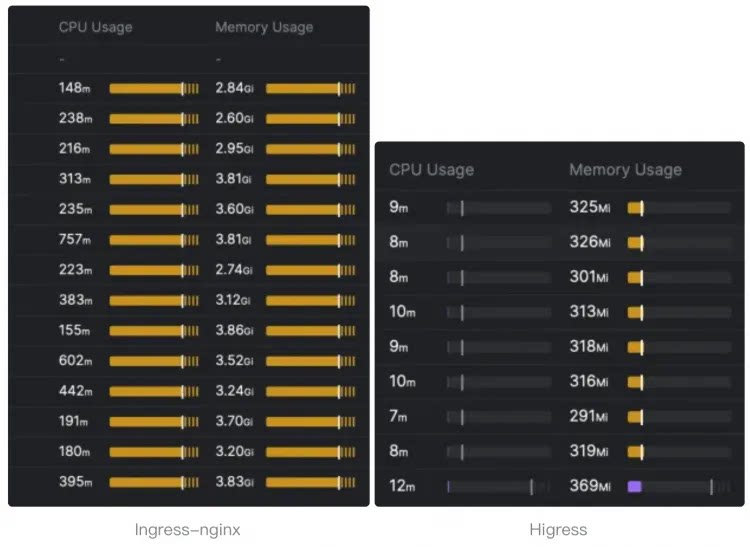

Compared to ingress-nginx, the resource overhead has significantly decreased, and the speed at which route changes take effect has improved by ten times.

|

||||

|

||||

> The following resource overhead comparison comes from [sealos](https://github.com/labring).

|

||||

>

|

||||

> For details, you can read this [article](https://sealos.io/blog/sealos-envoy-vs-nginx-2000-tenants) to understand how sealos migrates the monitoring of **tens of thousands of ingress** resources from nginx ingress to higress.

|

||||

|

||||

|

||||

|

||||

|

||||

- **Microservice gateway**:

|

||||

|

||||

|

||||

@@ -250,6 +250,10 @@ template:

|

||||

tolerations:

|

||||

{{- toYaml . | nindent 6 }}

|

||||

{{- end }}

|

||||

{{- with .Values.gateway.topologySpreadConstraints }}

|

||||

topologySpreadConstraints:

|

||||

{{- toYaml . | nindent 6 }}

|

||||

{{- end }}

|

||||

volumes:

|

||||

- emptyDir: {}

|

||||

name: workload-socket

|

||||

|

||||

@@ -301,6 +301,10 @@ spec:

|

||||

tolerations:

|

||||

{{- toYaml . | nindent 8 }}

|

||||

{{- end }}

|

||||

{{- with .Values.controller.topologySpreadConstraints }}

|

||||

topologySpreadConstraints:

|

||||

{{- toYaml . | nindent 8 }}

|

||||

{{- end }}

|

||||

volumes:

|

||||

- name: log

|

||||

emptyDir: {}

|

||||

|

||||

@@ -24,9 +24,6 @@ spec:

|

||||

{{- end }}

|

||||

{{- with .Values.gateway.service.externalTrafficPolicy }}

|

||||

externalTrafficPolicy: "{{ . }}"

|

||||

{{- end }}

|

||||

{{- with .Values.gateway.service.loadBalancerClass}}

|

||||

loadBalancerClass: "{{ . }}"

|

||||

{{- end }}

|

||||

type: {{ .Values.gateway.service.type }}

|

||||

ports:

|

||||

|

||||

@@ -524,6 +524,8 @@ gateway:

|

||||

|

||||

affinity: {}

|

||||

|

||||

topologySpreadConstraints: []

|

||||

|

||||

# -- If specified, the gateway will act as a network gateway for the given network.

|

||||

networkGateway: ""

|

||||

|

||||

@@ -631,6 +633,8 @@ controller:

|

||||

|

||||

affinity: {}

|

||||

|

||||

topologySpreadConstraints: []

|

||||

|

||||

autoscaling:

|

||||

enabled: false

|

||||

minReplicas: 1

|

||||

|

||||

@@ -83,6 +83,7 @@ The command removes all the Kubernetes components associated with the chart and

|

||||

| controller.serviceAccount.name | string | `""` | If not set and create is true, a name is generated using the fullname template |

|

||||

| controller.tag | string | `""` | |

|

||||

| controller.tolerations | list | `[]` | |

|

||||

| controller.topologySpreadConstraints | list | `[]` | |

|

||||

| downstream | object | `{"connectionBufferLimits":32768,"http2":{"initialConnectionWindowSize":1048576,"initialStreamWindowSize":65535,"maxConcurrentStreams":100},"idleTimeout":180,"maxRequestHeadersKb":60,"routeTimeout":0}` | Downstream config settings |

|

||||

| gateway.affinity | object | `{}` | |

|

||||

| gateway.annotations | object | `{}` | Annotations to apply to all resources |

|

||||

@@ -152,6 +153,7 @@ The command removes all the Kubernetes components associated with the chart and

|

||||

| gateway.serviceAccount.name | string | `""` | The name of the service account to use. If not set, the release name is used |

|

||||

| gateway.tag | string | `""` | |

|

||||

| gateway.tolerations | list | `[]` | |

|

||||

| gateway.topologySpreadConstraints | list | `[]` | |

|

||||

| gateway.unprivilegedPortSupported | string | `nil` | |

|

||||

| global.autoscalingv2API | bool | `true` | whether to use autoscaling/v2 template for HPA settings for internal usage only, not to be configured by users. |

|

||||

| global.caAddress | string | `""` | The customized CA address to retrieve certificates for the pods in the cluster. CSR clients such as the Istio Agent and ingress gateways can use this to specify the CA endpoint. If not set explicitly, default to the Istio discovery address. |

|

||||

|

||||

Submodule istio/istio updated: 3d7792ae28...c4703274ca

@@ -16,12 +16,13 @@ package bootstrap

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"istio.io/istio/pkg/config/mesh/meshwatcher"

|

||||

"istio.io/istio/pkg/kube/krt"

|

||||

"net"

|

||||

"net/http"

|

||||

"time"

|

||||

|

||||

"istio.io/istio/pkg/config/mesh/meshwatcher"

|

||||

"istio.io/istio/pkg/kube/krt"

|

||||

|

||||

prometheus "github.com/grpc-ecosystem/go-grpc-prometheus"

|

||||

"google.golang.org/grpc"

|

||||

"google.golang.org/grpc/reflection"

|

||||

@@ -436,10 +437,17 @@ func (s *Server) initHttpServer() error {

|

||||

}

|

||||

s.xdsServer.AddDebugHandlers(s.httpMux, nil, true, nil)

|

||||

s.httpMux.HandleFunc("/ready", s.readyHandler)

|

||||

s.httpMux.HandleFunc("/registry/watcherStatus", s.registryWatcherStatusHandler)

|

||||

s.httpMux.HandleFunc("/registry/watcherStatus", s.withConditionalAuth(s.registryWatcherStatusHandler))

|

||||

return nil

|

||||

}

|

||||

|

||||

func (s *Server) withConditionalAuth(handler http.HandlerFunc) http.HandlerFunc {

|

||||

if features.DebugAuth {

|

||||

return s.xdsServer.AllowAuthenticatedOrLocalhost(handler)

|

||||

}

|

||||

return handler

|

||||

}

|

||||

|

||||

// readyHandler checks whether the http server is ready

|

||||

func (s *Server) readyHandler(w http.ResponseWriter, _ *http.Request) {

|

||||

for name, fn := range s.readinessProbes {
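The `withConditionalAuth` change above wraps a handler with an access check only when a feature flag is on. A minimal, self-contained sketch of that pattern follows. It is an illustration under stated assumptions, not the Higress code: `localhostOnly` is a hypothetical guard that only admits loopback clients (the real `AllowAuthenticatedOrLocalhost` also accepts authenticated callers), and the `enabled` parameter stands in for `features.DebugAuth`.

```go
package main

import (
	"fmt"
	"net"
	"net/http"
	"net/http/httptest"
)

// localhostOnly is a hypothetical guard: it rejects every request whose
// remote address is not a loopback IP. It stands in for a real authenticator.
func localhostOnly(next http.HandlerFunc) http.HandlerFunc {
	return func(w http.ResponseWriter, r *http.Request) {
		host, _, err := net.SplitHostPort(r.RemoteAddr)
		ip := net.ParseIP(host)
		if err != nil || ip == nil || !ip.IsLoopback() {
			http.Error(w, "forbidden", http.StatusForbidden)
			return
		}
		next(w, r)
	}
}

// withConditionalAuth returns the handler unchanged when the check is
// disabled, and the guarded handler when it is enabled.
func withConditionalAuth(enabled bool, h http.HandlerFunc) http.HandlerFunc {
	if enabled {
		return localhostOnly(h)
	}
	return h
}

// statusFor issues one in-memory request from remoteAddr and reports the
// HTTP status code the (possibly guarded) handler produced.
func statusFor(enabled bool, remoteAddr string) int {
	h := withConditionalAuth(enabled, func(w http.ResponseWriter, _ *http.Request) {
		w.WriteHeader(http.StatusOK)
	})
	req := httptest.NewRequest(http.MethodGet, "/registry/watcherStatus", nil)
	req.RemoteAddr = remoteAddr
	rec := httptest.NewRecorder()
	h(rec, req)
	return rec.Code
}

func main() {
	fmt.Println(statusFor(true, "127.0.0.1:5000"))    // guarded, loopback client
	fmt.Println(statusFor(true, "203.0.113.9:5000"))  // guarded, remote client
	fmt.Println(statusFor(false, "203.0.113.9:5000")) // guard disabled
}
```

Deciding once at registration time, rather than checking the flag on every request, keeps the unguarded path identical to the original handler.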

|

||||

|

||||

@@ -26,8 +26,8 @@ type config struct {

|

||||

matchList []common.MatchRule

|

||||

enableUserLevelServer bool

|

||||

rateLimitConfig *handler.MCPRatelimitConfig

|

||||

defaultServer *common.SSEServer

|

||||

redisClient *common.RedisClient

|

||||

sharedMCPServer *common.MCPServer // Created once, thread-safe with sync.RWMutex

|

||||

}

|

||||

|

||||

func (c *config) Destroy() {

|

||||

@@ -110,6 +110,9 @@ func (p *Parser) Parse(any *anypb.Any, callbacks api.ConfigCallbackHandler) (int

|

||||

}

|

||||

GlobalSSEPathSuffix = ssePathSuffix

|

||||

|

||||

// Create shared MCPServer once during config parsing (thread-safe with sync.RWMutex)

|

||||

conf.sharedMCPServer = common.NewMCPServer(DefaultServerName, Version)

|

||||

|

||||

return conf, nil

|

||||

}

|

||||

|

||||

@@ -125,9 +128,6 @@ func (p *Parser) Merge(parent interface{}, child interface{}) interface{} {

|

||||

if childConfig.rateLimitConfig != nil {

|

||||

newConfig.rateLimitConfig = childConfig.rateLimitConfig

|

||||

}

|

||||

if childConfig.defaultServer != nil {

|

||||

newConfig.defaultServer = childConfig.defaultServer

|

||||

}

|

||||

return &newConfig

|

||||

}

|

||||

|

||||

|

||||

@@ -37,6 +37,7 @@ type filter struct {

|

||||

skipRequestBody bool

|

||||

skipResponseBody bool

|

||||

cachedResponseBody []byte

|

||||

sseServer *common.SSEServer // SSE server instance for this filter (per-request, not shared)

|

||||

|

||||

userLevelConfig bool

|

||||

mcpConfigHandler *handler.MCPConfigHandler

|

||||

@@ -135,11 +136,13 @@ func (f *filter) processMcpRequestHeadersForRestUpstream(header api.RequestHeade

|

||||

trimmed += "?" + rq

|

||||

}

|

||||

|

||||

f.config.defaultServer = common.NewSSEServer(common.NewMCPServer(DefaultServerName, Version),

|

||||

// Create SSE server instance for this filter (per-request, not shared)

|

||||

// MCPServer is shared (thread-safe), but SSEServer must be per-request (contains request-specific messageEndpoint)

|

||||

f.sseServer = common.NewSSEServer(f.config.sharedMCPServer,

|

||||

common.WithSSEEndpoint(GlobalSSEPathSuffix),

|

||||

common.WithMessageEndpoint(trimmed),

|

||||

common.WithRedisClient(f.config.redisClient))

|

||||

f.serverName = f.config.defaultServer.GetServerName()

|

||||

f.serverName = f.sseServer.GetServerName()

|

||||

body := "SSE connection create"

|

||||

f.callbacks.DecoderFilterCallbacks().SendLocalReply(http.StatusOK, body, nil, 0, "")

|

||||

}

|

||||

@@ -275,9 +278,9 @@ func (f *filter) encodeDataFromRestUpstream(buffer api.BufferInstance, endStream

|

||||

|

||||

if f.serverName != "" {

|

||||

if f.config.redisClient != nil {

|

||||

// handle default server

|

||||

// handle SSE server for this filter instance

|

||||

buffer.Reset()

|

||||

f.config.defaultServer.HandleSSE(f.callbacks, f.stopChan)

|

||||

f.sseServer.HandleSSE(f.callbacks, f.stopChan)

|

||||

return api.Running

|

||||

} else {

|

||||

_ = buffer.SetString(RedisNotEnabledResponseBody)

|

||||

|

||||

@@ -16,40 +16,91 @@ import (

|

||||

)

|

||||

|

||||

const (

|

||||

RedisKeyFormat = "higress:global_least_request_table:%s:%s"

|

||||

RedisLua = `local seed = KEYS[1]

|

||||

RedisKeyFormat = "higress:global_least_request_table:%s:%s"

|

||||

RedisLastCleanKeyFormat = "higress:global_least_request_table:last_clean_time:%s:%s"

|

||||

RedisLua = `local seed = tonumber(KEYS[1])

|

||||

local hset_key = KEYS[2]

|

||||

local current_target = KEYS[3]

|

||||

local current_count = 0

|

||||

local last_clean_key = KEYS[3]

|

||||

local clean_interval = tonumber(KEYS[4])

|

||||

local current_target = KEYS[5]

|

||||

local healthy_count = tonumber(KEYS[6])

|

||||

local enable_detail_log = KEYS[7]

|

||||

|

||||

math.randomseed(seed)

|

||||

|

||||

local function randomBool()

|

||||

return math.random() >= 0.5

|

||||

end

|

||||

-- 1. Selection

|

||||

local current_count = 0

|

||||

local same_count_hits = 0

|

||||

|

||||

if redis.call('HEXISTS', hset_key, current_target) == 1 then

|

||||

current_count = redis.call('HGET', hset_key, current_target)

|

||||

for i = 4, #KEYS do

|

||||

if redis.call('HEXISTS', hset_key, KEYS[i]) == 1 then

|

||||

local count = redis.call('HGET', hset_key, KEYS[i])

|

||||

if tonumber(count) < tonumber(current_count) then

|

||||

current_target = KEYS[i]

|

||||

current_count = count

|

||||

elseif count == current_count and randomBool() then

|

||||

current_target = KEYS[i]

|

||||

end

|

||||

end

|

||||

end

|

||||

for i = 8, 8 + healthy_count - 1 do

|

||||

local host = KEYS[i]

|

||||

local count = 0

|

||||

local val = redis.call('HGET', hset_key, host)

|

||||

if val then

|

||||

count = tonumber(val) or 0

|

||||

end

|

||||

|

||||

if same_count_hits == 0 or count < current_count then

|

||||

current_target = host

|

||||

current_count = count

|

||||

same_count_hits = 1

|

||||

elseif count == current_count then

|

||||

same_count_hits = same_count_hits + 1

|

||||

if math.random(same_count_hits) == 1 then

|

||||

current_target = host

|

||||

end

|

||||

end

|

||||

end

|

||||

|

||||

redis.call("HINCRBY", hset_key, current_target, 1)

|

||||

local new_count = redis.call("HGET", hset_key, current_target)

|

||||

|

||||

return current_target`

|

||||

-- Collect host counts for logging

|

||||

local host_details = {}

|

||||

if enable_detail_log == "1" then

|

||||

local fields = {}

|

||||

for i = 8, #KEYS do

|

||||

table.insert(fields, KEYS[i])

|

||||

end

|

||||

if #fields > 0 then

|

||||

local values = redis.call('HMGET', hset_key, (table.unpack or unpack)(fields))

|

||||

for i, val in ipairs(values) do

|

||||

table.insert(host_details, fields[i])

|

||||

table.insert(host_details, tostring(val or 0))

|

||||

end

|

||||

end

|

||||

end

|

||||

|

||||

-- 2. Cleanup

|

||||

local current_time = math.floor(seed / 1000000)

|

||||

local last_clean_time = tonumber(redis.call('GET', last_clean_key) or 0)

|

||||

|

||||

if current_time - last_clean_time >= clean_interval then

|

||||

local all_keys = redis.call('HKEYS', hset_key)

|

||||

if #all_keys > 0 then

|

||||

-- Create a lookup table for current hosts (from index 8 onwards)

|

||||

local current_hosts = {}

|

||||

for i = 8, #KEYS do

|

||||

current_hosts[KEYS[i]] = true

|

||||

end

|

||||

-- Remove keys not in current hosts

|

||||

for _, host in ipairs(all_keys) do

|

||||

if not current_hosts[host] then

|

||||

redis.call('HDEL', hset_key, host)

|

||||

end

|

||||

end

|

||||

end

|

||||

redis.call('SET', last_clean_key, current_time)

|

||||

end

|

||||

|

||||

return {current_target, new_count, host_details}`

|

||||

)

|

||||

|

||||

type GlobalLeastRequestLoadBalancer struct {

|

||||

redisClient wrapper.RedisClient

|

||||

redisClient wrapper.RedisClient

|

||||

maxRequestCount int64

|

||||

cleanInterval int64 // seconds

|

||||

enableDetailLog bool

|

||||

}

|

||||

|

||||

func NewGlobalLeastRequestLoadBalancer(json gjson.Result) (GlobalLeastRequestLoadBalancer, error) {

|

||||

@@ -72,6 +123,18 @@ func NewGlobalLeastRequestLoadBalancer(json gjson.Result) (GlobalLeastRequestLoa

|

||||

}

|

||||

// database default is 0

|

||||

database := json.Get("database").Int()

|

||||

lb.maxRequestCount = json.Get("maxRequestCount").Int()

|

||||

lb.cleanInterval = json.Get("cleanInterval").Int()

|

||||

if lb.cleanInterval == 0 {

|

||||

lb.cleanInterval = 60 * 60 // default 60 minutes

|

||||

} else {

|

||||

lb.cleanInterval = lb.cleanInterval * 60 // convert minutes to seconds

|

||||

}

|

||||

lb.enableDetailLog = true

|

||||

if val := json.Get("enableDetailLog"); val.Exists() {

|

||||

lb.enableDetailLog = val.Bool()

|

||||

}

|

||||

log.Infof("redis client init, serviceFQDN: %s, servicePort: %d, timeout: %d, database: %d, maxRequestCount: %d, cleanInterval: %d minutes, enableDetailLog: %v", serviceFQDN, servicePort, timeout, database, lb.maxRequestCount, lb.cleanInterval/60, lb.enableDetailLog)

|

||||

return lb, lb.redisClient.Init(username, password, int64(timeout), wrapper.WithDataBase(int(database)))

|

||||

}

|

||||

|

||||

@@ -100,9 +163,11 @@ func (lb GlobalLeastRequestLoadBalancer) HandleHttpRequestBody(ctx wrapper.HttpC

|

||||

ctx.SetContext("error", true)

|

||||

return types.ActionContinue

|

||||

}

|

||||

allHostMap := make(map[string]struct{})

|

||||

// Only healthy host can be selected

|

||||

healthyHostArray := []string{}

|

||||

for _, hostInfo := range hostInfos {

|

||||

allHostMap[hostInfo[0]] = struct{}{}

|

||||

if gjson.Get(hostInfo[1], "health_status").String() == "Healthy" {

|

||||

healthyHostArray = append(healthyHostArray, hostInfo[0])

|

||||

}

|

||||

@@ -113,10 +178,37 @@ func (lb GlobalLeastRequestLoadBalancer) HandleHttpRequestBody(ctx wrapper.HttpC

|

||||

}

|

||||

randomIndex := rand.Intn(len(healthyHostArray))

|

||||

hostSelected := healthyHostArray[randomIndex]

|

||||

keys := []interface{}{time.Now().UnixMicro(), fmt.Sprintf(RedisKeyFormat, routeName, clusterName), hostSelected}

|

||||

|

||||

// KEYS structure: [seed, hset_key, last_clean_key, clean_interval, host_selected, healthy_count, enable_detail_log, ...healthy_hosts, ...unhealthy_hosts]

|

||||

keys := []interface{}{

|

||||

time.Now().UnixMicro(),

|

||||

fmt.Sprintf(RedisKeyFormat, routeName, clusterName),

|

||||

fmt.Sprintf(RedisLastCleanKeyFormat, routeName, clusterName),

|

||||

lb.cleanInterval,

|

||||

hostSelected,

|

||||

len(healthyHostArray),

|

||||

"0",

|

||||

}

|

||||

if lb.enableDetailLog {

|

||||

keys[6] = "1"

|

||||

}

|

||||

for _, v := range healthyHostArray {

|

||||

keys = append(keys, v)

|

||||

}

|

||||

// Append unhealthy hosts (those in allHostMap but not in healthyHostArray)

|

||||

for host := range allHostMap {

|

||||

isHealthy := false

|

||||

for _, hh := range healthyHostArray {

|

||||

if host == hh {

|

||||

isHealthy = true

|

||||

break

|

||||

}

|

||||

}

|

||||

if !isHealthy {

|

||||

keys = append(keys, host)

|

||||

}

|

||||

}

|

||||

|

||||

err = lb.redisClient.Eval(RedisLua, len(keys), keys, []interface{}{}, func(response resp.Value) {

|

||||

if err := response.Error(); err != nil {

|

||||

log.Errorf("HGetAll failed: %+v", err)

|

||||

@@ -124,17 +216,54 @@ func (lb GlobalLeastRequestLoadBalancer) HandleHttpRequestBody(ctx wrapper.HttpC

|

||||

proxywasm.ResumeHttpRequest()

|

||||

return

|

||||

}

|

||||

hostSelected = response.String()

|

||||

valArray := response.Array()

|

||||

if len(valArray) < 2 {

|

||||

log.Errorf("redis eval lua result format error, expect at least [host, count], got: %+v", valArray)

|

||||

ctx.SetContext("error", true)

|

||||

proxywasm.ResumeHttpRequest()

|

||||

return

|

||||

}

|

||||

hostSelected = valArray[0].String()

|

||||

currentCount := valArray[1].Integer()

|

||||

|

||||

// detail log

|

||||

if lb.enableDetailLog && len(valArray) >= 3 {

|

||||

detailLogStr := "host and count: "

|

||||

details := valArray[2].Array()

|

||||

for i := 0; i+1 < len(details); i += 2 {

|

||||

h := details[i].String()

|

||||

c := details[i+1].String()

|

||||

detailLogStr += fmt.Sprintf("{%s: %s}, ", h, c)

|

||||

}

|

||||

log.Debugf("host_selected: %s + 1, %s", hostSelected, detailLogStr)

|

||||

}

|

||||

|

||||

// check rate limit

|

||||

if !lb.checkRateLimit(hostSelected, int64(currentCount), ctx, routeName, clusterName) {

|

||||

ctx.SetContext("error", true)

|

||||

log.Warnf("host_selected: %s, current_count: %d, exceed max request limit %d", hostSelected, currentCount, lb.maxRequestCount)

|

||||

// return 429

|

||||

proxywasm.SendHttpResponse(429, [][2]string{}, []byte("Exceeded maximum request limit from ai-load-balancer."), -1)

|

||||

ctx.DontReadResponseBody()

|

||||

return

|

||||

}

|

||||

|

||||

if err := proxywasm.SetUpstreamOverrideHost([]byte(hostSelected)); err != nil {

|

||||

ctx.SetContext("error", true)

|

||||

log.Errorf("override upstream host failed, fallback to default lb policy, error informations: %+v", err)

|

||||

proxywasm.ResumeHttpRequest()

|

||||

return

|

||||

}

|

||||

|

||||

log.Debugf("host_selected: %s", hostSelected)

|

||||

|

||||

// finally resume the request

|

||||

ctx.SetContext("host_selected", hostSelected)

|

||||

proxywasm.ResumeHttpRequest()

|

||||

})

|

||||

if err != nil {

|

||||

ctx.SetContext("error", true)

|

||||

log.Errorf("redis eval failed, fallback to default lb policy, error informations: %+v", err)

|

||||

return types.ActionContinue

|

||||

}

|

||||

return types.ActionPause

|

||||

@@ -161,7 +290,10 @@ func (lb GlobalLeastRequestLoadBalancer) HandleHttpStreamDone(ctx wrapper.HttpCo

|

||||

if host_selected == "" {

|

||||

log.Errorf("get host_selected failed")

|

||||

} else {

|

||||

lb.redisClient.HIncrBy(fmt.Sprintf(RedisKeyFormat, routeName, clusterName), host_selected, -1, nil)

|

||||

err := lb.redisClient.HIncrBy(fmt.Sprintf(RedisKeyFormat, routeName, clusterName), host_selected, -1, nil)

|

||||

if err != nil {

|

||||

log.Errorf("host_selected: %s - 1, failed to update count from redis: %v", host_selected, err)

|

||||

}

|

||||

}

|

||||

}

|

||||

}
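The selection step in the new Lua script picks the healthy host with the lowest count and breaks ties with reservoir sampling: the k-th host seen at the current minimum replaces the candidate with probability 1/k, so every tied host is equally likely. The Go sketch below restates only that selection logic outside Redis. The function name `pickLeastLoaded` and the injected `intn` parameter are assumptions made for illustration and do not appear in the plugin.

```go
package main

import (
	"fmt"
	"math/rand"
)

// pickLeastLoaded returns the host with the smallest in-flight count.
// Hosts missing from counts are treated as having zero requests.
// Ties are broken with reservoir sampling: the k-th host seen at the
// current minimum replaces the candidate when intn(k) == 0, which gives
// each tied host the same chance when intn is uniform over [0, k).
func pickLeastLoaded(hosts []string, counts map[string]int64, intn func(int) int) string {
	var target string
	var minCount int64
	ties := 0
	for _, h := range hosts {
		c := counts[h]
		switch {
		case ties == 0 || c < minCount:
			// First host, or a strictly smaller count: restart the reservoir.
			target, minCount, ties = h, c, 1
		case c == minCount:
			ties++
			if intn(ties) == 0 {
				target = h
			}
		}
	}
	return target
}

func main() {
	counts := map[string]int64{"host1": 3, "host2": 1, "host3": 2}
	hosts := []string{"host1", "host2", "host3"}
	// With distinct counts the random source is never consulted.
	fmt.Println(pickLeastLoaded(hosts, counts, rand.Intn))
}
```

Passing the random source as a function keeps the sketch easy to test deterministically. In the script itself the same role is played by `math.random(same_count_hits) == 1`.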

|

||||

|

||||

@@ -0,0 +1,220 @@

|

||||

-- Mocking Redis environment

|

||||

local redis_data = {

|

||||

hset = {},

|

||||

kv = {}

|

||||

}

|

||||

|

||||

local redis = {

|

||||

call = function(cmd, ...)

|

||||

local args = {...}

|

||||

if cmd == "HGET" then

|

||||

local key, field = args[1], args[2]

|

||||

return redis_data.hset[field]

|

||||

elseif cmd == "HSET" then

|

||||

local key, field, val = args[1], args[2], args[3]

|

||||

redis_data.hset[field] = val

|

||||

elseif cmd == "HINCRBY" then

|

||||

local key, field, increment = args[1], args[2], args[3]

|

||||

local val = tonumber(redis_data.hset[field] or 0)

|

||||

redis_data.hset[field] = tostring(val + increment)

|

||||

return redis_data.hset[field]

|

||||

elseif cmd == "HKEYS" then

|

||||

local keys = {}

|

||||

for k, _ in pairs(redis_data.hset) do

|

||||

table.insert(keys, k)

|

||||

end

|

||||

return keys

|

||||

elseif cmd == "HDEL" then

|

||||

local key, field = args[1], args[2]

|

||||

redis_data.hset[field] = nil

|

||||

elseif cmd == "GET" then

|

||||

return redis_data.kv[args[1]]

|

||||

elseif cmd == "HMGET" then

|

||||

local key = args[1]

|

||||

local res = {}

|

||||

for i = 2, #args do

|

||||

table.insert(res, redis_data.hset[args[i]])

|

||||

end

|

||||

return res

|

||||

elseif cmd == "SET" then

|

||||

redis_data.kv[args[1]] = args[2]

|

||||

end

|

||||

end

|

||||

}

|

||||

|

||||

-- The actual logic from lb_policy.go

|

||||

local function run_lb_logic(KEYS)

|

||||

local seed = tonumber(KEYS[1])

|

||||

local hset_key = KEYS[2]

|

||||

local last_clean_key = KEYS[3]

|

||||

local clean_interval = tonumber(KEYS[4])

|

||||

local current_target = KEYS[5]

|

||||

local healthy_count = tonumber(KEYS[6])

|

||||

local enable_detail_log = KEYS[7]

|

||||

|

||||

math.randomseed(seed)

|

||||

|

||||

-- 1. Selection

|

||||

local current_count = 0

|

||||

local same_count_hits = 0

|

||||

|

||||

for i = 8, 8 + healthy_count - 1 do

|

||||

local host = KEYS[i]

|

||||

local count = 0

|

||||

local val = redis.call('HGET', hset_key, host)

|

||||

if val then

|

||||

count = tonumber(val) or 0

|

||||

end

|

||||

|

||||

if same_count_hits == 0 or count < current_count then

|

||||

current_target = host

|

||||

current_count = count

|

||||

same_count_hits = 1

|

||||

elseif count == current_count then

|

||||

same_count_hits = same_count_hits + 1

|

||||

if math.random(same_count_hits) == 1 then

|

||||

current_target = host

|

||||

end

|

||||

end

|

||||

end

|

||||

|

||||

redis.call("HINCRBY", hset_key, current_target, 1)

|

||||

local new_count = redis.call("HGET", hset_key, current_target)

|

||||

|

||||

-- Collect host counts for logging

|

||||

local host_details = {}

|

||||

if enable_detail_log == "1" then

|

||||

local fields = {}

|

||||

for i = 8, #KEYS do

|

||||

table.insert(fields, KEYS[i])

|

||||

end

|

||||

if #fields > 0 then

|

||||

local values = redis.call('HMGET', hset_key, (table.unpack or unpack)(fields))

|

||||

for i, val in ipairs(values) do

|

||||

table.insert(host_details, fields[i])

|

||||

table.insert(host_details, tostring(val or 0))

|

||||

end

|

||||

end

|

||||

end

|

||||

|

||||

-- 2. Cleanup

|

||||

local current_time = math.floor(seed / 1000000)

|

||||

local last_clean_time = tonumber(redis.call('GET', last_clean_key) or 0)

|

||||

|

||||

if current_time - last_clean_time >= clean_interval then

|

||||

local all_keys = redis.call('HKEYS', hset_key)

|

||||

if #all_keys > 0 then

|

||||

-- Create a lookup table for current hosts (from index 8 onwards)

|

||||

local current_hosts = {}

|

||||

for i = 8, #KEYS do

|

||||

current_hosts[KEYS[i]] = true

|

||||

end

|

||||

-- Remove keys not in current hosts

|

||||

for _, host in ipairs(all_keys) do

|

||||

if not current_hosts[host] then

|

||||

redis.call('HDEL', hset_key, host)

|

||||

end

|

||||

end

|

||||

end

|

||||

redis.call('SET', last_clean_key, current_time)

|

||||

end

|

||||

|

||||

return {current_target, new_count, host_details}

|

||||

end

|

||||

|

||||

-- --- Test 1: Load Balancing Distribution ---

|

||||

print("--- Test 1: Load Balancing Distribution ---")

|

||||

local hosts = {"host1", "host2", "host3", "host4", "host5"}

|

||||

local iterations = 100000

|

||||

local results = {}

|

||||

for _, h in ipairs(hosts) do results[h] = 0 end

|

||||

|

||||

-- Reset redis

|

||||

redis_data.hset = {}

|

||||

for _, h in ipairs(hosts) do redis_data.hset[h] = "0" end

|

||||

|

||||

print(string.format("Running %d iterations with %d hosts (all counts started at 0)...", iterations, #hosts))

|

||||

|

||||

for i = 1, iterations do

|

||||

local initial_host = hosts[math.random(#hosts)]

|

||||

-- KEYS structure: [seed, hset_key, last_clean_key, clean_interval, host_selected, healthy_count, enable_detail_log, ...healthy_hosts]

|

||||

local keys = {i * 1000000, "table_key", "clean_key", 3600, initial_host, #hosts, "1"}

|

||||

for _, h in ipairs(hosts) do table.insert(keys, h) end

|

||||

|

||||

local res = run_lb_logic(keys)

|

||||

local selected = res[1]

|

||||

results[selected] = results[selected] + 1

|

||||

end

|

||||

|

||||

for _, h in ipairs(hosts) do

|

||||

local percentage = (results[h] / iterations) * 100

|

||||

print(string.format("%s: %6d (%.2f%%)", h, results[h], percentage))

|

||||

end

|

||||

|

||||

-- --- Test 2: IP Cleanup Logic ---

|

||||

print("\n--- Test 2: IP Cleanup Logic ---")

|

||||

|

||||

local function test_cleanup()

|

||||

redis_data.hset = {

|

||||

["host1"] = "10",

|

||||

["host2"] = "5",

|

||||

["old_ip_1"] = "1",

|

||||

["old_ip_2"] = "1",

|

||||

}

|

||||

redis_data.kv["clean_key"] = "1000" -- Last cleaned at 1000s

|

||||

|

||||

local current_hosts = {"host1", "host2"}

|

||||

local current_time_ms = 1000 * 1000000 + 500 * 1000000 -- 1500s (interval is 300s, let's say)

|

||||

local clean_interval = 300

|

||||

|

||||

print("Initial Redis IPs:", table.concat((function() local res={} for k,_ in pairs(redis_data.hset) do table.insert(res, k) end return res end)(), ", "))

|

||||

|

||||

-- Run logic (seed is microtime)

|

||||

local keys = {current_time_ms, "table_key", "clean_key", clean_interval, "host1", #current_hosts, "1"}

|

||||

for _, h in ipairs(current_hosts) do table.insert(keys, h) end

|

||||

|

||||

run_lb_logic(keys)

|

||||

|

||||

print("After Cleanup Redis IPs:", table.concat((function() local res={} for k,_ in pairs(redis_data.hset) do table.insert(res, k) end table.sort(res) return res end)(), ", "))

|

||||

|

||||

local exists_old1 = redis_data.hset["old_ip_1"] ~= nil

|

||||

local exists_old2 = redis_data.hset["old_ip_2"] ~= nil

|

||||

|

||||

if not exists_old1 and not exists_old2 then

|

||||

print("Success: Outdated IPs removed.")

|

||||

else

|

||||

print("Failure: Outdated IPs still exist.")

|

||||

end

|

||||

|

||||

print("New last_clean_time:", redis_data.kv["clean_key"])

|

||||

end

|

||||

|

||||

test_cleanup()

|

||||

|

||||

-- --- Test 3: No Cleanup if Interval Not Reached ---

|

||||

print("\n--- Test 3: No Cleanup if Interval Not Reached ---")

|

||||

|

||||

local function test_no_cleanup()

|

||||

redis_data.hset = {

|

||||

["host1"] = "10",

|

||||

["old_ip_1"] = "1",

|

||||

}

|

||||

redis_data.kv["clean_key"] = "1000"

|

||||

|

||||

local current_hosts = {"host1"}

|

||||

local current_time_ms = 1000 * 1000000 + 100 * 1000000 -- 1100s (interval 300s, not reached)

|

||||

local clean_interval = 300

|

||||

|

||||

local keys = {current_time_ms, "table_key", "clean_key", clean_interval, "host1", #current_hosts, "0"}

|

||||

for _, h in ipairs(current_hosts) do table.insert(keys, h) end

|

||||

|

||||

run_lb_logic(keys)

|

||||

|

||||

if redis_data.hset["old_ip_1"] then

|

||||

print("Success: Cleanup not triggered as expected.")

|

||||

else

|

||||

print("Failure: Cleanup triggered unexpectedly.")

|

||||

end

|

||||

end

|

||||

|

||||

test_no_cleanup()

|

||||

@@ -0,0 +1,24 @@

|

||||

package global_least_request

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

|

||||

"github.com/higress-group/wasm-go/pkg/wrapper"

|

||||

)

|

||||

|

||||

func (lb GlobalLeastRequestLoadBalancer) checkRateLimit(hostSelected string, currentCount int64, ctx wrapper.HttpContext, routeName string, clusterName string) bool {

|

||||

// If no maximum request count is configured, allow the request.

|

||||

if lb.maxRequestCount <= 0 {

|

||||

return true

|

||||

}

|

||||

|

||||

// If the current request count exceeds the maximum, rate-limit the request.

|

||||

// Note: the Lua script has already added 1, so this compares the post-increment value.

|

||||

if currentCount > lb.maxRequestCount {

|

||||

// Roll back the Redis count.

|

||||

lb.redisClient.HIncrBy(fmt.Sprintf(RedisKeyFormat, routeName, clusterName), hostSelected, -1, nil)

|

||||

return false

|

||||

}

|

||||

|

||||

return true

|

||||

}
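`checkRateLimit` relies on an increment-then-compare scheme: the Lua script has already added 1 to the selected host's counter, so the check compares the post-increment value against `maxRequestCount` and rolls the counter back when the request is rejected. The sketch below shows the same accounting with an in-memory map. `counterStore`, `acquire`, and `release` are hypothetical names that stand in for the Redis hash and the `HIncrBy` calls.

```go
package main

import "fmt"

// counterStore is a hypothetical in-memory stand-in for the Redis hash
// that tracks in-flight requests per host.
type counterStore map[string]int64

// acquire increments the host's counter first and then compares the new
// value against max. When the limit is exceeded the increment is rolled
// back and the request is rejected. A max of zero or less disables the limit.
func (s counterStore) acquire(host string, max int64) bool {
	s[host]++
	if max > 0 && s[host] > max {
		s[host]-- // undo the increment for the rejected request
		return false
	}
	return true
}

// release decrements the counter when a request finishes.
func (s counterStore) release(host string) {
	s[host]--
}

func main() {
	s := counterStore{}
	fmt.Println(s.acquire("10.0.0.1:8080", 2)) // first request admitted
	fmt.Println(s.acquire("10.0.0.1:8080", 2)) // second request admitted
	fmt.Println(s.acquire("10.0.0.1:8080", 2)) // third request rejected and rolled back
	s.release("10.0.0.1:8080")
	fmt.Println(s.acquire("10.0.0.1:8080", 2)) // admitted again after a release
}
```

Because the comparison uses the value after the increment, a limit of N admits exactly N concurrent requests per host.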

|

||||

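@@ -26,6 +26,8 @@ description: AI proxy plugin configuration reference

> When the request path suffix matches `/v1/embeddings`, the request is treated as a text-embedding call: the request body is parsed with OpenAI's embeddings protocol and then converted to the corresponding LLM provider's embeddings protocol.

> When the request path suffix matches `/v1/images/generations`, the request is treated as a text-to-image call: the request body is parsed with OpenAI's image generation protocol and then converted to the corresponding LLM provider's image generation protocol.

## Runtime Properties

Plugin execution phase: `default phase`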

@@ -309,7 +311,9 @@ The `type` for Dify is `dify`. Its specific configuration fields are as follows:

#### Google Vertex AI

The type for Google Vertex AI is vertex. Its specific configuration fields are as follows:

The type for Google Vertex AI is vertex. Two authentication modes are supported:

**Standard mode** (using a Service Account):

| Name | Data Type | Requirement | Default | Description |
|-----------------------------|---------------|--------|--------|-------------------------------------------------------------------------------|

@@ -320,25 +324,56 @@ Google Vertex AI 所对应的 type 为 vertex。它特有的配置字段如下

|

||||

| `geminiSafetySetting` | map of string | 非必填 | - | Gemini AI 内容过滤和安全级别设定。参考[Safety settings](https://ai.google.dev/gemini-api/docs/safety-settings) |

|

||||

| `vertexTokenRefreshAhead` | number | 非必填 | - | Vertex access token刷新提前时间(单位秒) |

|

||||

|

||||

**Express Mode** (using an API Key, simplified configuration):

Express Mode is a simplified access mode introduced by Vertex AI. An API Key is all you need to get started; no Service Account has to be configured. See the [Vertex AI Express Mode documentation](https://cloud.google.com/vertex-ai/generative-ai/docs/start/express-mode/overview).

| Name | Data Type | Requirement | Default | Description |
|-----------------------------|-----------------|-------------|---------|-------------|
| `apiTokens` | array of string | Required | - | API Key used by Express Mode, obtained from API & Services > Credentials in the Google Cloud Console |
| `geminiSafetySetting` | map of string | Optional | - | Gemini AI content filtering and safety level settings. See [Safety settings](https://ai.google.dev/gemini-api/docs/safety-settings) |

|

||||

|

||||

**OpenAI Compatible Mode** (using the Vertex AI Chat Completions API):

Vertex AI provides an OpenAI-compatible Chat Completions API endpoint, so requests and responses can use the OpenAI format directly, with no protocol conversion. See the [Vertex AI OpenAI compatibility documentation](https://cloud.google.com/vertex-ai/generative-ai/docs/migrate/openai/overview).

| Name | Data Type | Requirement | Default | Description |
|-----------------------------|-----------|-------------|---------|-------------|
| `vertexOpenAICompatible` | boolean | Optional | false | Enables OpenAI Compatible Mode. When enabled, Vertex AI's OpenAI-compatible Chat Completions API is used |
| `vertexAuthKey` | string | Required | - | Google Service Account JSON Key used for authentication |
| `vertexRegion` | string | Required | - | Google Cloud region (e.g. us-central1, europe-west4) |
| `vertexProjectId` | string | Required | - | Google Cloud project ID |
| `vertexAuthServiceName` | string | Required | - | Name of the service used for OAuth2 authentication |

**Note**: OpenAI Compatible Mode and Express Mode are mutually exclusive; `apiTokens` and `vertexOpenAICompatible` cannot be configured at the same time.

|

||||

|

||||

#### AWS Bedrock

For AWS Bedrock, the corresponding `type` is `bedrock`. Its unique configuration fields are as follows:
For AWS Bedrock, the corresponding `type` is `bedrock`. It supports two authentication methods:

| Name | Data Type | Requirement | Default | Description |
|---------------------------|-----------|-------------|---------|-------------|
| `modelVersion` | string | Optional | - | Specifies the model version in Triton Server |
| `tritonDomain` | string | Optional | - | The request domain of the deployed Triton Server |
1. **AWS Signature V4 authentication**: uses `awsAccessKey` and `awsSecretKey` for standard AWS signature authentication
2. **Bearer Token authentication**: uses `apiTokens` to configure an AWS Bearer Token (for scenarios such as IAM Identity Center)

**Note**: Choose one of the two authentication methods. If `apiTokens` is also configured, Bearer Token authentication takes precedence.

Its unique configuration fields are as follows:

| Name | Data Type | Requirement | Default | Description |
|---------------------------|-----------------|--------------------------|---------|-------------|
| `apiTokens` | array of string | Either this or ak/sk | - | AWS Bearer Token, used for Bearer Token authentication |
| `awsAccessKey` | string | Either this or apiTokens | - | AWS Access Key, used for AWS Signature V4 authentication |
| `awsSecretKey` | string | Either this or apiTokens | - | AWS Secret Access Key, used for AWS Signature V4 authentication |
| `awsRegion` | string | Required | - | AWS region, e.g. us-east-1 |
| `bedrockAdditionalFields` | map | Optional | - | Additional model request parameters for Bedrock |

|

||||

|

||||

#### NVIDIA Triton Inference Server

For NVIDIA Triton Inference Server, the corresponding `type` is `triton`. Its unique configuration fields are as follows:

| Name | Data Type | Requirement | Default | Description |
|---------------------------|-----------|-------------|---------|-------------|
| `awsAccessKey` | string | Required | - | AWS Access Key, used for authentication |
| `awsSecretKey` | string | Required | - | AWS Secret Access Key, used for authentication |
| `awsRegion` | string | Required | - | AWS region, e.g. us-east-1 |
| `bedrockAdditionalFields` | map | Optional | - | Additional model request parameters for Bedrock |

| Name | Data Type | Requirement | Default | Description |
|----------------------|-----------|-------------|---------|-------------|
| `tritonModelVersion` | string | Optional | - | Specifies the model version in Triton Server |
| `tritonDomain` | string | Optional | - | The request domain of the deployed Triton Server |

|

||||

|

||||

## Usage Examples

|

||||

|

||||

@@ -1947,7 +1982,7 @@ provider:

|

||||

}

|

||||

```

|

||||

|

||||

### Using the OpenAI Protocol to Proxy Google Vertex Services
### Using the OpenAI Protocol to Proxy Google Vertex Services (Standard Mode)

**Configuration Information**

|

||||

|

||||

@@ -2009,8 +2044,236 @@ provider:

|

||||

}

|

||||

```

|

||||

|

||||

### Using the OpenAI Protocol to Proxy Google Vertex Services (Express Mode)

Express Mode is Vertex AI's simplified access mode; an API Key is all you need to get started.

**Configuration Information**

```yaml
provider:
  type: vertex
  apiTokens:
    - "YOUR_API_KEY"
```

|

||||

|

||||

**Request Example**

```json
{
  "model": "gemini-2.5-flash",
  "messages": [
    {
      "role": "user",
      "content": "Hello, who are you?"
    }
  ],
  "stream": false
}
```

|

||||

|

||||

**Response Example**

```json
{
  "id": "chatcmpl-0000000000000",
  "choices": [
    {
      "index": 0,
      "message": {
        "role": "assistant",
        "content": "Hello! I am Gemini, an AI assistant developed by Google. How can I help you?"
      },
      "finish_reason": "stop"
    }
  ],
  "created": 1729986750,
  "model": "gemini-2.5-flash",
  "object": "chat.completion",
  "usage": {
    "prompt_tokens": 10,
    "completion_tokens": 25,
    "total_tokens": 35
  }
}
```

|

||||

|

||||

### Using the OpenAI Protocol to Proxy Google Vertex Services (OpenAI Compatible Mode)

OpenAI Compatible Mode uses Vertex AI's OpenAI-compatible Chat Completions API. Requests and responses both use the OpenAI format, so no protocol conversion is needed.

**Configuration Information**

```yaml
provider:
  type: vertex
  vertexOpenAICompatible: true
  vertexAuthKey: |
    {
      "type": "service_account",
      "project_id": "your-project-id",
      "private_key_id": "your-private-key-id",
      "private_key": "-----BEGIN PRIVATE KEY-----\n...\n-----END PRIVATE KEY-----\n",
      "client_email": "your-service-account@your-project.iam.gserviceaccount.com",
      "token_uri": "https://oauth2.googleapis.com/token"
    }
  vertexRegion: us-central1
  vertexProjectId: your-project-id
  vertexAuthServiceName: your-auth-service-name
  modelMapping:
    "gpt-4": "gemini-2.0-flash"
    "*": "gemini-1.5-flash"
```

|

||||

|

||||

**Request Example**

```json
{
  "model": "gpt-4",
  "messages": [
    {
      "role": "user",
      "content": "Hello, who are you?"
    }
  ],
  "stream": false
}
```

|

||||

|

||||

**Response Example**

```json
{
  "id": "chatcmpl-abc123",
  "choices": [
    {
      "index": 0,
      "message": {
        "role": "assistant",
        "content": "Hello! I am the Gemini model developed by Google. I can help answer questions, provide information, and hold a conversation. How can I help you?"
      },
      "finish_reason": "stop"
    }
  ],
  "created": 1729986750,
  "model": "gemini-2.0-flash",
  "object": "chat.completion",
  "usage": {
    "prompt_tokens": 12,
    "completion_tokens": 35,
    "total_tokens": 47
  }
}
```

|

||||

|

||||

### Using the OpenAI Protocol to Proxy Google Vertex Image Generation

Vertex AI supports image generation with Gemini models. Through the ai-proxy plugin, the OpenAI `/v1/images/generations` API protocol can be used to call Vertex AI's image generation capability.

**Configuration Information**

```yaml
provider:
  type: vertex
  apiTokens:
    - "YOUR_API_KEY"
  modelMapping:
    "dall-e-3": "gemini-2.0-flash-exp"
  geminiSafetySetting:
    HARM_CATEGORY_HARASSMENT: "OFF"
    HARM_CATEGORY_HATE_SPEECH: "OFF"
    HARM_CATEGORY_SEXUALLY_EXPLICIT: "OFF"
    HARM_CATEGORY_DANGEROUS_CONTENT: "OFF"
```

|

||||

|

||||

**Using curl**

```bash
curl -X POST "http://your-gateway-address/v1/images/generations" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-2.0-flash-exp",
    "prompt": "A cute orange cat napping in the sunshine",
    "size": "1024x1024"
  }'
```

|

||||

|

||||

**Using the OpenAI Python SDK**

```python
from openai import OpenAI

client = OpenAI(
    api_key="any-value",  # Can be any value; authentication is handled by the gateway
    base_url="http://your-gateway-address/v1"
)

response = client.images.generate(
    model="gemini-2.0-flash-exp",
    prompt="A cute orange cat napping in the sunshine",
    size="1024x1024",
    n=1
)

# Get the generated image (base64 encoded)
image_data = response.data[0].b64_json
print(f"Generated image (base64): {image_data[:100]}...")
```

|

||||

|

||||

**Response Example**

|

||||

|

||||

```json

|

||||

{

|

||||

"created": 1729986750,

|

||||

"data": [

|

||||

{

|

||||

"b64_json": "iVBORw0KGgoAAAANSUhEUgAABAAAAAQACAIAAADwf7zUAAAA..."

|

||||

}

|

||||

],

|

||||

"usage": {

|

||||

"total_tokens": 1356,

|

||||

"input_tokens": 13,

|

||||

"output_tokens": 1120

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

**Supported Size Parameters**

Aspect ratios (aspectRatio) supported by Vertex AI: `1:1`, `3:2`, `2:3`, `3:4`, `4:3`, `4:5`, `5:4`, `9:16`, `16:9`, `21:9`

Resolutions (imageSize) supported by Vertex AI: `1k`, `2k`, `4k`

| OpenAI size parameter | Vertex AI aspectRatio | Vertex AI imageSize |
|-----------------------|-----------------------|---------------------|
| 256x256 | 1:1 | 1k |
| 512x512 | 1:1 | 1k |
| 1024x1024 | 1:1 | 1k |
| 1792x1024 | 16:9 | 2k |
| 1024x1792 | 9:16 | 2k |
| 2048x2048 | 1:1 | 2k |
| 4096x4096 | 1:1 | 4k |
| 1536x1024 | 3:2 | 2k |
| 1024x1536 | 2:3 | 2k |
| 1024x768 | 4:3 | 1k |
| 768x1024 | 3:4 | 1k |
| 1280x1024 | 5:4 | 1k |
| 1024x1280 | 4:5 | 1k |
| 2560x1080 | 21:9 | 2k |

|

||||

|

||||

**Notes**

- Image generation uses Gemini models (e.g. `gemini-2.0-flash-exp`, `gemini-3-pro-image-preview`); model availability may vary by region
- The returned image data is base64 encoded (`b64_json`)
- The content safety filtering level can be configured via `geminiSafetySetting`
- To use model mapping (e.g. mapping `dall-e-3` to a Gemini model), configure `modelMapping`

|

||||

|

||||

### Using the OpenAI Protocol to Proxy AWS Bedrock Services

AWS Bedrock supports two authentication methods:

#### Method 1: AWS Access Key/Secret Key authentication (AWS Signature V4)

**Configuration Information**

|

||||

|

||||

```yaml

|

||||

@@ -2018,7 +2281,21 @@ provider:

|

||||

type: bedrock

|

||||

awsAccessKey: "YOUR_AWS_ACCESS_KEY_ID"

|

||||

awsSecretKey: "YOUR_AWS_SECRET_ACCESS_KEY"

|

||||

awsRegion: "YOUR_AWS_REGION"

|

||||

awsRegion: "us-east-1"

|

||||

bedrockAdditionalFields:

|

||||

top_k: 200

|

||||

```

|

||||

|

||||

#### Method 2: Bearer Token authentication (for scenarios such as IAM Identity Center)

**Configuration Information**

```yaml
provider:
  type: bedrock
  apiTokens:
    - "YOUR_AWS_BEARER_TOKEN"
  awsRegion: "us-east-1"
  bedrockAdditionalFields:
    top_k: 200
```

|

||||

@@ -2027,7 +2304,7 @@ provider:

|

||||

|

||||

```json

|

||||

{

|

||||

"model": "arn:aws:bedrock:us-west-2::foundation-model/anthropic.claude-3-5-haiku-20241022-v1:0",

|

||||

"model": "us.anthropic.claude-3-5-haiku-20241022-v1:0",

|

||||

"messages": [

|

||||

{

|

||||

"role": "user",

|

||||

|

||||

@@ -25,6 +25,8 @@ The plugin now supports **automatic protocol detection**, allowing seamless comp

|

||||

|

||||

> When the request path suffix matches `/v1/embeddings`, it corresponds to text vector scenarios. The request body will be parsed using OpenAI's text vector protocol and then converted to the corresponding LLM vendor's text vector protocol.

|

||||

|

||||

> When the request path suffix matches `/v1/images/generations`, it corresponds to text-to-image scenarios. The request body will be parsed using OpenAI's image generation protocol and then converted to the corresponding LLM vendor's image generation protocol.
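
The suffix matching described in the two notes above can be sketched roughly as follows. This is a minimal Python illustration, not the plugin's own implementation; the function name `detect_scenario` and the scenario labels are hypothetical, and only the two suffixes quoted above are covered.

```python
from typing import Optional
from urllib.parse import urlsplit

# Suffix -> scenario label. The labels are hypothetical names for this sketch.
SUFFIX_TO_SCENARIO = {
    "/v1/embeddings": "embeddings",
    "/v1/images/generations": "image_generation",
}


def detect_scenario(url_or_path: str) -> Optional[str]:
    """Return the scenario whose suffix matches the request path, if any."""
    # Drop scheme, host, and query string so only the path is compared.
    path = urlsplit(url_or_path).path.rstrip("/")
    for suffix, scenario in SUFFIX_TO_SCENARIO.items():
        if path.endswith(suffix):
            return scenario
    return None
```

Because the match is on the suffix, a route prefix in front of `/v1/...` does not prevent detection in this sketch.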

|

||||

|

||||

## Execution Properties

|

||||

Plugin execution phase: `Default Phase`

|

||||

Plugin execution priority: `100`

|

||||

@@ -255,7 +257,9 @@ For DeepL, the corresponding `type` is `deepl`. Its unique configuration field i

|

||||

| `targetLang` | string | Required | - | The target language required by the DeepL translation service |

|

||||

|

||||

#### Google Vertex AI

|

||||

For Vertex, the corresponding `type` is `vertex`. Its unique configuration field is:

|

||||

For Vertex, the corresponding `type` is `vertex`. It supports two authentication modes:

|

||||

|

||||

**Standard Mode** (using Service Account):

|

||||

|

||||

| Name | Data Type | Requirement | Default | Description |

|

||||

|-----------------------------|---------------|---------------| ------ |-------------------------------------------------------------------------------------------------------------------------------------------------------------|

|

||||

@@ -266,16 +270,47 @@ For Vertex, the corresponding `type` is `vertex`. Its unique configuration field

|

||||

| `vertexGeminiSafetySetting` | map of string | Optional | - | Gemini model content safety filtering settings. |

|

||||

| `vertexTokenRefreshAhead` | number | Optional | - | Vertex access token refresh ahead time in seconds |

|

||||

|

||||

**Express Mode** (using API Key, simplified configuration):

|

||||

|

||||

Express Mode is a simplified access mode introduced by Vertex AI. You can quickly get started with just an API Key, without configuring a Service Account. See [Vertex AI Express Mode documentation](https://cloud.google.com/vertex-ai/generative-ai/docs/start/express-mode/overview).

|

||||

|

||||

| Name | Data Type | Requirement | Default | Description |
|-----------------------------|-----------------|-------------|---------|-------------|
| `apiTokens` | array of string | Required | - | API Key for Express Mode, obtained from Google Cloud Console under API & Services > Credentials |
| `vertexGeminiSafetySetting` | map of string | Optional | - | Gemini model content safety filtering settings. |

|

||||

|

||||

**OpenAI Compatible Mode** (using Vertex AI Chat Completions API):

|

||||

|

||||

Vertex AI provides an OpenAI-compatible Chat Completions API endpoint, allowing you to use OpenAI format requests and responses directly without protocol conversion. See [Vertex AI OpenAI Compatibility documentation](https://cloud.google.com/vertex-ai/generative-ai/docs/migrate/openai/overview).

|

||||

|

||||

| Name | Data Type | Requirement | Default | Description |
|--------------------------|-----------|-------------|---------|-------------|
| `vertexOpenAICompatible` | boolean | Optional | false | Enable OpenAI compatible mode. When enabled, uses Vertex AI's OpenAI-compatible Chat Completions API |
| `vertexAuthKey` | string | Required | - | Google Service Account JSON Key for authentication |
| `vertexRegion` | string | Required | - | Google Cloud region (e.g., us-central1, europe-west4) |
| `vertexProjectId` | string | Required | - | Google Cloud Project ID |
| `vertexAuthServiceName` | string | Required | - | Service name for OAuth2 authentication |

|

||||

|

||||

**Note**: OpenAI Compatible Mode and Express Mode are mutually exclusive. You cannot configure both `apiTokens` and `vertexOpenAICompatible` at the same time.
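
To make the relationship between the three modes concrete, here is a minimal Python sketch that classifies a `vertex` provider config and rejects the mutually exclusive combination. The helper `vertex_mode` is hypothetical and not part of the plugin. It also assumes that Standard Mode needs the same four Service Account fields listed for OpenAI Compatible Mode, which the truncated Standard Mode table in this diff does not confirm.

```python
from typing import Any, Dict

# Assumption: both Service Account based modes need these four fields.
SERVICE_ACCOUNT_FIELDS = (
    "vertexAuthKey",
    "vertexRegion",
    "vertexProjectId",
    "vertexAuthServiceName",
)


def vertex_mode(provider: Dict[str, Any]) -> str:
    """Classify a vertex provider config as express, standard, or openai-compatible."""
    has_api_tokens = bool(provider.get("apiTokens"))
    openai_compatible = bool(provider.get("vertexOpenAICompatible", False))

    if has_api_tokens and openai_compatible:
        # The README states that these two settings are mutually exclusive.
        raise ValueError("apiTokens and vertexOpenAICompatible cannot both be configured")
    if has_api_tokens:
        return "express"

    missing = [name for name in SERVICE_ACCOUNT_FIELDS if not provider.get(name)]
    if missing:
        raise ValueError("missing required fields: " + ", ".join(missing))
    return "openai-compatible" if openai_compatible else "standard"
```

A check like this could run before a config is applied, so a conflicting setup fails early rather than at request time.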

|

||||

|

||||

#### AWS Bedrock

|

||||

|

||||

For AWS Bedrock, the corresponding `type` is `bedrock`. Its unique configuration field is:

|

||||

For AWS Bedrock, the corresponding `type` is `bedrock`. It supports two authentication methods:

|

||||

|

||||

| Name | Data Type | Requirement | Default | Description |
|---------------------------|-----------|-------------|---------|---------------------------------------------------------|
| `awsAccessKey` | string | Required | - | AWS Access Key used for authentication |
| `awsSecretKey` | string | Required | - | AWS Secret Access Key used for authentication |
| `awsRegion` | string | Required | - | AWS region, e.g., us-east-1 |
| `bedrockAdditionalFields` | map | Optional | - | Additional inference parameters that the model supports |

|

||||

1. **AWS Signature V4 Authentication**: Uses `awsAccessKey` and `awsSecretKey` for standard AWS signature authentication

|

||||

2. **Bearer Token Authentication**: Uses `apiTokens` to configure AWS Bearer Token (suitable for IAM Identity Center and similar scenarios)

|

||||

|

||||

**Note**: Choose one of the two authentication methods. If `apiTokens` is configured, Bearer Token authentication will be used preferentially.

|

||||

|

||||

Its unique configuration fields are:

|

||||

|

||||

| Name | Data Type | Requirement | Default | Description |
|---------------------------|-----------------|--------------------------|---------|-----------------------------------------------------------|
| `apiTokens` | array of string | Either this or ak/sk | - | AWS Bearer Token for Bearer Token authentication |
| `awsAccessKey` | string | Either this or apiTokens | - | AWS Access Key for AWS Signature V4 authentication |
| `awsSecretKey` | string | Either this or apiTokens | - | AWS Secret Access Key for AWS Signature V4 authentication |
| `awsRegion` | string | Required | - | AWS region, e.g., us-east-1 |
| `bedrockAdditionalFields` | map | Optional | - | Additional inference parameters that the model supports |
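
The precedence rule for the two Bedrock authentication methods can be sketched as below. This is an illustrative Python helper under the rules stated above (Bearer Token wins when `apiTokens` is set, `awsRegion` is always required); the name `bedrock_auth_method` and the returned labels are hypothetical.

```python
from typing import Any, Dict


def bedrock_auth_method(provider: Dict[str, Any]) -> str:
    """Pick the authentication method for a bedrock provider config."""
    if not provider.get("awsRegion"):
        raise ValueError("awsRegion is required")
    # Bearer Token takes precedence whenever apiTokens is configured.
    if provider.get("apiTokens"):
        return "bearer"
    if provider.get("awsAccessKey") and provider.get("awsSecretKey"):
        return "sigv4"
    raise ValueError("configure either apiTokens or awsAccessKey/awsSecretKey")
```

With both credential types present, this sketch returns `"bearer"`, matching the note that Bearer Token authentication is used preferentially.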

|

||||

|

||||

## Usage Examples

|

||||

|

||||

@@ -1720,7 +1755,7 @@ provider:

|

||||

}

|

||||

```

|

||||

|

||||

### Utilizing OpenAI Protocol Proxy for Google Vertex Services

|

||||

### Utilizing OpenAI Protocol Proxy for Google Vertex Services (Standard Mode)

|

||||

**Configuration Information**

|

||||

```yaml

|

||||

provider:

|

||||

@@ -1778,14 +1813,250 @@ provider:

|

||||

}

|

||||

```

|

||||

|

||||

### Utilizing OpenAI Protocol Proxy for Google Vertex Services (Express Mode)

|

||||

|

||||

Express Mode is a simplified access mode for Vertex AI. You only need an API Key to get started quickly.

|

||||

|

||||

**Configuration Information**

|

||||

```yaml
provider:
  type: vertex
  apiTokens:
    - "YOUR_API_KEY"
```

|

||||

|

||||

**Request Example**

|

||||

```json

|

||||

{

|

||||

"model": "gemini-2.5-flash",

|

||||

"messages": [

|

||||

{

|

||||

"role": "user",

|

||||

"content": "Who are you?"

|

||||

}

|

||||

],

|

||||

"stream": false

|

||||

}

|

||||

```
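
For readers who want to send the request above from a script, the following Python sketch builds it with the standard library only. The gateway address is the placeholder used elsewhere in this README, and the `/v1/chat/completions` path is an assumption (the usual OpenAI chat path); neither is confirmed by this section. The request is constructed but not sent.

```python
import json
import urllib.request

GATEWAY = "http://your-gateway-address"  # placeholder address, replace before use
CHAT_PATH = "/v1/chat/completions"       # assumption: standard OpenAI chat path


def build_chat_request(model: str, user_message: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
        "stream": False,
    }
    return urllib.request.Request(
        GATEWAY + CHAT_PATH,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request("gemini-2.5-flash", "Who are you?")
# Sending is left to the caller, e.g. urllib.request.urlopen(req),
# once the placeholder address points at a real gateway.
```

No API key appears in the client code here because, in Express Mode, the key lives in the gateway's `apiTokens` setting.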

|

||||

|

||||

**Response Example**

|

||||

```json

|

||||

{

|

||||

"id": "chatcmpl-0000000000000",

|

||||

"choices": [

|

||||

{

|

||||

"index": 0,

|

||||

"message": {

|

||||

"role": "assistant",

|

||||

"content": "Hello! I am Gemini, an AI assistant developed by Google. How can I help you today?"

|

||||

},

|

||||

"finish_reason": "stop"

|

||||

}

|

||||

],

|

||||

"created": 1729986750,

|

||||

"model": "gemini-2.5-flash",

|

||||

"object": "chat.completion",

|

||||

"usage": {

|

||||

"prompt_tokens": 10,

|

||||

"completion_tokens": 25,

|

||||

"total_tokens": 35

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

### Utilizing OpenAI Protocol Proxy for Google Vertex Services (OpenAI Compatible Mode)

|

||||

|

||||

OpenAI Compatible Mode uses Vertex AI's OpenAI-compatible Chat Completions API. Both requests and responses use OpenAI format, requiring no protocol conversion.

|

||||

|

||||

**Configuration Information**

|

||||

```yaml
provider:
  type: vertex
  vertexOpenAICompatible: true
  vertexAuthKey: |
    {
      "type": "service_account",
      "project_id": "your-project-id",
      "private_key_id": "your-private-key-id",
      "private_key": "-----BEGIN PRIVATE KEY-----\n...\n-----END PRIVATE KEY-----\n",
      "client_email": "your-service-account@your-project.iam.gserviceaccount.com",
      "token_uri": "https://oauth2.googleapis.com/token"
    }
  vertexRegion: us-central1
  vertexProjectId: your-project-id
  vertexAuthServiceName: your-auth-service-name
  modelMapping:
    "gpt-4": "gemini-2.0-flash"
    "*": "gemini-1.5-flash"
```
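
The effect of the `modelMapping` entries above can be illustrated with a small Python sketch. It assumes that an exact key match is tried first and that `"*"` acts as a catch-all; any other matching rules the plugin may apply are left out, and passing an unmapped name through unchanged is also an assumption. The function `resolve_model` is hypothetical.

```python
from typing import Dict


def resolve_model(requested: str, model_mapping: Dict[str, str]) -> str:
    """Resolve the upstream model name for a requested model."""
    # An exact match wins over the catch-all entry.
    if requested in model_mapping:
        return model_mapping[requested]
    # "*" is assumed to be the fallback for every other model name.
    if "*" in model_mapping:
        return model_mapping["*"]
    # Assumption: with no mapping at all, the name is passed through as-is.
    return requested


mapping = {"gpt-4": "gemini-2.0-flash", "*": "gemini-1.5-flash"}
resolved = resolve_model("gpt-4", mapping)
```

Under these assumptions a request for `gpt-4` resolves to `gemini-2.0-flash`, which is the model name shown in the response example for this mode.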

|

||||

|

||||

**Request Example**

|

||||

```json

|

||||

{

|

||||

"model": "gpt-4",

|

||||

"messages": [

|

||||

{

|

||||

"role": "user",

|

||||

"content": "Hello, who are you?"

|

||||

}

|

||||

],

|

||||

"stream": false

|

||||

}

|

||||

```

|

||||

|

||||

**Response Example**

|

||||

```json

|

||||

{

|

||||

"id": "chatcmpl-abc123",

|

||||

"choices": [

|

||||

{

|

||||

"index": 0,

|

||||

"message": {

|

||||

"role": "assistant",

|

||||

"content": "Hello! I am Gemini, an AI model developed by Google. I can help answer questions, provide information, and engage in conversations. How can I assist you today?"

|

||||

},

|

||||

"finish_reason": "stop"

|

||||

}

|

||||

],

|

||||

"created": 1729986750,

|

||||

"model": "gemini-2.0-flash",

|

||||

"object": "chat.completion",

|

||||

"usage": {

|

||||

"prompt_tokens": 12,

|

||||

"completion_tokens": 35,

|

||||

"total_tokens": 47

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

### Utilizing OpenAI Protocol Proxy for Google Vertex Image Generation

|

||||

|

||||

Vertex AI supports image generation using Gemini models. Through the ai-proxy plugin, you can use OpenAI's `/v1/images/generations` API to call Vertex AI's image generation capabilities.

|

||||

|

||||

**Configuration Information**

|

||||

|

||||

```yaml
provider:
  type: vertex
  apiTokens:
    - "YOUR_API_KEY"
  modelMapping:
    "dall-e-3": "gemini-2.0-flash-exp"
  geminiSafetySetting:
    HARM_CATEGORY_HARASSMENT: "OFF"
    HARM_CATEGORY_HATE_SPEECH: "OFF"
    HARM_CATEGORY_SEXUALLY_EXPLICIT: "OFF"
    HARM_CATEGORY_DANGEROUS_CONTENT: "OFF"
```

|

||||

|

||||

**Using curl**

|

||||

|

||||

```bash
curl -X POST "http://your-gateway-address/v1/images/generations" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemini-2.0-flash-exp",
    "prompt": "A cute orange cat napping in the sunshine",
    "size": "1024x1024"
  }'
```

|

||||

|

||||

**Using OpenAI Python SDK**

|

||||

|

||||

```python
from openai import OpenAI

client = OpenAI(
    api_key="any-value",  # Can be any value, authentication is handled by the gateway
    base_url="http://your-gateway-address/v1"
)

response = client.images.generate(
    model="gemini-2.0-flash-exp",
    prompt="A cute orange cat napping in the sunshine",
    size="1024x1024",
    n=1
)

# Get the generated image (base64 encoded)
image_data = response.data[0].b64_json
print(f"Generated image (base64): {image_data[:100]}...")
```
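
Since the image arrives as a base64 string, a common next step is to decode it and write it to disk. The helper below is a minimal sketch using only the Python standard library; the name `save_b64_image` and the output file name are hypothetical.

```python
import base64
from pathlib import Path


def save_b64_image(b64_json: str, out_path: str) -> int:
    """Decode a base64 image payload, write it to out_path, return bytes written."""
    image_bytes = base64.b64decode(b64_json)
    Path(out_path).write_bytes(image_bytes)
    return len(image_bytes)
```

With the SDK example above, this could be called as `save_b64_image(response.data[0].b64_json, "cat.png")`. The `.png` extension is a guess based on the sample payload, which starts with the base64 form of the PNG signature.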

|

||||

|

||||

**Response Example**

|

||||

|

||||

```json

|

||||

{

|

||||

"created": 1729986750,

|

||||

"data": [

|

||||

{

|

||||

"b64_json": "iVBORw0KGgoAAAANSUhEUgAABAAAAAQACAIAAADwf7zUAAAA..."

|

||||

}

|

||||

],

|

||||

"usage": {

|

||||

"total_tokens": 1356,

|

||||

"input_tokens": 13,

|

||||

"output_tokens": 1120

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

**Supported Size Parameters**

|

||||

|

||||

Vertex AI supported aspect ratios: `1:1`, `3:2`, `2:3`, `3:4`, `4:3`, `4:5`, `5:4`, `9:16`, `16:9`, `21:9`

|

||||

|

||||

Vertex AI supported resolutions (imageSize): `1k`, `2k`, `4k`

|

||||

|

||||

| OpenAI size parameter | Vertex AI aspectRatio | Vertex AI imageSize |
|-----------------------|-----------------------|---------------------|
| 256x256 | 1:1 | 1k |
| 512x512 | 1:1 | 1k |
| 1024x1024 | 1:1 | 1k |
| 1792x1024 | 16:9 | 2k |
| 1024x1792 | 9:16 | 2k |
| 2048x2048 | 1:1 | 2k |
| 4096x4096 | 1:1 | 4k |
| 1536x1024 | 3:2 | 2k |
| 1024x1536 | 2:3 | 2k |
| 1024x768 | 4:3 | 1k |
| 768x1024 | 3:4 | 1k |
| 1280x1024 | 5:4 | 1k |
| 1024x1280 | 4:5 | 1k |
| 2560x1080 | 21:9 | 2k |
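
The table above amounts to a fixed lookup, which the following Python sketch reproduces. The dictionary values are copied from the table; the function `map_size` is hypothetical, and raising an error for an unlisted size is an assumption about behavior that this README does not describe.

```python
from typing import Dict, Tuple

# OpenAI size -> (Vertex AI aspectRatio, Vertex AI imageSize), from the table above.
SIZE_TO_VERTEX: Dict[str, Tuple[str, str]] = {
    "256x256": ("1:1", "1k"),
    "512x512": ("1:1", "1k"),
    "1024x1024": ("1:1", "1k"),
    "1792x1024": ("16:9", "2k"),
    "1024x1792": ("9:16", "2k"),
    "2048x2048": ("1:1", "2k"),
    "4096x4096": ("1:1", "4k"),
    "1536x1024": ("3:2", "2k"),
    "1024x1536": ("2:3", "2k"),
    "1024x768": ("4:3", "1k"),
    "768x1024": ("3:4", "1k"),
    "1280x1024": ("5:4", "1k"),
    "1024x1280": ("4:5", "1k"),
    "2560x1080": ("21:9", "2k"),
}


def map_size(size: str) -> Tuple[str, str]:
    """Translate an OpenAI size string into (aspectRatio, imageSize)."""
    try:
        return SIZE_TO_VERTEX[size]
    except KeyError:
        # Assumption: unlisted sizes are rejected in this sketch.
        raise ValueError(f"unsupported size: {size}") from None
```

A client can use a lookup like this to check, before sending a request, that the `size` it plans to pass is one of the listed values.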

|

||||

|

||||

**Notes**

|

||||

|

||||

- Image generation uses Gemini models (e.g., `gemini-2.0-flash-exp`, `gemini-3-pro-image-preview`). Model availability may vary by region

|

||||

- The returned image data is in base64 encoded format (`b64_json`)

|

||||

- Content safety filtering levels can be configured via `geminiSafetySetting`

|