Mirror of https://github.com/alibaba/higress.git, synced 2026-03-01 23:20:52 +08:00

Compare commits: v2.1.1...wasm-go-ai (8 commits)

| Author | SHA1 | Date | |
|---|---|---|---|
| | a57173ce28 | | |
| | 3a8d8f5b94 | | |
| | 1c37c361e1 | | |
| | b8133a95b2 | | |
| | 36d5d391b8 | | |
| | 1da9a07866 | | |
| | 8620838f8b | | |
| | e7d2005382 | | |

46

README.md


@@ -22,13 +22,21 @@

|

||||

English | <a href="README_ZH.md">中文<a/> | <a href="README_JP.md">日本語<a/>

|

||||

</p>

|

||||

|

||||

## What is Higress?

|

||||

|

||||

Higress is a cloud-native API gateway based on Istio and Envoy, which can be extended with Wasm plugins written in Go/Rust/JS. It provides dozens of ready-to-use general-purpose plugins and an out-of-the-box console (try the [demo here](http://demo.higress.io/)).

|

||||

|

||||

Higress was born within Alibaba to solve the issues of Tengine reload affecting long-connection services and insufficient load balancing capabilities for gRPC/Dubbo.

|

||||

### Core Use Cases

|

||||

|

||||

Alibaba Cloud has built its cloud-native API gateway product based on Higress, providing 99.99% gateway high availability guarantee service capabilities for a large number of enterprise customers.

|

||||

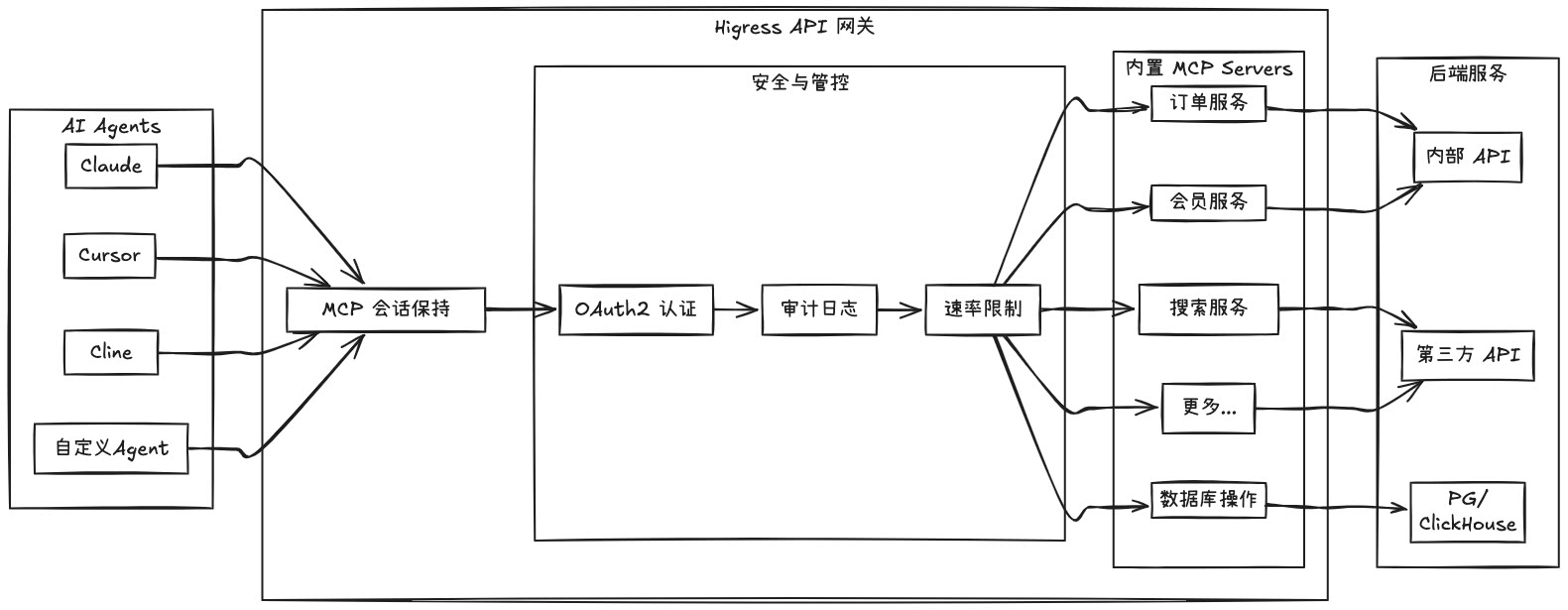

Higress's AI gateway capabilities support all [mainstream model providers](https://github.com/alibaba/higress/tree/main/plugins/wasm-go/extensions/ai-proxy/provider) both domestic and international. It also supports hosting MCP (Model Context Protocol) Servers through its plugin mechanism, enabling AI Agents to easily call various tools and services. With the [openapi-to-mcp tool](https://github.com/higress-group/openapi-to-mcpserver), you can quickly convert OpenAPI specifications into remote MCP servers for hosting. Higress provides unified management for both LLM API and MCP API.

|

||||

|

||||

Higress's AI gateway capabilities support all [mainstream model providers](https://github.com/alibaba/higress/tree/main/plugins/wasm-go/extensions/ai-proxy/provider) both domestic and international, as well as self-built DeepSeek models based on vllm/ollama. Within Alibaba Cloud, it supports AI businesses such as Tongyi Qianwen APP, Bailian large model API, and machine learning PAI platform. It also serves leading AIGC enterprises (such as Zero One Infinite) and AI products (such as FastGPT).

|

||||

**🌟 Try it now at [https://mcp.higress.ai/](https://mcp.higress.ai/)** to experience Higress-hosted Remote MCP Servers firsthand:


### Enterprise Adoption

|

||||

|

||||

Higress was born within Alibaba to solve the issues of Tengine reload affecting long-connection services and insufficient load balancing capabilities for gRPC/Dubbo. Within Alibaba Cloud, Higress's AI gateway capabilities support core AI applications such as Tongyi Bailian model studio, machine learning PAI platform, and other critical AI services. Alibaba Cloud has built its cloud-native API gateway product based on Higress, providing 99.99% gateway high availability guarantee service capabilities for a large number of enterprise customers.

|

||||

|

||||

## Summary

|

||||

|

||||

@@ -64,32 +72,28 @@ For other installation methods such as Helm deployment under K8s, please refer t

|

||||

|

||||

## Use Cases

|

||||

|

||||

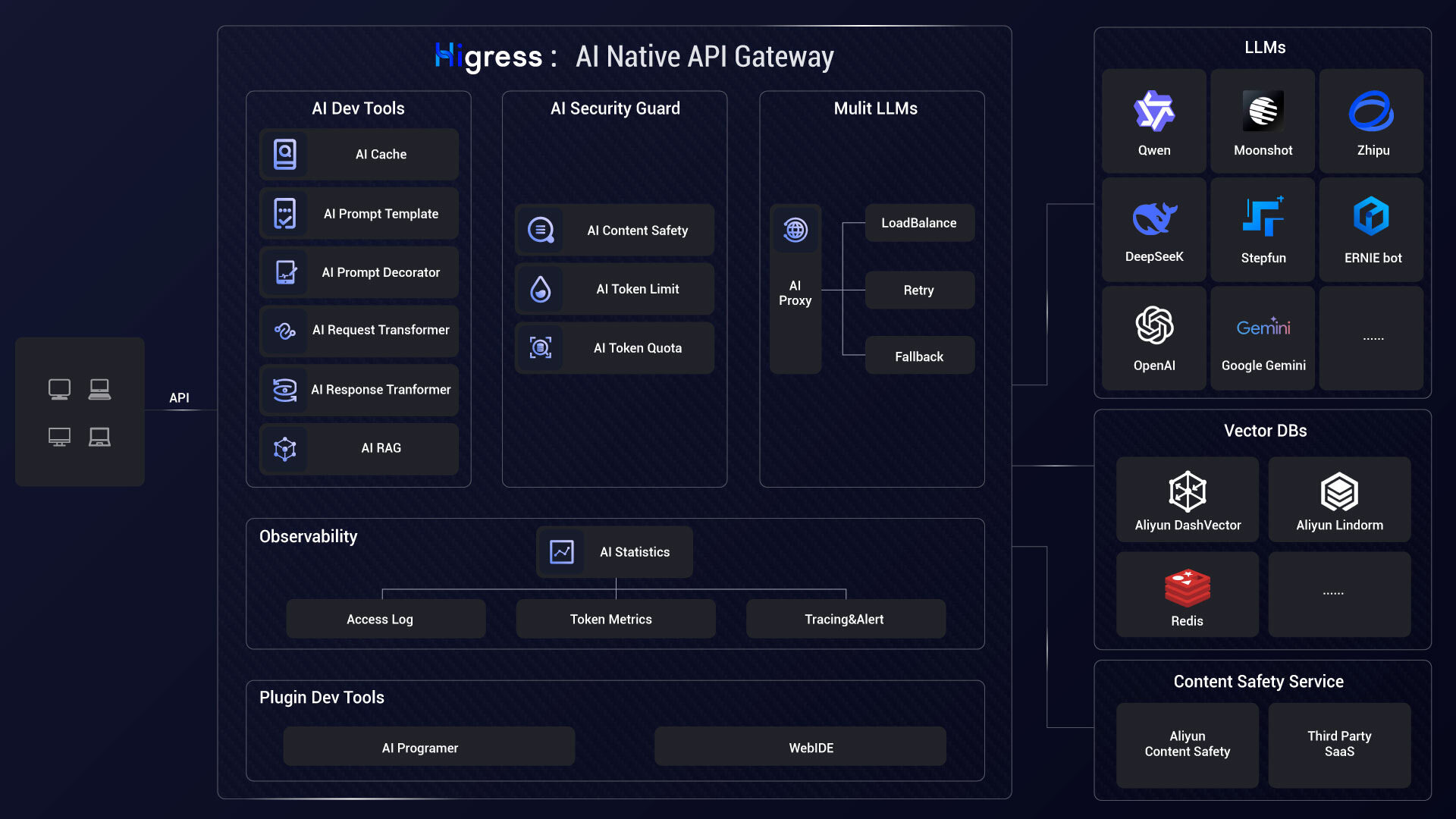

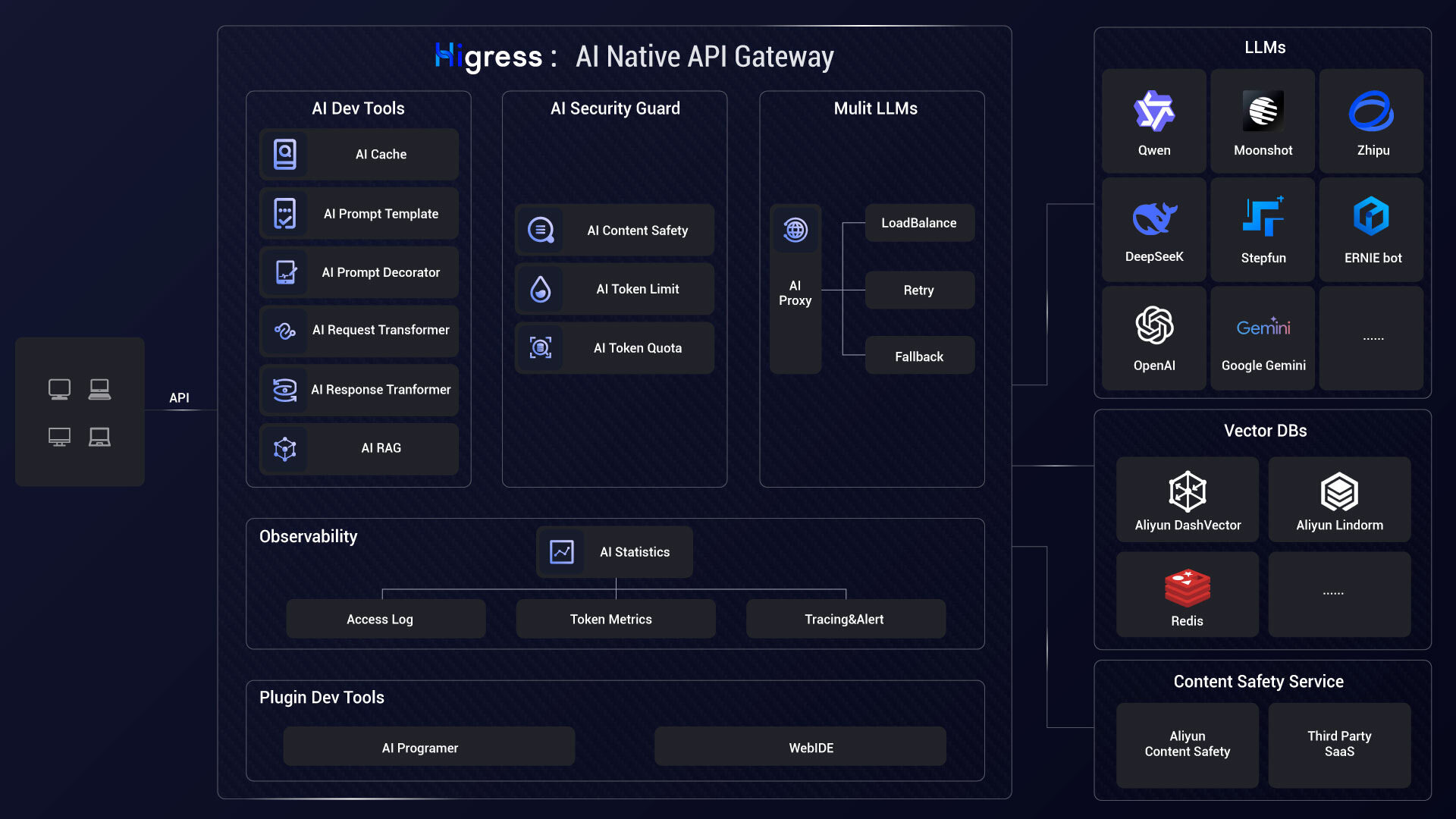

- **AI Gateway**:

|

||||

|

||||

Higress can connect to all LLM model providers both domestic and international using a unified protocol, while also providing rich AI observability, multi-model load balancing/fallback, AI token rate limiting, AI caching, and other capabilities:


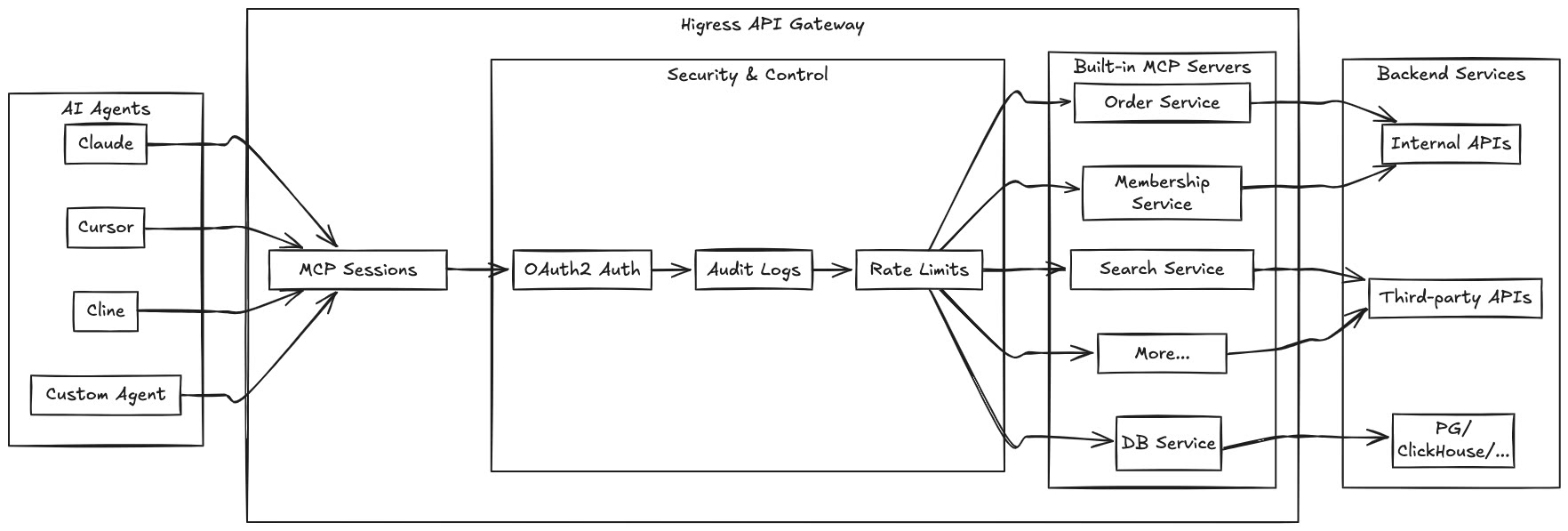

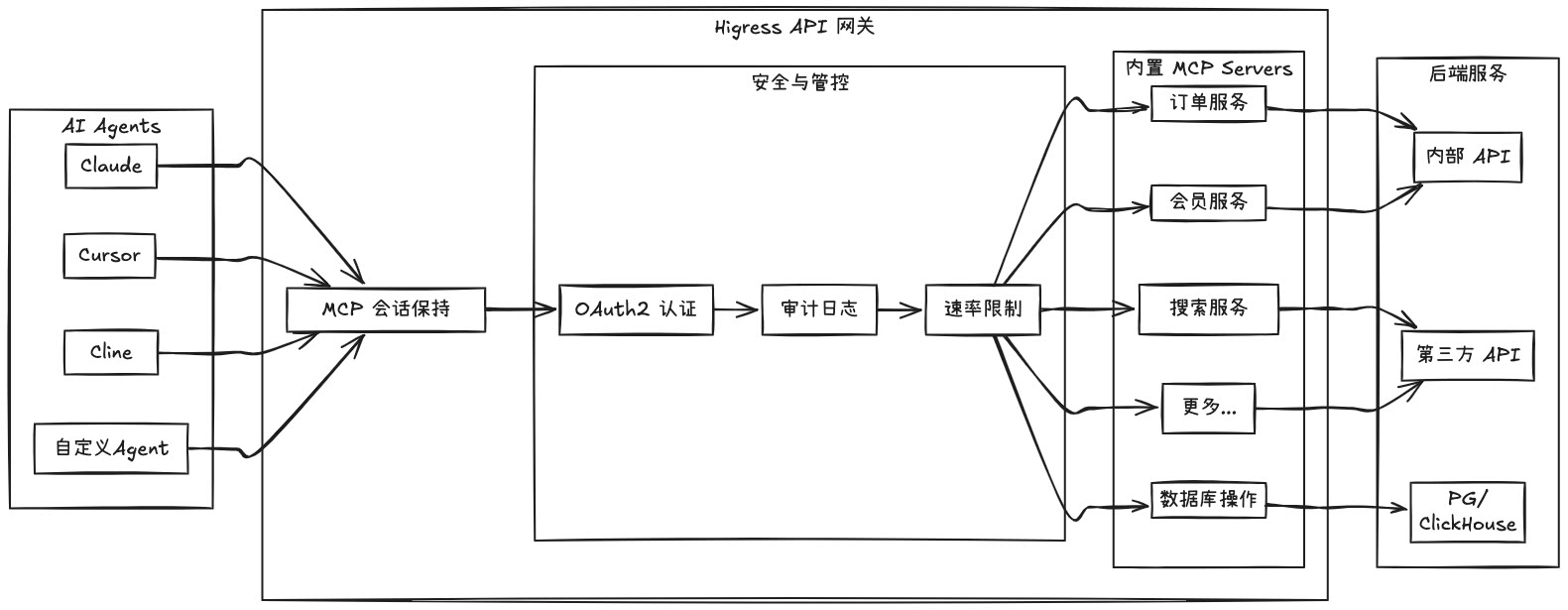

- **MCP Server Hosting**:

|

||||

|

||||

Higress, as an Envoy-based API gateway, supports hosting MCP Servers through its plugin mechanism. MCP (Model Context Protocol) is essentially an AI-friendly API that enables AI Agents to more easily call various tools and services. Higress provides unified capabilities for authentication, authorization, rate limiting, and observability for tool calls, simplifying the development and deployment of AI applications.

|

||||

Higress hosts MCP Servers through its plugin mechanism, enabling AI Agents to easily call various tools and services. With the [openapi-to-mcp tool](https://github.com/higress-group/openapi-to-mcpserver), you can quickly convert OpenAPI specifications into remote MCP servers.

|

||||

|

||||

|

||||

|

||||

**🌟 Try it now!** Experience Higress-hosted Remote MCP Servers at [https://mcp.higress.ai/](https://mcp.higress.ai/)


By hosting MCP Servers with Higress, you can achieve:

|

||||

- Unified authentication and authorization mechanisms, ensuring the security of AI tool calls

|

||||

- Fine-grained rate limiting to prevent abuse and resource exhaustion

|

||||

- Comprehensive audit logs recording all tool call behaviors

|

||||

- Rich observability for monitoring the performance and health of tool calls

|

||||

- Simplified deployment and management through Higress's plugin mechanism for quickly adding new MCP Servers

|

||||

- Dynamic updates without disruption: Thanks to Envoy's friendly handling of long connections and Wasm plugin's dynamic update mechanism, MCP Server logic can be updated on-the-fly without any traffic disruption or connection drops

|

||||

Key benefits of hosting MCP Servers with Higress:

|

||||

- Unified authentication and authorization mechanisms

|

||||

- Fine-grained rate limiting to prevent abuse

|

||||

- Comprehensive audit logs for all tool calls

|

||||

- Rich observability for monitoring performance

|

||||

- Simplified deployment through Higress's plugin mechanism

|

||||

- Dynamic updates without disruption or connection drops

|

||||

|

||||

[Learn more...](https://higress.cn/en/ai/mcp-quick-start/?spm=36971b57.7beea2de.0.0.d85f20a94jsWGm)

|

||||

|

||||

- **AI Gateway**:

|

||||

|

||||

Higress connects to all LLM model providers using a unified protocol, with AI observability, multi-model load balancing, token rate limiting, and caching capabilities:

|

||||

|

||||

|

||||

|

||||

- **Kubernetes ingress controller**:

|

||||

|

||||

Higress can function as a feature-rich ingress controller, which is compatible with many annotations of K8s' nginx ingress controller.

|

||||

|

||||

18

README_JP.md


@@ -22,15 +22,21 @@

|

||||

</p>

|

||||

|

||||

|

||||

## Higressとは?

|

||||

|

||||

Higressは、IstioとEnvoyをベースにしたクラウドネイティブAPIゲートウェイで、Go/Rust/JSなどを使用してWasmプラグインを作成できます。数十の既製の汎用プラグインと、すぐに使用できるコンソールを提供しています(デモは[こちら](http://demo.higress.io/))。

|

||||

|

||||

Higressは、Tengineのリロードが長時間接続のビジネスに影響を与える問題や、gRPC/Dubboの負荷分散能力の不足を解決するために、Alibaba内部で誕生しました。

|

||||

### 主な使用シナリオ

|

||||

|

||||

Alibaba Cloudは、Higressを基盤にクラウドネイティブAPIゲートウェイ製品を構築し、多くの企業顧客に99.99%のゲートウェイ高可用性保証サービスを提供しています。

|

||||

HigressのAIゲートウェイ機能は、国内外のすべての[主要モデルプロバイダー](https://github.com/alibaba/higress/tree/main/plugins/wasm-go/extensions/ai-proxy/provider)をサポートし、vllm/ollamaなどに基づく自己構築DeepSeekモデルにも対応しています。また、プラグインメカニズムを通じてMCP(Model Context Protocol)サーバーをホストすることもでき、AI Agentが様々なツールやサービスを簡単に呼び出せるようにします。[openapi-to-mcpツール](https://github.com/higress-group/openapi-to-mcpserver)を使用すると、OpenAPI仕様を迅速にリモートMCPサーバーに変換してホスティングできます。HigressはLLM APIとMCP APIの統一管理を提供します。

|

||||

|

||||

Higressは、AIゲートウェイ機能を基盤に、Tongyi Qianwen APP、Bailian大規模モデルAPI、機械学習PAIプラットフォームなどのAIビジネスをサポートしています。また、国内の主要なAIGC企業(例:ZeroOne)やAI製品(例:FastGPT)にもサービスを提供しています。

|

||||

**🌟 今すぐ[https://mcp.higress.ai/](https://mcp.higress.ai/)で体験**してください。HigressがホストするリモートMCPサーバーを直接体験できます:

|

||||

|

||||

|

||||

|

||||

|

||||

### 企業での採用

|

||||

|

||||

Higressは、Tengineのリロードが長時間接続のビジネスに影響を与える問題や、gRPC/Dubboの負荷分散能力の不足を解決するために、Alibaba内部で誕生しました。Alibaba Cloud内では、HigressのAIゲートウェイ機能がTongyi Qianwen APP、Tongyi Bailian Model Studio、機械学習PAIプラットフォームなどの中核的なAIアプリケーションをサポートしています。また、国内の主要なAIGC企業(例:ZeroOne)やAI製品(例:FastGPT)にもサービスを提供しています。Alibaba Cloudは、Higressを基盤にクラウドネイティブAPIゲートウェイ製品を構築し、多くの企業顧客に99.99%のゲートウェイ高可用性保証サービスを提供しています。

|

||||

|

||||

|

||||

## 目次

|

||||

@@ -79,10 +85,6 @@ K8sでのHelmデプロイなどの他のインストール方法については

|

||||

|

||||

|

||||

|

||||

**🌟 今すぐ試してみよう!** [https://mcp.higress.ai/](https://mcp.higress.ai/) でHigressがホストするリモートMCPサーバーを体験できます。このプラットフォームでは、HigressがどのようにリモートMCPサーバーをホストおよび管理するかを直接体験できます。

|

||||

|

||||

|

||||

|

||||

Higressを使用してMCP Serverをホストすることで、以下のことが実現できます:

|

||||

- 統一された認証と認可メカニズム、AIツール呼び出しのセキュリティを確保

|

||||

- きめ細かいレート制限、乱用やリソース枯渇を防止

|

||||

|

||||

18

README_ZH.md


@@ -28,15 +28,21 @@

|

||||

</p>

|

||||

|

||||

|

||||

## Higress 是什么?

|

||||

|

||||

Higress 是一款云原生 API 网关,内核基于 Istio 和 Envoy,可以用 Go/Rust/JS 等编写 Wasm 插件,提供了数十个现成的通用插件,以及开箱即用的控制台(demo 点[这里](http://demo.higress.io/))

|

||||

|

||||

Higress 在阿里内部为解决 Tengine reload 对长连接业务有损,以及 gRPC/Dubbo 负载均衡能力不足而诞生。

|

||||

### 核心使用场景

|

||||

|

||||

阿里云基于 Higress 构建了云原生 API 网关产品,为大量企业客户提供 99.99% 的网关高可用保障服务能力。

|

||||

Higress 的 AI 网关能力支持国内外所有[主流模型供应商](https://github.com/alibaba/higress/tree/main/plugins/wasm-go/extensions/ai-proxy/provider)和基于 vllm/ollama 等自建的 DeepSeek 模型。同时,Higress 支持通过插件方式托管 MCP (Model Context Protocol) 服务器,使 AI Agent 能够更容易地调用各种工具和服务。借助 [openapi-to-mcp 工具](https://github.com/higress-group/openapi-to-mcpserver),您可以快速将 OpenAPI 规范转换为远程 MCP 服务器进行托管。Higress 提供了对 LLM API 和 MCP API 的统一管理。

|

||||

|

||||

Higress 的 AI 网关能力支持国内外所有[主流模型供应商](https://github.com/alibaba/higress/tree/main/plugins/wasm-go/extensions/ai-proxy/provider)和基于 vllm/ollama 等自建的 DeepSeek 模型;在阿里云内部支撑了通义千问 APP、百炼大模型 API、机器学习 PAI 平台等 AI 业务。同时服务国内头部的 AIGC 企业(如零一万物),以及 AI 产品(如 FastGPT)

|

||||

**🌟 立即体验 [https://mcp.higress.ai/](https://mcp.higress.ai/)** 基于 Higress 托管的远程 MCP 服务器:

|

||||

|

||||

|

||||

|

||||

|

||||

### 生产环境采用

|

||||

|

||||

Higress 在阿里内部为解决 Tengine reload 对长连接业务有损,以及 gRPC/Dubbo 负载均衡能力不足而诞生。在阿里云内部,Higress 的 AI 网关能力支撑了通义千问 APP、通义百炼模型工作室、机器学习 PAI 平台等核心 AI 应用。同时服务国内头部的 AIGC 企业(如零一万物),以及 AI 产品(如 FastGPT)。阿里云基于 Higress 构建了云原生 API 网关产品,为大量企业客户提供 99.99% 的网关高可用保障服务能力。

|

||||

|

||||

|

||||

## Summary

|

||||

@@ -89,10 +95,6 @@ K8s 下使用 Helm 部署等其他安装方式可以参考官网 [Quick Start

|

||||

|

||||

|

||||

|

||||

**🌟 立即体验!** 在 [https://mcp.higress.ai/](https://mcp.higress.ai/) 体验 Higress 托管的远程 MCP 服务器。这个平台让您可以体验基于 Higress 托管的远程 MCP 服务器的效果。

|

||||

|

||||

|

||||

|

||||

通过 Higress 托管 MCP Server,可以实现:

|

||||

- 统一的认证和鉴权机制,确保 AI 工具调用的安全性

|

||||

- 精细化的速率限制,防止滥用和资源耗尽

|

||||

|

||||

@@ -152,6 +152,7 @@ type IngressConfig struct {

|

||||

|

||||

httpsConfigMgr *cert.ConfigMgr

|

||||

|

||||

commonOptions common.Options

|

||||

// templateProcessor processes template variables in config

|

||||

templateProcessor *TemplateProcessor

|

||||

|

||||

@@ -197,6 +198,7 @@ func NewIngressConfig(localKubeClient kube.Client, xdsUpdater istiomodel.XDSUpda

|

||||

namespace: namespace,

|

||||

wasmPlugins: make(map[string]*extensions.WasmPlugin),

|

||||

http2rpcs: make(map[string]*higressv1.Http2Rpc),

|

||||

commonOptions: options,

|

||||

}

|

||||

|

||||

// Initialize secret config manager

|

||||

@@ -904,7 +906,7 @@ func (m *IngressConfig) convertIstioWasmPlugin(obj *higressext.WasmPlugin) (*ext

|

||||

result := &extensions.WasmPlugin{

|

||||

Selector: &istiotype.WorkloadSelector{

|

||||

MatchLabels: map[string]string{

|

||||

"higress": m.namespace + "-higress-gateway",

|

||||

m.commonOptions.GatewaySelectorKey: m.commonOptions.GatewaySelectorValue,

|

||||

},

|

||||

},

|

||||

Url: obj.Url,

|

||||

|

||||

@@ -55,6 +55,7 @@ constexpr std::string_view Host(":authority");

|

||||

constexpr std::string_view Path(":path");

|

||||

constexpr std::string_view EnvoyOriginalPath("x-envoy-original-path");

|

||||

constexpr std::string_view Accept("accept");

|

||||

constexpr std::string_view ContentDisposition("content-disposition");

|

||||

constexpr std::string_view ContentMD5("content-md5");

|

||||

constexpr std::string_view ContentType("content-type");

|

||||

constexpr std::string_view ContentLength("content-length");

|

||||

@@ -68,6 +69,7 @@ constexpr std::string_view StrictTransportSecurity("strict-transport-security");

|

||||

namespace ContentTypeValues {

|

||||

constexpr std::string_view Grpc{"application/grpc"};

|

||||

constexpr std::string_view Json{"application/json"};

|

||||

constexpr std::string_view MultipartFormData{"multipart/form-data"};

|

||||

} // namespace ContentTypeValues

|

||||

|

||||

class PercentEncoding {

|

||||

|

||||

@@ -16,6 +16,7 @@

|

||||

|

||||

#include <array>

|

||||

#include <limits>

|

||||

#include <regex>

|

||||

|

||||

#include "absl/strings/str_cat.h"

|

||||

#include "absl/strings/str_split.h"

|

||||

@@ -123,6 +124,7 @@ bool PluginRootContext::configure(size_t configuration_size) {

|

||||

}

|

||||

|

||||

FilterHeadersStatus PluginRootContext::onHeader(

|

||||

PluginContext& ctx,

|

||||

const ModelRouterConfigRule& rule) {

|

||||

if (!Wasm::Common::Http::hasRequestBody()) {

|

||||

return FilterHeadersStatus::Continue;

|

||||

@@ -150,19 +152,49 @@ FilterHeadersStatus PluginRootContext::onHeader(

|

||||

if (!enable) {

|

||||

return FilterHeadersStatus::Continue;

|

||||

}

|

||||

auto content_type_value =

|

||||

auto content_type_ptr =

|

||||

getRequestHeader(Wasm::Common::Http::Header::ContentType);

|

||||

if (!absl::StrContains(content_type_value->view(),

|

||||

auto content_type_value = content_type_ptr->view();

|

||||

LOG_DEBUG(absl::StrCat("Content-Type: ", content_type_value));

|

||||

if (absl::StrContains(content_type_value,

|

||||

Wasm::Common::Http::ContentTypeValues::Json)) {

|

||||

return FilterHeadersStatus::Continue;

|

||||

ctx.mode_ = MODE_JSON;

|

||||

LOG_DEBUG("Enable JSON mode.");

|

||||

removeRequestHeader(Wasm::Common::Http::Header::ContentLength);

|

||||

setFilterState(SetDecoderBufferLimitKey, DefaultMaxBodyBytes);

|

||||

LOG_INFO(absl::StrCat("SetRequestBodyBufferLimit: ", DefaultMaxBodyBytes));

|

||||

return FilterHeadersStatus::StopIteration;

|

||||

}

|

||||

removeRequestHeader(Wasm::Common::Http::Header::ContentLength);

|

||||

setFilterState(SetDecoderBufferLimitKey, DefaultMaxBodyBytes);

|

||||

LOG_INFO(absl::StrCat("SetRequestBodyBufferLimit: ", DefaultMaxBodyBytes));

|

||||

return FilterHeadersStatus::StopIteration;

|

||||

if (absl::StrContains(content_type_value,

|

||||

Wasm::Common::Http::ContentTypeValues::MultipartFormData)) {

|

||||

// Get the boundary from the content type

|

||||

auto boundary_start = content_type_value.find("boundary=");

|

||||

if (boundary_start == std::string::npos) {

|

||||

LOG_WARN(absl::StrCat("No boundary found in a multipart/form-data content-type: ", content_type_value));

|

||||

return FilterHeadersStatus::Continue;

|

||||

}

|

||||

boundary_start += 9;

|

||||

auto boundary_end = content_type_value.find(';', boundary_start);

|

||||

if (boundary_end == std::string::npos) {

|

||||

boundary_end = content_type_value.size();

|

||||

}

|

||||

auto boundary_length = boundary_end - boundary_start;

|

||||

if (boundary_length < 1 || boundary_length > 70) {

|

||||

// See https://www.w3.org/Protocols/rfc1341/7_2_Multipart.html

|

||||

LOG_WARN(absl::StrCat("Invalid boundary value in a multipart/form-data content-type: ", content_type_value));

|

||||

return FilterHeadersStatus::Continue;

|

||||

}

|

||||

auto boundary_value = content_type_value.substr(boundary_start, boundary_end - boundary_start);

|

||||

ctx.mode_ = MODE_MULTIPART;

|

||||

ctx.boundary_ = boundary_value;

|

||||

LOG_DEBUG(absl::StrCat("Enable multipart/form-data mode. Boundary=", boundary_value));

|

||||

removeRequestHeader(Wasm::Common::Http::Header::ContentLength);

|

||||

return FilterHeadersStatus::StopIteration;

|

||||

}

|

||||

return FilterHeadersStatus::Continue;

|

||||

}
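The boundary handling in `onHeader` can be read in isolation as a small parsing step. The following is a minimal sketch of that step, assuming the same rules the diff applies (look for `boundary=`, stop at the next `;`, accept lengths of 1 to 70 characters). `extractBoundary` is a hypothetical helper name, not a function in the plugin.

```cpp
#include <cassert>
#include <optional>
#include <string_view>

// Hypothetical helper (not part of the plugin): returns the boundary
// parameter of a multipart Content-Type value, or std::nullopt when the
// parameter is missing or its length is outside 1..70 characters.
std::optional<std::string_view> extractBoundary(std::string_view content_type) {
  constexpr std::string_view kKey = "boundary=";
  auto start = content_type.find(kKey);
  if (start == std::string_view::npos) {
    return std::nullopt;  // no boundary parameter at all
  }
  start += kKey.size();
  // The boundary runs to the next ';' or to the end of the header value.
  auto end = content_type.find(';', start);
  if (end == std::string_view::npos) {
    end = content_type.size();
  }
  assert(end >= start);
  const auto length = end - start;
  if (length < 1 || length > 70) {
    return std::nullopt;  // same length limits as the check in the diff
  }
  return content_type.substr(start, length);
}
```

Like the code in the diff, this sketch does not strip quotes from a quoted boundary value such as `boundary="abc"`; a caller that needs that would have to handle it separately.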

|

||||

|

||||

FilterDataStatus PluginRootContext::onBody(const ModelRouterConfigRule& rule,

|

||||

FilterDataStatus PluginRootContext::onJsonBody(const ModelRouterConfigRule& rule,

|

||||

std::string_view body) {

|

||||

const auto& model_key = rule.model_key_;

|

||||

const auto& add_provider_header = rule.add_provider_header_;

|

||||

@@ -198,10 +230,85 @@ FilterDataStatus PluginRootContext::onBody(const ModelRouterConfigRule& rule,

|

||||

return FilterDataStatus::Continue;

|

||||

}

|

||||

|

||||

FilterDataStatus PluginRootContext::onMultipartBody(
    PluginContext& ctx,
    const ModelRouterConfigRule& rule,
    WasmDataPtr& body,
    bool end_stream) {
  const auto& add_provider_header = rule.add_provider_header_;
  const auto& model_to_header = rule.model_to_header_;

  const auto boundary = ctx.boundary_;
  const auto body_view = body->view();
  const auto model_param_header = absl::StrCat("Content-Disposition: form-data; name=\"", rule.model_key_, "\"");

  for (size_t pos = 0; (pos = body_view.find(boundary, pos)) != std::string_view::npos;) {
    LOG_DEBUG(absl::StrCat("Found boundary at ", pos));
    pos += boundary.length();
    size_t end_pos = body_view.find(boundary, pos);
    if (end_pos == std::string_view::npos) {
      end_pos = body_view.length();
    }
    std::string_view part = body_view.substr(pos, end_pos - pos);
    LOG_DEBUG(absl::StrCat("Part: ", part));
    auto part_pos = pos;
    pos = end_pos;

    // Check if this part contains the model parameter
    if (!absl::StrContains(part, model_param_header)) {
      LOG_DEBUG("Part does not contain model parameter");
      continue;
    }
    size_t value_start = part.find(CRLF_CRLF);
    if (value_start == std::string_view::npos) {
      LOG_DEBUG("No value start found in part");
      break;
    }
    value_start += 4; // Skip the "\r\n\r\n"
    // model parameter should be only one line
    size_t value_end = part.find(CRLF, value_start);
    if (value_end == std::string_view::npos) {
      LOG_DEBUG("No value end found in part");
      break;
    }
    auto model_value = part.substr(value_start, value_end - value_start);
    LOG_DEBUG(absl::StrCat("Model value: ", model_value));
    if (!model_to_header.empty()) {
      replaceRequestHeader(model_to_header, model_value);
    }
    if (!add_provider_header.empty()) {
      auto pos = model_value.find('/');
      if (pos != std::string::npos) {
        const auto& provider = model_value.substr(0, pos);
        const auto& model = model_value.substr(pos + 1);
        replaceRequestHeader(add_provider_header, provider);
        size_t new_size = 0;
        auto new_buffer_data = absl::StrCat(body_view.substr(0, part_pos + value_start), model, body_view.substr(part_pos + value_end));
        auto result = setBuffer(WasmBufferType::HttpRequestBody, 0, std::numeric_limits<size_t>::max(), new_buffer_data, &new_size);
        LOG_DEBUG(absl::StrCat("model route to provider:", provider,
                               ", model:", model));
        LOG_DEBUG(absl::StrCat("result=", result, " new_size=", new_size));
      } else {
        LOG_DEBUG(absl::StrCat("model route to provider not work, model:",
                               model_value));
      }
    }
    // We are done now. We can stop processing the body.
    LOG_DEBUG(absl::StrCat("Done processing multipart body after caching ", body_view.length() , " bytes."));
    ctx.mode_ = MODE_BYPASS;
    return FilterDataStatus::Continue;
  }
  if (end_stream) {
    LOG_DEBUG("No model parameter found in the body");
    return FilterDataStatus::Continue;
  }
  return FilterDataStatus::StopIterationAndBuffer;

}
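The scan performed by `onMultipartBody` can be illustrated without the proxy-wasm types. The sketch below assumes the same approach as the diff: walk the body boundary by boundary, look for the `Content-Disposition` line of the wanted field, then take the single line that follows the blank line. `findFormField` is a hypothetical name, and returning `std::nullopt` for a not-yet-complete value stands in for the plugin's decision to keep buffering.

```cpp
#include <cassert>
#include <optional>
#include <string>
#include <string_view>

// Hypothetical helper (not part of the plugin): returns the one-line value of
// the form field `name`, or std::nullopt if the field is absent or its value
// has not been fully received yet.
std::optional<std::string_view> findFormField(std::string_view body,
                                              std::string_view boundary,
                                              std::string_view name) {
  assert(!boundary.empty());
  const std::string header =
      "Content-Disposition: form-data; name=\"" + std::string(name) + "\"";
  size_t pos = 0;
  while ((pos = body.find(boundary, pos)) != std::string_view::npos) {
    pos += boundary.size();
    // A part ends at the next boundary, or at the end of the data seen so far.
    size_t end = body.find(boundary, pos);
    if (end == std::string_view::npos) {
      end = body.size();
    }
    const std::string_view part = body.substr(pos, end - pos);
    pos = end;
    if (part.find(header) == std::string_view::npos) {
      continue;  // some other field
    }
    // Part headers and the value are separated by an empty line.
    size_t value_start = part.find("\r\n\r\n");
    if (value_start == std::string_view::npos) {
      return std::nullopt;  // headers incomplete
    }
    value_start += 4;
    // The value is expected to be a single line ending in CRLF.
    const size_t value_end = part.find("\r\n", value_start);
    if (value_end == std::string_view::npos) {
      return std::nullopt;  // value incomplete
    }
    return part.substr(value_start, value_end - value_start);
  }
  return std::nullopt;
}
```

The returned view points into `body`, so the caller has to keep the body alive while using it. Because the lookup is a substring match on the header line, it relies on the closing quote after the field name to avoid matching fields whose names merely start with `name`.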

|

||||

|

||||

FilterHeadersStatus PluginContext::onRequestHeaders(uint32_t, bool) {

|

||||

auto* rootCtx = rootContext();

|

||||

return rootCtx->onHeaders([rootCtx, this](const auto& config) {

|

||||

auto ret = rootCtx->onHeader(config);

|

||||

auto ret = rootCtx->onHeader(*this, config);

|

||||

if (ret == FilterHeadersStatus::StopIteration) {

|

||||

this->config_ = &config;

|

||||

}

|

||||

@@ -214,14 +321,28 @@ FilterDataStatus PluginContext::onRequestBody(size_t body_size,

|

||||

if (config_ == nullptr) {

|

||||

return FilterDataStatus::Continue;

|

||||

}

|

||||

body_total_size_ += body_size;

|

||||

if (!end_stream) {

|

||||

return FilterDataStatus::StopIterationAndBuffer;

|

||||

}

|

||||

auto body =

|

||||

getBufferBytes(WasmBufferType::HttpRequestBody, 0, body_total_size_);

|

||||

auto* rootCtx = rootContext();

|

||||

return rootCtx->onBody(*config_, body->view());

|

||||

body_total_size_ += body_size;

|

||||

switch (mode_) {

|

||||

case MODE_JSON:

|

||||

{

|

||||

if (!end_stream) {

|

||||

return FilterDataStatus::StopIterationAndBuffer;

|

||||

}

|

||||

auto body =

|

||||

getBufferBytes(WasmBufferType::HttpRequestBody, 0, body_total_size_);

|

||||

return rootCtx->onJsonBody(*config_, body->view());

|

||||

}

|

||||

case MODE_MULTIPART:

|

||||

{

|

||||

auto body =

|

||||

getBufferBytes(WasmBufferType::HttpRequestBody, 0, body_total_size_);

|

||||

return rootCtx->onMultipartBody(*this, *config_, body, end_stream);

|

||||

}

|

||||

case MODE_BYPASS:

|

||||

default:

|

||||

return FilterDataStatus::Continue;

|

||||

}

|

||||

}
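The per-mode behaviour of `onRequestBody` can be summarised as a small decision function. This is a sketch only, using a scoped enum in place of the `MODE_*` macros; `BodyMode`, `BodyAction` and `nextAction` are hypothetical names introduced for illustration and do not exist in the plugin.

```cpp
#include <cassert>

// Hypothetical stand-ins for the MODE_* macros and for what the handler does.
enum class BodyMode { Bypass, Json, Multipart };
enum class BodyAction { PassThrough, KeepBuffering, ParseJson, ScanMultipart };

// Decides what to do with the request body buffered so far.
BodyAction nextAction(BodyMode mode, bool end_stream) {
  switch (mode) {
    case BodyMode::Json:
      // JSON is only parsed once the whole body has arrived.
      return end_stream ? BodyAction::ParseJson : BodyAction::KeepBuffering;
    case BodyMode::Multipart:
      // Multipart data is scanned on every chunk, so the scan can finish as
      // soon as the model field is complete.
      return BodyAction::ScanMultipart;
    case BodyMode::Bypass:
      return BodyAction::PassThrough;
  }
  return BodyAction::PassThrough;  // unknown mode: leave the body untouched
}
```

A scoped enum lets the compiler warn about unhandled modes in the `switch`, which plain integer macros cannot do.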

|

||||

|

||||

#ifdef NULL_PLUGIN

|

||||

|

||||

@@ -36,6 +36,13 @@ namespace model_router {

|

||||

|

||||

#endif

|

||||

|

||||

#define MODE_BYPASS 0
#define MODE_JSON 1
#define MODE_MULTIPART 2

#define CRLF ("\r\n")
#define CRLF_CRLF ("\r\n\r\n")

|

||||

|

||||

struct ModelRouterConfigRule {

|

||||

std::string model_key_ = "model";

|

||||

std::string add_provider_header_;

|

||||

@@ -45,6 +52,8 @@ struct ModelRouterConfigRule {

|

||||

"/audio/speech", "/fine_tuning/jobs", "/moderations"};

|

||||

};

|

||||

|

||||

class PluginContext;

|

||||

|

||||

// PluginRootContext is the root context for all streams processed by the

|

||||

// thread. It has the same lifetime as the worker thread and acts as target for

|

||||

// interactions that outlives individual stream, e.g. timer, async calls.

|

||||

@@ -55,8 +64,9 @@ class PluginRootContext : public RootContext,

|

||||

: RootContext(id, root_id) {}

|

||||

~PluginRootContext() {}

|

||||

bool onConfigure(size_t) override;

|

||||

FilterHeadersStatus onHeader(const ModelRouterConfigRule&);

|

||||

FilterDataStatus onBody(const ModelRouterConfigRule&, std::string_view);

|

||||

FilterHeadersStatus onHeader(PluginContext& ctx, const ModelRouterConfigRule&);

|

||||

FilterDataStatus onJsonBody(const ModelRouterConfigRule&, std::string_view);

|

||||

FilterDataStatus onMultipartBody(PluginContext& ctx, const ModelRouterConfigRule& rule, WasmDataPtr& body, bool end_stream);

|

||||

bool configure(size_t);

|

||||

|

||||

private:

|

||||

@@ -69,6 +79,8 @@ class PluginContext : public Context {

|

||||

explicit PluginContext(uint32_t id, RootContext* root) : Context(id, root) {}

|

||||

FilterHeadersStatus onRequestHeaders(uint32_t, bool) override;

|

||||

FilterDataStatus onRequestBody(size_t, bool) override;

|

||||

int mode_;

|

||||

std::string boundary_;

|

||||

|

||||

private:

|

||||

inline PluginRootContext* rootContext() {

|

||||

|

||||

@@ -15,6 +15,7 @@

|

||||

#include "extensions/model_router/plugin.h"

|

||||

|

||||

#include <cstddef>

|

||||

#include <regex>

|

||||

|

||||

#include "gmock/gmock.h"

|

||||

#include "gtest/gtest.h"

|

||||

@@ -86,7 +87,7 @@ class ModelRouterTest : public ::testing::Test {

|

||||

.WillByDefault([&](WasmHeaderMapType, std::string_view header,

|

||||

std::string_view* result) {

|

||||

if (header == "content-type") {

|

||||

*result = "application/json";

|

||||

*result = content_type_;

|

||||

} else if (header == "content-length") {

|

||||

*result = "1024";

|

||||

} else if (header == ":path") {

|

||||

@@ -125,6 +126,7 @@ class ModelRouterTest : public ::testing::Test {

|

||||

std::unique_ptr<PluginContext> context_;

|

||||

std::string route_name_;

|

||||

std::string path_;

|

||||

std::string content_type_ = "application/json";

|

||||

BufferBase body_;

|

||||

BufferBase config_;

|

||||

};

|

||||

@@ -133,7 +135,7 @@ TEST_F(ModelRouterTest, RewriteModelAndHeader) {

|

||||

std::string configuration = R"(

|

||||

{

|

||||

"addProviderHeader": "x-higress-llm-provider"

|

||||

})";

|

||||

})";

|

||||

|

||||

config_.set(configuration);

|

||||

EXPECT_TRUE(root_context_->configure(configuration.size()));

|

||||

@@ -155,14 +157,14 @@ TEST_F(ModelRouterTest, RewriteModelAndHeader) {

|

||||

body_.set(request_json);

|

||||

EXPECT_EQ(context_->onRequestHeaders(0, false),

|

||||

FilterHeadersStatus::StopIteration);

|

||||

EXPECT_EQ(context_->onRequestBody(28, true), FilterDataStatus::Continue);

|

||||

EXPECT_EQ(context_->onRequestBody(request_json.length(), true), FilterDataStatus::Continue);

|

||||

}

|

||||

|

||||

TEST_F(ModelRouterTest, ModelToHeader) {

|

||||

std::string configuration = R"(

|

||||

{

|

||||

"modelToHeader": "x-higress-llm-model"

|

||||

})";

|

||||

})";

|

||||

|

||||

config_.set(configuration);

|

||||

EXPECT_TRUE(root_context_->configure(configuration.size()));

|

||||

@@ -181,14 +183,14 @@ TEST_F(ModelRouterTest, ModelToHeader) {

|

||||

body_.set(request_json);

|

||||

EXPECT_EQ(context_->onRequestHeaders(0, false),

|

||||

FilterHeadersStatus::StopIteration);

|

||||

EXPECT_EQ(context_->onRequestBody(28, true), FilterDataStatus::Continue);

|

||||

EXPECT_EQ(context_->onRequestBody(request_json.length(), true), FilterDataStatus::Continue);

|

||||

}

|

||||

|

||||

TEST_F(ModelRouterTest, IgnorePath) {

|

||||

std::string configuration = R"(

|

||||

{

|

||||

"addProviderHeader": "x-higress-llm-provider"

|

||||

})";

|

||||

})";

|

||||

|

||||

config_.set(configuration);

|

||||

EXPECT_TRUE(root_context_->configure(configuration.size()));

|

||||

@@ -208,7 +210,7 @@ TEST_F(ModelRouterTest, IgnorePath) {

|

||||

body_.set(request_json);

|

||||

EXPECT_EQ(context_->onRequestHeaders(0, false),

|

||||

FilterHeadersStatus::Continue);

|

||||

EXPECT_EQ(context_->onRequestBody(28, true), FilterDataStatus::Continue);

|

||||

EXPECT_EQ(context_->onRequestBody(request_json.length(), true), FilterDataStatus::Continue);

|

||||

}

|

||||

|

||||

TEST_F(ModelRouterTest, RouteLevelRewriteModelAndHeader) {

|

||||

@@ -242,7 +244,178 @@ TEST_F(ModelRouterTest, RouteLevelRewriteModelAndHeader) {

|

||||

route_name_ = "route-a";

|

||||

EXPECT_EQ(context_->onRequestHeaders(0, false),

|

||||

FilterHeadersStatus::StopIteration);

|

||||

EXPECT_EQ(context_->onRequestBody(28, true), FilterDataStatus::Continue);

|

||||

EXPECT_EQ(context_->onRequestBody(request_json.length(), true), FilterDataStatus::Continue);

|

||||

}

|

||||

|

||||

|

||||

TEST_F(ModelRouterTest, RewriteModelAndHeaderMultipartFormData) {

|

||||

std::string configuration = R"({

|

||||

"addProviderHeader": "x-higress-llm-provider"

|

||||

})";

|

||||

|

||||

config_.set(configuration);

|

||||

EXPECT_TRUE(root_context_->configure(configuration.size()));

|

||||

|

||||

path_ = "/v1/chat/completions";

|

||||

content_type_ = "multipart/form-data; boundary=--------------------------100751621174704322650451";

|

||||

std::string request_data = std::regex_replace(R"(

|

||||

----------------------------100751621174704322650451

|

||||

Content-Disposition: form-data; name="purpose"

|

||||

|

||||

batch

|

||||

----------------------------100751621174704322650451

|

||||

Content-Disposition: form-data; name="model"

|

||||

|

||||

qwen/qwen-turbo

|

||||

----------------------------100751621174704322650451

|

||||

Content-Disposition: form-data; name="file"; filename="test-data.json"

|

||||

Content-Type: application/json

|

||||

|

||||

[

|

||||

]

|

||||

----------------------------100751621174704322650451--

|

||||

)", std::regex("\n"), "\r\n"); // Multipart data requires CRLF line endings

|

||||

EXPECT_CALL(*mock_context_,

|

||||

setBuffer(testing::_, testing::_, testing::_, testing::_))

|

||||

.WillOnce([&](WasmBufferType, size_t start, size_t length, std::string_view body) {

|

||||

std::cerr << "===============" << "\n";

|

||||

std::cerr << body << "\n";

|

||||

std::cerr << "===============" << "\n";

|

||||

EXPECT_EQ(start, 0);

|

||||

EXPECT_EQ(length, std::numeric_limits<size_t>::max());

|

||||

auto expected_body= std::regex_replace(R"(

|

||||

----------------------------100751621174704322650451

|

||||

Content-Disposition: form-data; name="purpose"

|

||||

|

||||

batch

|

||||

----------------------------100751621174704322650451

|

||||

Content-Disposition: form-data; name="model"

|

||||

|

||||

qwen-turbo

|

||||

)", std::regex("\n"), "\r\n"); // Multipart data requires CRLF line endings

|

||||

EXPECT_EQ(body, expected_body);

|

||||

return WasmResult::Ok;

|

||||

});

|

||||

|

||||

EXPECT_CALL(*mock_context_,

|

||||

replaceHeaderMapValue(testing::_,

|

||||

std::string_view("x-higress-llm-provider"),

|

||||

std::string_view("qwen")));

|

||||

|

||||

body_.set(request_data);

|

||||

EXPECT_EQ(context_->onRequestHeaders(0, false),

|

||||

FilterHeadersStatus::StopIteration);

|

||||

|

||||

auto last_body_size = 0;

|

||||

|

||||

auto body = request_data.substr(0, request_data.find("batch") + 5 + 2 /* batch + CRLF */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::StopIterationAndBuffer);

|

||||

last_body_size = body.size();

|

||||

|

||||

body = request_data.substr(0, request_data.find("\"model\"") + 5 + 2 + 2 /* "model" + CRLF + CRLF */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::StopIterationAndBuffer);

|

||||

last_body_size = body.size();

|

||||

|

||||

body = request_data.substr(0, request_data.find("qwen") + 4 /* "qwen" */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::StopIterationAndBuffer);

|

||||

last_body_size = body.size();

|

||||

|

||||

body = request_data.substr(0, request_data.find("qwen-turbo") + 10 /* "qwen-turbo" */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::StopIterationAndBuffer);

|

||||

last_body_size = body.size();

|

||||

|

||||

body = request_data.substr(0, request_data.find("qwen-turbo") + 10 + 2 /* "qwen-turbo" + CRLF */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::Continue);

|

||||

last_body_size = body.size();

|

||||

|

||||

body = request_data.substr(0, request_data.find("qwen-turbo") + 10 + 2 + 50 /* "qwen-turbo" + CRLF + boundary */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::Continue);

|

||||

last_body_size = body.size();

|

||||

|

||||

body_.set(request_data);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, true), FilterDataStatus::Continue);

|

||||

}

|

||||

|

||||

TEST_F(ModelRouterTest, ModelToHeaderMultipartFormData) {

|

||||

std::string configuration = R"(

|

||||

{

|

||||

"modelToHeader": "x-higress-llm-model"

|

||||

})";

|

||||

|

||||

config_.set(configuration);

|

||||

EXPECT_TRUE(root_context_->configure(configuration.size()));

|

||||

|

||||

path_ = "/v1/chat/completions";

|

||||

content_type_ = "multipart/form-data; boundary=--------------------------100751621174704322650451";

|

||||

std::string request_data = std::regex_replace(R"(

|

||||

----------------------------100751621174704322650451

|

||||

Content-Disposition: form-data; name="purpose"

|

||||

|

||||

batch

|

||||

----------------------------100751621174704322650451

|

||||

Content-Disposition: form-data; name="model"

|

||||

|

||||

qwen-max

|

||||

----------------------------100751621174704322650451

|

||||

Content-Disposition: form-data; name="file"; filename="test-data.json"

|

||||

Content-Type: application/json

|

||||

|

||||

[

|

||||

]

|

||||

----------------------------100751621174704322650451--

|

||||

)", std::regex("\n"), "\r\n"); // Multipart data requires CRLF line endings

|

||||

EXPECT_CALL(*mock_context_,

|

||||

setBuffer(testing::_, testing::_, testing::_, testing::_))

|

||||

.Times(0);

|

||||

|

||||

EXPECT_CALL(

|

||||

*mock_context_,

|

||||

replaceHeaderMapValue(testing::_, std::string_view("x-higress-llm-model"),

|

||||

std::string_view("qwen-max")));

|

||||

|

||||

EXPECT_EQ(context_->onRequestHeaders(0, false),

|

||||

FilterHeadersStatus::StopIteration);

|

||||

|

||||

auto last_body_size = 0;

|

||||

|

||||

auto body = request_data.substr(0, request_data.find("batch") + 5 + 2 /* batch + CRLF */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::StopIterationAndBuffer);

|

||||

last_body_size = body.size();

|

||||

|

||||

body = request_data.substr(0, request_data.find("\"model\"") + 5 + 2 + 2 /* "model" + CRLF + CRLF */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::StopIterationAndBuffer);

|

||||

last_body_size = body.size();

|

||||

|

||||

body = request_data.substr(0, request_data.find("qwen") + 4 /* "qwen" */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::StopIterationAndBuffer);

|

||||

last_body_size = body.size();

|

||||

|

||||

body = request_data.substr(0, request_data.find("qwen-max") + 8 /* "qwen-max" */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::StopIterationAndBuffer);

|

||||

last_body_size = body.size();

|

||||

|

||||

body = request_data.substr(0, request_data.find("qwen-max") + 8 + 2 /* "qwen-max" + CRLF */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::Continue);

|

||||

last_body_size = body.size();

|

||||

|

||||

body = request_data.substr(0, request_data.find("qwen-max") + 8 + 2 + 50 /* "qwen-max" + CRLF + boundary */);

|

||||

body_.set(body);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, false), FilterDataStatus::Continue);

|

||||

last_body_size = body.size();

|

||||

|

||||

body_.set(request_data);

|

||||

EXPECT_EQ(context_->onRequestBody(body.size() - last_body_size, true), FilterDataStatus::Continue);

|

||||

}

|

||||

|

||||

} // namespace model_router
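
For orientation, the multipart case exercised by the test above (reading the `model` form field out of a `multipart/form-data` body) can be sketched in Go with the standard library. This is only an illustration under stated assumptions, not the plugin's implementation: the helper name `extractFormField` and the shortened `XBOUNDARY` boundary are hypothetical, and unlike the filter under test it parses a complete body rather than incremental chunks.

```go
package main

import (
	"fmt"
	"io"
	"mime"
	"mime/multipart"
	"strings"
)

// extractFormField is a hypothetical helper: it returns the value of the
// named form field from a complete multipart/form-data body.
func extractFormField(contentType, body, field string) (string, error) {
	// The boundary is carried as a parameter of the Content-Type header.
	_, params, err := mime.ParseMediaType(contentType)
	if err != nil {
		return "", err
	}
	boundary, ok := params["boundary"]
	if !ok {
		return "", fmt.Errorf("content type %q has no boundary", contentType)
	}

	mr := multipart.NewReader(strings.NewReader(body), boundary)
	for {
		part, err := mr.NextPart()
		if err == io.EOF {
			return "", fmt.Errorf("field %q not found", field)
		}
		if err != nil {
			return "", err
		}
		if part.FormName() == field {
			value, err := io.ReadAll(part)
			if err != nil {
				return "", err
			}
			return string(value), nil
		}
	}
}

func main() {
	// Multipart bodies use CRLF line endings, as the test above notes.
	body := "--XBOUNDARY\r\n" +
		"Content-Disposition: form-data; name=\"purpose\"\r\n\r\n" +
		"batch\r\n" +
		"--XBOUNDARY\r\n" +
		"Content-Disposition: form-data; name=\"model\"\r\n\r\n" +
		"qwen-max\r\n" +
		"--XBOUNDARY--\r\n"

	model, err := extractFormField("multipart/form-data; boundary=XBOUNDARY", body, "model")
	fmt.Println(model, err)
}
```

The test above goes further than this sketch: it feeds the body in slices and expects `StopIterationAndBuffer` until the CRLF that terminates the `model` value has arrived, after which the filter returns `Continue`.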

|

||||

|

||||

817

plugins/wasm-go/extensions/ai-proxy/provider/bedrock.go

Normal file


@@ -0,0 +1,817 @@

|

||||

package provider

|

||||

|

||||

import (

|

||||

"bytes"

|

||||

"crypto/hmac"

|

||||

"crypto/sha256"

|

||||

"encoding/binary"

|

||||

"encoding/hex"

|

||||

"encoding/json"

|

||||

"errors"

|

||||

"fmt"

|

||||

"hash"

|

||||

"hash/crc32"

|

||||

"io"

|

||||

"net/http"

|

||||

"strings"

|

||||

"time"

|

||||

|

||||

"github.com/alibaba/higress/plugins/wasm-go/extensions/ai-proxy/util"

|

||||

"github.com/alibaba/higress/plugins/wasm-go/pkg/log"

|

||||

"github.com/alibaba/higress/plugins/wasm-go/pkg/wrapper"

|

||||

"github.com/higress-group/proxy-wasm-go-sdk/proxywasm"

|

||||

"github.com/higress-group/proxy-wasm-go-sdk/proxywasm/types"

|

||||

)

|

||||

|

||||

const (
	httpPostMethod = "POST"
	awsService     = "bedrock"
	// bedrock-runtime.{awsRegion}.amazonaws.com
	bedrockDefaultDomain = "bedrock-runtime.%s.amazonaws.com"
	// Converse path: /model/{modelId}/converse
	bedrockChatCompletionPath = "/model/%s/converse"
	// ConverseStream path: /model/{modelId}/converse-stream
	bedrockStreamChatCompletionPath = "/model/%s/converse-stream"
	bedrockSignedHeaders            = "host;x-amz-date"
)

|

||||

|

||||

type bedrockProviderInitializer struct {

|

||||

}

|

||||

|

||||

func (b *bedrockProviderInitializer) ValidateConfig(config *ProviderConfig) error {

|

||||

if len(config.awsAccessKey) == 0 || len(config.awsSecretKey) == 0 {

|

||||

return errors.New("missing bedrock access authentication parameters")

|

||||

}

|

||||

if len(config.awsRegion) == 0 {

|

||||

return errors.New("missing bedrock region parameters")

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||

func (b *bedrockProviderInitializer) DefaultCapabilities() map[string]string {

|

||||

return map[string]string{

|

||||

string(ApiNameChatCompletion): bedrockChatCompletionPath,

|

||||

}

|

||||

}

|

||||

|

||||

func (b *bedrockProviderInitializer) CreateProvider(config ProviderConfig) (Provider, error) {

|

||||

config.setDefaultCapabilities(b.DefaultCapabilities())

|

||||

return &bedrockProvider{

|

||||

config: config,

|

||||

contextCache: createContextCache(&config),

|

||||

}, nil

|

||||

}

|

||||

|

||||

type bedrockProvider struct {

|

||||

config ProviderConfig

|

||||

contextCache *contextCache

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) OnStreamingResponseBody(ctx wrapper.HttpContext, name ApiName, chunk []byte, isLastChunk bool) ([]byte, error) {

|

||||

events := extractAmazonEventStreamEvents(ctx, chunk)

|

||||

if len(events) == 0 {

|

||||

return chunk, errors.New("no events extracted from chunk")

|

||||

}

|

||||

var responseBuilder strings.Builder

|

||||

for _, event := range events {

|

||||

outputEvent, err := b.convertEventFromBedrockToOpenAI(ctx, event)

|

||||

if err != nil {

|

||||

log.Errorf("[onStreamingResponseBody] failed to process streaming event: %v\n%s", err, chunk)

|

||||

return chunk, err

|

||||

}

|

||||

responseBuilder.WriteString(string(outputEvent))

|

||||

}

|

||||

return []byte(responseBuilder.String()), nil

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) convertEventFromBedrockToOpenAI(ctx wrapper.HttpContext, bedrockEvent ConverseStreamEvent) ([]byte, error) {

|

||||

choices := make([]chatCompletionChoice, 0)

|

||||

chatChoice := chatCompletionChoice{

|

||||

Delta: &chatMessage{},

|

||||

}

|

||||

if bedrockEvent.Role != nil {

|

||||

chatChoice.Delta.Role = *bedrockEvent.Role

|

||||

}

|

||||

if bedrockEvent.Delta != nil {

|

||||

chatChoice.Delta = &chatMessage{Content: bedrockEvent.Delta.Text}

|

||||

}

|

||||

if bedrockEvent.StopReason != nil {

|

||||

chatChoice.FinishReason = stopReasonBedrock2OpenAI(*bedrockEvent.StopReason)

|

||||

}

|

||||

choices = append(choices, chatChoice)

|

||||

requestId := ctx.GetStringContext("X-Amzn-Requestid", "")

|

||||

openAIFormattedChunk := &chatCompletionResponse{

|

||||

Id: requestId,

|

||||

Created: time.Now().Unix(),

|

||||

Model: ctx.GetStringContext(ctxKeyFinalRequestModel, ""),

|

||||

SystemFingerprint: "",

|

||||

Object: objectChatCompletion,

|

||||

Choices: choices,

|

||||

}

|

||||

if bedrockEvent.Usage != nil {

|

||||

openAIFormattedChunk.Choices = choices[:0]

|

||||

openAIFormattedChunk.Usage = usage{

|

||||

CompletionTokens: bedrockEvent.Usage.OutputTokens,

|

||||

PromptTokens: bedrockEvent.Usage.InputTokens,

|

||||

TotalTokens: bedrockEvent.Usage.TotalTokens,

|

||||

}

|

||||

}

|

||||

|

||||

openAIFormattedChunkBytes, err := json.Marshal(openAIFormattedChunk)
if err != nil {
return nil, fmt.Errorf("unable to marshal OpenAI-formatted chunk: %v", err)
}

|

||||

var openAIChunk strings.Builder

|

||||

openAIChunk.WriteString(ssePrefix)

|

||||

openAIChunk.WriteString(string(openAIFormattedChunkBytes))

|

||||

openAIChunk.WriteString("\n\n")

|

||||

return []byte(openAIChunk.String()), nil

|

||||

}

|

||||

|

||||

type ConverseStreamEvent struct {

|

||||

ContentBlockIndex int `json:"contentBlockIndex,omitempty"`

|

||||

Delta *converseStreamEventContentBlockDelta `json:"delta,omitempty"`

|

||||

Role *string `json:"role,omitempty"`

|

||||

StopReason *string `json:"stopReason,omitempty"`

|

||||

Usage *tokenUsage `json:"usage,omitempty"`

|

||||

Start *contentBlockStart `json:"start,omitempty"`

|

||||

}

|

||||

|

||||

type converseStreamEventContentBlockDelta struct {

|

||||

Text *string `json:"text,omitempty"`

|

||||

ToolUse *toolUseBlockDelta `json:"toolUse,omitempty"`

|

||||

}

|

||||

|

||||

type toolUseBlockStart struct {

|

||||

Name string `json:"name"`

|

||||

ToolUseID string `json:"toolUseId"`

|

||||

}

|

||||

|

||||

type contentBlockStart struct {

|

||||

ToolUse *toolUseBlockStart `json:"toolUse,omitempty"`

|

||||

}

|

||||

|

||||

type toolUseBlockDelta struct {

|

||||

Input string `json:"input"`

|

||||

}

|

||||

|

||||

func extractAmazonEventStreamEvents(ctx wrapper.HttpContext, chunk []byte) []ConverseStreamEvent {

|

||||

body := chunk

|

||||

if bufferedStreamingBody, has := ctx.GetContext(ctxKeyStreamingBody).([]byte); has {

|

||||

body = append(bufferedStreamingBody, chunk...)

|

||||

}

|

||||

|

||||

r := bytes.NewReader(body)

|

||||

var events []ConverseStreamEvent

|

||||

var lastRead int64 = -1

|

||||

messageBuffer := make([]byte, 1024)

|

||||

defer func() {

|

||||

log.Infof("extractAmazonEventStreamEvents: lastRead=%d, r.Size=%d", lastRead, r.Size())

|

||||

ctx.SetContext(ctxKeyStreamingBody, nil)

|

||||

}()

|

||||

|

||||

for {

|

||||

msg, err := decodeMessage(r, messageBuffer)

|

||||

if err != nil {

|

||||

if err == io.EOF {

|

||||

break

|

||||

}

|

||||

log.Errorf("failed to decode message: %v", err)

|

||||

break

|

||||

}

|

||||

var event ConverseStreamEvent

|

||||

if err = json.Unmarshal(msg.Payload, &event); err == nil {

|

||||

events = append(events, event)

|

||||

}

|

||||

lastRead = r.Size() - int64(r.Len())

|

||||

}

|

||||

return events

|

||||

}

|

||||

|

||||

type bedrockStreamMessage struct {

|

||||

Headers headers

|

||||

Payload []byte

|

||||

}

|

||||

|

||||

type EventFrame struct {

|

||||

TotalLength uint32

|

||||

HeadersLength uint32

|

||||

PreludeCRC uint32

|

||||

Headers map[string]interface{}

|

||||

Payload []byte

|

||||

PayloadCRC uint32

|

||||

}

|

||||

|

||||

type headers []header

|

||||

|

||||

type header struct {

|

||||

Name string

|

||||

Value Value

|

||||

}

|

||||

|

||||

func (hs *headers) Set(name string, value Value) {

|

||||

var i int

|

||||

for ; i < len(*hs); i++ {

|

||||

if (*hs)[i].Name == name {

|

||||

(*hs)[i].Value = value

|

||||

return

|

||||

}

|

||||

}

|

||||

|

||||

*hs = append(*hs, header{

|

||||

Name: name, Value: value,

|

||||

})

|

||||

}

|

||||

|

||||

func decodeMessage(reader io.Reader, payloadBuf []byte) (m bedrockStreamMessage, err error) {

|

||||

crc := crc32.New(crc32.MakeTable(crc32.IEEE))

|

||||

hashReader := io.TeeReader(reader, crc)

|

||||

|

||||

prelude, err := decodePrelude(hashReader, crc)

|

||||

if err != nil {

|

||||

return bedrockStreamMessage{}, err

|

||||

}

|

||||

|

||||

if prelude.HeadersLen > 0 {

|

||||

lr := io.LimitReader(hashReader, int64(prelude.HeadersLen))

|

||||

m.Headers, err = decodeHeaders(lr)

|

||||

if err != nil {

|

||||

return bedrockStreamMessage{}, err

|

||||

}

|

||||

}

|

||||

|

||||

if payloadLen := prelude.PayloadLen(); payloadLen > 0 {

|

||||

buf, err := decodePayload(payloadBuf, io.LimitReader(hashReader, int64(payloadLen)))

|

||||

if err != nil {

|

||||

return bedrockStreamMessage{}, err

|

||||

}

|

||||

m.Payload = buf

|

||||

}

|

||||

|

||||

msgCRC := crc.Sum32()

|

||||

if err := validateCRC(reader, msgCRC); err != nil {

|

||||

return bedrockStreamMessage{}, err

|

||||

}

|

||||

|

||||

return m, nil

|

||||

}

|

||||

|

||||

func decodeHeaders(r io.Reader) (headers, error) {

|

||||

hs := headers{}

|

||||

|

||||

for {

|

||||

name, err := decodeHeaderName(r)

|

||||

if err != nil {

|

||||

if err == io.EOF {

|

||||

// EOF while getting header name means no more headers

|

||||

break

|

||||

}

|

||||

return nil, err

|

||||

}

|

||||

|

||||

value, err := decodeHeaderValue(r)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

|

||||

hs.Set(name, value)

|

||||

}

|

||||

|

||||

return hs, nil

|

||||

}

|

||||

|

||||

func decodeHeaderValue(r io.Reader) (Value, error) {

|

||||

var raw rawValue

|

||||

|

||||

typ, err := decodeUint8(r)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

raw.Type = valueType(typ)

|

||||

|

||||

var v Value

|

||||

|

||||

switch raw.Type {

|

||||

case stringValueType:

|

||||

var tv StringValue

|

||||

err = tv.decode(r)

|

||||

v = tv

|

||||

default:

|

||||

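// The value's bytes cannot be skipped without knowing its type, so fail instead of desynchronizing the header stream.
err = fmt.Errorf("unknown value type %d", raw.Type)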

|

||||

}

|

||||

|

||||

// Error could be EOF, let caller deal with it

|

||||

return v, err

|

||||

}

|

||||

|

||||

type Value interface {

|

||||

Get() interface{}

|

||||

}

|

||||

|

||||

type StringValue string

|

||||

|

||||

func (v StringValue) Get() interface{} {

|

||||

return string(v)

|

||||

}

|

||||

|

||||

func (v *StringValue) decode(r io.Reader) error {

|

||||

s, err := decodeStringValue(r)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

*v = StringValue(s)

|

||||

return nil

|

||||

}

|

||||

|

||||

func decodeBytesValue(r io.Reader) ([]byte, error) {

|

||||

var raw rawValue

|

||||

var err error

|

||||

raw.Len, err = decodeUint16(r)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

|

||||

buf := make([]byte, raw.Len)

|

||||

_, err = io.ReadFull(r, buf)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

|

||||

return buf, nil

|

||||

}

|

||||

|

||||

func decodeUint16(r io.Reader) (uint16, error) {

|

||||

var b [2]byte

|

||||

bs := b[:]

|

||||

_, err := io.ReadFull(r, bs)

|

||||

if err != nil {

|

||||

return 0, err

|

||||

}

|

||||

return binary.BigEndian.Uint16(bs), nil

|

||||

}

|

||||

|

||||

func decodeStringValue(r io.Reader) (string, error) {

|

||||

v, err := decodeBytesValue(r)

|

||||

return string(v), err

|

||||

}

|

||||

|

||||

type rawValue struct {

|

||||

Type valueType

|

||||

Len uint16 // Only set for variable length slices

|

||||

Value []byte // byte representation of value, BigEndian encoding.

|

||||

}

|

||||

|

||||

type valueType uint8

|

||||

|

||||

const (

|

||||

trueValueType valueType = iota

|

||||

falseValueType

|

||||

int8ValueType // Byte

|

||||

int16ValueType // Short

|

||||

int32ValueType // Integer

|

||||

int64ValueType // Long

|

||||

bytesValueType

|

||||

stringValueType

|

||||

timestampValueType

|

||||

uuidValueType

|

||||

)

|

||||

|

||||

func decodeHeaderName(r io.Reader) (string, error) {

|

||||

var n headerName

|

||||

|

||||

var err error

|

||||

n.Len, err = decodeUint8(r)

|

||||

if err != nil {

|

||||

return "", err

|

||||

}

|

||||

|

||||

name := n.Name[:n.Len]

|

||||

if _, err := io.ReadFull(r, name); err != nil {

|

||||

return "", err

|

||||

}

|

||||

|

||||

return string(name), nil

|

||||

}

|

||||

|

||||

func decodeUint8(r io.Reader) (uint8, error) {

|

||||

type byteReader interface {

|

||||

ReadByte() (byte, error)

|

||||

}

|

||||

|

||||

if br, ok := r.(byteReader); ok {

|

||||

v, err := br.ReadByte()

|

||||

return v, err

|

||||

}

|

||||

|

||||

var b [1]byte

|

||||

_, err := io.ReadFull(r, b[:])

|

||||

return b[0], err

|

||||

}

|

||||

|

||||

const maxHeaderNameLen = 255

|

||||

|

||||

type headerName struct {

|

||||

Len uint8

|

||||

Name [maxHeaderNameLen]byte

|

||||

}

|

||||

|

||||

func decodePayload(buf []byte, r io.Reader) ([]byte, error) {

|

||||

w := bytes.NewBuffer(buf[0:0])

|

||||

|

||||

_, err := io.Copy(w, r)

|

||||

return w.Bytes(), err

|

||||

}

|

||||

|

||||

type messagePrelude struct {

|

||||

Length uint32

|

||||

HeadersLen uint32

|

||||

PreludeCRC uint32

|

||||

}

|

||||

|

||||

func (p messagePrelude) ValidateLens() error {

|

||||

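// A message is at least 16 bytes (prelude, prelude CRC, message CRC); rejecting shorter lengths also keeps PayloadLen from underflowing.
if p.Length < 16 || p.HeadersLen > p.Length-16 {
return fmt.Errorf("invalid message prelude: length %d, headers length %d (minimum message length is 16)", p.Length, p.HeadersLen)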

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||

func (p messagePrelude) PayloadLen() uint32 {

|

||||

return p.Length - p.HeadersLen - 16

|

||||

}

|

||||

|

||||

func decodePrelude(r io.Reader, crc hash.Hash32) (messagePrelude, error) {

|

||||

var p messagePrelude

|

||||

|

||||

var err error

|

||||

p.Length, err = decodeUint32(r)

|

||||

if err != nil {

|

||||

return messagePrelude{}, err

|

||||

}

|

||||

|

||||

p.HeadersLen, err = decodeUint32(r)

|

||||

if err != nil {

|

||||

return messagePrelude{}, err

|

||||

}

|

||||

|

||||

if err := p.ValidateLens(); err != nil {

|

||||

return messagePrelude{}, err

|

||||

}

|

||||

|

||||

preludeCRC := crc.Sum32()

|

||||

if err := validateCRC(r, preludeCRC); err != nil {

|

||||

return messagePrelude{}, err

|

||||

}

|

||||

|

||||

p.PreludeCRC = preludeCRC

|

||||

|

||||

return p, nil

|

||||

}

|

||||

|

||||

func decodeUint32(r io.Reader) (uint32, error) {

|

||||

var b [4]byte

|

||||

bs := b[:]

|

||||

_, err := io.ReadFull(r, bs)

|

||||

if err != nil {

|

||||

return 0, err

|

||||

}

|

||||

return binary.BigEndian.Uint32(bs), nil

|

||||

}

|

||||

|

||||

func validateCRC(r io.Reader, expect uint32) error {

|

||||

msgCRC, err := decodeUint32(r)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

|

||||

if msgCRC != expect {

|

||||

return fmt.Errorf("message checksum mismatch")

|

||||

}

|

||||

|

||||

return nil

|

||||

}
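
The decoding functions above walk a length-prefixed binary frame: a 4-byte total length, a 4-byte headers length, a CRC-32 of those 8 bytes, the headers, the payload, and a final CRC-32 over everything before it. A minimal standalone sketch of that layout follows, assuming header-less frames for brevity. The helper names `encodeFrame` and `decodeFrame` are hypothetical and are not part of `bedrock.go`.

```go
package main

import (
	"encoding/binary"
	"errors"
	"fmt"
	"hash/crc32"
)

// encodeFrame (hypothetical helper) wraps payload in a frame with no headers:
// [total len][headers len = 0][prelude CRC][payload][message CRC].
func encodeFrame(payload []byte) []byte {
	total := 16 + len(payload)
	frame := make([]byte, total)
	binary.BigEndian.PutUint32(frame[0:4], uint32(total))
	binary.BigEndian.PutUint32(frame[4:8], 0)
	// The prelude CRC covers only the two length fields.
	binary.BigEndian.PutUint32(frame[8:12], crc32.ChecksumIEEE(frame[0:8]))
	copy(frame[12:], payload)
	// The message CRC covers every byte that precedes it.
	binary.BigEndian.PutUint32(frame[total-4:], crc32.ChecksumIEEE(frame[:total-4]))
	return frame
}

// decodeFrame (hypothetical helper) validates both checksums of a single
// complete frame and returns its payload.
func decodeFrame(frame []byte) ([]byte, error) {
	if len(frame) < 16 {
		return nil, errors.New("frame shorter than 16 bytes")
	}
	total := binary.BigEndian.Uint32(frame[0:4])
	headersLen := binary.BigEndian.Uint32(frame[4:8])
	if total < 16 || uint64(total) != uint64(len(frame)) || headersLen > total-16 {
		return nil, errors.New("inconsistent frame lengths")
	}
	if crc32.ChecksumIEEE(frame[0:8]) != binary.BigEndian.Uint32(frame[8:12]) {
		return nil, errors.New("prelude checksum mismatch")
	}
	if crc32.ChecksumIEEE(frame[:total-4]) != binary.BigEndian.Uint32(frame[total-4:]) {
		return nil, errors.New("message checksum mismatch")
	}
	// Skip the 12-byte prelude and any headers; drop the trailing CRC.
	return frame[12+headersLen : total-4], nil
}

func main() {
	frame := encodeFrame([]byte(`{"delta":{"text":"hi"}}`))
	payload, err := decodeFrame(frame)
	fmt.Println(string(payload), err)

	// Corrupting a payload byte must be caught by the message checksum.
	frame[12] ^= 0xff
	_, err = decodeFrame(frame)
	fmt.Println(err)
}
```

The sketch works on one complete frame held in memory, whereas the code above decodes from an `io.Reader` and computes the checksums incrementally through an `io.TeeReader`.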

|

||||

|

||||

func (b *bedrockProvider) TransformResponseHeaders(ctx wrapper.HttpContext, apiName ApiName, headers http.Header) {

|

||||

ctx.SetContext("X-Amzn-Requestid", headers.Get("X-Amzn-Requestid"))

|

||||

if headers.Get("Content-Type") == "application/vnd.amazon.eventstream" {

|

||||

headers.Set("Content-Type", "text/event-stream; charset=utf-8")

|

||||

}

|

||||

headers.Del("Content-Length")

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) GetProviderType() string {

|

||||

return providerTypeBedrock

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) OnRequestHeaders(ctx wrapper.HttpContext, apiName ApiName) error {

|

||||

b.config.handleRequestHeaders(b, ctx, apiName)

|

||||

return nil

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) TransformRequestHeaders(ctx wrapper.HttpContext, apiName ApiName, headers http.Header) {

|

||||

util.OverwriteRequestHostHeader(headers, fmt.Sprintf(bedrockDefaultDomain, b.config.awsRegion))

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) OnRequestBody(ctx wrapper.HttpContext, apiName ApiName, body []byte) (types.Action, error) {

|

||||

if !b.config.isSupportedAPI(apiName) {

|

||||

return types.ActionContinue, errUnsupportedApiName

|

||||

}

|

||||

return b.config.handleRequestBody(b, b.contextCache, ctx, apiName, body)

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) insertHttpContextMessage(body []byte, content string, onlyOneSystemBeforeFile bool) ([]byte, error) {

|

||||

request := &bedrockTextGenRequest{}

|

||||

if err := json.Unmarshal(body, request); err != nil {

|

||||

return nil, fmt.Errorf("unable to unmarshal request: %v", err)

|

||||

}

|

||||

|

||||

if len(request.System) > 0 {

|

||||

request.System = append(request.System, systemContentBlock{Text: content})

|

||||

} else {

|

||||

request.System = []systemContentBlock{{Text: content}}

|

||||

}

|

||||

|

||||

requestBytes, err := json.Marshal(request)
if err != nil {
return nil, fmt.Errorf("unable to marshal request: %v", err)
}
b.setAuthHeaders(requestBytes, nil)
return requestBytes, nil

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) TransformRequestBodyHeaders(ctx wrapper.HttpContext, apiName ApiName, body []byte, headers http.Header) ([]byte, error) {

|

||||

switch apiName {

|

||||

case ApiNameChatCompletion:

|

||||

return b.onChatCompletionRequestBody(ctx, body, headers)

|

||||

default:

|

||||

return b.config.defaultTransformRequestBody(ctx, apiName, body)

|

||||

}

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) TransformResponseBody(ctx wrapper.HttpContext, apiName ApiName, body []byte) ([]byte, error) {

|

||||

if apiName == ApiNameChatCompletion {

|

||||

return b.onChatCompletionResponseBody(ctx, body)

|

||||

}

|

||||

return nil, errUnsupportedApiName

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) onChatCompletionResponseBody(ctx wrapper.HttpContext, body []byte) ([]byte, error) {

|

||||

bedrockResponse := &bedrockConverseResponse{}

|

||||

if err := json.Unmarshal(body, bedrockResponse); err != nil {

|

||||

log.Errorf("unable to unmarshal bedrock response: %v", err)

|

||||

return nil, fmt.Errorf("unable to unmarshal bedrock response: %v", err)

|

||||

}

|

||||

response := b.buildChatCompletionResponse(ctx, bedrockResponse)

|

||||

return json.Marshal(response)

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) onChatCompletionRequestBody(ctx wrapper.HttpContext, body []byte, headers http.Header) ([]byte, error) {

|

||||

request := &chatCompletionRequest{}

|

||||

err := b.config.parseRequestAndMapModel(ctx, request, body)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

|

||||

streaming := request.Stream

|

||||

headers.Set("Accept", "*/*")

|

||||

if streaming {

|

||||

util.OverwriteRequestPathHeader(headers, fmt.Sprintf(bedrockStreamChatCompletionPath, request.Model))

|

||||

} else {

|

||||

util.OverwriteRequestPathHeader(headers, fmt.Sprintf(bedrockChatCompletionPath, request.Model))

|

||||

}

|

||||

return b.buildBedrockTextGenerationRequest(request, headers)

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) buildBedrockTextGenerationRequest(origRequest *chatCompletionRequest, headers http.Header) ([]byte, error) {

|

||||

messages := make([]bedrockMessage, 0, len(origRequest.Messages))

|

||||

for i := range origRequest.Messages {

|

||||

messages = append(messages, chatMessage2BedrockMessage(origRequest.Messages[i]))

|

||||

}

|

||||

request := &bedrockTextGenRequest{

|

||||

Messages: messages,

|

||||

InferenceConfig: bedrockInferenceConfig{

|

||||

MaxTokens: origRequest.MaxTokens,

|

||||

Temperature: origRequest.Temperature,

|

||||

TopP: origRequest.TopP,

|

||||

},

|

||||

AdditionalModelRequestFields: map[string]interface{}{},

|

||||

PerformanceConfig: PerformanceConfiguration{

|

||||

Latency: "standard",

|

||||

},

|

||||

}

|

||||

requestBytes, err := json.Marshal(request)
if err != nil {
return nil, fmt.Errorf("unable to marshal bedrock request: %v", err)
}
b.setAuthHeaders(requestBytes, headers)
return requestBytes, nil

|

||||

}

|

||||

|

||||

func (b *bedrockProvider) buildChatCompletionResponse(ctx wrapper.HttpContext, bedrockResponse *bedrockConverseResponse) *chatCompletionResponse {

|

||||

var outputContent string

|

||||

if len(bedrockResponse.Output.Message.Content) > 0 {

|

||||

outputContent = bedrockResponse.Output.Message.Content[0].Text

|

||||

}

|

||||

choices := []chatCompletionChoice{

|

||||

{

|

||||

Index: 0,

|

||||

Message: &chatMessage{

|

||||

Role: bedrockResponse.Output.Message.Role,

|

||||

Content: outputContent,

|

||||

},

|

||||

FinishReason: stopReasonBedrock2OpenAI(bedrockResponse.StopReason),

|

||||

},

|

||||

}

|

||||

requestId := ctx.GetStringContext("X-Amzn-Requestid", "")

|

||||

return &chatCompletionResponse{

|

||||

Id: requestId,

|

||||

Created: time.Now().Unix(),

|

||||

Model: ctx.GetStringContext(ctxKeyFinalRequestModel, ""),

|

||||

SystemFingerprint: "",

|

||||

Object: objectChatCompletion,

|

||||

Choices: choices,

|

||||

Usage: usage{

|

||||

PromptTokens: bedrockResponse.Usage.InputTokens,

|

||||

CompletionTokens: bedrockResponse.Usage.OutputTokens,

|

||||

TotalTokens: bedrockResponse.Usage.TotalTokens,

|

||||

},

|

||||

}

|

||||

}

|

||||

|

||||

func stopReasonBedrock2OpenAI(reason string) string {

|

||||

switch reason {

|

||||

case "end_turn", "stop_sequence":
return finishReasonStop

|

||||

case "max_tokens":

|

||||

return finishReasonLength

|

||||

default:

|

||||

return reason

|

||||

}

|

||||

}

|

||||

|

||||

type bedrockTextGenRequest struct {

|

||||

Messages []bedrockMessage `json:"messages"`

|

||||

System []systemContentBlock `json:"system,omitempty"`

|

||||