mirror of

https://github.com/alibaba/higress.git

synced 2026-02-25 21:21:01 +08:00

Compare commits

81 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | b5eadcdbee | | |
| | 8ca8fd27ab | | |
| | ab014cf912 | | |
| | 3f67b05fab | | |
| | cd271c1f87 | | |
| | 755de5ae67 | | |
| | 40402e7dbd | | |
| | 0a2fb35ae2 | | |
| | b16954d8c1 | | |
| | 29370b18d7 | | |
| | c9733d405c | | |
| | ec6004dd27 | | |
| | ea9a6de8c3 | | |
| | 5e40a700ae | | |
| | 48b220453b | | |
| | 489a800868 | | |
| | 60c9f21e1c | | |
| | ab73f21017 | | |
| | 806563298b | | |
| | 02fabbb35f | | |
| | 07154d1f49 | | |
| | db30c0962a | | |
| | 731fe43d14 | | |
| | 5bd20aa559 | | |
| | a2e4f944e9 | | |
| | 7955aec639 | | |
| | e12feb9f57 | | |
| | 03b4144cff | | |
| | c382635e7f | | |
| | e381806ba0 | | |
| | 52114b37f8 | | |
| | b4e68c02f9 | | |
| | c241ccf19d | | |
| | e4fa1e6390 | | |
| | b103b9d7cb | | |
| | 90b02a90e0 | | |
| | 38f718b965 | | |
| | 8752a763c2 | | |
| | a57173ce28 | | |
| | 3a8d8f5b94 | | |
| | 1c37c361e1 | | |
| | b8133a95b2 | | |
| | 36d5d391b8 | | |
| | 1da9a07866 | | |
| | 8620838f8b | | |
| | e7d2005382 | | |
| | 4f47d3fc12 | | |
| | 6773482300 | | |
| | b6d61f9568 | | |
| | 1834d4acef | | |
| | 7f9ae38e51 | | |
| | b13bce6a36 | | |
| | 275cac9dbb | | |
| | 8cce7f5d50 | | |
| | 4f0834d817 | | |
| | 7cf0dae824 | | |
| | 707061fb68 | | |
| | 3255925bf0 | | |
| | a44f7ef76e | | |
| | c7abfb8aff | | |
| | ed925ddf84 | | |
| | 1301af4638 | | |
| | de6144439f | | |
| | e37c4dc286 | | |
| | b8e0baa5ab | | |
| | 4a157e98e9 | | |
| | 6af8b17216 | | |
| | 4500b10a42 | | |
| | c5a86b5298 | | |
| | 36806d9e5c | | |
| | d1700009e8 | | |
| | 2c3188dad7 | | |
| | 7d423cddbd | | |
| | 0e94e1a58a | | |
| | b1307ba97e | | |
| | 8ae810b01a | | |
| | 83b38b896c | | |
| | 1385028f01 | | |
| | af663b701a | | |
| | e5c24a10fb | | |
| | ea85ccb694 | | |

69

.github/workflows/helm-docs.yaml

vendored


@@ -6,7 +6,7 @@ on:

|

||||

- "*"

|

||||

paths:

|

||||

- 'helm/**'

|

||||

workflow_dispatch: ~

|

||||

workflow_dispatch: ~

|

||||

push:

|

||||

branches: [ main ]

|

||||

paths:

|

||||

@@ -39,7 +39,6 @@ jobs:

|

||||

rm -f ./helm-docs

|

||||

|

||||

translate-readme:

|

||||

if: ${{ ! always() }}

|

||||

needs: helm

|

||||

runs-on: ubuntu-latest

|

||||

|

||||

@@ -52,7 +51,26 @@ jobs:

|

||||

sudo apt-get update

|

||||

sudo apt-get install -y jq

|

||||

|

||||

- name: Compare README.md

|

||||

id: compare_readme

|

||||

run: |

|

||||

cd ./helm/higress

|

||||

BASE_BRANCH=main

|

||||

UPSTREAM_REPO=https://github.com/alibaba/higress.git

|

||||

|

||||

TEMP_DIR=$(mktemp -d)

|

||||

git clone --depth 1 --branch $BASE_BRANCH $UPSTREAM_REPO $TEMP_DIR

|

||||

|

||||

if diff -q "$TEMP_DIR/README.md" README.md > /dev/null; then

|

||||

echo "README.md has no changes in comparison to base branch. Skipping translation."

|

||||

echo "skip_translation=true" >> $GITHUB_ENV

|

||||

else

|

||||

echo "README.md has changed in comparison to base branch. Proceeding with translation."

|

||||

echo "skip_translation=false" >> $GITHUB_ENV

|

||||

fi

|

||||

|

||||

- name: Translate README.md to Chinese

|

||||

if: env.skip_translation == 'false'

|

||||

env:

|

||||

API_URL: ${{ secrets.HIGRESS_OPENAI_API_URL }}

|

||||

API_KEY: ${{ secrets.HIGRESS_OPENAI_API_KEY }}

|

||||

@@ -79,37 +97,30 @@ jobs:

|

||||

-H "Authorization: Bearer $API_KEY" \

|

||||

-d "$PAYLOAD")

|

||||

|

||||

echo "response: $RESPONSE"

|

||||

echo "Response: $RESPONSE"

|

||||

|

||||

TRANSLATED_CONTENT=$(echo "$RESPONSE" | jq -r '.choices[0].message.content')

|

||||

echo "$RESPONSE" | jq -c -r '.choices[] | .message.content' > README.zh.new.md

|

||||

|

||||

if [ -z "$TRANSLATED_CONTENT" ]; then

|

||||

echo "Translation failed! Response: $RESPONSE"

|

||||

if [ -f "README.zh.new.md" ]; then

|

||||

echo "Translation completed and saved to README.zh.new.md."

|

||||

else

|

||||

echo "Translation failed or no content returned!"

|

||||

exit 1

|

||||

fi

|

||||

|

||||

echo "$TRANSLATED_CONTENT" > README.zh.new.md

|

||||

echo "Translation completed and saved to README.zh.new.md."

|

||||

mv README.zh.new.md README.zh.md

|

||||

|

||||

- name: Compare README.zh.md

|

||||

id: compare

|

||||

run: |

|

||||

cd ./helm/higress

|

||||

NEW_README_ZH="README.zh.new.md"

|

||||

EXISTING_README_ZH="README.zh.md"

|

||||

- name: Create Pull Request

|

||||

if: env.skip_translation == 'false'

|

||||

uses: peter-evans/create-pull-request@v7

|

||||

with:

|

||||

token: ${{ secrets.GITHUB_TOKEN }}

|

||||

commit-message: "Update helm translated README.zh.md"

|

||||

branch: update-helm-readme-zh

|

||||

title: "Update helm translated README.zh.md"

|

||||

body: |

|

||||

This PR updates the translated README.zh.md file.

|

||||

|

||||

if [ ! -f "$EXISTING_README_ZH" ]; then

|

||||

echo "Add README.zh.md."

|

||||

mv "$NEW_README_ZH" "$EXISTING_README_ZH"

|

||||

echo "updated=true" >> $GITHUB_ENV

|

||||

exit 0

|

||||

fi

|

||||

|

||||

if ! diff -q "$NEW_README_ZH" "$EXISTING_README_ZH"; then

|

||||

echo "Files are different. Updating README.zh.md."

|

||||

mv "$NEW_README_ZH" "$EXISTING_README_ZH"

|

||||

echo "updated=true" >> $GITHUB_ENV

|

||||

else

|

||||

echo "Files are identical. No update needed."

|

||||

echo "updated=false" >> $GITHUB_ENV

|

||||

fi

|

||||

- Automatically generated by GitHub Actions

|

||||

labels: translation, automated

|

||||

base: main

|

||||
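The translation step in the workflow hunk above reads `.choices[0].message.content` from the API response and, on one side of the diff, fails the job when that content is empty. The same check can be sketched in Go. This is a minimal illustration under assumptions, not code from the repository: `chatResponse` and `extractTranslation` are hypothetical names, and the struct models only the fields that the `jq` filter touches.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// chatResponse is a hypothetical type that models only the fields the
// workflow's jq filter reads: .choices[0].message.content
type chatResponse struct {
	Choices []struct {
		Message struct {
			Content string `json:"content"`
		} `json:"message"`
	} `json:"choices"`
}

// extractTranslation (hypothetical helper) returns the first choice's
// content, or an error when the response carries no usable content.
func extractTranslation(raw []byte) (string, error) {
	var resp chatResponse
	if err := json.Unmarshal(raw, &resp); err != nil {
		return "", fmt.Errorf("decode response: %w", err)
	}
	if len(resp.Choices) == 0 || resp.Choices[0].Message.Content == "" {
		return "", errors.New("translation failed: no content returned")
	}
	return resp.Choices[0].Message.Content, nil
}

func main() {
	// Sample payload invented for illustration only.
	sample := []byte(`{"choices":[{"message":{"content":"你好"}}]}`)
	text, err := extractTranslation(sample)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(text)
}
```

One reason to check explicitly for a missing choice: `jq -r` prints the literal string `null` when the selected field is absent, so a shell `-z` test on its output would not catch that case by itself.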

@@ -144,7 +144,7 @@ docker-buildx-push: clean-env docker.higress-buildx

export PARENT_GIT_TAG:=$(shell cat VERSION)

export PARENT_GIT_REVISION:=$(TAG)

-export ENVOY_PACKAGE_URL_PATTERN?=https://github.com/higress-group/proxy/releases/download/v2.1.3/envoy-symbol-ARCH.tar.gz

+export ENVOY_PACKAGE_URL_PATTERN?=https://github.com/higress-group/proxy/releases/download/v2.1.5/envoy-symbol-ARCH.tar.gz

build-envoy: prebuild

./tools/hack/build-envoy.sh

@@ -235,8 +235,7 @@ clean-gateway: clean-istio

rm -rf external/proxy

rm -rf external/go-control-plane

rm -rf external/package/envoy.tar.gz

-rm -rf external/package/mcp-server_amd64.so

-rm -rf external/package/mcp-server_arm64.so

+rm -rf external/package/*.so

clean-env:

rm -rf out/

59

README.md


@@ -11,27 +11,33 @@

|

||||

[](https://github.com/alibaba/higress/actions)

|

||||

[](https://www.apache.org/licenses/LICENSE-2.0.html)

|

||||

|

||||

<a href="https://trendshift.io/repositories/10918" target="_blank"><img src="https://trendshift.io/api/badge/repositories/10918" alt="alibaba%2Fhigress | Trendshift" style="width: 250px; height: 55px;" width="250" height="55"/></a>

|

||||

<a href="https://trendshift.io/repositories/10918" target="_blank"><img src="https://trendshift.io/api/badge/repositories/10918" alt="alibaba%2Fhigress | Trendshift" style="width: 250px; height: 55px;" width="250" height="55"/></a> <a href="https://www.producthunt.com/posts/higress?embed=true&utm_source=badge-featured&utm_medium=badge&utm_souce=badge-higress" target="_blank"><img src="https://api.producthunt.com/widgets/embed-image/v1/featured.svg?post_id=951287&theme=light&t=1745492822283" alt="Higress - Global APIs as MCP powered by AI Gateway | Product Hunt" style="width: 250px; height: 54px;" width="250" height="54" /></a>

|

||||

|

||||

</div>

|

||||

|

||||

[**Official Site**](https://higress.io/en-us/) |

|

||||

[**Docs**](https://higress.io/en-us/docs/overview/what-is-higress) |

|

||||

[**Blog**](https://higress.io/en-us/blog) |

|

||||

[**Developer**](https://higress.io/en-us/docs/developers/developers_dev) |

|

||||

[**Higress in Cloud**](https://www.alibabacloud.com/product/microservices-engine?spm=higress-website.topbar.0.0.0)

|

||||

|

||||

[**Official Site**](https://higress.ai/en/) |

|

||||

[**MCP Server QuickStart**](https://higress.cn/en/ai/mcp-quick-start/) |

|

||||

[**Wasm Plugin Hub**](https://higress.cn/en/plugin/) |

|

||||

|

||||

<p>

|

||||

English | <a href="README_ZH.md">中文<a/> | <a href="README_JP.md">日本語<a/>

|

||||

</p>

|

||||

|

||||

## What is Higress?

|

||||

|

||||

Higress is a cloud-native API gateway based on Istio and Envoy, which can be extended with Wasm plugins written in Go/Rust/JS. It provides dozens of ready-to-use general-purpose plugins and an out-of-the-box console (try the [demo here](http://demo.higress.io/)).

|

||||

|

||||

Higress was born within Alibaba to solve the issues of Tengine reload affecting long-connection services and insufficient load balancing capabilities for gRPC/Dubbo.

|

||||

### Core Use Cases

|

||||

|

||||

Alibaba Cloud has built its cloud-native API gateway product based on Higress, providing 99.99% gateway high availability guarantee service capabilities for a large number of enterprise customers.

|

||||

Higress's AI gateway capabilities support all [mainstream model providers](https://github.com/alibaba/higress/tree/main/plugins/wasm-go/extensions/ai-proxy/provider) both domestic and international. It also supports hosting MCP (Model Context Protocol) Servers through its plugin mechanism, enabling AI Agents to easily call various tools and services. With the [openapi-to-mcp tool](https://github.com/higress-group/openapi-to-mcpserver), you can quickly convert OpenAPI specifications into remote MCP servers for hosting. Higress provides unified management for both LLM API and MCP API.

|

||||

|

||||

Higress's AI gateway capabilities support all [mainstream model providers](https://github.com/alibaba/higress/tree/main/plugins/wasm-go/extensions/ai-proxy/provider) both domestic and international, as well as self-built DeepSeek models based on vllm/ollama. Within Alibaba Cloud, it supports AI businesses such as Tongyi Qianwen APP, Bailian large model API, and machine learning PAI platform. It also serves leading AIGC enterprises (such as Zero One Infinite) and AI products (such as FastGPT).

|

||||

**🌟 Try it now at [https://mcp.higress.ai/](https://mcp.higress.ai/)** to experience Higress-hosted Remote MCP Servers firsthand:

|

||||

|

||||

|

||||

|

||||

### Enterprise Adoption

|

||||

|

||||

Higress was born within Alibaba to solve the issues of Tengine reload affecting long-connection services and insufficient load balancing capabilities for gRPC/Dubbo. Within Alibaba Cloud, Higress's AI gateway capabilities support core AI applications such as Tongyi Bailian model studio, machine learning PAI platform, and other critical AI services. Alibaba Cloud has built its cloud-native API gateway product based on Higress, providing 99.99% gateway high availability guarantee service capabilities for a large number of enterprise customers.

|

||||

|

||||

## Summary

|

||||

|

||||

@@ -60,31 +66,34 @@ Port descriptions:

|

||||

- Port 8080: Gateway HTTP protocol entry

|

||||

- Port 8443: Gateway HTTPS protocol entry

|

||||

|

||||

**All Higress Docker images use their own dedicated repository, unaffected by Docker Hub access restrictions in certain regions**

|

||||

> All Higress Docker images use Higress's own image repository and are not affected by Docker Hub rate limits.

|

||||

> In addition, the submission and updates of the images are protected by a security scanning mechanism (powered by Alibaba Cloud ACR), making them very secure for use in production environments.

|

||||

|

||||

For other installation methods such as Helm deployment under K8s, please refer to the official [Quick Start documentation](https://higress.io/en-us/docs/user/quickstart).

|

||||

|

||||

## Use Cases

|

||||

|

||||

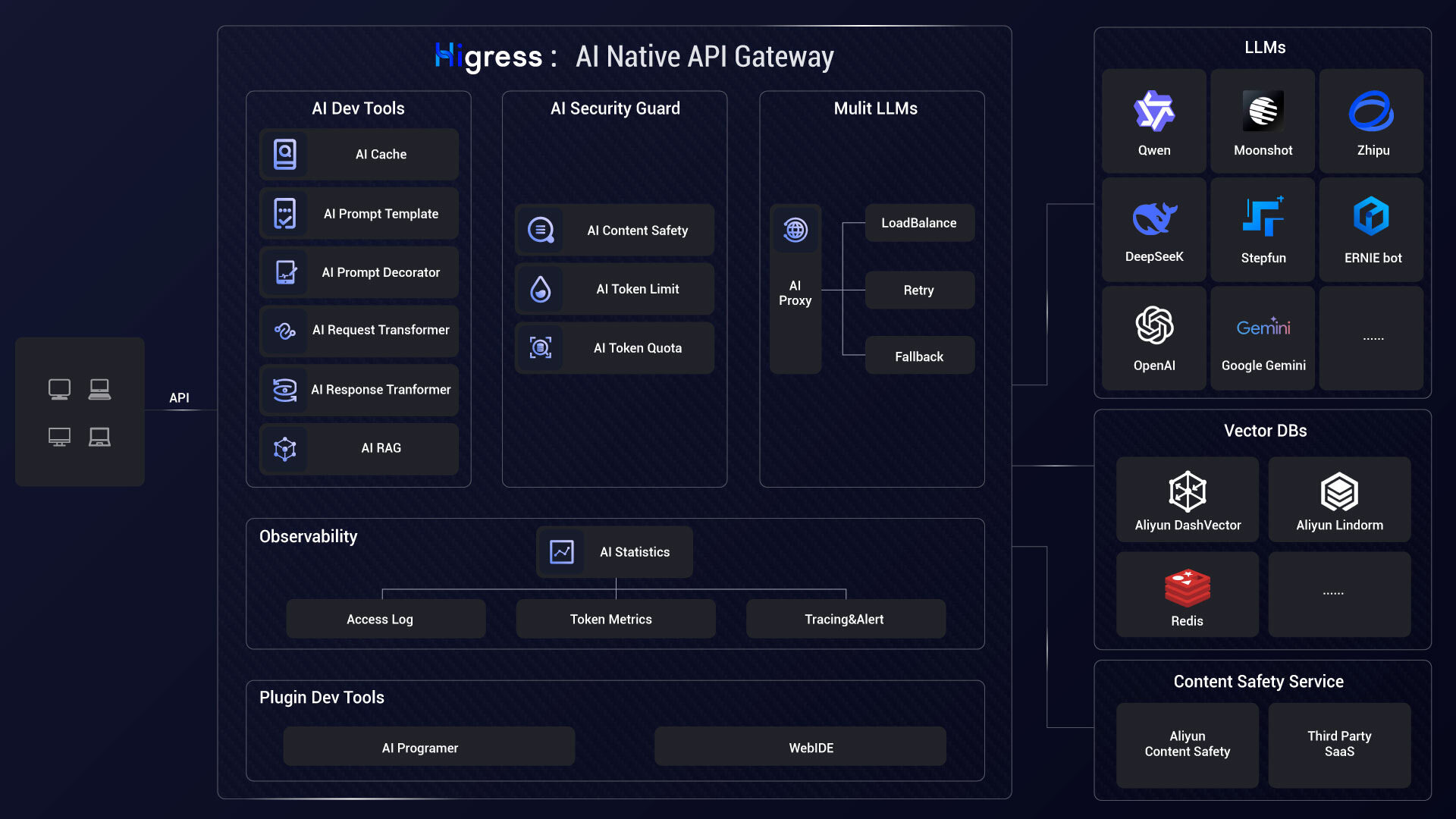

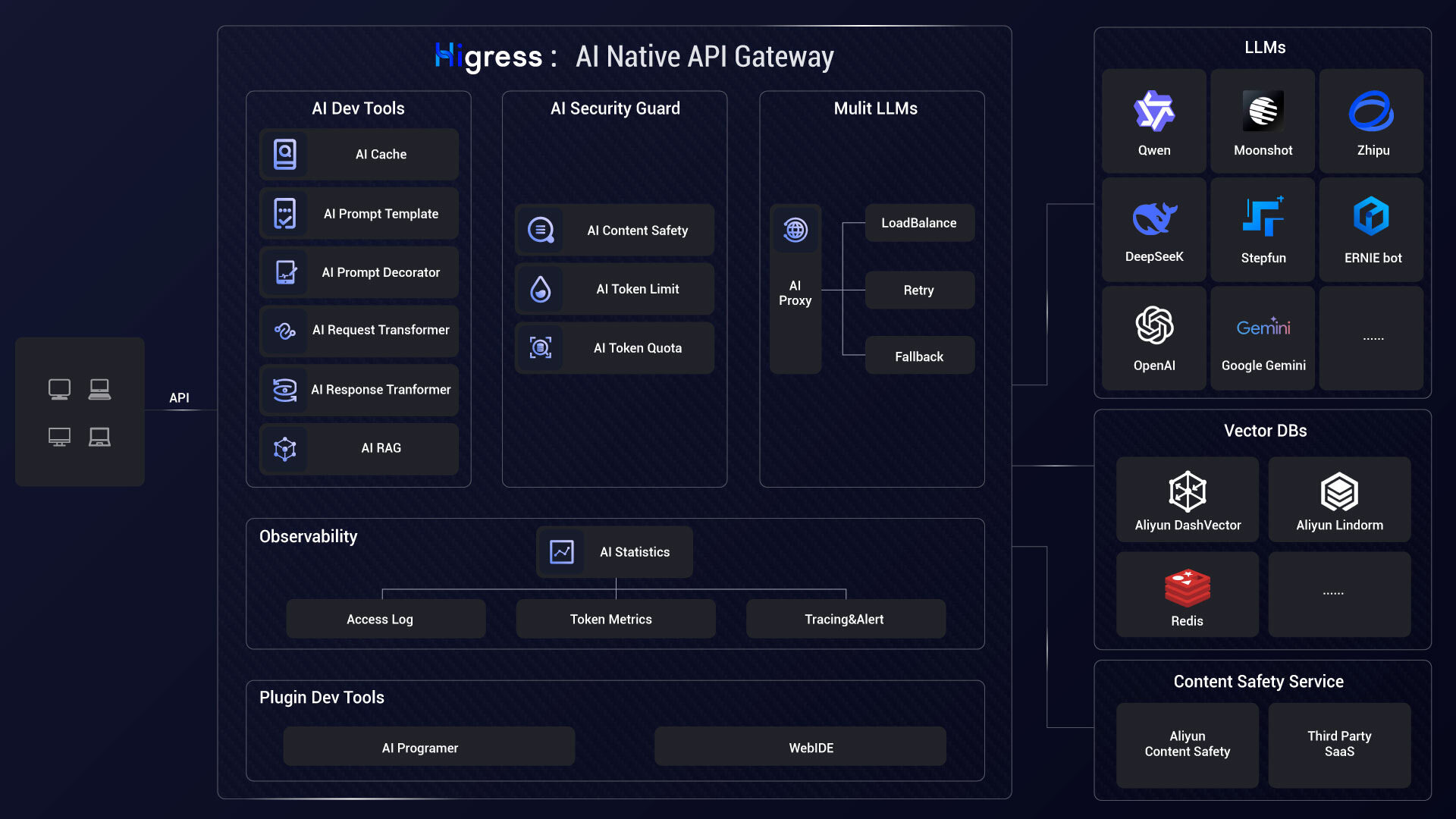

- **AI Gateway**:

|

||||

|

||||

Higress can connect to all LLM model providers both domestic and international using a unified protocol, while also providing rich AI observability, multi-model load balancing/fallback, AI token rate limiting, AI caching, and other capabilities:

|

||||

|

||||

|

||||

|

||||

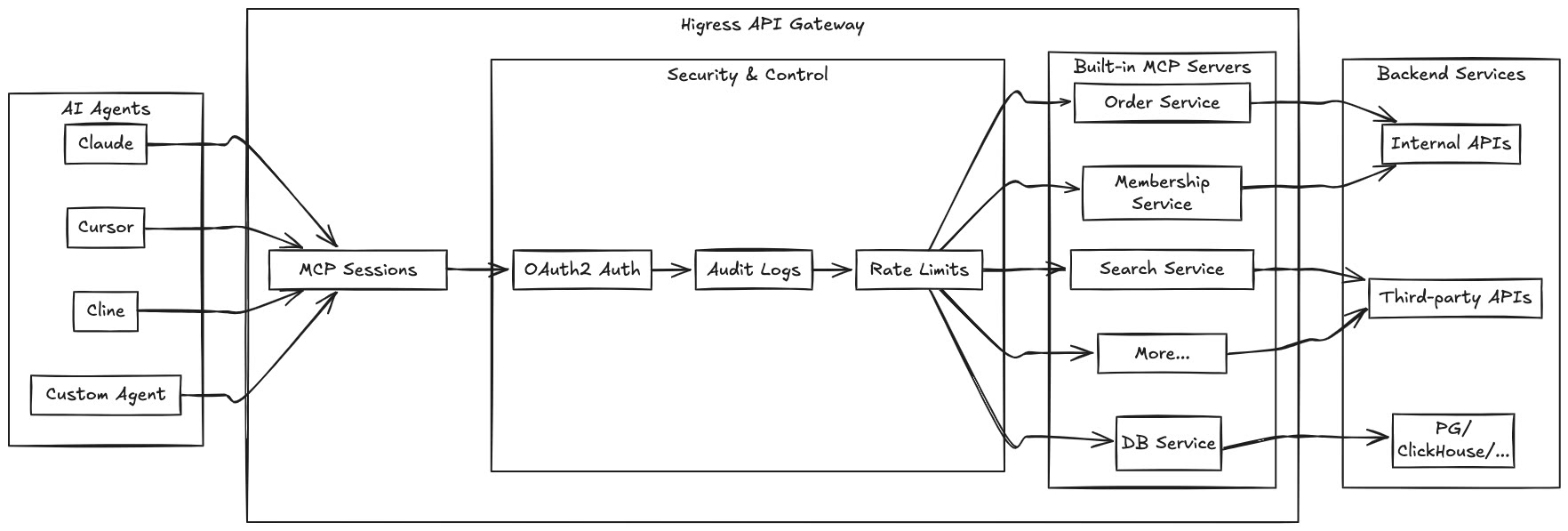

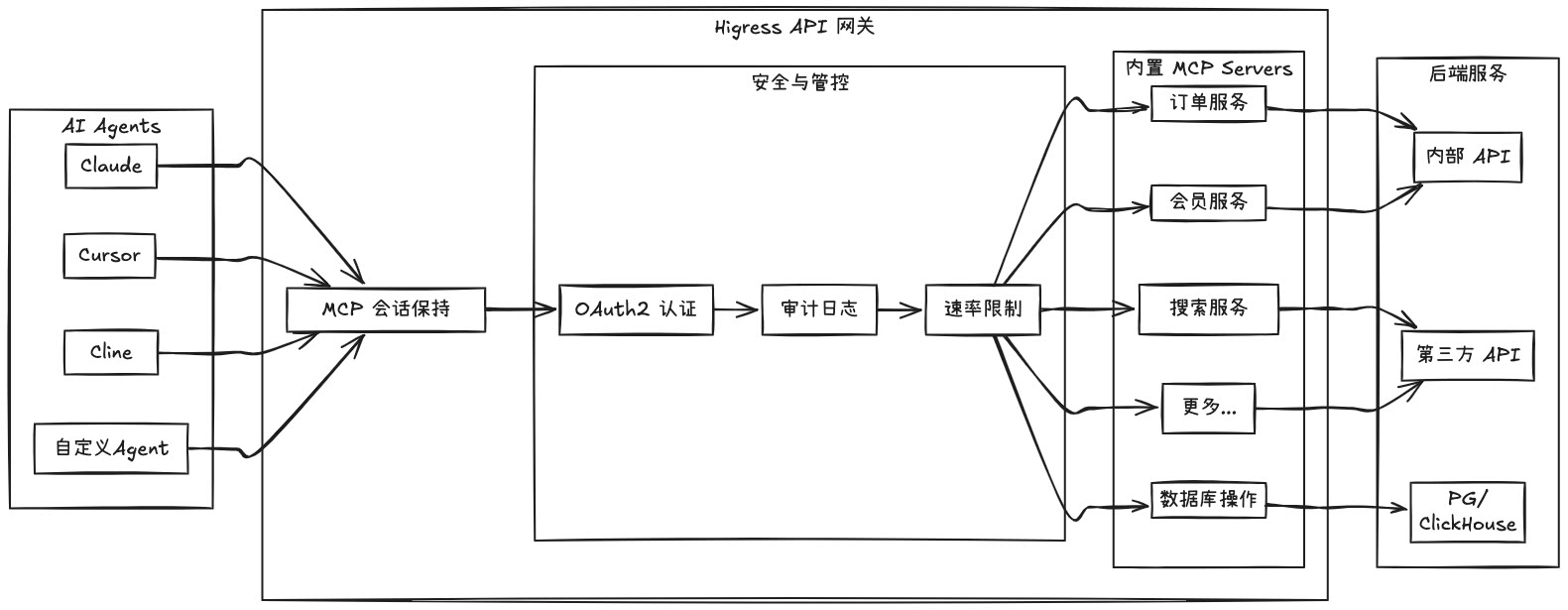

- **MCP Server Hosting**:

|

||||

|

||||

Higress, as an Envoy-based API gateway, supports hosting MCP Servers through its plugin mechanism. MCP (Model Context Protocol) is essentially an AI-friendly API that enables AI Agents to more easily call various tools and services. Higress provides unified capabilities for authentication, authorization, rate limiting, and observability for tool calls, simplifying the development and deployment of AI applications.

|

||||

Higress hosts MCP Servers through its plugin mechanism, enabling AI Agents to easily call various tools and services. With the [openapi-to-mcp tool](https://github.com/higress-group/openapi-to-mcpserver), you can quickly convert OpenAPI specifications into remote MCP servers.

|

||||

|

||||

|

||||

|

||||

By hosting MCP Servers with Higress, you can achieve:

|

||||

- Unified authentication and authorization mechanisms, ensuring the security of AI tool calls

|

||||

- Fine-grained rate limiting to prevent abuse and resource exhaustion

|

||||

- Comprehensive audit logs recording all tool call behaviors

|

||||

- Rich observability for monitoring the performance and health of tool calls

|

||||

- Simplified deployment and management through Higress's plugin mechanism for quickly adding new MCP Servers

|

||||

- Dynamic updates without disruption: Thanks to Envoy's friendly handling of long connections and Wasm plugin's dynamic update mechanism, MCP Server logic can be updated on-the-fly without any traffic disruption or connection drops

|

||||

Key benefits of hosting MCP Servers with Higress:

|

||||

- Unified authentication and authorization mechanisms

|

||||

- Fine-grained rate limiting to prevent abuse

|

||||

- Comprehensive audit logs for all tool calls

|

||||

- Rich observability for monitoring performance

|

||||

- Simplified deployment through Higress's plugin mechanism

|

||||

- Dynamic updates without disruption or connection drops

|

||||

|

||||

[Learn more...](https://higress.cn/en/ai/mcp-quick-start/?spm=36971b57.7beea2de.0.0.d85f20a94jsWGm)

|

||||

|

||||

- **AI Gateway**:

|

||||

|

||||

Higress connects to all LLM model providers using a unified protocol, with AI observability, multi-model load balancing, token rate limiting, and caching capabilities:

|

||||

|

||||

|

||||

|

||||

- **Kubernetes ingress controller**:

|

||||

|

||||

|

||||

30

README_JP.md


@@ -22,15 +22,21 @@

|

||||

</p>

|

||||

|

||||

|

||||

## Higressとは?

|

||||

|

||||

Higressは、IstioとEnvoyをベースにしたクラウドネイティブAPIゲートウェイで、Go/Rust/JSなどを使用してWasmプラグインを作成できます。数十の既製の汎用プラグインと、すぐに使用できるコンソールを提供しています(デモは[こちら](http://demo.higress.io/))。

|

||||

|

||||

Higressは、Tengineのリロードが長時間接続のビジネスに影響を与える問題や、gRPC/Dubboの負荷分散能力の不足を解決するために、Alibaba内部で誕生しました。

|

||||

### 主な使用シナリオ

|

||||

|

||||

Alibaba Cloudは、Higressを基盤にクラウドネイティブAPIゲートウェイ製品を構築し、多くの企業顧客に99.99%のゲートウェイ高可用性保証サービスを提供しています。

|

||||

HigressのAIゲートウェイ機能は、国内外のすべての[主要モデルプロバイダー](https://github.com/alibaba/higress/tree/main/plugins/wasm-go/extensions/ai-proxy/provider)をサポートし、vllm/ollamaなどに基づく自己構築DeepSeekモデルにも対応しています。また、プラグインメカニズムを通じてMCP(Model Context Protocol)サーバーをホストすることもでき、AI Agentが様々なツールやサービスを簡単に呼び出せるようにします。[openapi-to-mcpツール](https://github.com/higress-group/openapi-to-mcpserver)を使用すると、OpenAPI仕様を迅速にリモートMCPサーバーに変換してホスティングできます。HigressはLLM APIとMCP APIの統一管理を提供します。

|

||||

|

||||

Higressは、AIゲートウェイ機能を基盤に、Tongyi Qianwen APP、Bailian大規模モデルAPI、機械学習PAIプラットフォームなどのAIビジネスをサポートしています。また、国内の主要なAIGC企業(例:ZeroOne)やAI製品(例:FastGPT)にもサービスを提供しています。

|

||||

**🌟 今すぐ[https://mcp.higress.ai/](https://mcp.higress.ai/)で体験**してください。HigressがホストするリモートMCPサーバーを直接体験できます:

|

||||

|

||||

|

||||

|

||||

|

||||

### 企業での採用

|

||||

|

||||

Higressは、Tengineのリロードが長時間接続のビジネスに影響を与える問題や、gRPC/Dubboの負荷分散能力の不足を解決するために、Alibaba内部で誕生しました。Alibaba Cloud内では、HigressのAIゲートウェイ機能がTongyi Qianwen APP、Tongyi Bailian Model Studio、機械学習PAIプラットフォームなどの中核的なAIアプリケーションをサポートしています。また、国内の主要なAIGC企業(例:ZeroOne)やAI製品(例:FastGPT)にもサービスを提供しています。Alibaba Cloudは、Higressを基盤にクラウドネイティブAPIゲートウェイ製品を構築し、多くの企業顧客に99.99%のゲートウェイ高可用性保証サービスを提供しています。

|

||||

|

||||

|

||||

## 目次

|

||||

@@ -73,6 +79,20 @@ K8sでのHelmデプロイなどの他のインストール方法については

|

||||

|

||||

|

||||

|

||||

- **MCP Server ホスティング**:

|

||||

|

||||

Higressは、EnvoyベースのAPIゲートウェイとして、プラグインメカニズムを通じてMCP Serverをホストすることができます。MCP(Model Context Protocol)は本質的にAIにより親和性の高いAPIであり、AI Agentが様々なツールやサービスを簡単に呼び出せるようにします。Higressはツール呼び出しの認証、認可、レート制限、可観測性などの統一機能を提供し、AIアプリケーションの開発とデプロイを簡素化します。

|

||||

|

||||

|

||||

|

||||

Higressを使用してMCP Serverをホストすることで、以下のことが実現できます:

|

||||

- 統一された認証と認可メカニズム、AIツール呼び出しのセキュリティを確保

|

||||

- きめ細かいレート制限、乱用やリソース枯渇を防止

|

||||

- 包括的な監査ログ、すべてのツール呼び出し行動を記録

|

||||

- 豊富な可観測性、ツール呼び出しのパフォーマンスと健全性を監視

|

||||

- 簡素化されたデプロイと管理、Higressのプラグインメカニズムを通じて新しいMCP Serverを迅速に追加

|

||||

- 動的更新による無停止:Envoyの長時間接続に対する友好的なサポートとWasmプラグインの動的更新メカニズムにより、MCP Serverのロジックをリアルタイムで更新でき、トラフィックに完全に影響を与えず、接続が切断されることはありません

|

||||

|

||||

- **Kubernetes Ingressゲートウェイ**:

|

||||

|

||||

HigressはK8sクラスターのIngressエントリーポイントゲートウェイとして機能し、多くのK8s Nginx Ingressの注釈に対応しています。K8s Nginx IngressからHigressへのスムーズな移行が可能です。

|

||||

@@ -203,4 +223,4 @@ WeChat公式アカウント:

|

||||

<a href="#readme-top" style="text-decoration: none; color: #007bff; font-weight: bold;">

|

||||

↑ トップに戻る ↑

|

||||

</a>

|

||||

</p>

|

||||

</p>

|

||||

|

||||

16

README_ZH.md


@@ -11,7 +11,7 @@

|

||||

[](https://github.com/alibaba/higress/actions)

|

||||

[](https://www.apache.org/licenses/LICENSE-2.0.html)

|

||||

|

||||

<a href="https://trendshift.io/repositories/10918" target="_blank"><img src="https://trendshift.io/api/badge/repositories/10918" alt="alibaba%2Fhigress | Trendshift" style="width: 250px; height: 55px;" width="250" height="55"/></a>

|

||||

<a href="https://trendshift.io/repositories/10918" target="_blank"><img src="https://trendshift.io/api/badge/repositories/10918" alt="alibaba%2Fhigress | Trendshift" style="width: 250px; height: 55px;" width="250" height="55"/></a> <a href="https://www.producthunt.com/posts/higress?embed=true&utm_source=badge-featured&utm_medium=badge&utm_souce=badge-higress" target="_blank"><img src="https://api.producthunt.com/widgets/embed-image/v1/featured.svg?post_id=951287&theme=light&t=1745492822283" alt="Higress - Global APIs as MCP powered by AI Gateway | Product Hunt" style="width: 250px; height: 54px;" width="250" height="54" /></a>

|

||||

</div>

|

||||

|

||||

[**官网**](https://higress.cn/) |

|

||||

@@ -28,15 +28,21 @@

|

||||

</p>

|

||||

|

||||

|

||||

## Higress 是什么?

|

||||

|

||||

Higress 是一款云原生 API 网关,内核基于 Istio 和 Envoy,可以用 Go/Rust/JS 等编写 Wasm 插件,提供了数十个现成的通用插件,以及开箱即用的控制台(demo 点[这里](http://demo.higress.io/))

|

||||

|

||||

Higress 在阿里内部为解决 Tengine reload 对长连接业务有损,以及 gRPC/Dubbo 负载均衡能力不足而诞生。

|

||||

### 核心使用场景

|

||||

|

||||

阿里云基于 Higress 构建了云原生 API 网关产品,为大量企业客户提供 99.99% 的网关高可用保障服务能力。

|

||||

Higress 的 AI 网关能力支持国内外所有[主流模型供应商](https://github.com/alibaba/higress/tree/main/plugins/wasm-go/extensions/ai-proxy/provider)和基于 vllm/ollama 等自建的 DeepSeek 模型。同时,Higress 支持通过插件方式托管 MCP (Model Context Protocol) 服务器,使 AI Agent 能够更容易地调用各种工具和服务。借助 [openapi-to-mcp 工具](https://github.com/higress-group/openapi-to-mcpserver),您可以快速将 OpenAPI 规范转换为远程 MCP 服务器进行托管。Higress 提供了对 LLM API 和 MCP API 的统一管理。

|

||||

|

||||

Higress 的 AI 网关能力支持国内外所有[主流模型供应商](https://github.com/alibaba/higress/tree/main/plugins/wasm-go/extensions/ai-proxy/provider)和基于 vllm/ollama 等自建的 DeepSeek 模型;在阿里云内部支撑了通义千问 APP、百炼大模型 API、机器学习 PAI 平台等 AI 业务。同时服务国内头部的 AIGC 企业(如零一万物),以及 AI 产品(如 FastGPT)

|

||||

**🌟 立即体验 [https://mcp.higress.ai/](https://mcp.higress.ai/)** 基于 Higress 托管的远程 MCP 服务器:

|

||||

|

||||

|

||||

|

||||

|

||||

### 生产环境采用

|

||||

|

||||

Higress 在阿里内部为解决 Tengine reload 对长连接业务有损,以及 gRPC/Dubbo 负载均衡能力不足而诞生。在阿里云内部,Higress 的 AI 网关能力支撑了通义千问 APP、通义百炼模型工作室、机器学习 PAI 平台等核心 AI 应用。同时服务国内头部的 AIGC 企业(如零一万物),以及 AI 产品(如 FastGPT)。阿里云基于 Higress 构建了云原生 API 网关产品,为大量企业客户提供 99.99% 的网关高可用保障服务能力。

|

||||

|

||||

|

||||

## Summary

|

||||

|

||||

@@ -263,6 +263,14 @@ spec:

type: string

domain:

type: string

+enableMCPServer:

+type: boolean

+mcpServerBaseUrl:

+type: string

+mcpServerExportDomains:

+items:

+type: string

+type: array

nacosAccessKey:

type: string

nacosAddressServer:

@@ -26,6 +26,8 @@

package v1

import (

+_ "github.com/golang/protobuf/ptypes/struct"

+wrappers "github.com/golang/protobuf/ptypes/wrappers"

_ "google.golang.org/genproto/googleapis/api/annotations"

protoreflect "google.golang.org/protobuf/reflect/protoreflect"

protoimpl "google.golang.org/protobuf/runtime/protoimpl"

@@ -109,25 +111,28 @@ type RegistryConfig struct {

sizeCache protoimpl.SizeCache

unknownFields protoimpl.UnknownFields

-Type string `protobuf:"bytes,1,opt,name=type,proto3" json:"type,omitempty"`

-Name string `protobuf:"bytes,2,opt,name=name,proto3" json:"name,omitempty"`

-Domain string `protobuf:"bytes,3,opt,name=domain,proto3" json:"domain,omitempty"`

-Port uint32 `protobuf:"varint,4,opt,name=port,proto3" json:"port,omitempty"`

-NacosAddressServer string `protobuf:"bytes,5,opt,name=nacosAddressServer,proto3" json:"nacosAddressServer,omitempty"`

-NacosAccessKey string `protobuf:"bytes,6,opt,name=nacosAccessKey,proto3" json:"nacosAccessKey,omitempty"`

-NacosSecretKey string `protobuf:"bytes,7,opt,name=nacosSecretKey,proto3" json:"nacosSecretKey,omitempty"`

-NacosNamespaceId string `protobuf:"bytes,8,opt,name=nacosNamespaceId,proto3" json:"nacosNamespaceId,omitempty"`

-NacosNamespace string `protobuf:"bytes,9,opt,name=nacosNamespace,proto3" json:"nacosNamespace,omitempty"`

-NacosGroups []string `protobuf:"bytes,10,rep,name=nacosGroups,proto3" json:"nacosGroups,omitempty"`

-NacosRefreshInterval int64 `protobuf:"varint,11,opt,name=nacosRefreshInterval,proto3" json:"nacosRefreshInterval,omitempty"`

-ConsulNamespace string `protobuf:"bytes,12,opt,name=consulNamespace,proto3" json:"consulNamespace,omitempty"`

-ZkServicesPath []string `protobuf:"bytes,13,rep,name=zkServicesPath,proto3" json:"zkServicesPath,omitempty"`

-ConsulDatacenter string `protobuf:"bytes,14,opt,name=consulDatacenter,proto3" json:"consulDatacenter,omitempty"`

-ConsulServiceTag string `protobuf:"bytes,15,opt,name=consulServiceTag,proto3" json:"consulServiceTag,omitempty"`

-ConsulRefreshInterval int64 `protobuf:"varint,16,opt,name=consulRefreshInterval,proto3" json:"consulRefreshInterval,omitempty"`

-AuthSecretName string `protobuf:"bytes,17,opt,name=authSecretName,proto3" json:"authSecretName,omitempty"`

-Protocol string `protobuf:"bytes,18,opt,name=protocol,proto3" json:"protocol,omitempty"`

-Sni string `protobuf:"bytes,19,opt,name=sni,proto3" json:"sni,omitempty"`

+Type string `protobuf:"bytes,1,opt,name=type,proto3" json:"type,omitempty"`

+Name string `protobuf:"bytes,2,opt,name=name,proto3" json:"name,omitempty"`

+Domain string `protobuf:"bytes,3,opt,name=domain,proto3" json:"domain,omitempty"`

+Port uint32 `protobuf:"varint,4,opt,name=port,proto3" json:"port,omitempty"`

+NacosAddressServer string `protobuf:"bytes,5,opt,name=nacosAddressServer,proto3" json:"nacosAddressServer,omitempty"`

+NacosAccessKey string `protobuf:"bytes,6,opt,name=nacosAccessKey,proto3" json:"nacosAccessKey,omitempty"`

+NacosSecretKey string `protobuf:"bytes,7,opt,name=nacosSecretKey,proto3" json:"nacosSecretKey,omitempty"`

+NacosNamespaceId string `protobuf:"bytes,8,opt,name=nacosNamespaceId,proto3" json:"nacosNamespaceId,omitempty"`

+NacosNamespace string `protobuf:"bytes,9,opt,name=nacosNamespace,proto3" json:"nacosNamespace,omitempty"`

+NacosGroups []string `protobuf:"bytes,10,rep,name=nacosGroups,proto3" json:"nacosGroups,omitempty"`

+NacosRefreshInterval int64 `protobuf:"varint,11,opt,name=nacosRefreshInterval,proto3" json:"nacosRefreshInterval,omitempty"`

+ConsulNamespace string `protobuf:"bytes,12,opt,name=consulNamespace,proto3" json:"consulNamespace,omitempty"`

+ZkServicesPath []string `protobuf:"bytes,13,rep,name=zkServicesPath,proto3" json:"zkServicesPath,omitempty"`

+ConsulDatacenter string `protobuf:"bytes,14,opt,name=consulDatacenter,proto3" json:"consulDatacenter,omitempty"`

+ConsulServiceTag string `protobuf:"bytes,15,opt,name=consulServiceTag,proto3" json:"consulServiceTag,omitempty"`

+ConsulRefreshInterval int64 `protobuf:"varint,16,opt,name=consulRefreshInterval,proto3" json:"consulRefreshInterval,omitempty"`

+AuthSecretName string `protobuf:"bytes,17,opt,name=authSecretName,proto3" json:"authSecretName,omitempty"`

+Protocol string `protobuf:"bytes,18,opt,name=protocol,proto3" json:"protocol,omitempty"`

+Sni string `protobuf:"bytes,19,opt,name=sni,proto3" json:"sni,omitempty"`

+McpServerExportDomains []string `protobuf:"bytes,20,rep,name=mcpServerExportDomains,proto3" json:"mcpServerExportDomains,omitempty"`

+McpServerBaseUrl string `protobuf:"bytes,21,opt,name=mcpServerBaseUrl,proto3" json:"mcpServerBaseUrl,omitempty"`

+EnableMCPServer *wrappers.BoolValue `protobuf:"bytes,22,opt,name=enableMCPServer,proto3" json:"enableMCPServer,omitempty"`

}

func (x *RegistryConfig) Reset() {

@@ -295,6 +300,27 @@ func (x *RegistryConfig) GetSni() string {

return ""

}

+func (x *RegistryConfig) GetMcpServerExportDomains() []string {

+if x != nil {

+return x.McpServerExportDomains

+}

+return nil

+}

+func (x *RegistryConfig) GetMcpServerBaseUrl() string {

+if x != nil {

+return x.McpServerBaseUrl

+}

+return ""

+}

+func (x *RegistryConfig) GetEnableMCPServer() *wrappers.BoolValue {

+if x != nil {

+return x.EnableMCPServer

+}

+return nil

+}

var File_networking_v1_mcp_bridge_proto protoreflect.FileDescriptor

var file_networking_v1_mcp_bridge_proto_rawDesc = []byte{

|

||||
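The three getters added in the hunk above follow the usual generated-code pattern: each one is safe to call on a nil receiver and returns the field's zero value in that case. A self-contained sketch of that pattern follows. It uses assumed names throughout: `registryConfig` mirrors only the three new fields, `boolValue` is a hypothetical stand-in for `wrappers.BoolValue` so the example needs no external module, and `mcpServerEnabled` is an invented helper that treats an unset flag as false. How Higress itself interprets an unset `enableMCPServer` is not shown in this diff.

```go
package main

import "fmt"

// boolValue is a hypothetical stand-in for wrappers.BoolValue: a pointer to
// it can be nil, which lets callers tell "unset" apart from "false".
type boolValue struct{ Value bool }

// registryConfig mirrors only the three fields added in this diff.
type registryConfig struct {
	McpServerExportDomains []string
	McpServerBaseUrl       string
	EnableMCPServer        *boolValue
}

// The getters below copy the nil-receiver pattern from the generated code.
func (x *registryConfig) GetMcpServerExportDomains() []string {
	if x != nil {
		return x.McpServerExportDomains
	}
	return nil
}

func (x *registryConfig) GetMcpServerBaseUrl() string {
	if x != nil {
		return x.McpServerBaseUrl
	}
	return ""
}

func (x *registryConfig) GetEnableMCPServer() *boolValue {
	if x != nil {
		return x.EnableMCPServer
	}
	return nil
}

// mcpServerEnabled is an invented helper: it assumes an unset flag means
// "disabled", which is an illustration choice, not documented behavior.
func mcpServerEnabled(x *registryConfig) bool {
	v := x.GetEnableMCPServer()
	return v != nil && v.Value
}

func main() {
	var missing *registryConfig // nil config: getters must not panic
	fmt.Println(missing.GetMcpServerBaseUrl() == "", mcpServerEnabled(missing))

	cfg := &registryConfig{
		McpServerExportDomains: []string{"mcp.example.com"}, // placeholder domain
		McpServerBaseUrl:       "/mcp",                      // placeholder path
		EnableMCPServer:        &boolValue{Value: true},
	}
	fmt.Println(cfg.GetMcpServerExportDomains(), cfg.GetMcpServerBaseUrl(), mcpServerEnabled(cfg))
}
```

Using a wrapper message instead of a plain `bool` keeps three states available (unset, true, false), which is presumably why the new field is declared as `*wrappers.BoolValue` while the other two use plain types.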

@@ -303,61 +329,76 @@ var file_networking_v1_mcp_bridge_proto_rawDesc = []byte{

|

||||

0x12, 0x15, 0x68, 0x69, 0x67, 0x72, 0x65, 0x73, 0x73, 0x2e, 0x6e, 0x65, 0x74, 0x77, 0x6f, 0x72,

|

||||

0x6b, 0x69, 0x6e, 0x67, 0x2e, 0x76, 0x31, 0x1a, 0x1f, 0x67, 0x6f, 0x6f, 0x67, 0x6c, 0x65, 0x2f,

|

||||

0x61, 0x70, 0x69, 0x2f, 0x66, 0x69, 0x65, 0x6c, 0x64, 0x5f, 0x62, 0x65, 0x68, 0x61, 0x76, 0x69,

|

||||

0x6f, 0x72, 0x2e, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x22, 0x52, 0x0a, 0x09, 0x4d, 0x63, 0x70, 0x42,

|

||||

0x72, 0x69, 0x64, 0x67, 0x65, 0x12, 0x45, 0x0a, 0x0a, 0x72, 0x65, 0x67, 0x69, 0x73, 0x74, 0x72,

|

||||

0x69, 0x65, 0x73, 0x18, 0x01, 0x20, 0x03, 0x28, 0x0b, 0x32, 0x25, 0x2e, 0x68, 0x69, 0x67, 0x72,

|

||||

0x65, 0x73, 0x73, 0x2e, 0x6e, 0x65, 0x74, 0x77, 0x6f, 0x72, 0x6b, 0x69, 0x6e, 0x67, 0x2e, 0x76,

|

||||

0x31, 0x2e, 0x52, 0x65, 0x67, 0x69, 0x73, 0x74, 0x72, 0x79, 0x43, 0x6f, 0x6e, 0x66, 0x69, 0x67,

|

||||

0x52, 0x0a, 0x72, 0x65, 0x67, 0x69, 0x73, 0x74, 0x72, 0x69, 0x65, 0x73, 0x22, 0xd3, 0x05, 0x0a,

|

||||

0x0e, 0x52, 0x65, 0x67, 0x69, 0x73, 0x74, 0x72, 0x79, 0x43, 0x6f, 0x6e, 0x66, 0x69, 0x67, 0x12,

|

||||

0x17, 0x0a, 0x04, 0x74, 0x79, 0x70, 0x65, 0x18, 0x01, 0x20, 0x01, 0x28, 0x09, 0x42, 0x03, 0xe0,

|

||||

0x41, 0x02, 0x52, 0x04, 0x74, 0x79, 0x70, 0x65, 0x12, 0x12, 0x0a, 0x04, 0x6e, 0x61, 0x6d, 0x65,

|

||||

0x18, 0x02, 0x20, 0x01, 0x28, 0x09, 0x52, 0x04, 0x6e, 0x61, 0x6d, 0x65, 0x12, 0x1b, 0x0a, 0x06,

|

||||

0x64, 0x6f, 0x6d, 0x61, 0x69, 0x6e, 0x18, 0x03, 0x20, 0x01, 0x28, 0x09, 0x42, 0x03, 0xe0, 0x41,

|

||||

0x02, 0x52, 0x06, 0x64, 0x6f, 0x6d, 0x61, 0x69, 0x6e, 0x12, 0x17, 0x0a, 0x04, 0x70, 0x6f, 0x72,

|

||||

0x74, 0x18, 0x04, 0x20, 0x01, 0x28, 0x0d, 0x42, 0x03, 0xe0, 0x41, 0x02, 0x52, 0x04, 0x70, 0x6f,

|

||||

0x72, 0x74, 0x12, 0x2e, 0x0a, 0x12, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x41, 0x64, 0x64, 0x72, 0x65,

|

||||

0x73, 0x73, 0x53, 0x65, 0x72, 0x76, 0x65, 0x72, 0x18, 0x05, 0x20, 0x01, 0x28, 0x09, 0x52, 0x12,

|

||||

0x6e, 0x61, 0x63, 0x6f, 0x73, 0x41, 0x64, 0x64, 0x72, 0x65, 0x73, 0x73, 0x53, 0x65, 0x72, 0x76,

|

||||

0x65, 0x72, 0x12, 0x26, 0x0a, 0x0e, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x41, 0x63, 0x63, 0x65, 0x73,

|

||||

0x73, 0x4b, 0x65, 0x79, 0x18, 0x06, 0x20, 0x01, 0x28, 0x09, 0x52, 0x0e, 0x6e, 0x61, 0x63, 0x6f,

|

||||

0x73, 0x41, 0x63, 0x63, 0x65, 0x73, 0x73, 0x4b, 0x65, 0x79, 0x12, 0x26, 0x0a, 0x0e, 0x6e, 0x61,

|

||||

0x63, 0x6f, 0x73, 0x53, 0x65, 0x63, 0x72, 0x65, 0x74, 0x4b, 0x65, 0x79, 0x18, 0x07, 0x20, 0x01,

|

||||

0x28, 0x09, 0x52, 0x0e, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x53, 0x65, 0x63, 0x72, 0x65, 0x74, 0x4b,

|

||||

0x65, 0x79, 0x12, 0x2a, 0x0a, 0x10, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x4e, 0x61, 0x6d, 0x65, 0x73,

|

||||

0x70, 0x61, 0x63, 0x65, 0x49, 0x64, 0x18, 0x08, 0x20, 0x01, 0x28, 0x09, 0x52, 0x10, 0x6e, 0x61,

|

||||

0x63, 0x6f, 0x73, 0x4e, 0x61, 0x6d, 0x65, 0x73, 0x70, 0x61, 0x63, 0x65, 0x49, 0x64, 0x12, 0x26,

|

||||

0x0a, 0x0e, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x4e, 0x61, 0x6d, 0x65, 0x73, 0x70, 0x61, 0x63, 0x65,

|

||||

0x18, 0x09, 0x20, 0x01, 0x28, 0x09, 0x52, 0x0e, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x4e, 0x61, 0x6d,

|

||||

0x65, 0x73, 0x70, 0x61, 0x63, 0x65, 0x12, 0x20, 0x0a, 0x0b, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x47,

|

||||

0x72, 0x6f, 0x75, 0x70, 0x73, 0x18, 0x0a, 0x20, 0x03, 0x28, 0x09, 0x52, 0x0b, 0x6e, 0x61, 0x63,

|

||||

0x6f, 0x73, 0x47, 0x72, 0x6f, 0x75, 0x70, 0x73, 0x12, 0x32, 0x0a, 0x14, 0x6e, 0x61, 0x63, 0x6f,

|

||||

0x73, 0x52, 0x65, 0x66, 0x72, 0x65, 0x73, 0x68, 0x49, 0x6e, 0x74, 0x65, 0x72, 0x76, 0x61, 0x6c,

|

||||

0x18, 0x0b, 0x20, 0x01, 0x28, 0x03, 0x52, 0x14, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x52, 0x65, 0x66,

|

||||

0x72, 0x65, 0x73, 0x68, 0x49, 0x6e, 0x74, 0x65, 0x72, 0x76, 0x61, 0x6c, 0x12, 0x28, 0x0a, 0x0f,

|

||||

0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x4e, 0x61, 0x6d, 0x65, 0x73, 0x70, 0x61, 0x63, 0x65, 0x18,

|

||||

0x0c, 0x20, 0x01, 0x28, 0x09, 0x52, 0x0f, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x4e, 0x61, 0x6d,

|

||||

0x65, 0x73, 0x70, 0x61, 0x63, 0x65, 0x12, 0x26, 0x0a, 0x0e, 0x7a, 0x6b, 0x53, 0x65, 0x72, 0x76,

|

||||

0x69, 0x63, 0x65, 0x73, 0x50, 0x61, 0x74, 0x68, 0x18, 0x0d, 0x20, 0x03, 0x28, 0x09, 0x52, 0x0e,

|

||||

0x7a, 0x6b, 0x53, 0x65, 0x72, 0x76, 0x69, 0x63, 0x65, 0x73, 0x50, 0x61, 0x74, 0x68, 0x12, 0x2a,

|

||||

0x0a, 0x10, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x44, 0x61, 0x74, 0x61, 0x63, 0x65, 0x6e, 0x74,

|

||||

0x65, 0x72, 0x18, 0x0e, 0x20, 0x01, 0x28, 0x09, 0x52, 0x10, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c,

|

||||

0x44, 0x61, 0x74, 0x61, 0x63, 0x65, 0x6e, 0x74, 0x65, 0x72, 0x12, 0x2a, 0x0a, 0x10, 0x63, 0x6f,

|

||||

0x6e, 0x73, 0x75, 0x6c, 0x53, 0x65, 0x72, 0x76, 0x69, 0x63, 0x65, 0x54, 0x61, 0x67, 0x18, 0x0f,

|

||||

0x20, 0x01, 0x28, 0x09, 0x52, 0x10, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x53, 0x65, 0x72, 0x76,

|

||||

0x69, 0x63, 0x65, 0x54, 0x61, 0x67, 0x12, 0x34, 0x0a, 0x15, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c,

|

||||

0x52, 0x65, 0x66, 0x72, 0x65, 0x73, 0x68, 0x49, 0x6e, 0x74, 0x65, 0x72, 0x76, 0x61, 0x6c, 0x18,

|

||||

0x10, 0x20, 0x01, 0x28, 0x03, 0x52, 0x15, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x52, 0x65, 0x66,

|

||||

0x72, 0x65, 0x73, 0x68, 0x49, 0x6e, 0x74, 0x65, 0x72, 0x76, 0x61, 0x6c, 0x12, 0x26, 0x0a, 0x0e,

|

||||

0x61, 0x75, 0x74, 0x68, 0x53, 0x65, 0x63, 0x72, 0x65, 0x74, 0x4e, 0x61, 0x6d, 0x65, 0x18, 0x11,

|

||||

0x20, 0x01, 0x28, 0x09, 0x52, 0x0e, 0x61, 0x75, 0x74, 0x68, 0x53, 0x65, 0x63, 0x72, 0x65, 0x74,

|

||||

0x4e, 0x61, 0x6d, 0x65, 0x12, 0x1a, 0x0a, 0x08, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x63, 0x6f, 0x6c,

|

||||

0x18, 0x12, 0x20, 0x01, 0x28, 0x09, 0x52, 0x08, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x63, 0x6f, 0x6c,

|

||||

0x12, 0x10, 0x0a, 0x03, 0x73, 0x6e, 0x69, 0x18, 0x13, 0x20, 0x01, 0x28, 0x09, 0x52, 0x03, 0x73,

|

||||

0x6e, 0x69, 0x42, 0x2e, 0x5a, 0x2c, 0x67, 0x69, 0x74, 0x68, 0x75, 0x62, 0x2e, 0x63, 0x6f, 0x6d,

|

||||

0x2f, 0x61, 0x6c, 0x69, 0x62, 0x61, 0x62, 0x61, 0x2f, 0x68, 0x69, 0x67, 0x72, 0x65, 0x73, 0x73,

|

||||

0x2f, 0x61, 0x70, 0x69, 0x2f, 0x6e, 0x65, 0x74, 0x77, 0x6f, 0x72, 0x6b, 0x69, 0x6e, 0x67, 0x2f,

|

||||

0x76, 0x31, 0x62, 0x06, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x33,

|

||||

0x6f, 0x72, 0x2e, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x1a, 0x1e, 0x67, 0x6f, 0x6f, 0x67, 0x6c, 0x65,

|

||||

0x2f, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x62, 0x75, 0x66, 0x2f, 0x77, 0x72, 0x61, 0x70, 0x70, 0x65,

|

||||

0x72, 0x73, 0x2e, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x1a, 0x1c, 0x67, 0x6f, 0x6f, 0x67, 0x6c, 0x65,

|

||||

0x2f, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x62, 0x75, 0x66, 0x2f, 0x73, 0x74, 0x72, 0x75, 0x63, 0x74,

|

||||

0x2e, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x22, 0x52, 0x0a, 0x09, 0x4d, 0x63, 0x70, 0x42, 0x72, 0x69,

|

||||

0x64, 0x67, 0x65, 0x12, 0x45, 0x0a, 0x0a, 0x72, 0x65, 0x67, 0x69, 0x73, 0x74, 0x72, 0x69, 0x65,

|

||||

0x73, 0x18, 0x01, 0x20, 0x03, 0x28, 0x0b, 0x32, 0x25, 0x2e, 0x68, 0x69, 0x67, 0x72, 0x65, 0x73,

|

||||

0x73, 0x2e, 0x6e, 0x65, 0x74, 0x77, 0x6f, 0x72, 0x6b, 0x69, 0x6e, 0x67, 0x2e, 0x76, 0x31, 0x2e,

|

||||

0x52, 0x65, 0x67, 0x69, 0x73, 0x74, 0x72, 0x79, 0x43, 0x6f, 0x6e, 0x66, 0x69, 0x67, 0x52, 0x0a,

|

||||

0x72, 0x65, 0x67, 0x69, 0x73, 0x74, 0x72, 0x69, 0x65, 0x73, 0x22, 0xfd, 0x06, 0x0a, 0x0e, 0x52,

|

||||

0x65, 0x67, 0x69, 0x73, 0x74, 0x72, 0x79, 0x43, 0x6f, 0x6e, 0x66, 0x69, 0x67, 0x12, 0x17, 0x0a,

|

||||

0x04, 0x74, 0x79, 0x70, 0x65, 0x18, 0x01, 0x20, 0x01, 0x28, 0x09, 0x42, 0x03, 0xe0, 0x41, 0x02,

|

||||

0x52, 0x04, 0x74, 0x79, 0x70, 0x65, 0x12, 0x12, 0x0a, 0x04, 0x6e, 0x61, 0x6d, 0x65, 0x18, 0x02,

|

||||

0x20, 0x01, 0x28, 0x09, 0x52, 0x04, 0x6e, 0x61, 0x6d, 0x65, 0x12, 0x1b, 0x0a, 0x06, 0x64, 0x6f,

|

||||

0x6d, 0x61, 0x69, 0x6e, 0x18, 0x03, 0x20, 0x01, 0x28, 0x09, 0x42, 0x03, 0xe0, 0x41, 0x02, 0x52,

|

||||

0x06, 0x64, 0x6f, 0x6d, 0x61, 0x69, 0x6e, 0x12, 0x17, 0x0a, 0x04, 0x70, 0x6f, 0x72, 0x74, 0x18,

|

||||

0x04, 0x20, 0x01, 0x28, 0x0d, 0x42, 0x03, 0xe0, 0x41, 0x02, 0x52, 0x04, 0x70, 0x6f, 0x72, 0x74,

|

||||

0x12, 0x2e, 0x0a, 0x12, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x41, 0x64, 0x64, 0x72, 0x65, 0x73, 0x73,

|

||||

0x53, 0x65, 0x72, 0x76, 0x65, 0x72, 0x18, 0x05, 0x20, 0x01, 0x28, 0x09, 0x52, 0x12, 0x6e, 0x61,

|

||||

0x63, 0x6f, 0x73, 0x41, 0x64, 0x64, 0x72, 0x65, 0x73, 0x73, 0x53, 0x65, 0x72, 0x76, 0x65, 0x72,

|

||||

0x12, 0x26, 0x0a, 0x0e, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x41, 0x63, 0x63, 0x65, 0x73, 0x73, 0x4b,

|

||||

0x65, 0x79, 0x18, 0x06, 0x20, 0x01, 0x28, 0x09, 0x52, 0x0e, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x41,

|

||||

0x63, 0x63, 0x65, 0x73, 0x73, 0x4b, 0x65, 0x79, 0x12, 0x26, 0x0a, 0x0e, 0x6e, 0x61, 0x63, 0x6f,

|

||||

0x73, 0x53, 0x65, 0x63, 0x72, 0x65, 0x74, 0x4b, 0x65, 0x79, 0x18, 0x07, 0x20, 0x01, 0x28, 0x09,

|

||||

0x52, 0x0e, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x53, 0x65, 0x63, 0x72, 0x65, 0x74, 0x4b, 0x65, 0x79,

|

||||

0x12, 0x2a, 0x0a, 0x10, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x4e, 0x61, 0x6d, 0x65, 0x73, 0x70, 0x61,

|

||||

0x63, 0x65, 0x49, 0x64, 0x18, 0x08, 0x20, 0x01, 0x28, 0x09, 0x52, 0x10, 0x6e, 0x61, 0x63, 0x6f,

|

||||

0x73, 0x4e, 0x61, 0x6d, 0x65, 0x73, 0x70, 0x61, 0x63, 0x65, 0x49, 0x64, 0x12, 0x26, 0x0a, 0x0e,

|

||||

0x6e, 0x61, 0x63, 0x6f, 0x73, 0x4e, 0x61, 0x6d, 0x65, 0x73, 0x70, 0x61, 0x63, 0x65, 0x18, 0x09,

|

||||

0x20, 0x01, 0x28, 0x09, 0x52, 0x0e, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x4e, 0x61, 0x6d, 0x65, 0x73,

|

||||

0x70, 0x61, 0x63, 0x65, 0x12, 0x20, 0x0a, 0x0b, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x47, 0x72, 0x6f,

|

||||

0x75, 0x70, 0x73, 0x18, 0x0a, 0x20, 0x03, 0x28, 0x09, 0x52, 0x0b, 0x6e, 0x61, 0x63, 0x6f, 0x73,

|

||||

0x47, 0x72, 0x6f, 0x75, 0x70, 0x73, 0x12, 0x32, 0x0a, 0x14, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x52,

|

||||

0x65, 0x66, 0x72, 0x65, 0x73, 0x68, 0x49, 0x6e, 0x74, 0x65, 0x72, 0x76, 0x61, 0x6c, 0x18, 0x0b,

|

||||

0x20, 0x01, 0x28, 0x03, 0x52, 0x14, 0x6e, 0x61, 0x63, 0x6f, 0x73, 0x52, 0x65, 0x66, 0x72, 0x65,

|

||||

0x73, 0x68, 0x49, 0x6e, 0x74, 0x65, 0x72, 0x76, 0x61, 0x6c, 0x12, 0x28, 0x0a, 0x0f, 0x63, 0x6f,

|

||||

0x6e, 0x73, 0x75, 0x6c, 0x4e, 0x61, 0x6d, 0x65, 0x73, 0x70, 0x61, 0x63, 0x65, 0x18, 0x0c, 0x20,

|

||||

0x01, 0x28, 0x09, 0x52, 0x0f, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x4e, 0x61, 0x6d, 0x65, 0x73,

|

||||

0x70, 0x61, 0x63, 0x65, 0x12, 0x26, 0x0a, 0x0e, 0x7a, 0x6b, 0x53, 0x65, 0x72, 0x76, 0x69, 0x63,

|

||||

0x65, 0x73, 0x50, 0x61, 0x74, 0x68, 0x18, 0x0d, 0x20, 0x03, 0x28, 0x09, 0x52, 0x0e, 0x7a, 0x6b,

|

||||

0x53, 0x65, 0x72, 0x76, 0x69, 0x63, 0x65, 0x73, 0x50, 0x61, 0x74, 0x68, 0x12, 0x2a, 0x0a, 0x10,

|

||||

0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x44, 0x61, 0x74, 0x61, 0x63, 0x65, 0x6e, 0x74, 0x65, 0x72,

|

||||

0x18, 0x0e, 0x20, 0x01, 0x28, 0x09, 0x52, 0x10, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x44, 0x61,

|

||||

0x74, 0x61, 0x63, 0x65, 0x6e, 0x74, 0x65, 0x72, 0x12, 0x2a, 0x0a, 0x10, 0x63, 0x6f, 0x6e, 0x73,

|

||||

0x75, 0x6c, 0x53, 0x65, 0x72, 0x76, 0x69, 0x63, 0x65, 0x54, 0x61, 0x67, 0x18, 0x0f, 0x20, 0x01,

|

||||

0x28, 0x09, 0x52, 0x10, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x53, 0x65, 0x72, 0x76, 0x69, 0x63,

|

||||

0x65, 0x54, 0x61, 0x67, 0x12, 0x34, 0x0a, 0x15, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x52, 0x65,

|

||||

0x66, 0x72, 0x65, 0x73, 0x68, 0x49, 0x6e, 0x74, 0x65, 0x72, 0x76, 0x61, 0x6c, 0x18, 0x10, 0x20,

|

||||

0x01, 0x28, 0x03, 0x52, 0x15, 0x63, 0x6f, 0x6e, 0x73, 0x75, 0x6c, 0x52, 0x65, 0x66, 0x72, 0x65,

|

||||

0x73, 0x68, 0x49, 0x6e, 0x74, 0x65, 0x72, 0x76, 0x61, 0x6c, 0x12, 0x26, 0x0a, 0x0e, 0x61, 0x75,

|

||||

0x74, 0x68, 0x53, 0x65, 0x63, 0x72, 0x65, 0x74, 0x4e, 0x61, 0x6d, 0x65, 0x18, 0x11, 0x20, 0x01,

|

||||

0x28, 0x09, 0x52, 0x0e, 0x61, 0x75, 0x74, 0x68, 0x53, 0x65, 0x63, 0x72, 0x65, 0x74, 0x4e, 0x61,

|

||||

0x6d, 0x65, 0x12, 0x1a, 0x0a, 0x08, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x63, 0x6f, 0x6c, 0x18, 0x12,

|

||||

0x20, 0x01, 0x28, 0x09, 0x52, 0x08, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x63, 0x6f, 0x6c, 0x12, 0x10,

|

||||

0x0a, 0x03, 0x73, 0x6e, 0x69, 0x18, 0x13, 0x20, 0x01, 0x28, 0x09, 0x52, 0x03, 0x73, 0x6e, 0x69,

|

||||

0x12, 0x36, 0x0a, 0x16, 0x6d, 0x63, 0x70, 0x53, 0x65, 0x72, 0x76, 0x65, 0x72, 0x45, 0x78, 0x70,

|

||||

0x6f, 0x72, 0x74, 0x44, 0x6f, 0x6d, 0x61, 0x69, 0x6e, 0x73, 0x18, 0x14, 0x20, 0x03, 0x28, 0x09,

|

||||

0x52, 0x16, 0x6d, 0x63, 0x70, 0x53, 0x65, 0x72, 0x76, 0x65, 0x72, 0x45, 0x78, 0x70, 0x6f, 0x72,

|

||||

0x74, 0x44, 0x6f, 0x6d, 0x61, 0x69, 0x6e, 0x73, 0x12, 0x2a, 0x0a, 0x10, 0x6d, 0x63, 0x70, 0x53,

|

||||

0x65, 0x72, 0x76, 0x65, 0x72, 0x42, 0x61, 0x73, 0x65, 0x55, 0x72, 0x6c, 0x18, 0x15, 0x20, 0x01,

|

||||

0x28, 0x09, 0x52, 0x10, 0x6d, 0x63, 0x70, 0x53, 0x65, 0x72, 0x76, 0x65, 0x72, 0x42, 0x61, 0x73,

|

||||

0x65, 0x55, 0x72, 0x6c, 0x12, 0x44, 0x0a, 0x0f, 0x65, 0x6e, 0x61, 0x62, 0x6c, 0x65, 0x4d, 0x43,

|

||||

0x50, 0x53, 0x65, 0x72, 0x76, 0x65, 0x72, 0x18, 0x16, 0x20, 0x01, 0x28, 0x0b, 0x32, 0x1a, 0x2e,

|

||||

0x67, 0x6f, 0x6f, 0x67, 0x6c, 0x65, 0x2e, 0x70, 0x72, 0x6f, 0x74, 0x6f, 0x62, 0x75, 0x66, 0x2e,

|

||||

0x42, 0x6f, 0x6f, 0x6c, 0x56, 0x61, 0x6c, 0x75, 0x65, 0x52, 0x0f, 0x65, 0x6e, 0x61, 0x62, 0x6c,

|

||||

0x65, 0x4d, 0x43, 0x50, 0x53, 0x65, 0x72, 0x76, 0x65, 0x72, 0x42, 0x2e, 0x5a, 0x2c, 0x67, 0x69,

|

||||

0x74, 0x68, 0x75, 0x62, 0x2e, 0x63, 0x6f, 0x6d, 0x2f, 0x61, 0x6c, 0x69, 0x62, 0x61, 0x62, 0x61,

|

||||

0x2f, 0x68, 0x69, 0x67, 0x72, 0x65, 0x73, 0x73, 0x2f, 0x61, 0x70, 0x69, 0x2f, 0x6e, 0x65, 0x74,

|

||||

0x77, 0x6f, 0x72, 0x6b, 0x69, 0x6e, 0x67, 0x2f, 0x76, 0x31, 0x62, 0x06, 0x70, 0x72, 0x6f, 0x74,

|

||||

0x6f, 0x33,

|

||||

}

var (

@@ -374,16 +415,18 @@ func file_networking_v1_mcp_bridge_proto_rawDescGZIP() []byte {

 var file_networking_v1_mcp_bridge_proto_msgTypes = make([]protoimpl.MessageInfo, 2)
 var file_networking_v1_mcp_bridge_proto_goTypes = []interface{}{
-	(*McpBridge)(nil),      // 0: higress.networking.v1.McpBridge
-	(*RegistryConfig)(nil), // 1: higress.networking.v1.RegistryConfig
+	(*McpBridge)(nil),          // 0: higress.networking.v1.McpBridge
+	(*RegistryConfig)(nil),     // 1: higress.networking.v1.RegistryConfig
+	(*wrappers.BoolValue)(nil), // 2: google.protobuf.BoolValue
 }
 var file_networking_v1_mcp_bridge_proto_depIdxs = []int32{
 	1, // 0: higress.networking.v1.McpBridge.registries:type_name -> higress.networking.v1.RegistryConfig
-	1, // [1:1] is the sub-list for method output_type
-	1, // [1:1] is the sub-list for method input_type
-	1, // [1:1] is the sub-list for extension type_name
-	1, // [1:1] is the sub-list for extension extendee
-	0, // [0:1] is the sub-list for field type_name
+	2, // 1: higress.networking.v1.RegistryConfig.enableMCPServer:type_name -> google.protobuf.BoolValue
+	2, // [2:2] is the sub-list for method output_type
+	2, // [2:2] is the sub-list for method input_type
+	2, // [2:2] is the sub-list for extension type_name
+	2, // [2:2] is the sub-list for extension extendee
+	0, // [0:2] is the sub-list for field type_name
 }

 func init() { file_networking_v1_mcp_bridge_proto_init() }

@@ -15,6 +15,8 @@
 syntax = "proto3";

 import "google/api/field_behavior.proto";
+import "google/protobuf/wrappers.proto";
+import "google/protobuf/struct.proto";

 // $schema: higress.networking.v1.McpBridge
 // $title: McpBridge
@@ -66,4 +68,7 @@ message RegistryConfig {
   string authSecretName = 17;
   string protocol = 18;
   string sni = 19;
+  repeated string mcpServerExportDomains = 20;
+  string mcpServerBaseUrl = 21;
+  google.protobuf.BoolValue enableMCPServer = 22;
 }
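`enableMCPServer` is declared as `google.protobuf.BoolValue` rather than a plain `bool`, so the generated Go field is a pointer and an unset value can be told apart from an explicit `false`. The following is a minimal sketch of that distinction. It uses hypothetical stand-in types (`boolValue`, `registryConfig`) instead of the real generated ones, and it assumes, which this diff does not state, that callers want a caller-supplied default when the field is absent.

```go
package main

import "fmt"

// boolValue is a hypothetical stand-in for the generated BoolValue wrapper message.
type boolValue struct{ Value bool }

// registryConfig is a hypothetical, trimmed-down stand-in for RegistryConfig.
type registryConfig struct {
	EnableMCPServer *boolValue // nil means the field was not set at all
}

// mcpServerEnabled returns the explicit setting when one is present and
// falls back to def otherwise. The fallback policy is an assumption.
func mcpServerEnabled(rc *registryConfig, def bool) bool {
	if rc == nil || rc.EnableMCPServer == nil {
		return def
	}
	return rc.EnableMCPServer.Value
}

func main() {
	unset := &registryConfig{}
	off := &registryConfig{EnableMCPServer: &boolValue{Value: false}}
	// The unset config follows the default; the explicit false overrides it.
	fmt.Println(mcpServerEnabled(unset, true), mcpServerEnabled(off, true))
}
```

With a plain `bool` field the two configurations above would be indistinguishable, which is presumably why the wrapper type was chosen here.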

Submodule envoy/envoy updated: a2c5a07960...17cf01d9f6

39

go.mod

@@ -39,7 +39,7 @@ require (
 	github.com/tidwall/gjson v1.17.0
 	go.uber.org/atomic v1.11.0
 	go.uber.org/zap v1.27.0
-	golang.org/x/net v0.27.0
+	golang.org/x/net v0.33.0
 	google.golang.org/genproto/googleapis/api v0.0.0-20230920204549-e6e6cdab5c13
 	google.golang.org/grpc v1.59.0
 	google.golang.org/protobuf v1.33.0
@@ -71,7 +71,27 @@ require (
 	github.com/Masterminds/sprig/v3 v3.2.3 // indirect
 	github.com/alecholmes/xfccparser v0.1.0 // indirect
 	github.com/alecthomas/participle v0.4.1 // indirect
-	github.com/aliyun/alibaba-cloud-sdk-go v1.61.1704 // indirect
+	github.com/alibabacloud-go/alibabacloud-gateway-pop v0.0.6 // indirect
+	github.com/alibabacloud-go/alibabacloud-gateway-spi v0.0.5 // indirect
+	github.com/alibabacloud-go/darabonba-array v0.1.0 // indirect
+	github.com/alibabacloud-go/darabonba-encode-util v0.0.2 // indirect
+	github.com/alibabacloud-go/darabonba-map v0.0.2 // indirect
+	github.com/alibabacloud-go/darabonba-openapi/v2 v2.0.10 // indirect
+	github.com/alibabacloud-go/darabonba-signature-util v0.0.7 // indirect
+	github.com/alibabacloud-go/darabonba-string v1.0.2 // indirect
+	github.com/alibabacloud-go/debug v1.0.1 // indirect
+	github.com/alibabacloud-go/endpoint-util v1.1.0 // indirect
+	github.com/alibabacloud-go/kms-20160120/v3 v3.2.3 // indirect
+	github.com/alibabacloud-go/openapi-util v0.1.0 // indirect
+	github.com/alibabacloud-go/tea v1.2.2 // indirect
+	github.com/alibabacloud-go/tea-utils v1.4.4 // indirect
+	github.com/alibabacloud-go/tea-utils/v2 v2.0.7 // indirect
+	github.com/alibabacloud-go/tea-xml v1.1.3 // indirect
+	github.com/aliyun/alibaba-cloud-sdk-go v1.61.1800 // indirect
+	github.com/aliyun/alibabacloud-dkms-gcs-go-sdk v0.5.1 // indirect
+	github.com/aliyun/alibabacloud-dkms-transfer-go-sdk v0.1.8 // indirect
+	github.com/aliyun/aliyun-secretsmanager-client-go v1.1.5 // indirect
+	github.com/aliyun/credentials-go v1.4.3 // indirect
 	github.com/antlr/antlr4/runtime/Go/antlr/v4 v4.0.0-20230305170008-8188dc5388df // indirect
 	github.com/armon/go-metrics v0.4.1 // indirect
 	github.com/asaskevich/govalidator v0.0.0-20230301143203-a9d515a09cc2 // indirect
@@ -82,10 +102,12 @@ require (
 	github.com/census-instrumentation/opencensus-proto v0.4.1 // indirect
 	github.com/cespare/xxhash/v2 v2.2.0 // indirect
 	github.com/clbanning/mxj v1.8.4 // indirect
+	github.com/clbanning/mxj/v2 v2.5.5 // indirect
 	github.com/cncf/xds/go v0.0.0-20230607035331-e9ce68804cb4 // indirect
 	github.com/containerd/stargz-snapshotter/estargz v0.14.3 // indirect
 	github.com/coreos/go-oidc/v3 v3.6.0 // indirect
 	github.com/davecgh/go-spew v1.1.2-0.20180830191138-d8f796af33cc // indirect
+	github.com/deckarep/golang-set v1.7.1 // indirect
 	github.com/decred/dcrd/dcrec/secp256k1/v4 v4.2.0 // indirect
 	github.com/docker/cli v24.0.7+incompatible // indirect
 	github.com/docker/distribution v2.8.2+incompatible // indirect
@@ -165,6 +187,7 @@ require (
 	github.com/opencontainers/go-digest v1.0.0 // indirect
 	github.com/opencontainers/image-spec v1.1.0-rc5 // indirect
 	github.com/openshift/api v0.0.0-20230720094506-afcbe27aec7c // indirect
+	github.com/orcaman/concurrent-map v0.0.0-20210501183033-44dafcb38ecc // indirect
 	github.com/peterbourgon/diskv v2.0.1+incompatible // indirect
 	github.com/pkg/errors v0.9.1 // indirect
 	github.com/pmezard/go-difflib v1.0.1-0.20181226105442-5d4384ee4fb2 // indirect
@@ -182,6 +205,7 @@ require (
 	github.com/tetratelabs/wazero v1.7.3 // indirect
 	github.com/tidwall/match v1.1.1 // indirect
 	github.com/tidwall/pretty v1.2.0 // indirect
+	github.com/tjfoc/gmsm v1.4.1 // indirect
 	github.com/toolkits/concurrent v0.0.0-20150624120057-a4371d70e3e3 // indirect
 	github.com/vbatts/tar-split v0.11.3 // indirect
 	github.com/xlab/treeprint v1.2.0 // indirect
@@ -197,14 +221,14 @@ require (
 	go.opentelemetry.io/proto/otlp v1.0.0 // indirect
 	go.starlark.net v0.0.0-20230525235612-a134d8f9ddca // indirect
 	go.uber.org/multierr v1.11.0 // indirect
-	golang.org/x/crypto v0.25.0 // indirect
+	golang.org/x/crypto v0.31.0 // indirect
 	golang.org/x/exp v0.0.0-20231006140011-7918f672742d // indirect
 	golang.org/x/mod v0.17.0 // indirect
 	golang.org/x/oauth2 v0.13.0 // indirect
-	golang.org/x/sync v0.7.0 // indirect
-	golang.org/x/sys v0.22.0 // indirect
-	golang.org/x/term v0.22.0 // indirect
-	golang.org/x/text v0.16.0 // indirect
+	golang.org/x/sync v0.10.0 // indirect
+	golang.org/x/sys v0.28.0 // indirect
+	golang.org/x/term v0.27.0 // indirect
+	golang.org/x/text v0.21.0 // indirect
 	golang.org/x/time v0.3.0 // indirect
 	golang.org/x/tools v0.21.1-0.20240508182429-e35e4ccd0d2d // indirect
 	gomodules.xyz/jsonpatch/v2 v2.4.0 // indirect
@@ -250,5 +274,6 @@ replace github.com/caddyserver/certmagic => github.com/2456868764/certmagic v1.0

 replace (
 	github.com/dubbogo/gost => github.com/johnlanni/gost v1.11.23-0.20220713132522-0967a24036c6
+	github.com/nacos-group/nacos-sdk-go/v2 => github.com/luoxiner/nacos-sdk-go/v2 v2.2.9-60
 	golang.org/x/exp => golang.org/x/exp v0.0.0-20230713183714-613f0c0eb8a1
 )

144

go.sum

@@ -683,9 +683,68 @@ github.com/alecthomas/units v0.0.0-20151022065526-2efee857e7cf/go.mod h1:ybxpYRF

|

||||

github.com/alecthomas/units v0.0.0-20190717042225-c3de453c63f4/go.mod h1:ybxpYRFXyAe+OPACYpWeL0wqObRcbAqCMya13uyzqw0=

|

||||

github.com/alecthomas/units v0.0.0-20190924025748-f65c72e2690d/go.mod h1:rBZYJk541a8SKzHPHnH3zbiI+7dagKZ0cgpgrD7Fyho=

|

||||

github.com/alessio/shellescape v1.2.2/go.mod h1:PZAiSCk0LJaZkiCSkPv8qIobYglO3FPpyFjDCtHLS30=

|

||||

github.com/alibabacloud-go/alibabacloud-gateway-pop v0.0.6 h1:eIf+iGJxdU4U9ypaUfbtOWCsZSbTb8AUHvyPrxu6mAA=

|

||||

github.com/alibabacloud-go/alibabacloud-gateway-pop v0.0.6/go.mod h1:4EUIoxs/do24zMOGGqYVWgw0s9NtiylnJglOeEB5UJo=

|

||||

github.com/alibabacloud-go/alibabacloud-gateway-spi v0.0.4/go.mod h1:sCavSAvdzOjul4cEqeVtvlSaSScfNsTQ+46HwlTL1hc=

|

||||

github.com/alibabacloud-go/alibabacloud-gateway-spi v0.0.5 h1:zE8vH9C7JiZLNJJQ5OwjU9mSi4T9ef9u3BURT6LCLC8=

|

||||

github.com/alibabacloud-go/alibabacloud-gateway-spi v0.0.5/go.mod h1:tWnyE9AjF8J8qqLk645oUmVUnFybApTQWklQmi5tY6g=

|

||||

github.com/alibabacloud-go/darabonba-array v0.1.0 h1:vR8s7b1fWAQIjEjWnuF0JiKsCvclSRTfDzZHTYqfufY=

|

||||

github.com/alibabacloud-go/darabonba-array v0.1.0/go.mod h1:BLKxr0brnggqOJPqT09DFJ8g3fsDshapUD3C3aOEFaI=

|

||||

github.com/alibabacloud-go/darabonba-encode-util v0.0.2 h1:1uJGrbsGEVqWcWxrS9MyC2NG0Ax+GpOM5gtupki31XE=

|

||||

github.com/alibabacloud-go/darabonba-encode-util v0.0.2/go.mod h1:JiW9higWHYXm7F4PKuMgEUETNZasrDM6vqVr/Can7H8=

|

||||

github.com/alibabacloud-go/darabonba-map v0.0.2 h1:qvPnGB4+dJbJIxOOfawxzF3hzMnIpjmafa0qOTp6udc=

|

||||

github.com/alibabacloud-go/darabonba-map v0.0.2/go.mod h1:28AJaX8FOE/ym8OUFWga+MtEzBunJwQGceGQlvaPGPc=

|

||||

github.com/alibabacloud-go/darabonba-openapi/v2 v2.0.9/go.mod h1:bb+Io8Sn2RuM3/Rpme6ll86jMyFSrD1bxeV/+v61KeU=

|

||||

github.com/alibabacloud-go/darabonba-openapi/v2 v2.0.10 h1:GEYkMApgpKEVDn6z12DcH1EGYpDYRB8JxsazM4Rywak=

|

||||

github.com/alibabacloud-go/darabonba-openapi/v2 v2.0.10/go.mod h1:26a14FGhZVELuz2cc2AolvW4RHmIO3/HRwsdHhaIPDE=

|

||||

github.com/alibabacloud-go/darabonba-signature-util v0.0.7 h1:UzCnKvsjPFzApvODDNEYqBHMFt1w98wC7FOo0InLyxg=

|

||||

github.com/alibabacloud-go/darabonba-signature-util v0.0.7/go.mod h1:oUzCYV2fcCH797xKdL6BDH8ADIHlzrtKVjeRtunBNTQ=

|

||||

github.com/alibabacloud-go/darabonba-string v1.0.2 h1:E714wms5ibdzCqGeYJ9JCFywE5nDyvIXIIQbZVFkkqo=

|

||||

github.com/alibabacloud-go/darabonba-string v1.0.2/go.mod h1:93cTfV3vuPhhEwGGpKKqhVW4jLe7tDpo3LUM0i0g6mA=

|

||||

github.com/alibabacloud-go/debug v0.0.0-20190504072949-9472017b5c68/go.mod h1:6pb/Qy8c+lqua8cFpEy7g39NRRqOWc3rOwAy8m5Y2BY=

|

||||

github.com/alibabacloud-go/debug v1.0.0/go.mod h1:8gfgZCCAC3+SCzjWtY053FrOcd4/qlH6IHTI4QyICOc=

|

||||

github.com/alibabacloud-go/debug v1.0.1 h1:MsW9SmUtbb1Fnt3ieC6NNZi6aEwrXfDksD4QA6GSbPg=

|

||||

github.com/alibabacloud-go/debug v1.0.1/go.mod h1:8gfgZCCAC3+SCzjWtY053FrOcd4/qlH6IHTI4QyICOc=

|

||||

github.com/alibabacloud-go/endpoint-util v1.1.0 h1:r/4D3VSw888XGaeNpP994zDUaxdgTSHBbVfZlzf6b5Q=

|

||||

github.com/alibabacloud-go/endpoint-util v1.1.0/go.mod h1:O5FuCALmCKs2Ff7JFJMudHs0I5EBgecXXxZRyswlEjE=

|

||||

github.com/alibabacloud-go/kms-20160120/v3 v3.2.3 h1:vamGcYQFwXVqR6RWcrVTTqlIXZVsYjaA7pZbx+Xw6zw=

|

||||

github.com/alibabacloud-go/kms-20160120/v3 v3.2.3/go.mod h1:3rIyughsFDLie1ut9gQJXkWkMg/NfXBCk+OtXnPu3lw=

|

||||

github.com/alibabacloud-go/openapi-util v0.1.0 h1:0z75cIULkDrdEhkLWgi9tnLe+KhAFE/r5Pb3312/eAY=

|

||||

github.com/alibabacloud-go/openapi-util v0.1.0/go.mod h1:sQuElr4ywwFRlCCberQwKRFhRzIyG4QTP/P4y1CJ6Ws=

|

||||

github.com/alibabacloud-go/tea v1.1.0/go.mod h1:IkGyUSX4Ba1V+k4pCtJUc6jDpZLFph9QMy2VUPTwukg=

|

||||

github.com/alibabacloud-go/tea v1.1.7/go.mod h1:/tmnEaQMyb4Ky1/5D+SE1BAsa5zj/KeGOFfwYm3N/p4=

|

||||

github.com/alibabacloud-go/tea v1.1.8/go.mod h1:/tmnEaQMyb4Ky1/5D+SE1BAsa5zj/KeGOFfwYm3N/p4=

|

||||

github.com/alibabacloud-go/tea v1.1.11/go.mod h1:/tmnEaQMyb4Ky1/5D+SE1BAsa5zj/KeGOFfwYm3N/p4=

|

||||

github.com/alibabacloud-go/tea v1.1.17/go.mod h1:nXxjm6CIFkBhwW4FQkNrolwbfon8Svy6cujmKFUq98A=

|

||||

github.com/alibabacloud-go/tea v1.1.20/go.mod h1:nXxjm6CIFkBhwW4FQkNrolwbfon8Svy6cujmKFUq98A=

|

||||

github.com/alibabacloud-go/tea v1.2.1/go.mod h1:qbzof29bM/IFhLMtJPrgTGK3eauV5J2wSyEUo4OEmnA=

|

||||

github.com/alibabacloud-go/tea v1.2.2 h1:aTsR6Rl3ANWPfqeQugPglfurloyBJY85eFy7Gc1+8oU=

|

||||

github.com/alibabacloud-go/tea v1.2.2/go.mod h1:CF3vOzEMAG+bR4WOql8gc2G9H3EkH3ZLAQdpmpXMgwk=

|

||||

github.com/alibabacloud-go/tea-utils v1.3.1/go.mod h1:EI/o33aBfj3hETm4RLiAxF/ThQdSngxrpF8rKUDJjPE=

|

||||

github.com/alibabacloud-go/tea-utils v1.4.4 h1:lxCDvNCdTo9FaXKKq45+4vGETQUKNOW/qKTcX9Sk53o=

|

||||

github.com/alibabacloud-go/tea-utils v1.4.4/go.mod h1:KNcT0oXlZZxOXINnZBs6YvgOd5aYp9U67G+E3R8fcQw=

|

||||

github.com/alibabacloud-go/tea-utils/v2 v2.0.3/go.mod h1:sj1PbjPodAVTqGTA3olprfeeqqmwD0A5OQz94o9EuXQ=

|

||||

github.com/alibabacloud-go/tea-utils/v2 v2.0.5/go.mod h1:dL6vbUT35E4F4bFTHL845eUloqaerYBYPsdWR2/jhe4=

|

||||

github.com/alibabacloud-go/tea-utils/v2 v2.0.6/go.mod h1:qxn986l+q33J5VkialKMqT/TTs3E+U9MJpd001iWQ9I=

|

||||

github.com/alibabacloud-go/tea-utils/v2 v2.0.7 h1:WDx5qW3Xa5ZgJ1c8NfqJkF6w+AU5wB8835UdhPr6Ax0=

|

||||

github.com/alibabacloud-go/tea-utils/v2 v2.0.7/go.mod h1:qxn986l+q33J5VkialKMqT/TTs3E+U9MJpd001iWQ9I=

|

||||

github.com/alibabacloud-go/tea-xml v1.1.3 h1:7LYnm+JbOq2B+T/B0fHC4Ies4/FofC4zHzYtqw7dgt0=

|

||||

github.com/alibabacloud-go/tea-xml v1.1.3/go.mod h1:Rq08vgCcCAjHyRi/M7xlHKUykZCEtyBy9+DPF6GgEu8=

|

||||

github.com/aliyun/alibaba-cloud-sdk-go v1.61.18/go.mod h1:v8ESoHo4SyHmuB4b1tJqDHxfTGEciD+yhvOU/5s1Rfk=

|

||||

github.com/aliyun/alibaba-cloud-sdk-go v1.61.1704 h1:PpfENOj/vPfhhy9N2OFRjpue0hjM5XqAp2thFmkXXIk=

|

||||

github.com/aliyun/alibaba-cloud-sdk-go v1.61.1704/go.mod h1:RcDobYh8k5VP6TNybz9m++gL3ijVI5wueVr0EM10VsU=

|

||||

github.com/aliyun/alibaba-cloud-sdk-go v1.61.1800 h1:ie/8RxBOfKZWcrbYSJi2Z8uX8TcOlSMwPlEJh83OeOw=

|

||||

github.com/aliyun/alibaba-cloud-sdk-go v1.61.1800/go.mod h1:RcDobYh8k5VP6TNybz9m++gL3ijVI5wueVr0EM10VsU=

|

||||

github.com/aliyun/alibabacloud-dkms-gcs-go-sdk v0.5.1 h1:nJYyoFP+aqGKgPs9JeZgS1rWQ4NndNR0Zfhh161ZltU=

|

||||

github.com/aliyun/alibabacloud-dkms-gcs-go-sdk v0.5.1/go.mod h1:WzGOmFFTlUzXM03CJnHWMQ85UN6QGpOXZocCjwkiyOg=

|

||||

github.com/aliyun/alibabacloud-dkms-transfer-go-sdk v0.1.8 h1:QeUdR7JF7iNCvO/81EhxEr3wDwxk4YBoYZOq6E0AjHI=

|

||||

github.com/aliyun/alibabacloud-dkms-transfer-go-sdk v0.1.8/go.mod h1:xP0KIZry6i7oGPF24vhAPr1Q8vLZRcMcxtft5xDKwCU=

|

||||

github.com/aliyun/aliyun-secretsmanager-client-go v1.1.5 h1:8S0mtD101RDYa0LXwdoqgN0RxdMmmJYjq8g2mk7/lQ4=

|

||||

github.com/aliyun/aliyun-secretsmanager-client-go v1.1.5/go.mod h1:M19fxYz3gpm0ETnoKweYyYtqrtnVtrpKFpwsghbw+cQ=

|

||||

github.com/aliyun/credentials-go v1.1.2/go.mod h1:ozcZaMR5kLM7pwtCMEpVmQ242suV6qTJya2bDq4X1Tw=

|

||||

github.com/aliyun/credentials-go v1.3.1/go.mod h1:8jKYhQuDawt8x2+fusqa1Y6mPxemTsBEN04dgcAcYz0=

|

||||

github.com/aliyun/credentials-go v1.3.6/go.mod h1:1LxUuX7L5YrZUWzBrRyk0SwSdH4OmPrib8NVePL3fxM=

|

||||

github.com/aliyun/credentials-go v1.3.10/go.mod h1:Jm6d+xIgwJVLVWT561vy67ZRP4lPTQxMbEYRuT2Ti1U=

|

||||

github.com/aliyun/credentials-go v1.4.3 h1:N3iHyvHRMyOwY1+0qBLSf3hb5JFiOujVSVuEpgeGttY=

|

||||

github.com/aliyun/credentials-go v1.4.3/go.mod h1:Jm6d+xIgwJVLVWT561vy67ZRP4lPTQxMbEYRuT2Ti1U=

|

||||

github.com/andreyvit/diff v0.0.0-20170406064948-c7f18ee00883/go.mod h1:rCTlJbsFo29Kk6CurOXKm700vrz8f0KW0JNfpkRJY/8=

|

||||

github.com/andybalholm/brotli v1.0.4/go.mod h1:fO7iG3H7G2nSZ7m0zPUDn85XEX2GTukHGRSepvi9Eig=

|

||||

github.com/antihax/optional v1.0.0/go.mod h1:uupD/76wgC+ih3iEmQUL+0Ugr19nfwCT1kdvxnR2qWY=

|

||||

@@ -755,7 +814,6 @@ github.com/certifi/gocertifi v0.0.0-20191021191039-0944d244cd40/go.mod h1:sGbDF6

|

||||

github.com/certifi/gocertifi v0.0.0-20200922220541-2c3bb06c6054/go.mod h1:sGbDF6GwGcLpkNXPUTkMRoywsNa/ol15pxFe6ERfguA=

|

||||

github.com/cespare/xxhash v1.1.0/go.mod h1:XrSqR1VqqWfGrhpAt58auRo0WTKS1nRRg3ghfAqPWnc=

|

||||

github.com/cespare/xxhash/v2 v2.1.1/go.mod h1:VGX0DQ3Q6kWi7AoAeZDth3/j3BFtOZR5XLFGgcrjCOs=

|

||||

github.com/cespare/xxhash/v2 v2.1.2/go.mod h1:VGX0DQ3Q6kWi7AoAeZDth3/j3BFtOZR5XLFGgcrjCOs=

|

||||

github.com/cespare/xxhash/v2 v2.2.0 h1:DC2CZ1Ep5Y4k3ZQ899DldepgrayRUGE6BBZ/cd9Cj44=

|

||||

github.com/cespare/xxhash/v2 v2.2.0/go.mod h1:VGX0DQ3Q6kWi7AoAeZDth3/j3BFtOZR5XLFGgcrjCOs=

|

||||

github.com/chzyer/logex v1.1.11-0.20170329064859-445be9e134b2/go.mod h1:+Ywpsq7O8HXn0nuIou7OrIPyXbp3wmkHB+jjWRnGsAI=

|

||||

@@ -765,6 +823,8 @@ github.com/circonus-labs/circonus-gometrics v2.3.1+incompatible/go.mod h1:nmEj6D

|

||||

github.com/circonus-labs/circonusllhist v0.1.3/go.mod h1:kMXHVDlOchFAehlya5ePtbp5jckzBHf4XRpQvBOLI+I=

|

||||

github.com/clbanning/mxj v1.8.4 h1:HuhwZtbyvyOw+3Z1AowPkU87JkJUSv751ELWaiTpj8I=

|

||||

github.com/clbanning/mxj v1.8.4/go.mod h1:BVjHeAH+rl9rs6f+QIpeRl0tfu10SXn1pUSa5PVGJng=

|

||||

github.com/clbanning/mxj/v2 v2.5.5 h1:oT81vUeEiQQ/DcHbzSytRngP6Ky9O+L+0Bw0zSJag9E=

|

||||

github.com/clbanning/mxj/v2 v2.5.5/go.mod h1:hNiWqW14h+kc+MdF9C6/YoRfjEJoR3ou6tn/Qo+ve2s=

|

||||

github.com/clbanning/x2j v0.0.0-20191024224557-825249438eec/go.mod h1:jMjuTZXRI4dUb/I5gc9Hdhagfvm9+RyrPryS/auMzxE=

|

||||

github.com/client9/misspell v0.3.4/go.mod h1:qj6jICC3Q7zFZvVWo7KLAzC3yx5G7kyvSDkc90ppPyw=

|

||||

github.com/cncf/udpa/go v0.0.0-20191209042840-269d4d468f6f/go.mod h1:M8M6+tZqaGXZJjfX53e64911xZQV5JYwmTeXPW+k8Sc=

|

||||

@@ -813,6 +873,8 @@ github.com/davecgh/go-spew v1.1.0/go.mod h1:J7Y8YcW2NihsgmVo/mv3lAwl/skON4iLHjSs

|

||||

github.com/davecgh/go-spew v1.1.1/go.mod h1:J7Y8YcW2NihsgmVo/mv3lAwl/skON4iLHjSsI+c5H38=

|

||||

github.com/davecgh/go-spew v1.1.2-0.20180830191138-d8f796af33cc h1:U9qPSI2PIWSS1VwoXQT9A3Wy9MM3WgvqSxFWenqJduM=

|

||||

github.com/davecgh/go-spew v1.1.2-0.20180830191138-d8f796af33cc/go.mod h1:J7Y8YcW2NihsgmVo/mv3lAwl/skON4iLHjSsI+c5H38=

|

||||

github.com/deckarep/golang-set v1.7.1 h1:SCQV0S6gTtp6itiFrTqI+pfmJ4LN85S1YzhDf9rTHJQ=

|

||||

github.com/deckarep/golang-set v1.7.1/go.mod h1:93vsz/8Wt4joVM7c2AVqh+YRMiUSc14yDtF28KmMOgQ=

|

||||

github.com/decred/dcrd/crypto/blake256 v1.0.1/go.mod h1:2OfgNZ5wDpcsFmHmCK5gZTPcCXqlm2ArzUIkw9czNJo=

|

||||

github.com/decred/dcrd/dcrec/secp256k1/v4 v4.2.0 h1:8UrgZ3GkP4i/CLijOJx79Yu+etlyjdBU4sfcs2WYQMs=

|

||||

github.com/decred/dcrd/dcrec/secp256k1/v4 v4.2.0/go.mod h1:v57UDF4pDQJcEfFUCRop3lJL149eHGSe9Jvczhzjo/0=

|

||||

@@ -1162,8 +1224,9 @@ github.com/googleapis/gnostic v0.5.5/go.mod h1:7+EbHbldMins07ALC74bsA81Ovc97Dwqy

|

||||

github.com/googleapis/go-type-adapters v1.0.0/go.mod h1:zHW75FOG2aur7gAO2B+MLby+cLsWGBF62rFAi7WjWO4=

|

||||

github.com/googleapis/google-cloud-go-testing v0.0.0-20200911160855-bcd43fbb19e8/go.mod h1:dvDLG8qkwmyD9a/MJJN3XJcT3xFxOKAvTZGvuZmac9g=

|

||||

github.com/gophercloud/gophercloud v0.1.0/go.mod h1:vxM41WHh5uqHVBMZHzuwNOHh8XEoIEcSTewFxm1c5g8=

|

||||

github.com/gopherjs/gopherjs v0.0.0-20181017120253-0766667cb4d1 h1:EGx4pi6eqNxGaHF6qqu48+N2wcFQ5qg5FXgOdqsJ5d8=

|

||||

github.com/gopherjs/gopherjs v0.0.0-20181017120253-0766667cb4d1/go.mod h1:wJfORRmW1u3UXTncJ5qlYoELFm8eSnnEO6hX4iZ3EWY=

|

||||

github.com/gopherjs/gopherjs v0.0.0-20200217142428-fce0ec30dd00 h1:l5lAOZEym3oK3SQ2HBHWsJUfbNBiTXJDeW2QDxw9AQ0=

|

||||

github.com/gopherjs/gopherjs v0.0.0-20200217142428-fce0ec30dd00/go.mod h1:wJfORRmW1u3UXTncJ5qlYoELFm8eSnnEO6hX4iZ3EWY=

|

||||

github.com/gorilla/context v1.1.1/go.mod h1:kBGZzfjB9CEq2AlWe17Uuf7NDRt0dE0s8S51q0aT7Yg=

|

||||

github.com/gorilla/handlers v0.0.0-20150720190736-60c7bfde3e33/go.mod h1:Qkdc/uu4tH4g6mTK6auzZ766c4CA0Ng8+o/OAirnOIQ=

|

||||

github.com/gorilla/mux v1.6.2/go.mod h1:1lud6UwP+6orDFRuTfBEV8e9/aOM/c4fVVCaMa2zaAs=

|

||||

@@ -1371,6 +1434,8 @@ github.com/liggitt/tabwriter v0.0.0-20181228230101-89fcab3d43de h1:9TO3cAIGXtEhn

|

||||

github.com/liggitt/tabwriter v0.0.0-20181228230101-89fcab3d43de/go.mod h1:zAbeS9B/r2mtpb6U+EI2rYA5OAXxsYw6wTamcNW+zcE=

|

||||

github.com/lightstep/lightstep-tracer-common/golang/gogo v0.0.0-20190605223551-bc2310a04743/go.mod h1:qklhhLq1aX+mtWk9cPHPzaBjWImj5ULL6C7HFJtXQMM=

|

||||

github.com/lightstep/lightstep-tracer-go v0.18.1/go.mod h1:jlF1pusYV4pidLvZ+XD0UBX0ZE6WURAspgAczcDHrL4=

|

||||

github.com/luoxiner/nacos-sdk-go/v2 v2.2.9-60 h1:FA/azfz2nSkMc1XR8LeqhcAiA/2/sOMcyBGYCTUc+Cs=

|

||||

github.com/luoxiner/nacos-sdk-go/v2 v2.2.9-60/go.mod h1:9FKXl6FqOiVmm72i8kADtbeK71egyG9y3uRDBg41tpQ=

|

||||

github.com/lyft/protoc-gen-star v0.6.1/go.mod h1:TGAoBVkt8w7MPG72TrKIu85MIdXwDuzJYeZuUPFPNwA=

|

||||

github.com/lyft/protoc-gen-star/v2 v2.0.1/go.mod h1:RcCdONR2ScXaYnQC5tUzxzlpA3WVYF7/opLeUgcQs/o=

|

||||

github.com/lyft/protoc-gen-star/v2 v2.0.3/go.mod h1:amey7yeodaJhXSbf/TlLvWiqQfLOSpEk//mLlc+axEk=

|

||||

@@ -1460,8 +1525,6 @@ github.com/mwitkow/go-conntrack v0.0.0-20190716064945-2f068394615f/go.mod h1:qRW

|

||||

github.com/mxk/go-flowrate v0.0.0-20140419014527-cca7078d478f/go.mod h1:ZdcZmHo+o7JKHSa8/e818NopupXU1YMK5fe1lsApnBw=

|

||||

github.com/nacos-group/nacos-sdk-go v1.0.8 h1:8pEm05Cdav9sQgJSv5kyvlgfz0SzFUUGI3pWX6SiSnM=

|

||||

github.com/nacos-group/nacos-sdk-go v1.0.8/go.mod h1:hlAPn3UdzlxIlSILAyOXKxjFSvDJ9oLzTJ9hLAK1KzA=

|

||||

github.com/nacos-group/nacos-sdk-go/v2 v2.1.2 h1:A8GV6j0rw80I6tTKSav/pTpEgNECYXeFvZCsiLBWGnQ=

|

||||

github.com/nacos-group/nacos-sdk-go/v2 v2.1.2/go.mod h1:ys/1adWeKXXzbNWfRNbaFlX/t6HVLWdpsNDvmoWTw0g=

|

||||

github.com/nats-io/jwt v0.3.0/go.mod h1:fRYCDE99xlTsqUzISS1Bi75UBJ6ljOJQOAAu5VglpSg=

|

||||

github.com/nats-io/jwt v0.3.2/go.mod h1:/euKqTS1ZD+zzjYrY7pseZrTtWQSjujC7xjPc8wL6eU=

|

||||

github.com/nats-io/nats-server/v2 v2.1.2/go.mod h1:Afk+wRZqkMQs/p45uXdrVLuab3gwv3Z8C4HTBu8GD/k=

|

||||

@@ -1517,6 +1580,8 @@ github.com/openzipkin/zipkin-go v0.1.6/go.mod h1:QgAqvLzwWbR/WpD4A3cGpPtJrZXNIiJ

|

||||

github.com/openzipkin/zipkin-go v0.2.1/go.mod h1:NaW6tEwdmWMaCDZzg8sh+IBNOxHMPnhQw8ySjnjRyN4=

|

||||

github.com/openzipkin/zipkin-go v0.2.2/go.mod h1:NaW6tEwdmWMaCDZzg8sh+IBNOxHMPnhQw8ySjnjRyN4=

|

||||

github.com/openzipkin/zipkin-go v0.3.0/go.mod h1:4c3sLeE8xjNqehmF5RpAFLPLJxXscc0R4l6Zg0P1tTQ=

|

||||

github.com/orcaman/concurrent-map v0.0.0-20210501183033-44dafcb38ecc h1:Ak86L+yDSOzKFa7WM5bf5itSOo1e3Xh8bm5YCMUXIjQ=

|

||||

github.com/orcaman/concurrent-map v0.0.0-20210501183033-44dafcb38ecc/go.mod h1:Lu3tH6HLW3feq74c2GC+jIMS/K2CFcDWnWD9XkenwhI=

|

||||

github.com/pact-foundation/pact-go v1.0.4/go.mod h1:uExwJY4kCzNPcHRj+hCR/HBbOOIwwtUjcrb0b5/5kLM=

|

||||

github.com/pascaldekloe/goe v0.0.0-20180627143212-57f6aae5913c/go.mod h1:lzWF7FIEvWOWxwDKqyGYQf6ZUaNfKdP144TG7ZOy1lc=

|

||||

github.com/pascaldekloe/goe v0.1.0 h1:cBOtyMzM9HTpWjXfbbunk26uA6nG3a8n06Wieeh0MwY=

|

||||

@@ -1560,7 +1625,6 @@ github.com/prometheus/client_golang v1.5.1/go.mod h1:e9GMxYsXl05ICDXkRhurwBS4Q3O

|

||||

github.com/prometheus/client_golang v1.7.1/go.mod h1:PY5Wy2awLA44sXw4AOSfFBetzPP4j5+D6mVACh+pe2M=

|

||||

github.com/prometheus/client_golang v1.9.0/go.mod h1:FqZLKOZnGdFAhOK4nqGHa7D66IdsO+O441Eve7ptJDU=

|

||||

github.com/prometheus/client_golang v1.11.0/go.mod h1:Z6t4BnS23TR94PD6BsDNk8yVqroYurpAkEiz0P2BEV0=

|

||||

github.com/prometheus/client_golang v1.12.2/go.mod h1:3Z9XVyYiZYEO+YQWt3RD2R3jrbd179Rt297l4aS6nDY=

|

||||

github.com/prometheus/client_golang v1.17.0 h1:rl2sfwZMtSthVU752MqfjQozy7blglC+1SOtjMAMh+Q=

|

||||

github.com/prometheus/client_golang v1.17.0/go.mod h1:VeL+gMmOAxkS2IqfCq0ZmHSL+LjWfWDUmp1mBz9JgUY=

|

||||

github.com/prometheus/client_model v0.0.0-20171117100541-99fa1f4be8e5/go.mod h1:MbSGuTsp3dbXC40dX6PRTWyKYBIrTGTE9sqQNg2J8bo=

|

||||

@@ -1593,7 +1657,6 @@ github.com/prometheus/procfs v0.0.11/go.mod h1:lV6e/gmhEcM9IjHGsFOCxxuZ+z1YqCvr4

|

||||

github.com/prometheus/procfs v0.1.3/go.mod h1:lV6e/gmhEcM9IjHGsFOCxxuZ+z1YqCvr4OA4YeYWdaU=

|

||||

github.com/prometheus/procfs v0.2.0/go.mod h1:lV6e/gmhEcM9IjHGsFOCxxuZ+z1YqCvr4OA4YeYWdaU=

|

||||

github.com/prometheus/procfs v0.6.0/go.mod h1:cz+aTbrPOrUb4q7XlbU9ygM+/jj0fzG6c1xBZuNvfVA=

|

||||