mirror of

https://github.com/alibaba/higress.git

synced 2026-02-26 05:30:50 +08:00

Compare commits

42 Commits

v1.4.2

...

release-1.

| Author | SHA1 | Date | |
|---|---|---|---|
| | a2c2d1d521 | | |
| | a5a28aebf6 | | |
| | 1c10f36369 | | |
| | 7054f01a36 | | |
| | 895f17f8d8 | | |
| | 29fcd330d5 | | |
| | 0e58042fa6 | | |
| | bdbfad8a8a | | |
| | 4307f88645 | | |
| | 25b085cb5e | | |
| | dcea483c61 | | |
| | 8fa1224cba | | |
| | 8f7c10ee5f | | |
| | 5a854b990b | | |
| | dd11248e47 | | |
| | ba98f3a7ad | | |
| | d31c978ed3 | | |
| | daa374d9a4 | | |
| | 6b9dabb489 | | |
| | 6f04404edd | | |
| | 04a9104062 | | |
| | 564f8c770a | | |
| | fec2e9dfc9 | | |
| | dc4ddb52ee | | |
| | 6f221ead53 | | |
| | 53f8410843 | | |
| | a17ac9e4c6 | | |
| | 5e95f6f057 | | |
| | 94f29e56c0 | | |
| | 870157c576 | | |
| | c78ef7011d | | |
| | dc0dcaaaee | | |
| | 34f5722d93 | | |
| | 55fdddee2f | | |
| | 980ffde244 | | |
| | 0a578c2a04 | | |
| | 536a3069a8 | | |
| | 08c64ed467 | | |
| | cc74c0da93 | | |
| | 210b97b06b | | |
| | bccfbde621 | | |
| | f1c6e78047 | | |

5

.github/workflows/build-and-test-plugin.yaml

vendored


@@ -49,6 +49,11 @@ jobs:

|

||||

with:

|

||||

go-version: 1.19

|

||||

|

||||

- name: Setup Rust

|

||||

uses: actions-rs/toolchain@v1

|

||||

with:

|

||||

toolchain: stable

|

||||

if: matrix.wasmPluginType == 'RUST'

|

||||

- name: Setup Golang Caches

|

||||

uses: actions/cache@v4

|

||||

with:

|

||||

|

||||

@@ -177,8 +177,8 @@ install: pre-install

|

||||

cd helm/higress; helm dependency build

|

||||

helm install higress helm/higress -n higress-system --create-namespace --set 'global.local=true'

|

||||

|

||||

ENVOY_LATEST_IMAGE_TAG ?= sha-63539ca

|

||||

ISTIO_LATEST_IMAGE_TAG ?= sha-63539ca

|

||||

ENVOY_LATEST_IMAGE_TAG ?= sha-59acb61

|

||||

ISTIO_LATEST_IMAGE_TAG ?= sha-59acb61

|

||||

|

||||

install-dev: pre-install

|

||||

helm install higress helm/core -n higress-system --create-namespace --set 'controller.tag=$(TAG)' --set 'gateway.replicas=1' --set 'pilot.tag=$(ISTIO_LATEST_IMAGE_TAG)' --set 'gateway.tag=$(ENVOY_LATEST_IMAGE_TAG)' --set 'global.local=true'

|

||||

|

||||

87

README.md


@@ -1,17 +1,18 @@

<h1 align="center">
<img src="https://img.alicdn.com/imgextra/i2/O1CN01NwxLDd20nxfGBjxmZ_!!6000000006895-2-tps-960-290.png" alt="Higress" width="240" height="72.5">
<br>
Cloud Native API Gateway
AI Gateway
</h1>
<h4 align="center"> AI Native API Gateway </h4>

[](https://github.com/alibaba/higress/actions)
[](https://www.apache.org/licenses/LICENSE-2.0.html)

[**Official Site**](https://higress.io/) |
[**Docs**](https://higress.io/zh-cn/docs/overview/what-is-higress) |
[**Blog**](https://higress.io/zh-cn/blog) |
[**Developer Guide**](https://higress.io/zh-cn/docs/developers/developers_dev) |
[**Higress Enterprise**](https://www.aliyun.com/product/aliware/mse?spm=higress-website.topbar.0.0.0)
[**Docs**](https://higress.io/docs/latest/user/quickstart/) |
[**Blog**](https://higress.io/blog/) |
[**Developer Guide**](https://higress.io/docs/latest/dev/architecture/) |
[**AI Plugins**](https://higress.io/plugin/)


<p>

@@ -19,21 +20,54 @@

|

||||

</p>

|

||||

|

||||

|

||||

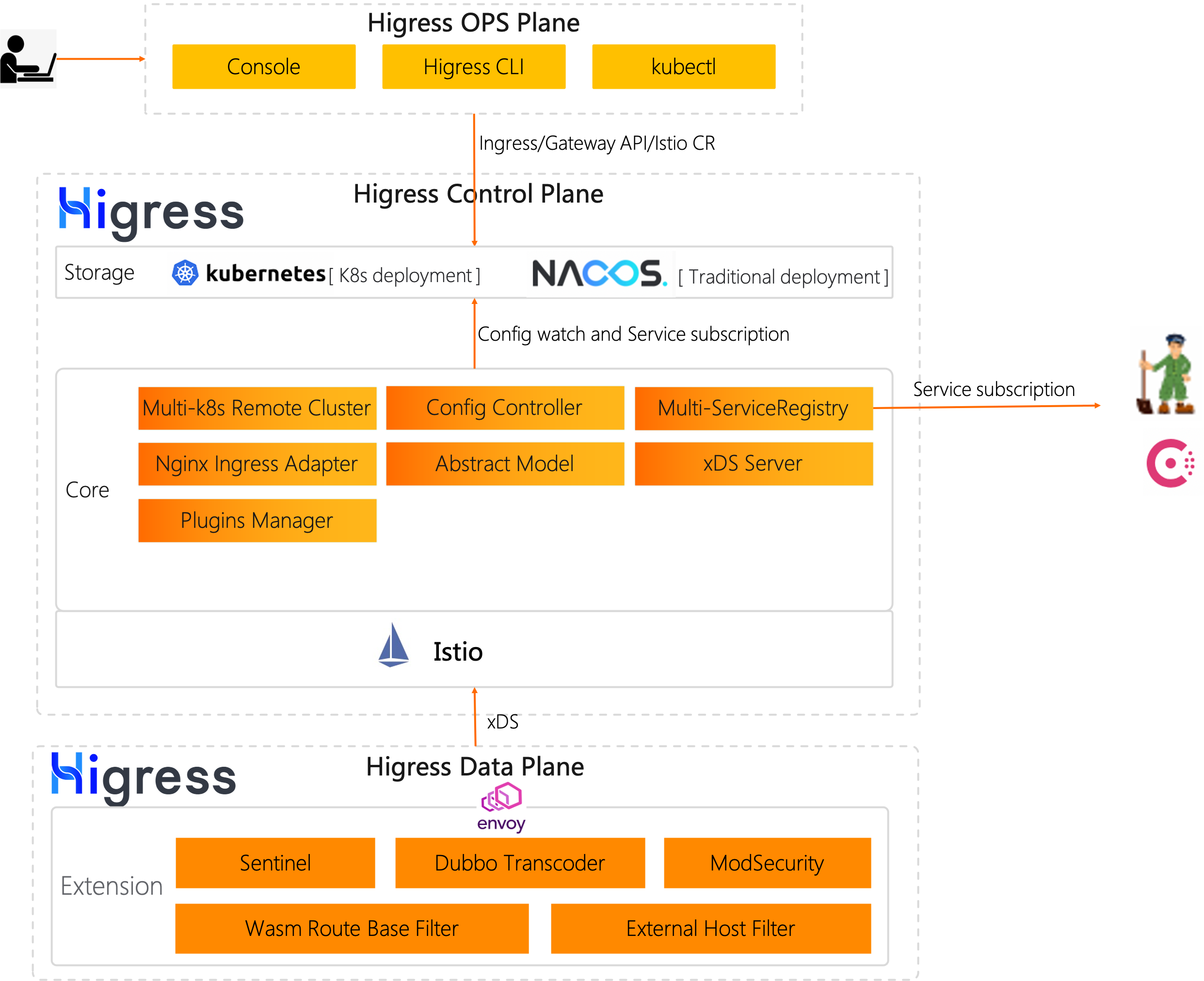

Higress 是基于阿里内部两年多的 Envoy Gateway 实践沉淀,以开源 [Istio](https://github.com/istio/istio) 与 [Envoy](https://github.com/envoyproxy/envoy) 为核心构建的云原生 API 网关。Higress 实现了安全防护网关、流量网关、微服务网关三层网关合一,可以显著降低网关的部署和运维成本。

|

||||

Higress 是基于阿里内部多年的 Envoy Gateway 实践沉淀,以开源 [Istio](https://github.com/istio/istio) 与 [Envoy](https://github.com/envoyproxy/envoy) 为核心构建的云原生 API 网关。

|

||||

|

||||

Higress 在阿里内部作为 AI 网关,承载了通义千问 APP、百炼大模型 API、机器学习 PAI 平台等 AI 业务的流量。

|

||||

|

||||

Higress 能够用统一的协议对接国内外所有 LLM 模型厂商,同时具备丰富的 AI 可观测、多模型负载均衡/fallback、AI token 流控、AI 缓存等能力:

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

## Summary

|

||||

|

||||

|

||||

- [**快速开始**](#快速开始)

|

||||

- [**功能展示**](#功能展示)

|

||||

- [**使用场景**](#使用场景)

|

||||

- [**核心优势**](#核心优势)

|

||||

- [**Quick Start**](https://higress.io/zh-cn/docs/user/quickstart)

|

||||

- [**社区**](#社区)

|

||||

|

||||

## 快速开始

|

||||

|

||||

Higress 只需 Docker 即可启动,方便个人开发者在本地搭建学习,或者用于搭建简易站点:

|

||||

|

||||

```bash

|

||||

# 创建一个工作目录

|

||||

mkdir higress; cd higress

|

||||

# 启动 higress,配置文件会写到工作目录下

|

||||

docker run -d --rm --name higress-ai -v ${PWD}:/data \

|

||||

-p 8001:8001 -p 8080:8080 -p 8443:8443 \

|

||||

higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/all-in-one:latest

|

||||

```

|

||||

|

||||

监听端口说明如下:

|

||||

|

||||

- 8001 端口:Higress UI 控制台入口

|

||||

- 8080 端口:网关 HTTP 协议入口

|

||||

- 8443 端口:网关 HTTPS 协议入口

|

||||

|

||||

**Higress 的所有 Docker 镜像都一直使用自己独享的仓库,不受 Docker Hub 境内不可访问的影响**

|

||||

|

||||

K8s 下使用 Helm 部署等其他安装方式可以参考官网 [Quick Start 文档](https://higress.io/docs/latest/user/quickstart/)。

|

||||

|

||||

|

||||

## 使用场景

|

||||

|

||||

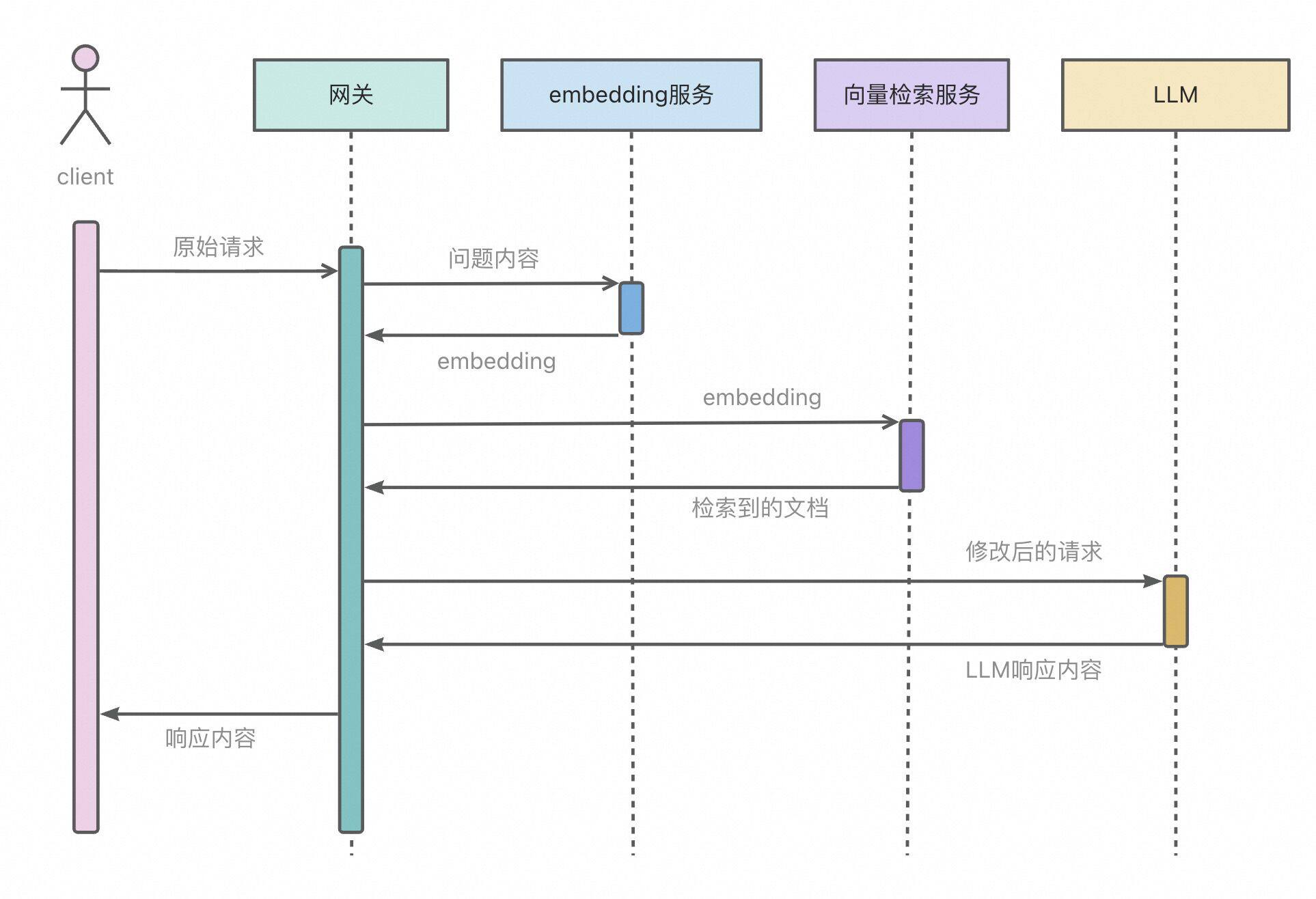

- **AI 网关**:

|

||||

|

||||

Higress 提供了一站式的 AI 插件集,可以增强依赖 AI 能力业务的稳定性、灵活性、可观测性,使得业务与 AI 的集成更加便捷和高效。

|

||||

|

||||

- **Kubernetes Ingress 网关**:

|

||||

|

||||

Higress 可以作为 K8s 集群的 Ingress 入口网关, 并且兼容了大量 K8s Nginx Ingress 的注解,可以从 K8s Nginx Ingress 快速平滑迁移到 Higress。

|

||||

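@@ -56,27 +90,36 @@ Higress is distilled from more than two years of Envoy Gateway practice inside Alibaba, built on open-source

Derived from an internal Alibaba product proven in production for more than two years, it supports large-scale scenarios with hundreds of thousands of requests per second.

Completely eliminates traffic jitter caused by reloads; configuration changes take effect within milliseconds and are transparent to services.

- **Smooth Evolution**
Completely eliminates traffic jitter caused by Nginx reloads; configuration changes take effect within milliseconds and are transparent to services. This is especially friendly to long-lived-connection scenarios such as AI workloads.

Supports multiple registries such as Nacos, Zookeeper, and Eureka, can perform service discovery without relying on K8s Service, and supports smooth evolution from non-container architectures to cloud-native architectures.
- **Streaming Processing**

Supports smooth migration from Nginx Ingress Controller, a smooth transition to Gateway API, and smooth evolution of the service architecture to ServiceMesh.
Supports truly full streaming of request/response bodies; Wasm plugins can easily customize the handling of messages in streaming protocols such as SSE (Server-Sent Events).

- **Broad Compatibility**

Compatible with more than 80% of Nginx Ingress Annotation use cases, and provides richer Higress Annotations.

Compatible with Ingress API, Gateway API, and Istio API; multiple CRDs can be combined for fine-grained traffic management.

In high-bandwidth scenarios such as AI workloads, this significantly reduces memory overhead.

- **Easy to Extend**

Provides three plugin extension mechanisms (Wasm, Lua, and out-of-process), supports writing plugins in multiple languages, and applies them at global, domain, or route granularity.
Provides a rich official plugin library covering common functions such as AI, traffic management, and security protection, meeting the needs of more than 90% of business scenarios.

Focuses on Wasm plugin extension: sandbox isolation ensures memory safety, multiple programming languages are supported, and plugin versions can be upgraded independently, which allows hot updates of gateway logic without traffic loss.

- **Secure and Easy to Use**

Based on the Ingress API and Gateway API standards, with an out-of-the-box UI console; the WAF protection plugin and the IP/Cookie CC protection plugin also work out of the box.

Supports Let's Encrypt for automatic issuance and renewal of free certificates, and can be deployed without K8s: a single Docker command starts it, which is convenient for individual developers.

Plugins support hot updates; changing plugin logic or configuration causes no traffic loss.

## Feature Showcase

### AI Gateway Demo

[Migrate from OpenAI to other large models in 30 seconds
](https://www.bilibili.com/video/BV1dT421a7w7/?spm_id_from=333.788.recommend_more_video.14)


### Higress UI Console

- **Rich Observability**
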

@@ -301,6 +301,7 @@ type MatchRule struct {

|

||||

Domain []string `protobuf:"bytes,2,rep,name=domain,proto3" json:"domain,omitempty"`

|

||||

Config *types.Struct `protobuf:"bytes,3,opt,name=config,proto3" json:"config,omitempty"`

|

||||

ConfigDisable bool `protobuf:"varint,4,opt,name=config_disable,json=configDisable,proto3" json:"config_disable,omitempty"`

|

||||

Service []string `protobuf:"bytes,5,rep,name=service,proto3" json:"service,omitempty"`

|

||||

XXX_NoUnkeyedLiteral struct{} `json:"-"`

|

||||

XXX_unrecognized []byte `json:"-"`

|

||||

XXX_sizecache int32 `json:"-"`

|

||||

@@ -367,6 +368,13 @@ func (m *MatchRule) GetConfigDisable() bool {

|

||||

return false

|

||||

}

|

||||

|

||||

func (m *MatchRule) GetService() []string {

|

||||

if m != nil {

|

||||

return m.Service

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||

func init() {

|

||||

proto.RegisterEnum("higress.extensions.v1alpha1.PluginPhase", PluginPhase_name, PluginPhase_value)

|

||||

proto.RegisterEnum("higress.extensions.v1alpha1.PullPolicy", PullPolicy_name, PullPolicy_value)

|

||||

@@ -377,46 +385,47 @@ func init() {

|

||||

func init() { proto.RegisterFile("extensions/v1alpha1/wasm.proto", fileDescriptor_4d60b240916c4e18) }

|

||||

|

||||

var fileDescriptor_4d60b240916c4e18 = []byte{

|

||||

// 619 bytes of a gzipped FileDescriptorProto

|

||||

0x1f, 0x8b, 0x08, 0x00, 0x00, 0x00, 0x00, 0x00, 0x02, 0xff, 0x7c, 0x94, 0xdd, 0x4e, 0xdb, 0x4c,

|

||||

0x10, 0x86, 0x71, 0x02, 0x81, 0x4c, 0x80, 0xcf, 0xac, 0xbe, 0xd2, 0x15, 0x54, 0x69, 0x84, 0xd4,

|

||||

0xd6, 0xe5, 0xc0, 0x16, 0xa1, 0x3f, 0x27, 0x15, 0x6a, 0x80, 0xb4, 0x44, 0x6d, 0x53, 0xcb, 0x86,

|

||||

0x56, 0xe5, 0xc4, 0xda, 0x98, 0x8d, 0xb3, 0xea, 0xfa, 0x47, 0xde, 0x35, 0x34, 0x17, 0xd2, 0x7b,

|

||||

0xea, 0x61, 0x2f, 0xa1, 0xe2, 0x2e, 0x7a, 0x56, 0x65, 0x6d, 0x43, 0x42, 0xab, 0x9c, 0xed, 0xce,

|

||||

0x3c, 0x33, 0xf3, 0xbe, 0xe3, 0x95, 0xa1, 0x49, 0xbf, 0x49, 0x1a, 0x09, 0x16, 0x47, 0xc2, 0xba,

|

||||

0xdc, 0x23, 0x3c, 0x19, 0x91, 0x3d, 0xeb, 0x8a, 0x88, 0xd0, 0x4c, 0xd2, 0x58, 0xc6, 0x68, 0x7b,

|

||||

0xc4, 0x82, 0x94, 0x0a, 0x61, 0xde, 0x72, 0x66, 0xc9, 0x6d, 0x35, 0x83, 0x38, 0x0e, 0x38, 0xb5,

|

||||

0x14, 0x3a, 0xc8, 0x86, 0xd6, 0x55, 0x4a, 0x92, 0x84, 0xa6, 0x22, 0x2f, 0xde, 0x7a, 0x70, 0x37,

|

||||

0x2f, 0x64, 0x9a, 0xf9, 0x32, 0xcf, 0xee, 0xfc, 0x5e, 0x04, 0xf8, 0x4c, 0x44, 0x68, 0xf3, 0x2c,

|

||||

0x60, 0x11, 0xd2, 0xa1, 0x9a, 0xa5, 0x1c, 0x57, 0x5a, 0x9a, 0x51, 0x77, 0x26, 0x47, 0xb4, 0x09,

|

||||

0x35, 0x31, 0x22, 0xed, 0xe7, 0x2f, 0x70, 0x55, 0x05, 0x8b, 0x1b, 0x72, 0x61, 0x83, 0x85, 0x24,

|

||||

0xa0, 0x5e, 0x92, 0x71, 0xee, 0x25, 0x31, 0x67, 0xfe, 0x18, 0x2f, 0xb6, 0x34, 0x63, 0xbd, 0xfd,

|

||||

0xc4, 0x9c, 0xa3, 0xd7, 0xb4, 0x33, 0xce, 0x6d, 0x85, 0x3b, 0xff, 0xa9, 0x0e, 0xb7, 0x01, 0xb4,

|

||||

0x3b, 0xd3, 0x54, 0x50, 0x3f, 0xa5, 0x12, 0x2f, 0xa9, 0xb9, 0xb7, 0xac, 0xab, 0xc2, 0xe8, 0x29,

|

||||

0xe8, 0x97, 0x34, 0x65, 0x43, 0xe6, 0x13, 0xc9, 0xe2, 0xc8, 0xfb, 0x4a, 0xc7, 0xb8, 0x96, 0xa3,

|

||||

0xd3, 0xf1, 0x77, 0x74, 0x8c, 0x5e, 0xc1, 0x5a, 0xa2, 0xfc, 0x79, 0x7e, 0x1c, 0x0d, 0x59, 0x80,

|

||||

0x97, 0x5b, 0x9a, 0xd1, 0x68, 0xdf, 0x37, 0xf3, 0xd5, 0x98, 0xe5, 0x6a, 0x4c, 0x57, 0xad, 0xc6,

|

||||

0x59, 0xcd, 0xe9, 0x23, 0x05, 0xa3, 0x87, 0xd0, 0x28, 0xaa, 0x23, 0x12, 0x52, 0xbc, 0xa2, 0x66,

|

||||

0x40, 0x1e, 0xea, 0x93, 0x90, 0xa2, 0x03, 0x58, 0x4a, 0x46, 0x44, 0x50, 0x5c, 0x57, 0xf6, 0x8d,

|

||||

0xf9, 0xf6, 0x55, 0x9d, 0x3d, 0xe1, 0x9d, 0xbc, 0x0c, 0xbd, 0x84, 0x95, 0x24, 0x65, 0x71, 0xca,

|

||||

0xe4, 0x18, 0x83, 0x52, 0xb6, 0xfd, 0x97, 0xb2, 0x5e, 0x24, 0xf7, 0xdb, 0x9f, 0x08, 0xcf, 0xa8,

|

||||

0x73, 0x03, 0xa3, 0x03, 0x58, 0xbf, 0xa0, 0x43, 0x92, 0x71, 0x59, 0x1a, 0xa3, 0xf3, 0x8d, 0xad,

|

||||

0x15, 0x78, 0xe1, 0xec, 0x2d, 0x34, 0x42, 0x22, 0xfd, 0x91, 0x97, 0x66, 0x9c, 0x0a, 0x3c, 0x6c,

|

||||

0x55, 0x8d, 0x46, 0xfb, 0xf1, 0x5c, 0xf9, 0x1f, 0x26, 0xbc, 0x93, 0x71, 0xea, 0x40, 0x58, 0x1e,

|

||||

0x05, 0x7a, 0x06, 0x9b, 0xb3, 0x42, 0xbc, 0x0b, 0x26, 0xc8, 0x80, 0x53, 0x1c, 0xb4, 0x34, 0x63,

|

||||

0xc5, 0xf9, 0x7f, 0x66, 0xee, 0x71, 0x9e, 0xdb, 0xf9, 0xae, 0x41, 0xfd, 0xa6, 0x1f, 0xc2, 0xb0,

|

||||

0xcc, 0x22, 0x35, 0x18, 0x6b, 0xad, 0xaa, 0x51, 0x77, 0xca, 0xeb, 0xe4, 0x09, 0x5e, 0xc4, 0x21,

|

||||

0x61, 0x11, 0xae, 0xa8, 0x44, 0x71, 0x43, 0x16, 0xd4, 0x0a, 0xdb, 0xd5, 0xf9, 0xb6, 0x0b, 0x0c,

|

||||

0x3d, 0x82, 0xf5, 0x3b, 0xf2, 0x16, 0x95, 0xbc, 0x35, 0x7f, 0x5a, 0xd7, 0x6e, 0x17, 0x1a, 0x53,

|

||||

0x5f, 0x09, 0xdd, 0x83, 0x8d, 0xb3, 0xbe, 0x6b, 0x77, 0x8f, 0x7a, 0x6f, 0x7a, 0xdd, 0x63, 0xcf,

|

||||

0x3e, 0xe9, 0xb8, 0x5d, 0x7d, 0x01, 0xd5, 0x61, 0xa9, 0x73, 0x76, 0x7a, 0xd2, 0xd7, 0xb5, 0xf2,

|

||||

0x78, 0xae, 0x57, 0x26, 0x47, 0xf7, 0xb4, 0x73, 0xea, 0xea, 0xd5, 0xdd, 0x43, 0x80, 0xa9, 0xa7,

|

||||

0xbd, 0x09, 0x68, 0xa6, 0xcb, 0xc7, 0xf7, 0xbd, 0xa3, 0x2f, 0xfa, 0x02, 0xd2, 0x61, 0xb5, 0x37,

|

||||

0xec, 0xc7, 0xd2, 0x4e, 0xa9, 0xa0, 0x91, 0xd4, 0x35, 0x04, 0x50, 0xeb, 0xf0, 0x2b, 0x32, 0x16,

|

||||

0x7a, 0xe5, 0xf0, 0xf5, 0x8f, 0xeb, 0xa6, 0xf6, 0xf3, 0xba, 0xa9, 0xfd, 0xba, 0x6e, 0x6a, 0xe7,

|

||||

0xed, 0x80, 0xc9, 0x51, 0x36, 0x30, 0xfd, 0x38, 0xb4, 0x08, 0x67, 0x03, 0x32, 0x20, 0x56, 0xf1,

|

||||

0xb1, 0x2c, 0x92, 0x30, 0xeb, 0x1f, 0xbf, 0x91, 0x41, 0x4d, 0x2d, 0x63, 0xff, 0x4f, 0x00, 0x00,

|

||||

0x00, 0xff, 0xff, 0xb9, 0xf2, 0x67, 0xbe, 0x64, 0x04, 0x00, 0x00,

|

||||

// 631 bytes of a gzipped FileDescriptorProto

|

||||

0x1f, 0x8b, 0x08, 0x00, 0x00, 0x00, 0x00, 0x00, 0x02, 0xff, 0x7c, 0x94, 0xdd, 0x6e, 0xd3, 0x4c,

|

||||

0x10, 0x86, 0xeb, 0xa4, 0x49, 0x9b, 0x49, 0xdb, 0xcf, 0x5d, 0x7d, 0x94, 0x55, 0x8b, 0x42, 0x54,

|

||||

0x09, 0x30, 0x3d, 0xb0, 0xd5, 0x94, 0x9f, 0x13, 0x54, 0x91, 0xb6, 0x81, 0x46, 0x40, 0xb0, 0xec,

|

||||

0x16, 0x44, 0x4f, 0xac, 0x8d, 0xbb, 0x71, 0x56, 0xac, 0x7f, 0xe4, 0x5d, 0xb7, 0xe4, 0xaa, 0xb8,

|

||||

0x0d, 0x0e, 0xb9, 0x04, 0xd4, 0xbb, 0xe0, 0x0c, 0x65, 0xed, 0x34, 0x49, 0x41, 0x39, 0xdb, 0x9d,

|

||||

0x79, 0x66, 0xe6, 0x7d, 0xc7, 0x2b, 0x43, 0x83, 0x7e, 0x93, 0x34, 0x12, 0x2c, 0x8e, 0x84, 0x75,

|

||||

0xb5, 0x4f, 0x78, 0x32, 0x24, 0xfb, 0xd6, 0x35, 0x11, 0xa1, 0x99, 0xa4, 0xb1, 0x8c, 0xd1, 0xce,

|

||||

0x90, 0x05, 0x29, 0x15, 0xc2, 0x9c, 0x72, 0xe6, 0x84, 0xdb, 0x6e, 0x04, 0x71, 0x1c, 0x70, 0x6a,

|

||||

0x29, 0xb4, 0x9f, 0x0d, 0xac, 0xeb, 0x94, 0x24, 0x09, 0x4d, 0x45, 0x5e, 0xbc, 0xfd, 0xe0, 0x6e,

|

||||

0x5e, 0xc8, 0x34, 0xf3, 0x65, 0x9e, 0xdd, 0xfd, 0xbd, 0x0c, 0xf0, 0x99, 0x88, 0xd0, 0xe6, 0x59,

|

||||

0xc0, 0x22, 0xa4, 0x43, 0x39, 0x4b, 0x39, 0x2e, 0x35, 0x35, 0xa3, 0xe6, 0x8c, 0x8f, 0x68, 0x0b,

|

||||

0xaa, 0x62, 0x48, 0x5a, 0xcf, 0x5f, 0xe0, 0xb2, 0x0a, 0x16, 0x37, 0xe4, 0xc2, 0x26, 0x0b, 0x49,

|

||||

0x40, 0xbd, 0x24, 0xe3, 0xdc, 0x4b, 0x62, 0xce, 0xfc, 0x11, 0x5e, 0x6e, 0x6a, 0xc6, 0x46, 0xeb,

|

||||

0x89, 0xb9, 0x40, 0xaf, 0x69, 0x67, 0x9c, 0xdb, 0x0a, 0x77, 0xfe, 0x53, 0x1d, 0xa6, 0x01, 0xb4,

|

||||

0x37, 0xd7, 0x54, 0x50, 0x3f, 0xa5, 0x12, 0x57, 0xd4, 0xdc, 0x29, 0xeb, 0xaa, 0x30, 0x7a, 0x0a,

|

||||

0xfa, 0x15, 0x4d, 0xd9, 0x80, 0xf9, 0x44, 0xb2, 0x38, 0xf2, 0xbe, 0xd2, 0x11, 0xae, 0xe6, 0xe8,

|

||||

0x6c, 0xfc, 0x1d, 0x1d, 0xa1, 0x57, 0xb0, 0x9e, 0x28, 0x7f, 0x9e, 0x1f, 0x47, 0x03, 0x16, 0xe0,

|

||||

0x95, 0xa6, 0x66, 0xd4, 0x5b, 0xf7, 0xcd, 0x7c, 0x35, 0xe6, 0x64, 0x35, 0xa6, 0xab, 0x56, 0xe3,

|

||||

0xac, 0xe5, 0xf4, 0xb1, 0x82, 0xd1, 0x43, 0xa8, 0x17, 0xd5, 0x11, 0x09, 0x29, 0x5e, 0x55, 0x33,

|

||||

0x20, 0x0f, 0xf5, 0x48, 0x48, 0xd1, 0x21, 0x54, 0x92, 0x21, 0x11, 0x14, 0xd7, 0x94, 0x7d, 0x63,

|

||||

0xb1, 0x7d, 0x55, 0x67, 0x8f, 0x79, 0x27, 0x2f, 0x43, 0x2f, 0x61, 0x35, 0x49, 0x59, 0x9c, 0x32,

|

||||

0x39, 0xc2, 0xa0, 0x94, 0xed, 0xfc, 0xa5, 0xac, 0x1b, 0xc9, 0x83, 0xd6, 0x27, 0xc2, 0x33, 0xea,

|

||||

0xdc, 0xc2, 0xe8, 0x10, 0x36, 0x2e, 0xe9, 0x80, 0x64, 0x5c, 0x4e, 0x8c, 0xd1, 0xc5, 0xc6, 0xd6,

|

||||

0x0b, 0xbc, 0x70, 0xf6, 0x16, 0xea, 0x21, 0x91, 0xfe, 0xd0, 0x4b, 0x33, 0x4e, 0x05, 0x1e, 0x34,

|

||||

0xcb, 0x46, 0xbd, 0xf5, 0x78, 0xa1, 0xfc, 0x0f, 0x63, 0xde, 0xc9, 0x38, 0x75, 0x20, 0x9c, 0x1c,

|

||||

0x05, 0x7a, 0x06, 0x5b, 0xf3, 0x42, 0xbc, 0x4b, 0x26, 0x48, 0x9f, 0x53, 0x1c, 0x34, 0x35, 0x63,

|

||||

0xd5, 0xf9, 0x7f, 0x6e, 0xee, 0x49, 0x9e, 0xdb, 0xfd, 0xae, 0x41, 0xed, 0xb6, 0x1f, 0xc2, 0xb0,

|

||||

0xc2, 0x22, 0x35, 0x18, 0x6b, 0xcd, 0xb2, 0x51, 0x73, 0x26, 0xd7, 0xf1, 0x13, 0xbc, 0x8c, 0x43,

|

||||

0xc2, 0x22, 0x5c, 0x52, 0x89, 0xe2, 0x86, 0x2c, 0xa8, 0x16, 0xb6, 0xcb, 0x8b, 0x6d, 0x17, 0x18,

|

||||

0x7a, 0x04, 0x1b, 0x77, 0xe4, 0x2d, 0x2b, 0x79, 0xeb, 0xfe, 0xac, 0xae, 0xb1, 0x12, 0x41, 0xd3,

|

||||

0x2b, 0xe6, 0x53, 0x5c, 0xc9, 0x95, 0x14, 0xd7, 0xbd, 0x0e, 0xd4, 0x67, 0xbe, 0x1f, 0xba, 0x07,

|

||||

0x9b, 0xe7, 0x3d, 0xd7, 0xee, 0x1c, 0x77, 0xdf, 0x74, 0x3b, 0x27, 0x9e, 0x7d, 0xda, 0x76, 0x3b,

|

||||

0xfa, 0x12, 0xaa, 0x41, 0xa5, 0x7d, 0x7e, 0x76, 0xda, 0xd3, 0xb5, 0xc9, 0xf1, 0x42, 0x2f, 0x8d,

|

||||

0x8f, 0xee, 0x59, 0xfb, 0xcc, 0xd5, 0xcb, 0x7b, 0x47, 0x00, 0x33, 0x8f, 0x7e, 0x0b, 0xd0, 0x5c,

|

||||

0x97, 0x8f, 0xef, 0xbb, 0xc7, 0x5f, 0xf4, 0x25, 0xa4, 0xc3, 0x5a, 0x77, 0xd0, 0x8b, 0xa5, 0x9d,

|

||||

0x52, 0x41, 0x23, 0xa9, 0x6b, 0x08, 0xa0, 0xda, 0xe6, 0xd7, 0x64, 0x24, 0xf4, 0xd2, 0xd1, 0xeb,

|

||||

0x1f, 0x37, 0x0d, 0xed, 0xe7, 0x4d, 0x43, 0xfb, 0x75, 0xd3, 0xd0, 0x2e, 0x5a, 0x01, 0x93, 0xc3,

|

||||

0xac, 0x6f, 0xfa, 0x71, 0x68, 0x11, 0xce, 0xfa, 0xa4, 0x4f, 0xac, 0xe2, 0x33, 0x5a, 0x24, 0x61,

|

||||

0xd6, 0x3f, 0x7e, 0x30, 0xfd, 0xaa, 0x5a, 0xd3, 0xc1, 0x9f, 0x00, 0x00, 0x00, 0xff, 0xff, 0x0b,

|

||||

0x3c, 0xc3, 0xcf, 0x7e, 0x04, 0x00, 0x00,

|

||||

}

|

||||

|

||||

func (m *WasmPlugin) Marshal() (dAtA []byte, err error) {

|

||||

@@ -581,6 +590,15 @@ func (m *MatchRule) MarshalToSizedBuffer(dAtA []byte) (int, error) {

|

||||

i -= len(m.XXX_unrecognized)

|

||||

copy(dAtA[i:], m.XXX_unrecognized)

|

||||

}

|

||||

if len(m.Service) > 0 {

|

||||

for iNdEx := len(m.Service) - 1; iNdEx >= 0; iNdEx-- {

|

||||

i -= len(m.Service[iNdEx])

|

||||

copy(dAtA[i:], m.Service[iNdEx])

|

||||

i = encodeVarintWasm(dAtA, i, uint64(len(m.Service[iNdEx])))

|

||||

i--

|

||||

dAtA[i] = 0x2a

|

||||

}

|

||||

}

|

||||

if m.ConfigDisable {

|

||||

i--

|

||||

if m.ConfigDisable {

|

||||

@@ -719,6 +737,12 @@ func (m *MatchRule) Size() (n int) {

|

||||

if m.ConfigDisable {

|

||||

n += 2

|

||||

}

|

||||

if len(m.Service) > 0 {

|

||||

for _, s := range m.Service {

|

||||

l = len(s)

|

||||

n += 1 + l + sovWasm(uint64(l))

|

||||

}

|

||||

}

|

||||

if m.XXX_unrecognized != nil {

|

||||

n += len(m.XXX_unrecognized)

|

||||

}

|

||||
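The marshalling and sizing hunks above write each `service` entry with the key byte `0x2a` and count `1 + l + sovWasm(uint64(l))` bytes per string. The following is a minimal sketch of that arithmetic, not the generated code itself; `fieldTag`, `varintLen`, and `repeatedStringSize` are hypothetical helper names introduced here for illustration.

```go
package main

// fieldTag returns the one-byte protobuf key for a small field number:
// (fieldNumber << 3) | wireType. Field 5 with wire type 2
// (length-delimited) gives 0x2a, the byte written in the Marshal hunk.
func fieldTag(fieldNumber, wireType int) byte {
	return byte(fieldNumber<<3 | wireType)
}

// varintLen returns how many bytes a base-128 varint needs for x.
// It plays the role that sovWasm plays in the generated code.
func varintLen(x uint64) int {
	n := 1
	for x >= 0x80 {
		x >>= 7
		n++
	}
	return n
}

// repeatedStringSize mirrors the Size() hunk: one key byte, the length
// prefix, and the string bytes for every element.
func repeatedStringSize(values []string) int {
	n := 0
	for _, s := range values {
		l := len(s)
		n += 1 + l + varintLen(uint64(l))
	}
	return n
}
```

For example, `repeatedStringSize([]string{"a", "bc"})` counts 3 bytes for the first entry and 4 for the second.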

@@ -1291,6 +1315,38 @@ func (m *MatchRule) Unmarshal(dAtA []byte) error {

|

||||

}

|

||||

}

|

||||

m.ConfigDisable = bool(v != 0)

|

||||

case 5:

|

||||

if wireType != 2 {

|

||||

return fmt.Errorf("proto: wrong wireType = %d for field Service", wireType)

|

||||

}

|

||||

var stringLen uint64

|

||||

for shift := uint(0); ; shift += 7 {

|

||||

if shift >= 64 {

|

||||

return ErrIntOverflowWasm

|

||||

}

|

||||

if iNdEx >= l {

|

||||

return io.ErrUnexpectedEOF

|

||||

}

|

||||

b := dAtA[iNdEx]

|

||||

iNdEx++

|

||||

stringLen |= uint64(b&0x7F) << shift

|

||||

if b < 0x80 {

|

||||

break

|

||||

}

|

||||

}

|

||||

intStringLen := int(stringLen)

|

||||

if intStringLen < 0 {

|

||||

return ErrInvalidLengthWasm

|

||||

}

|

||||

postIndex := iNdEx + intStringLen

|

||||

if postIndex < 0 {

|

||||

return ErrInvalidLengthWasm

|

||||

}

|

||||

if postIndex > l {

|

||||

return io.ErrUnexpectedEOF

|

||||

}

|

||||

m.Service = append(m.Service, string(dAtA[iNdEx:postIndex]))

|

||||

iNdEx = postIndex

|

||||

default:

|

||||

iNdEx = preIndex

|

||||

skippy, err := skipWasm(dAtA[iNdEx:])

|

||||

|

||||

@@ -114,6 +114,7 @@ message MatchRule {

|

||||

repeated string domain = 2;

|

||||

google.protobuf.Struct config = 3;

|

||||

bool config_disable = 4;

|

||||

repeated string service = 5;

|

||||

}

|

||||

|

||||

// The phase in the filter chain where the plugin will be injected.

|

||||

|

||||

@@ -64,6 +64,10 @@ spec:

|

||||

items:

|

||||

type: string

|

||||

type: array

|

||||

service:

|

||||

items:

|

||||

type: string

|

||||

type: array

|

||||

type: object

|

||||

type: array

|

||||

phase:

|

||||

|

||||

@@ -97,7 +97,7 @@ higress: {{ include "controller.name" . }}

|

||||

{{- end }}

|

||||

|

||||

{{- define "skywalking.enabled" -}}

|

||||

{{- if and .Values.skywalking.enabled .Values.skywalking.service.address }}

|

||||

{{- if and (hasKey .Values "tracing") .Values.tracing.enable (hasKey .Values.tracing "skywalking") .Values.tracing.skywalking.service }}

|

||||

true

|

||||

{{- end }}

|

||||

{{- end }}

|

||||

|

||||

@@ -46,10 +46,6 @@

|

||||

address: {{ .Values.global.tracer.lightstep.address }}

|

||||

# Access Token used to communicate with the Satellite pool

|

||||

accessToken: {{ .Values.global.tracer.lightstep.accessToken }}

|

||||

{{- else if eq .Values.global.proxy.tracer "zipkin" }}

|

||||

zipkin:

|

||||

# Address of the Zipkin collector

|

||||

address: {{ .Values.global.tracer.zipkin.address | default (print "zipkin." .Release.Namespace ":9411") }}

|

||||

{{- else if eq .Values.global.proxy.tracer "datadog" }}

|

||||

datadog:

|

||||

# Address of the Datadog Agent

|

||||

@@ -109,7 +105,17 @@ metadata:

|

||||

labels:

|

||||

{{- include "gateway.labels" . | nindent 4 }}

|

||||

data:

|

||||

|

||||

higress: |-

|

||||

{{- $existingConfig := lookup "v1" "ConfigMap" .Release.Namespace "higress-config" }}

|

||||

{{- $existingData := dict }}

|

||||

{{- if $existingConfig }}

|

||||

{{- $existingData = index $existingConfig.data "higress" | default "{}" | fromYaml }}

|

||||

{{- end }}

|

||||

{{- $newData := dict }}

|

||||

{{- if and (hasKey .Values "tracing") .Values.tracing.enable }}

|

||||

{{- $_ := set $newData "tracing" .Values.tracing }}

|

||||

{{- end }}

|

||||

{{- toYaml (merge $existingData $newData) | nindent 4 }}

|

||||

# Configuration file for the mesh networks to be used by the Split Horizon EDS.

|

||||

meshNetworks: |-

|

||||

{{- if .Values.global.meshNetworks }}

|

||||

@@ -170,8 +176,8 @@ data:

|

||||

"endpoint": {

|

||||

"address": {

|

||||

"socket_address": {

|

||||

"address": "{{ .Values.skywalking.service.address }}",

|

||||

"port_value": "{{ .Values.skywalking.service.port }}"

|

||||

"address": "{{ .Values.tracing.skywalking.service }}",

|

||||

"port_value": "{{ .Values.tracing.skywalking.port }}"

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

@@ -178,9 +178,9 @@ global:

|

||||

# Default port for Pilot agent health checks. A value of 0 will disable health checking.

|

||||

statusPort: 15020

|

||||

|

||||

# Specify which tracer to use. One of: zipkin, lightstep, datadog, stackdriver.

|

||||

# Specify which tracer to use. One of: lightstep, datadog, stackdriver.

|

||||

# If using stackdriver tracer outside GCP, set env GOOGLE_APPLICATION_CREDENTIALS to the GCP credential file.

|

||||

tracer: "zipkin"

|

||||

tracer: ""

|

||||

|

||||

# Controls if sidecar is injected at the front of the container list and blocks the start of the other containers until the proxy is ready

|

||||

holdApplicationUntilProxyStarts: false

|

||||

@@ -330,12 +330,8 @@ global:

|

||||

maxNumberOfAnnotations: 200

|

||||

# The global default max number of attributes per span.

|

||||

maxNumberOfAttributes: 200

|

||||

zipkin:

|

||||

# Host:Port for reporting trace data in zipkin format. If not specified, will default to

|

||||

# zipkin service (port 9411) in the same namespace as the other istio components.

|

||||

address: ""

|

||||

|

||||

# Use the Mesh Control Protocol (MCP) for configuring Istiod. Requires an MCP source.

|

||||

|

||||

useMCP: false

|

||||

|

||||

# Observability (o11y) configurations

|

||||

@@ -668,9 +664,15 @@ pilot:

|

||||

podLabels: {}

|

||||

|

||||

|

||||

# Skywalking config settings

|

||||

skywalking:

|

||||

enabled: false

|

||||

service:

|

||||

address: ~

|

||||

port: 11800

|

||||

# Tracing config settings

|

||||

tracing:

|

||||

enable: false

|

||||

sampling: 100

|

||||

timeout: 500

|

||||

skywalking:

|

||||

# access_token: ""

|

||||

service: ""

|

||||

port: 11800

|

||||

# zipkin:

|

||||

# service: ""

|

||||

# port: 9411

|

||||

|

||||

@@ -32,7 +32,7 @@ func ParseProtocol(s string) Protocol {

|

||||

return TCP

|

||||

case "http":

|

||||

return HTTP

|

||||

case "grpc":

|

||||

case "grpc", "triple", "tri":

|

||||

return GRPC

|

||||

case "dubbo":

|

||||

return Dubbo

|

||||

|

||||
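The hunk above makes `"triple"` and `"tri"` resolve to the same protocol as `"grpc"`. A minimal, self-contained sketch of that mapping follows. The `Protocol` type, its constant values, the `Unsupported` fallback, and the lower-casing of the input are assumptions of this sketch, since the diff shows only part of the real `ParseProtocol`.

```go
package main

import "strings"

// Protocol is a hypothetical stand-in for the protocol type in the diff.
type Protocol string

const (
	TCP         Protocol = "TCP"
	HTTP        Protocol = "HTTP"
	GRPC        Protocol = "GRPC"
	Dubbo       Protocol = "Dubbo"
	Unsupported Protocol = "Unsupported" // assumed fallback, not shown in the diff
)

// parseProtocol maps a protocol name to a Protocol value.
// "triple" and "tri" are treated as aliases of "grpc", as in the hunk.
// Lower-casing the input is an assumption of this sketch.
func parseProtocol(s string) Protocol {
	switch strings.ToLower(s) {
	case "tcp":
		return TCP
	case "http":
		return HTTP
	case "grpc", "triple", "tri":
		return GRPC
	case "dubbo":
		return Dubbo
	}
	return Unsupported
}
```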

@@ -841,6 +841,7 @@ func (m *IngressConfig) convertIstioWasmPlugin(obj *higressext.WasmPlugin) (*ext

|

||||

StructValue: rule.Config,

|

||||

}

|

||||

var matchItems []*types.Value

|

||||

// match ingress

|

||||

for _, ing := range rule.Ingress {

|

||||

matchItems = append(matchItems, &types.Value{

|

||||

Kind: &types.Value_StringValue{

|

||||

@@ -861,6 +862,7 @@ func (m *IngressConfig) convertIstioWasmPlugin(obj *higressext.WasmPlugin) (*ext

|

||||

})

|

||||

continue

|

||||

}

|

||||

// match domain

|

||||

for _, domain := range rule.Domain {

|

||||

matchItems = append(matchItems, &types.Value{

|

||||

Kind: &types.Value_StringValue{

|

||||

@@ -868,10 +870,31 @@ func (m *IngressConfig) convertIstioWasmPlugin(obj *higressext.WasmPlugin) (*ext

|

||||

},

|

||||

})

|

||||

}

|

||||

if len(matchItems) > 0 {

|

||||

v.StructValue.Fields["_match_domain_"] = &types.Value{

|

||||

Kind: &types.Value_ListValue{

|

||||

ListValue: &types.ListValue{

|

||||

Values: matchItems,

|

||||

},

|

||||

},

|

||||

}

|

||||

ruleValues = append(ruleValues, &types.Value{

|

||||

Kind: v,

|

||||

})

|

||||

continue

|

||||

}

|

||||

// match service

|

||||

for _, service := range rule.Service {

|

||||

matchItems = append(matchItems, &types.Value{

|

||||

Kind: &types.Value_StringValue{

|

||||

StringValue: service,

|

||||

},

|

||||

})

|

||||

}

|

||||

if len(matchItems) == 0 {

|

||||

return nil, fmt.Errorf("invalid match rule has no match condition, rule:%v", rule)

|

||||

}

|

||||

v.StructValue.Fields["_match_domain_"] = &types.Value{

|

||||

v.StructValue.Fields["_match_service_"] = &types.Value{

|

||||

Kind: &types.Value_ListValue{

|

||||

ListValue: &types.ListValue{

|

||||

Values: matchItems,

|

||||

|

||||
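As far as the hunks above show, a match rule is checked for ingress entries first, then domain entries, then service entries, and a rule with none of them is rejected. The sketch below illustrates only that precedence with plain slices; `matchRule`, `selectMatch`, and the returned kind strings are hypothetical, and the real code writes protobuf `Struct` fields such as `_match_domain_` and `_match_service_` instead.

```go
package main

import "errors"

// matchRule is a simplified, hypothetical stand-in for the MatchRule message.
type matchRule struct {
	Ingress []string
	Domain  []string
	Service []string
}

// errNoMatchCondition mirrors the "no match condition" error in the diff.
var errNoMatchCondition = errors.New("invalid match rule has no match condition")

// selectMatch reports which kind of condition a rule is matched on and
// the values for it. Ingress wins over domain, and domain over service.
func selectMatch(r matchRule) (kind string, items []string, err error) {
	switch {
	case len(r.Ingress) > 0:
		return "ingress", r.Ingress, nil
	case len(r.Domain) > 0:
		return "domain", r.Domain, nil
	case len(r.Service) > 0:
		return "service", r.Service, nil
	}
	return "", nil, errNoMatchCondition
}
```

One consequence of this ordering is that a rule carrying both `domain` and `service` entries is matched on its domains only.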

@@ -431,11 +431,14 @@ func (c *controller) ConvertGateway(convertOptions *common.ConvertOptions, wrapp

|

||||

if err != nil {

|

||||

if k8serrors.IsNotFound(err) {

|

||||

// If there is no matching secret, try to get it from configmap.

|

||||

secretName = httpsCredentialConfig.MatchSecretNameByDomain(rule.Host)

|

||||

secretNamespace = c.options.SystemNamespace

|

||||

namespace, secret := cert.ParseTLSSecret(secretName)

|

||||

if namespace != "" {

|

||||

secretNamespace = namespace

|

||||

matchSecretName := httpsCredentialConfig.MatchSecretNameByDomain(rule.Host)

|

||||

if matchSecretName != "" {

|

||||

namespace, secret := cert.ParseTLSSecret(matchSecretName)

|

||||

if namespace == "" {

|

||||

secretNamespace = c.options.SystemNamespace

|

||||

} else {

|

||||

secretNamespace = namespace

|

||||

}

|

||||

secretName = secret

|

||||

}

|

||||

}

|

||||

|

||||

@@ -417,11 +417,14 @@ func (c *controller) ConvertGateway(convertOptions *common.ConvertOptions, wrapp

|

||||

if err != nil {

|

||||

if k8serrors.IsNotFound(err) {

|

||||

// If there is no matching secret, try to get it from configmap.

|

||||

secretName = httpsCredentialConfig.MatchSecretNameByDomain(rule.Host)

|

||||

secretNamespace = c.options.SystemNamespace

|

||||

namespace, secret := cert.ParseTLSSecret(secretName)

|

||||

if namespace != "" {

|

||||

secretNamespace = namespace

|

||||

matchSecretName := httpsCredentialConfig.MatchSecretNameByDomain(rule.Host)

|

||||

if matchSecretName != "" {

|

||||

namespace, secret := cert.ParseTLSSecret(matchSecretName)

|

||||

if namespace == "" {

|

||||

secretNamespace = c.options.SystemNamespace

|

||||

} else {

|

||||

secretNamespace = namespace

|

||||

}

|

||||

secretName = secret

|

||||

}

|

||||

}

|

||||

|

||||
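Both `ConvertGateway` hunks change the fallback so that the secret name and namespace are overwritten only when the configmap lookup actually returns a match. The sketch below shows that control flow in isolation. It assumes a `namespace/name` reference format, which the diff does not confirm, and `splitSecretRef` and `resolveFallbackSecret` are hypothetical names rather than the real `cert.ParseTLSSecret` API.

```go
package main

import "strings"

// splitSecretRef splits "namespace/name" into its parts. A bare "name"
// yields an empty namespace. The reference format is an assumption.
func splitSecretRef(ref string) (namespace, name string) {
	if i := strings.Index(ref, "/"); i >= 0 {
		return ref[:i], ref[i+1:]
	}
	return "", ref
}

// resolveFallbackSecret mirrors the new branch: when nothing matched,
// ok is false and the caller keeps its current values; otherwise the
// namespace defaults to systemNamespace when the reference has none.
func resolveFallbackSecret(matched, systemNamespace string) (namespace, name string, ok bool) {
	if matched == "" {
		return "", "", false
	}
	namespace, name = splitSecretRef(matched)
	if namespace == "" {
		namespace = systemNamespace
	}
	return namespace, name, true
}
```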

@@ -163,7 +163,6 @@ func (c *controller) processNextWorkItem() bool {

|

||||

func (c *controller) onEvent(namespacedName types.NamespacedName) error {

|

||||

event := model.EventUpdate

|

||||

ing, err := c.ingressLister.Ingresses(namespacedName.Namespace).Get(namespacedName.Name)

|

||||

ing.Status.InitializeConditions()

|

||||

if err != nil {

|

||||

if kerrors.IsNotFound(err) {

|

||||

event = model.EventDelete

|

||||

@@ -181,6 +180,8 @@ func (c *controller) onEvent(namespacedName types.NamespacedName) error {

|

||||

return nil

|

||||

}

|

||||

|

||||

ing.Status.InitializeConditions()

|

||||

|

||||

// we should check need process only when event is not delete,

|

||||

// if it is delete event, and previously processed, we need to process too.

|

||||

if event != model.EventDelete {

|

||||

|

||||
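The `onEvent` hunks move `ing.Status.InitializeConditions()` from right after the lister call to after the error handling, so the object is no longer dereferenced before `err` is checked. A deliberately simplified sketch of the pattern follows; the `ingress` type, the map-backed store, and `handleEvent` are hypothetical, and the real handler does more than is shown in the diff.

```go
package main

import "errors"

// ingress is a hypothetical stand-in for the object returned by the lister.
type ingress struct{ initialized bool }

func (i *ingress) initializeConditions() { i.initialized = true }

var errNotFound = errors.New("not found")

// get returns a nil pointer together with errNotFound for unknown keys,
// which is why the result must not be used before the error is checked.
func get(store map[string]*ingress, key string) (*ingress, error) {
	ing, ok := store[key]
	if !ok {
		return nil, errNotFound
	}
	return ing, nil
}

// handleEvent checks the error first and touches ing only afterwards.
func handleEvent(store map[string]*ingress, key string) (string, error) {
	ing, err := get(store, key)
	if err != nil {
		if errors.Is(err, errNotFound) {
			return "delete", nil
		}
		return "", err
	}
	ing.initializeConditions() // safe: ing is non-nil here
	return "update", nil
}
```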

53

plugins/wasm-assemblyscript/README.md

Normal file


@@ -0,0 +1,53 @@

## Introduction

This SDK is for developing Higress Wasm plugins in AssemblyScript.

### How to use the SDK

Create a new AssemblyScript project.

```
npm init
npm install --save-dev assemblyscript
npx asinit .
```

In `asconfig.json`, include `"use": "abort=abort_proc_exit"` as one of the options passed to the asc compiler.

```
{
  "options": {
    "use": "abort=abort_proc_exit"
  }
}
```

Add `"@higress/wasm-assemblyscript": "^0.0.4"` to your dependencies, then run `npm install`.

### Local build

```
npm run asbuild
```

The build output is placed in the `build` folder. `debug.wasm` and `release.wasm` are the compiled files; `release.wasm` is recommended for production.

Note: if the plugin needs to carry name section information, set `"debug": true`. See [using-the-compiler](https://www.assemblyscript.org/compiler.html#using-the-compiler) for an explanation of the compiler options.

```json
"release": {
  "outFile": "build/release.wasm",
  "textFile": "build/release.wat",
  "sourceMap": true,
  "optimizeLevel": 3,
  "shrinkLevel": 0,
  "converge": false,
  "noAssert": false,
  "debug": true
}
```

### AssemblyScript limitations

This SDK uses AssemblyScript `0.27.29`. According to [AssemblyScript Status](https://www.assemblyscript.org/status.html), this version does not yet support features such as closures, exceptions, and iterators, and functionality such as JSON and regular expressions is not yet implemented in the standard library, so community-provided implementations are needed for now.


23

plugins/wasm-assemblyscript/asconfig.json

Normal file


@@ -0,0 +1,23 @@

|

||||

{

|

||||

"targets": {

|

||||

"debug": {

|

||||

"outFile": "build/debug.wasm",

|

||||

"textFile": "build/debug.wat",

|

||||

"sourceMap": true,

|

||||

"debug": true

|

||||

},

|

||||

"release": {

|

||||

"outFile": "build/release.wasm",

|

||||

"textFile": "build/release.wat",

|

||||

"sourceMap": true,

|

||||

"optimizeLevel": 3,

|

||||

"shrinkLevel": 0,

|

||||

"converge": false,

|

||||

"noAssert": false

|

||||

}

|

||||

},

|

||||

"options": {

|

||||

"bindings": "esm",

|

||||

"use": "abort=abort_proc_exit"

|

||||

}

|

||||

}

|

||||

214

plugins/wasm-assemblyscript/assembly/cluster_wrapper.ts

Normal file


@@ -0,0 +1,214 @@

import {
  log,
  LogLevelValues,
  get_property,
  WasmResultValues,
} from "@higress/proxy-wasm-assemblyscript-sdk/assembly";
import { getRequestHost } from "./request_wrapper";

export abstract class Cluster {
  abstract clusterName(): string;
  abstract hostName(): string;
}

export class RouteCluster extends Cluster {
  host: string;
  constructor(host: string = "") {
    super();
    this.host = host;
  }

  clusterName(): string {
    let result = get_property("cluster_name");
    if (result.status != WasmResultValues.Ok) {
      log(LogLevelValues.error, "get route cluster failed");
      return "";
    }
    return String.UTF8.decode(result.returnValue);
  }

  hostName(): string {
    if (this.host != "") {
      return this.host;
    }
    return getRequestHost();
  }
}

export class K8sCluster extends Cluster {
  serviceName: string;
  namespace: string;
  port: i64;
  version: string;
  host: string;

  constructor(
    serviceName: string,
    namespace: string,
    port: i64,
    version: string = "",
    host: string = ""
  ) {
    super();
    this.serviceName = serviceName;
    this.namespace = namespace;
    this.port = port;
    this.version = version;
    this.host = host;
  }

  clusterName(): string {
    let namespace = this.namespace != "" ? this.namespace : "default";
    return `outbound|${this.port}|${this.version}|${this.serviceName}.${namespace}.svc.cluster.local`;
  }

  hostName(): string {
    if (this.host != "") {
      return this.host;
    }
    return `${this.serviceName}.${this.namespace}.svc.cluster.local`;
  }
}

export class NacosCluster extends Cluster {
  serviceName: string;
  group: string;
  namespaceID: string;
  port: i64;
  isExtRegistry: boolean;
  version: string;
  host: string;

  constructor(
    serviceName: string,
    namespaceID: string,
    port: i64,
    // use DEFAULT-GROUP by default
    group: string = "DEFAULT-GROUP",
    // set true if use edas/sae registry
    isExtRegistry: boolean = false,
    version: string = "",
    host: string = ""
  ) {
    super();
    this.serviceName = serviceName;
    // replaceAll, not replace: replace only rewrites the first underscore
    this.group = group.replaceAll("_", "-");
    this.namespaceID = namespaceID;
    this.port = port;
    this.isExtRegistry = isExtRegistry;
    this.version = version;
    this.host = host;
  }

  clusterName(): string {
    let tail = "nacos" + (this.isExtRegistry ? "-ext" : "");
    return `outbound|${this.port}|${this.version}|${this.serviceName}.${this.group}.${this.namespaceID}.${tail}`;
  }

  hostName(): string {
    if (this.host != "") {
      return this.host;
    }
    return this.serviceName;
  }
}

export class StaticIpCluster extends Cluster {
  serviceName: string;
  port: i64;
  host: string;

  constructor(serviceName: string, port: i64, host: string = "") {
    super()
    this.serviceName = serviceName;
    this.port = port;
    this.host = host;
  }

  clusterName(): string {
    return `outbound|${this.port}||${this.serviceName}.static`;
  }

  hostName(): string {
    if (this.host != "") {
      return this.host;
    }
    return this.serviceName;
  }
}

export class DnsCluster extends Cluster {
  serviceName: string;
  domain: string;
  port: i64;

  constructor(serviceName: string, domain: string, port: i64) {
    super();
    this.serviceName = serviceName;
    this.domain = domain;
    this.port = port;
  }

  clusterName(): string {
    return `outbound|${this.port}||${this.serviceName}.dns`;
  }

  hostName(): string {
    return this.domain;
  }
}

export class ConsulCluster extends Cluster {
  serviceName: string;
  datacenter: string;
  port: i64;
  host: string;

  constructor(
    serviceName: string,
    datacenter: string,
    port: i64,
    host: string = ""
  ) {
    super();
    this.serviceName = serviceName;
    this.datacenter = datacenter;
    this.port = port;
    this.host = host;
  }

  clusterName(): string {
    return `outbound|${this.port}||${this.serviceName}.${this.datacenter}.consul`;
  }

  hostName(): string {
    if (this.host != "") {
      return this.host;
    }
    return this.serviceName;
  }
}

export class FQDNCluster extends Cluster {
  fqdn: string;
  host: string;
  port: i64;

  constructor(fqdn: string, port: i64, host: string = "") {
    super();
    this.fqdn = fqdn;
    this.host = host;
    this.port = port;
  }

  clusterName(): string {
    return `outbound|${this.port}||${this.fqdn}`;
  }

  hostName(): string {
    if (this.host != "") {
      return this.host;
    }
    return this.fqdn;
  }
}
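To make the naming scheme of the cluster classes in `cluster_wrapper.ts` easier to see at a glance, here is a small plain-TypeScript sketch that reproduces three of the template strings as stand-alone functions. The function names (`k8sClusterName`, `nacosClusterName`, `staticIpClusterName`) and the sample service names are assumptions made for illustration; they are not part of the SDK. In this sketch every underscore in the Nacos group is turned into a hyphen.

```typescript
// Hypothetical helper mirroring the K8sCluster template:
// an empty namespace falls back to "default".
function k8sClusterName(
  serviceName: string,
  namespace: string,
  port: number,
  version: string = ""
): string {
  const ns = namespace !== "" ? namespace : "default";
  return `outbound|${port}|${version}|${serviceName}.${ns}.svc.cluster.local`;
}

// Hypothetical helper mirroring the NacosCluster template:
// underscores in the group become hyphens, and the suffix depends on the registry type.
function nacosClusterName(
  serviceName: string,
  namespaceID: string,
  port: number,
  group: string = "DEFAULT-GROUP",
  isExtRegistry: boolean = false,
  version: string = ""
): string {
  const tail = "nacos" + (isExtRegistry ? "-ext" : "");
  const normalizedGroup = group.split("_").join("-");
  return `outbound|${port}|${version}|${serviceName}.${normalizedGroup}.${namespaceID}.${tail}`;
}

// Hypothetical helper mirroring the StaticIpCluster template (version segment is always empty).
function staticIpClusterName(serviceName: string, port: number): string {
  return `outbound|${port}||${serviceName}.static`;
}

const examples: string[] = [
  k8sClusterName("reviews", "", 9080),
  nacosClusterName("orders", "public", 8848, "DEFAULT_GROUP"),
  staticIpClusterName("legacy", 80),
];
// examples[0] is "outbound|9080||reviews.default.svc.cluster.local"
// examples[1] is "outbound|8848||orders.DEFAULT-GROUP.public.nacos"
// examples[2] is "outbound|80||legacy.static"
```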

120

plugins/wasm-assemblyscript/assembly/http_wrapper.ts

Normal file


@@ -0,0 +1,120 @@

import {
  Cluster
} from "./cluster_wrapper"

import {
  log,
  LogLevelValues,
  Headers,
  HeaderPair,
  root_context,
  BufferTypeValues,
  get_buffer_bytes,
  BaseContext,
  stream_context,
  WasmResultValues,
  RootContext,
  ResponseCallBack
} from "@higress/proxy-wasm-assemblyscript-sdk/assembly";

export interface HttpClient {
  get(path: string, headers: Headers, cb: ResponseCallBack, timeoutMillisecond: u32): boolean;
  head(path: string, headers: Headers, cb: ResponseCallBack, timeoutMillisecond: u32): boolean;
  options(path: string, headers: Headers, cb: ResponseCallBack, timeoutMillisecond: u32): boolean;
  post(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32): boolean;
  put(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32): boolean;
  patch(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32): boolean;
  delete(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32): boolean;
  connect(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32): boolean;
  trace(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32): boolean;
}

const methodArrayBuffer: ArrayBuffer = String.UTF8.encode(":method");
const pathArrayBuffer: ArrayBuffer = String.UTF8.encode(":path");
const authorityArrayBuffer: ArrayBuffer = String.UTF8.encode(":authority");

const StatusBadGateway: i32 = 502;

export class ClusterClient {
  cluster: Cluster;

  constructor(cluster: Cluster) {
    this.cluster = cluster;
  }

  private httpCall(method: string, path: string, headers: Headers, body: ArrayBuffer, callback: ResponseCallBack, timeoutMillisecond: u32 = 500): boolean {
    if (root_context == null) {
      log(LogLevelValues.error, "Root context is null");
      return false;
    }
    for (let i: i32 = headers.length - 1; i >= 0; i--) {
      const key = String.UTF8.decode(headers[i].key)
      if ((key == ":method") || (key == ":path") || (key == ":authority")) {
        headers.splice(i, 1);
      }
    }

    headers.push(new HeaderPair(methodArrayBuffer, String.UTF8.encode(method)));
    headers.push(new HeaderPair(pathArrayBuffer, String.UTF8.encode(path)));
    headers.push(new HeaderPair(authorityArrayBuffer, String.UTF8.encode(this.cluster.hostName())));

    const result = (root_context as RootContext).httpCall(this.cluster.clusterName(), headers, body, [], timeoutMillisecond, root_context as BaseContext, callback,
      (_origin_context: BaseContext, _numHeaders: u32, body_size: usize, _trailers: u32, callback: ResponseCallBack): void => {
        const respBody = get_buffer_bytes(BufferTypeValues.HttpCallResponseBody, 0, body_size as u32);
        const respHeaders = stream_context.headers.http_callback.get_headers()
        let code = StatusBadGateway;
        let headers = new Array<HeaderPair>();
        for (let i = 0; i < respHeaders.length; i++) {
          const h = respHeaders[i];
          if (String.UTF8.decode(h.key) == ":status") {
            code = <i32>parseInt(String.UTF8.decode(h.value))
          }
          headers.push(new HeaderPair(h.key, h.value));
        }
        log(LogLevelValues.debug, `http call end, code: ${code}, body: ${String.UTF8.decode(respBody)}`)
        callback(code, headers, respBody);
      })
    log(LogLevelValues.debug, `http call start, cluster: ${this.cluster.clusterName()}, method: ${method}, path: ${path}, body: ${String.UTF8.decode(body)}, timeout: ${timeoutMillisecond}`)
    if (result != WasmResultValues.Ok) {
      log(LogLevelValues.error, `http call failed, result: ${result}`)
      return false
    }
    return true
  }

  get(path: string, headers: Headers, cb: ResponseCallBack, timeoutMillisecond: u32 = 500): boolean {
    return this.httpCall("GET", path, headers, new ArrayBuffer(0), cb, timeoutMillisecond);
  }

  head(path: string, headers: Headers, cb: ResponseCallBack, timeoutMillisecond: u32 = 500): boolean {
    return this.httpCall("HEAD", path, headers, new ArrayBuffer(0), cb, timeoutMillisecond);
  }

  options(path: string, headers: Headers, cb: ResponseCallBack, timeoutMillisecond: u32 = 500): boolean {
    return this.httpCall("OPTIONS", path, headers, new ArrayBuffer(0), cb, timeoutMillisecond);
  }

  post(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32 = 500): boolean {
    return this.httpCall("POST", path, headers, body, cb, timeoutMillisecond);
  }

  put(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32 = 500): boolean {
    return this.httpCall("PUT", path, headers, body, cb, timeoutMillisecond);
  }

  patch(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32 = 500): boolean {
    return this.httpCall("PATCH", path, headers, body, cb, timeoutMillisecond);
  }

  delete(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32 = 500): boolean {
    return this.httpCall("DELETE", path, headers, body, cb, timeoutMillisecond);
  }

  connect(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32 = 500): boolean {
    return this.httpCall("CONNECT", path, headers, body, cb, timeoutMillisecond);
  }

  trace(path: string, headers: Headers, body: ArrayBuffer, cb: ResponseCallBack, timeoutMillisecond: u32 = 500): boolean {
    return this.httpCall("TRACE", path, headers, body, cb, timeoutMillisecond);
  }
}
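The pseudo-header handling inside `httpCall` in `http_wrapper.ts` (drop any caller-supplied `:method`, `:path`, and `:authority`, then append fresh values) can be sketched in plain TypeScript as follows. This is a simplified illustration, not the SDK code: headers are modelled as string pairs rather than `HeaderPair` objects holding `ArrayBuffer`s, and `Header` and `withPseudoHeaders` are hypothetical names.

```typescript
// Simplified header representation (assumption): [name, value] string pairs.
type Header = [string, string];

// Removes caller-supplied pseudo-headers, then appends the authoritative ones.
// Iterating from the end keeps the remaining indices valid while splicing.
function withPseudoHeaders(
  headers: Header[],
  method: string,
  path: string,
  authority: string
): Header[] {
  const reserved: string[] = [":method", ":path", ":authority"];
  for (let i = headers.length - 1; i >= 0; i--) {
    if (reserved.indexOf(headers[i][0]) >= 0) {
      headers.splice(i, 1);
    }
  }
  headers.push([":method", method]);
  headers.push([":path", path]);
  headers.push([":authority", authority]);
  return headers;
}

const outgoing = withPseudoHeaders(
  [[":path", "/old"], ["x-request-id", "42"], [":method", "GET"]],
  "POST",
  "/new",
  "orders.default.svc.cluster.local"
);
// outgoing keeps "x-request-id" first, followed by the three fresh pseudo-headers.
```

Note that, as in the wrapper, the input array is modified in place, so a caller that reuses its header list across calls will see the appended pseudo-headers.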

18

plugins/wasm-assemblyscript/assembly/index.ts

Normal file


@@ -0,0 +1,18 @@

export {RouteCluster,
  K8sCluster,
  NacosCluster,
  ConsulCluster,
  FQDNCluster,
  StaticIpCluster} from "./cluster_wrapper"
export {HttpClient,
  ClusterClient} from "./http_wrapper"
export {Log} from "./log_wrapper"
export {SetCtx,
  HttpContext,
  ParseConfigBy,
  ProcessRequestBodyBy,
  ProcessRequestHeadersBy,
  ProcessResponseBodyBy,
  ProcessResponseHeadersBy,
  Logger, RegisteTickFunc} from "./plugin_wrapper"
export {ParseResult} from "./rule_matcher"

66

plugins/wasm-assemblyscript/assembly/log_wrapper.ts

Normal file


@@ -0,0 +1,66 @@

import { log, LogLevelValues } from "@higress/proxy-wasm-assemblyscript-sdk/assembly";

enum LogLevel {
  Trace = 0,
  Debug,
  Info,
  Warn,
  Error,
  Critical,
}

export class Log {
  private pluginName: string;

  constructor(pluginName: string) {
    this.pluginName = pluginName;
  }

  private log(level: LogLevel, msg: string): void {
    let formattedMsg = `[${this.pluginName}] ${msg}`;
    switch (level) {
      case LogLevel.Trace:
        log(LogLevelValues.trace, formattedMsg);
        break;
      case LogLevel.Debug:
        log(LogLevelValues.debug, formattedMsg);
        break;
      case LogLevel.Info:
        log(LogLevelValues.info, formattedMsg);
        break;
      case LogLevel.Warn:
        log(LogLevelValues.warn, formattedMsg);
        break;
      case LogLevel.Error:
        log(LogLevelValues.error, formattedMsg);
        break;
      case LogLevel.Critical:
        log(LogLevelValues.critical, formattedMsg);
        break;
    }
  }

  public Trace(msg: string): void {
    this.log(LogLevel.Trace, msg);
  }

  public Debug(msg: string): void {
    this.log(LogLevel.Debug, msg);
  }

  public Info(msg: string): void {
    this.log(LogLevel.Info, msg);
  }

  public Warn(msg: string): void {
    this.log(LogLevel.Warn, msg);
  }

  public Error(msg: string): void {
    this.log(LogLevel.Error, msg);
  }

  public Critical(msg: string): void {
    this.log(LogLevel.Critical, msg);
  }
}

445

plugins/wasm-assemblyscript/assembly/plugin_wrapper.ts

Normal file


@@ -0,0 +1,445 @@

import { Log } from "./log_wrapper";
import {
  Context,
  FilterHeadersStatusValues,
  RootContext,
  setRootContext,
  proxy_set_effective_context,
  log,
  LogLevelValues,
  FilterDataStatusValues,
  get_buffer_bytes,
  BufferTypeValues,
  set_tick_period_milliseconds,
  get_current_time_nanoseconds
} from "@higress/proxy-wasm-assemblyscript-sdk/assembly";
import {
  getRequestHost,
  getRequestMethod,
  getRequestPath,
  getRequestScheme,
  isBinaryRequestBody,
} from "./request_wrapper";
import { RuleMatcher, ParseResult } from "./rule_matcher";
import { JSON } from "assemblyscript-json/assembly";

export function SetCtx<PluginConfig>(
  pluginName: string,
  setFuncs: usize[] = []
): void {
  const rootContextId = 1
  setRootContext(new CommonRootCtx<PluginConfig>(rootContextId, pluginName, setFuncs));
}

export interface HttpContext {
  Scheme(): string;
  Host(): string;
  Path(): string;
  Method(): string;
  SetContext(key: string, value: usize): void;
  GetContext(key: string): usize;
  DontReadRequestBody(): void;
  DontReadResponseBody(): void;
}

type ParseConfigFunc<PluginConfig> = (
  json: JSON.Obj,
) => ParseResult<PluginConfig>;
type OnHttpHeadersFunc<PluginConfig> = (
  context: HttpContext,
  config: PluginConfig,
) => FilterHeadersStatusValues;
type OnHttpBodyFunc<PluginConfig> = (
  context: HttpContext,
  config: PluginConfig,
  body: ArrayBuffer,
) => FilterDataStatusValues;


export var Logger: Log = new Log("");

class CommonRootCtx<PluginConfig> extends RootContext {
  pluginName: string;
  hasCustomConfig: boolean;
  ruleMatcher: RuleMatcher<PluginConfig>;
  parseConfig: ParseConfigFunc<PluginConfig> | null;
  onHttpRequestHeaders: OnHttpHeadersFunc<PluginConfig> | null;
  onHttpRequestBody: OnHttpBodyFunc<PluginConfig> | null;
  onHttpResponseHeaders: OnHttpHeadersFunc<PluginConfig> | null;
  onHttpResponseBody: OnHttpBodyFunc<PluginConfig> | null;
  onTickFuncs: Array<TickFuncEntry>;

  constructor(context_id: u32, pluginName: string, setFuncs: usize[]) {
    super(context_id);
    this.pluginName = pluginName;
    Logger = new Log(pluginName);
    this.hasCustomConfig = true;
    this.onHttpRequestHeaders = null;
    this.onHttpRequestBody = null;
    this.onHttpResponseHeaders = null;
    this.onHttpResponseBody = null;
    this.parseConfig = null;
    this.ruleMatcher = new RuleMatcher<PluginConfig>();
    this.onTickFuncs = new Array<TickFuncEntry>();
    for (let i = 0; i < setFuncs.length; i++) {
      changetype<Closure<PluginConfig>>(setFuncs[i]).lambdaFn(
        setFuncs[i],
        this
      );
    }
    if (this.parseConfig == null) {
      this.hasCustomConfig = false;
      this.parseConfig = (json: JSON.Obj): ParseResult<PluginConfig> =>{ return new ParseResult<PluginConfig>(null, true); };
    }
  }

  createContext(context_id: u32): Context {
    return new CommonCtx<PluginConfig>(context_id, this);
  }

  onConfigure(configuration_size: u32): boolean {
    super.onConfigure(configuration_size);
    const data = this.getConfiguration();
    let jsonData: JSON.Obj = new JSON.Obj();
    if (data == "{}") {
      if (this.hasCustomConfig) {
        log(LogLevelValues.warn, "config is empty, but has ParseConfigFunc");
      }
    } else {
      const parseData = JSON.parse(data);
      if (parseData.isObj) {
        jsonData = changetype<JSON.Obj>(JSON.parse(data));
      } else {
        log(LogLevelValues.error, "parse json data failed")
        return false;
      }
    }

    if (!this.ruleMatcher.parseRuleConfig(jsonData, this.parseConfig as ParseConfigFunc<PluginConfig>)) {
      return false;
    }

    if (globalOnTickFuncs.length > 0) {
      this.onTickFuncs = globalOnTickFuncs;
      set_tick_period_milliseconds(100);
    }
    return true;
  }

  onTick(): void {
    for (let i = 0; i < this.onTickFuncs.length; i++) {
      const tickFuncEntry = this.onTickFuncs[i];
      const now = getCurrentTimeMilliseconds();
      if (tickFuncEntry.lastExecuted + tickFuncEntry.tickPeriod <= now) {
        tickFuncEntry.tickFunc();
        tickFuncEntry.lastExecuted = getCurrentTimeMilliseconds();
      }
    }
  }
}

function getCurrentTimeMilliseconds(): u64 {
  return get_current_time_nanoseconds() / 1000000;
}

class TickFuncEntry {
  lastExecuted: u64;
  tickPeriod: u64;
  tickFunc: () => void;

  constructor(lastExecuted: u64, tickPeriod: u64, tickFunc: () => void) {
    this.lastExecuted = lastExecuted;
    this.tickPeriod = tickPeriod;
    this.tickFunc = tickFunc;
  }
}

var globalOnTickFuncs = new Array<TickFuncEntry>();

export function RegisteTickFunc(tickPeriod: i64, tickFunc: () => void): void {
  globalOnTickFuncs.push(new TickFuncEntry(0, tickPeriod, tickFunc));
}

class Closure<PluginConfig> {
  lambdaFn: (closure: usize, ctx: CommonRootCtx<PluginConfig>) => void;
  parseConfigFunc: ParseConfigFunc<PluginConfig> | null;
  onHttpHeadersFunc: OnHttpHeadersFunc<PluginConfig> | null;
  OnHttpBodyFunc: OnHttpBodyFunc<PluginConfig> | null;

  constructor(
    lambdaFn: (closure: usize, ctx: CommonRootCtx<PluginConfig>) => void
  ) {
    this.lambdaFn = lambdaFn;
    this.parseConfigFunc = null;
    this.onHttpHeadersFunc = null;
    this.OnHttpBodyFunc = null;
  }

  setParseConfigFunc(f: ParseConfigFunc<PluginConfig>): void {
    this.parseConfigFunc = f;
  }

  setHttpHeadersFunc(f: OnHttpHeadersFunc<PluginConfig>): void {
    this.onHttpHeadersFunc = f;
  }

  setHttpBodyFunc(f: OnHttpBodyFunc<PluginConfig>): void {
    this.OnHttpBodyFunc = f;
  }
}

export function ParseConfigBy<PluginConfig>(
  f: ParseConfigFunc<PluginConfig>
): usize {
  const lambdaFn = function (
    closure: usize,
    ctx: CommonRootCtx<PluginConfig>
  ): void {
    const f = changetype<Closure<PluginConfig>>(closure).parseConfigFunc;
    if (f != null) {
      ctx.parseConfig = f;
    }
  };
  const closure = new Closure<PluginConfig>(lambdaFn);
  closure.setParseConfigFunc(f);
  return changetype<usize>(closure);
}

export function ProcessRequestHeadersBy<PluginConfig>(
  f: OnHttpHeadersFunc<PluginConfig>
): usize {
  const lambdaFn = function (
    closure: usize,
    ctx: CommonRootCtx<PluginConfig>
  ): void {
    const f = changetype<Closure<PluginConfig>>(closure).onHttpHeadersFunc;
    if (f != null) {
      ctx.onHttpRequestHeaders = f;
    }
  };
  const closure = new Closure<PluginConfig>(lambdaFn);
  closure.setHttpHeadersFunc(f);
  return changetype<usize>(closure);
}

export function ProcessRequestBodyBy<PluginConfig>(
  f: OnHttpBodyFunc<PluginConfig>
): usize {
  const lambdaFn = function (
    closure: usize,
    ctx: CommonRootCtx<PluginConfig>
  ): void {
    const f = changetype<Closure<PluginConfig>>(closure).OnHttpBodyFunc;
    if (f != null) {
      ctx.onHttpRequestBody = f;
    }
  };
  const closure = new Closure<PluginConfig>(lambdaFn);
  closure.setHttpBodyFunc(f);
  return changetype<usize>(closure);
}

export function ProcessResponseHeadersBy<PluginConfig>(
  f: OnHttpHeadersFunc<PluginConfig>
): usize {
  const lambdaFn = function (
    closure: usize,
    ctx: CommonRootCtx<PluginConfig>
  ): void {
    const f = changetype<Closure<PluginConfig>>(closure).onHttpHeadersFunc;
    if (f != null) {
      ctx.onHttpResponseHeaders = f;
    }
  };
  const closure = new Closure<PluginConfig>(lambdaFn);
  closure.setHttpHeadersFunc(f);
  return changetype<usize>(closure);
}

export function ProcessResponseBodyBy<PluginConfig>(
  f: OnHttpBodyFunc<PluginConfig>
): usize {
  const lambdaFn = function (
    closure: usize,
    ctx: CommonRootCtx<PluginConfig>
  ): void {
    const f = changetype<Closure<PluginConfig>>(closure).OnHttpBodyFunc;
    if (f != null) {
      ctx.onHttpResponseBody = f;
    }
  };