mirror of

https://github.com/alibaba/higress.git

synced 2026-02-26 05:30:50 +08:00

Compare commits

56 Commits

| SHA1 |
|---|
| ce298054f1 |
| 24c69fb0b7 |
| a38be77b9e |
| 27999dcc59 |
| 811179a6a0 |
| 5f43dd0224 |
| e23ab3ca7c |
| 032a69556f |
| ee6bb11730 |
| fc600f204a |
| 357418853f |
| e8586cccd7 |
| d55b9a0837 |
| 4f04ac067b |
| c7028bd7f2 |
| 95ff52cde9 |
| 7c7205b572 |
| f342f50ca4 |
| 659d136bfe |
| 541e5e206f |
| 387c337654 |
| 8024a96881 |
| f71c1900a8 |
| 1199946d36 |
| b1571de6f0 |
| 20dae295a8 |
| 9a1f9e4606 |
| 6f4ef33590 |
| fef8ecc822 |
| 0ade9504be |
| 6311fecfce |
| 5c225de080 |
| bf9ef5eefd |
| 26f5737a80 |
| 50c1a5e78c |
| 647304eb45 |
| 0a7fc9f412 |
| c9253264ef |
| 8c80084ada |
| 9f5ee99c2d |
| 3770bd2f55 |
| 698a395e89 |
| 2c72767203 |
| bb3ac59834 |
| 6c1fe57034 |
| 5c5cc6ac90 |
| 265da8e4d6 |
| 119698eea4 |
| 18d20ca135 |
| 9978db2ac6 |
| 1582fa6ef9 |
| 2b49fd5b26 |
| 48433a6549 |
| 8ec48b3b85 |
| 32007d2ab8 |
| 27b088fc7e |

@@ -35,6 +35,7 @@ header:
- 'hgctl/pkg/manifests'
- 'pkg/ingress/kube/gateway/istio/testdata'
- 'release-notes/**'
- '.cursor/**'

comment: on-failure
dependency:

ADOPTERS.md (Normal file, 13 changes)

@@ -0,0 +1,13 @@
# Adopters of Higress

Below are the adopters of the Higress project. If you are using Higress in your organization, please add your name to the list by submitting a pull request: this will help foster the Higress community. Kindly ensure the list remains in alphabetical order.

| Organization | Contact (GitHub User Name) | Environment | Description of Use |
|---------------------------------------|----------------------------------------|--------------------------------------------|-----------------------------------------------------------------------|
| [antdigital](https://antdigital.com/) | [@Lovelcp](https://github.com/Lovelcp) | Production | Ingress Gateway, Microservice gateway, LLM Gateway, MCP Gateway |
| [kuaishou](https://ir.kuaishou.com/) | [@maplecap](https://github.com/maplecap) | Production | LLM Gateway |
| [Trip.com](https://www.trip.com/) | [@CH3CHO](https://github.com/CH3CHO) | Production | LLM Gateway, MCP Gateway |
| [vipshop](https://github.com/vipshop/) | [@firebook](https://github.com/firebook) | Production | LLM Gateway, MCP Gateway, Inference Gateway |
| [labring](https://github.com/labring/) | [@zzjin](https://github.com/zzjin) | Production | Ingress Gateway |
| < company name here> | < your github handle here > | <Production/Testing/Experimenting/etc> | <Ingress Gateway/Microservice gateway/LLM Gateway/MCP Gateway/Inference Gateway> |

@@ -146,7 +146,7 @@ docker-buildx-push: clean-env docker.higress-buildx
export PARENT_GIT_TAG:=$(shell cat VERSION)
export PARENT_GIT_REVISION:=$(TAG)

export ENVOY_PACKAGE_URL_PATTERN?=https://github.com/higress-group/proxy/releases/download/v2.2.0/envoy-symbol-ARCH.tar.gz
export ENVOY_PACKAGE_URL_PATTERN?=https://github.com/higress-group/proxy/releases/download/v2.1.10/envoy-symbol-ARCH.tar.gz

build-envoy: prebuild
./tools/hack/build-envoy.sh

15

README.md

15

README.md

@@ -45,7 +45,7 @@ Higress was born within Alibaba to solve the issues of Tengine reload affecting

|

||||

|

||||

You can click the button below to install the enterprise version of Higress:

|

||||

|

||||

[](https://www.aliyun.com/product/apigateway?spm=higress-github.topbar.0.0.0)

|

||||

[](https://www.aliyun.com/product/api-gateway?spm=higress-github.topbar.0.0.0)

|

||||

|

||||

|

||||

If you use open-source Higress and wish to obtain enterprise-level support, you can contact the project maintainer johnlanni's email: **zty98751@alibaba-inc.com** or social media accounts (WeChat ID: **nomadao**, DingTalk ID: **chengtanzty**). Please note **Higress** when adding as a friend :)

|

||||

@@ -82,6 +82,8 @@ Port descriptions:

|

||||

>

|

||||

> If you experience a timeout when pulling image from `higress-registry.cn-hangzhou.cr.aliyuncs.com`, you can try replacing it with the following docker registry mirror source:

|

||||

>

|

||||

> **North America**: `higress-registry.us-west-1.cr.aliyuncs.com`

|

||||

>

|

||||

> **Southeast Asia**: `higress-registry.ap-southeast-7.cr.aliyuncs.com`

|

||||

|

||||

For other installation methods such as Helm deployment under K8s, please refer to the official [Quick Start documentation](https://higress.io/en-us/docs/user/quickstart).

|

||||

@@ -117,7 +119,16 @@ If you are deploying on the cloud, it is recommended to use the [Enterprise Edit

|

||||

|

||||

Higress can function as a feature-rich ingress controller, which is compatible with many annotations of K8s' nginx ingress controller.

|

||||

|

||||

[Gateway API](https://gateway-api.sigs.k8s.io/) support is coming soon and will support smooth migration from Ingress API to Gateway API.

|

||||

[Gateway API](https://gateway-api.sigs.k8s.io/) is already supported, and it supports a smooth migration from Ingress API to Gateway API.

|

||||

|

||||

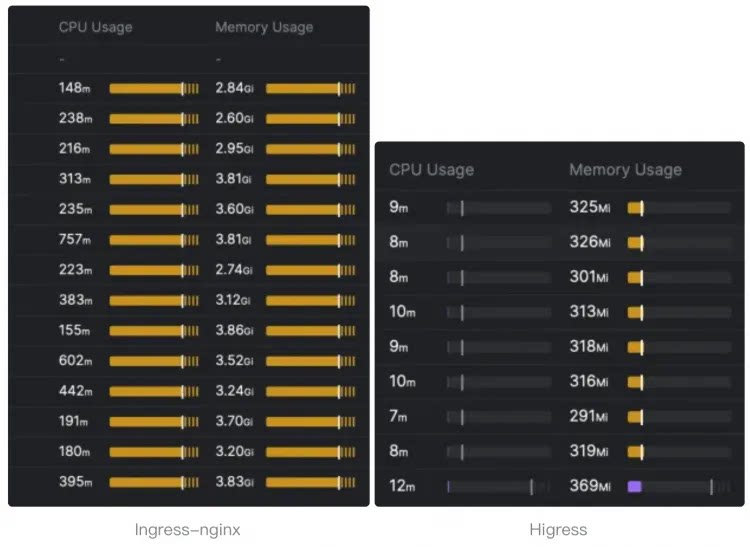

Compared to ingress-nginx, the resource overhead has significantly decreased, and the speed at which route changes take effect has improved by ten times.

|

||||

|

||||

> The following resource overhead comparison comes from [sealos](https://github.com/labring).

|

||||

>

|

||||

> For details, you can read this [article](https://sealos.io/blog/sealos-envoy-vs-nginx-2000-tenants) to understand how sealos migrates the monitoring of **tens of thousands of ingress** resources from nginx ingress to higress.

|

||||

|

||||

|

||||

|

||||

|

||||

- **Microservice gateway**:

|

||||

|

||||

|

||||

Submodule envoy/envoy updated: 3fe314c698...0961b00718

@@ -1,5 +1,5 @@
apiVersion: v2
appVersion: 2.1.9
appVersion: 2.1.10
description: Helm chart for deploying higress gateways
icon: https://higress.io/img/higress_logo_small.png
home: http://higress.io/

@@ -15,4 +15,4 @@ dependencies:
repository: "file://../redis"
version: 0.0.1
type: application
version: 2.1.9
version: 2.1.10

@@ -3,7 +3,8 @@
# Declare variables to be passed into your templates.
global:
# -- Specify the image registry and pull policy
hub: higress-registry.cn-hangzhou.cr.aliyuncs.com/higress
# Will inherit from parent chart's global.hub if not set
hub: ""
# -- Specify image pull policy if default behavior isn't desired.
# Default behavior: latest images will be Always else IfNotPresent.
imagePullPolicy: ""

@@ -71,6 +71,11 @@ spec:
items:
type: string
type: array
routeType:
enum:
- HTTP
- GRPC
type: string
service:
items:
type: string

@@ -203,7 +203,7 @@ template:
{{- if $o11y.enabled }}
{{- $config := $o11y.promtail }}
- name: promtail
image: {{ $config.image.repository }}:{{ $config.image.tag }}
image: {{ $config.image.repository | default (printf "%s/promtail" .Values.global.hub) }}:{{ $config.image.tag }}
imagePullPolicy: IfNotPresent
args:
- -config.file=/etc/promtail/promtail.yaml
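
With the component `hub` values now defaulting to `""`, the promtail template falls back to `<global.hub>/promtail` through Helm's `default` function whenever the promtail repository is empty. A minimal Go sketch of that rule, assuming it is simply "use the explicit repository if set, otherwise derive it from `global.hub`"; `resolveImage` is a hypothetical helper for illustration, not part of the chart or of Helm:

```go
package main

import "fmt"

// resolveImage is a hypothetical helper mirroring the template expression
//   {{ $config.image.repository | default (printf "%s/promtail" .Values.global.hub) }}:{{ $config.image.tag }}
// Helm's `default` substitutes the fallback when the value is empty, so an
// empty repository becomes "<globalHub>/<name>".
func resolveImage(repository, globalHub, name, tag string) string {
	if repository == "" {
		repository = fmt.Sprintf("%s/%s", globalHub, name)
	}
	return repository + ":" + tag
}

func main() {
	hub := "higress-registry.cn-hangzhou.cr.aliyuncs.com/higress"
	// repository left as "" in values.yaml: inherits global.hub
	fmt.Println(resolveImage("", hub, "promtail", "2.9.4"))
	// an explicit repository override always wins
	fmt.Println(resolveImage("my-registry.example.com/promtail", hub, "promtail", "2.9.4"))
}
```

The same pattern lets a single `global.hub` override (for example one of the regional mirrors listed in the README) redirect every image without editing each component's `hub`.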

@@ -250,6 +250,10 @@ template:
tolerations:
{{- toYaml . | nindent 6 }}
{{- end }}
{{- with .Values.gateway.topologySpreadConstraints }}
topologySpreadConstraints:
{{- toYaml . | nindent 6 }}
{{- end }}
volumes:
- emptyDir: {}
name: workload-socket

@@ -289,6 +289,10 @@ spec:
tolerations:
{{- toYaml . | nindent 8 }}
{{- end }}
{{- with .Values.controller.topologySpreadConstraints }}
topologySpreadConstraints:
{{- toYaml . | nindent 8 }}
{{- end }}
volumes:
- name: log
emptyDir: {}

@@ -5,6 +5,9 @@ metadata:
namespace: {{ .Release.Namespace }}
labels:
{{- include "gateway.labels" . | nindent 4}}
{{- with .Values.gateway.metrics.podMonitorSelector }}
{{- toYaml . | nindent 4 }}
{{- end }}
annotations:
{{- .Values.gateway.annotations | toYaml | nindent 4 }}
spec:

@@ -24,9 +24,6 @@ spec:
{{- end }}
{{- with .Values.gateway.service.externalTrafficPolicy }}
externalTrafficPolicy: "{{ . }}"
{{- end }}
{{- with .Values.gateway.service.loadBalancerClass}}
loadBalancerClass: "{{ . }}"
{{- end }}
type: {{ .Values.gateway.service.type }}
ports:

@@ -362,7 +362,7 @@ global:
enabled: false
promtail:
image:
repository: higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/promtail
repository: "" # Will use global.hub if not set
tag: 2.9.4
port: 3101
resources:

@@ -377,7 +377,7 @@ global:
# The default value is "" and when caName="", the CA will be configured by other
# mechanisms (e.g., environmental variable CA_PROVIDER).
caName: ""
hub: higress-registry.cn-hangzhou.cr.aliyuncs.com/higress
hub: "" # Will use global.hub if not set

clusterName: ""
# -- meshConfig defines runtime configuration of components, including Istiod and istio-agent behavior

@@ -433,7 +433,7 @@ gateway:
# -- The readiness timeout seconds
readinessTimeoutSeconds: 3

hub: higress-registry.cn-hangzhou.cr.aliyuncs.com/higress
hub: "" # Will use global.hub if not set
tag: ""
# -- revision declares which revision this gateway is a part of
revision: ""

@@ -522,12 +522,19 @@ gateway:

affinity: {}

topologySpreadConstraints: []

# -- If specified, the gateway will act as a network gateway for the given network.
networkGateway: ""

metrics:
# -- If true, create PodMonitor or VMPodScrape for gateway
enabled: false
# -- Selector for PodMonitor
# When using monitoring.coreos.com/v1.PodMonitor, the selector must match
# the label "release: kube-prome" is the default for kube-prometheus-stack
podMonitorSelector:
release: kube-prome
# -- provider group name for CustomResourceDefinition, can be monitoring.coreos.com or operator.victoriametrics.com
provider: monitoring.coreos.com
interval: ""

@@ -548,7 +555,7 @@ controller:
replicas: 1
image: higress

hub: higress-registry.cn-hangzhou.cr.aliyuncs.com/higress
hub: "" # Will use global.hub if not set
tag: ""
env: {}

@@ -624,6 +631,8 @@ controller:

affinity: {}

topologySpreadConstraints: []

autoscaling:
enabled: false
minReplicas: 1

@@ -642,7 +651,7 @@ pilot:
rollingMaxSurge: 100%
rollingMaxUnavailable: 25%

hub: higress-registry.cn-hangzhou.cr.aliyuncs.com/higress
hub: "" # Will use global.hub if not set
tag: ""

# -- Can be a full hub/image:tag

@@ -795,7 +804,7 @@ pluginServer:
replicas: 2
image: plugin-server

hub: higress-registry.cn-hangzhou.cr.aliyuncs.com/higress
hub: "" # Will use global.hub if not set
tag: ""

imagePullSecrets: []

@@ -1,9 +1,9 @@
dependencies:
- name: higress-core
repository: file://../core
version: 2.1.9
version: 2.1.10
- name: higress-console
repository: https://higress.io/helm-charts/
version: 2.1.9
digest: sha256:d696af6726b40219cc16e7cf8de7400101479dfbd8deb3101d7ee736415b9875
generated: "2025-11-13T16:33:49.721553+08:00"
digest: sha256:fbb896461a8bdc1d5a4f8403253a59497b3b7a13909e9b92a4f3ce3f4f8d999d
generated: "2026-02-03T16:05:30.300315+08:00"

@@ -1,5 +1,5 @@
apiVersion: v2
appVersion: 2.1.9
appVersion: 2.1.10
description: Helm chart for deploying Higress gateways
icon: https://higress.io/img/higress_logo_small.png
home: http://higress.io/

@@ -12,9 +12,9 @@ sources:
dependencies:
- name: higress-core
repository: "file://../core"
version: 2.1.9
version: 2.1.10
- name: higress-console
repository: "https://higress.io/helm-charts/"
version: 2.1.9
type: application
version: 2.1.9
version: 2.1.10

@@ -44,7 +44,7 @@ The command removes all the Kubernetes components associated with the chart and
| controller.autoscaling.minReplicas | int | `1` | |
| controller.autoscaling.targetCPUUtilizationPercentage | int | `80` | |
| controller.env | object | `{}` | |
| controller.hub | string | `"higress-registry.cn-hangzhou.cr.aliyuncs.com/higress"` | |
| controller.hub | string | `""` | |
| controller.image | string | `"higress"` | |
| controller.imagePullSecrets | list | `[]` | |
| controller.labels | object | `{}` | |

@@ -83,6 +83,7 @@ The command removes all the Kubernetes components associated with the chart and
| controller.serviceAccount.name | string | `""` | If not set and create is true, a name is generated using the fullname template |
| controller.tag | string | `""` | |
| controller.tolerations | list | `[]` | |
| controller.topologySpreadConstraints | list | `[]` | |
| downstream | object | `{"connectionBufferLimits":32768,"http2":{"initialConnectionWindowSize":1048576,"initialStreamWindowSize":65535,"maxConcurrentStreams":100},"idleTimeout":180,"maxRequestHeadersKb":60,"routeTimeout":0}` | Downstream config settings |
| gateway.affinity | object | `{}` | |
| gateway.annotations | object | `{}` | Annotations to apply to all resources |

@@ -95,7 +96,7 @@ The command removes all the Kubernetes components associated with the chart and
| gateway.hostNetwork | bool | `false` | |
| gateway.httpPort | int | `80` | |
| gateway.httpsPort | int | `443` | |
| gateway.hub | string | `"higress-registry.cn-hangzhou.cr.aliyuncs.com/higress"` | |
| gateway.hub | string | `""` | |
| gateway.image | string | `"gateway"` | |
| gateway.kind | string | `"Deployment"` | Use a `DaemonSet` or `Deployment` |
| gateway.labels | object | `{}` | Labels to apply to all resources |

@@ -104,6 +105,7 @@ The command removes all the Kubernetes components associated with the chart and
| gateway.metrics.interval | string | `""` | |
| gateway.metrics.metricRelabelConfigs | list | `[]` | for operator.victoriametrics.com/v1beta1.VMPodScrape |
| gateway.metrics.metricRelabelings | list | `[]` | for monitoring.coreos.com/v1.PodMonitor |
| gateway.metrics.podMonitorSelector | object | `{"release":"kube-prome"}` | Selector for PodMonitor When using monitoring.coreos.com/v1.PodMonitor, the selector must match the label "release: kube-prome" is the default for kube-prometheus-stack |
| gateway.metrics.provider | string | `"monitoring.coreos.com"` | provider group name for CustomResourceDefinition, can be monitoring.coreos.com or operator.victoriametrics.com |
| gateway.metrics.rawSpec | object | `{}` | some more raw podMetricsEndpoints spec |
| gateway.metrics.relabelConfigs | list | `[]` | |

@@ -151,6 +153,7 @@ The command removes all the Kubernetes components associated with the chart and
| gateway.serviceAccount.name | string | `""` | The name of the service account to use. If not set, the release name is used |
| gateway.tag | string | `""` | |
| gateway.tolerations | list | `[]` | |
| gateway.topologySpreadConstraints | list | `[]` | |
| gateway.unprivilegedPortSupported | string | `nil` | |
| global.autoscalingv2API | bool | `true` | whether to use autoscaling/v2 template for HPA settings for internal usage only, not to be configured by users. |
| global.caAddress | string | `""` | The customized CA address to retrieve certificates for the pods in the cluster. CSR clients such as the Istio Agent and ingress gateways can use this to specify the CA endpoint. If not set explicitly, default to the Istio discovery address. |

@@ -191,7 +194,7 @@ The command removes all the Kubernetes components associated with the chart and
| global.multiCluster.clusterName | string | `""` | Should be set to the name of the cluster this installation will run in. This is required for sidecar injection to properly label proxies |
| global.multiCluster.enabled | bool | `true` | Set to true to connect two kubernetes clusters via their respective ingressgateway services when pods in each cluster cannot directly talk to one another. All clusters should be using Istio mTLS and must have a shared root CA for this model to work. |
| global.network | string | `""` | Network defines the network this cluster belong to. This name corresponds to the networks in the map of mesh networks. |
| global.o11y | object | `{"enabled":false,"promtail":{"image":{"repository":"higress-registry.cn-hangzhou.cr.aliyuncs.com/higress/promtail","tag":"2.9.4"},"port":3101,"resources":{"limits":{"cpu":"500m","memory":"2Gi"}},"securityContext":{}}}` | Observability (o11y) configurations |
| global.o11y | object | `{"enabled":false,"promtail":{"image":{"repository":"","tag":"2.9.4"},"port":3101,"resources":{"limits":{"cpu":"500m","memory":"2Gi"}},"securityContext":{}}}` | Observability (o11y) configurations |
| global.omitSidecarInjectorConfigMap | bool | `false` | |
| global.onDemandRDS | bool | `false` | |
| global.oneNamespace | bool | `false` | Whether to restrict the applications namespace the controller manages; If not set, controller watches all namespaces |

@@ -243,7 +246,7 @@ The command removes all the Kubernetes components associated with the chart and
| global.watchNamespace | string | `""` | If not empty, Higress Controller will only watch resources in the specified namespace. When isolating different business systems using K8s namespace, if each namespace requires a standalone gateway instance, this parameter can be used to confine the Ingress watching of Higress within the given namespace. |
| global.xdsMaxRecvMsgSize | string | `"104857600"` | |
| gzip | object | `{"chunkSize":4096,"compressionLevel":"BEST_COMPRESSION","compressionStrategy":"DEFAULT_STRATEGY","contentType":["text/html","text/css","text/plain","text/xml","application/json","application/javascript","application/xhtml+xml","image/svg+xml"],"disableOnEtagHeader":true,"enable":true,"memoryLevel":5,"minContentLength":1024,"windowBits":12}` | Gzip compression settings |
| hub | string | `"higress-registry.cn-hangzhou.cr.aliyuncs.com/higress"` | |
| hub | string | `""` | |
| meshConfig | object | `{"enablePrometheusMerge":true,"rootNamespace":null,"trustDomain":"cluster.local"}` | meshConfig defines runtime configuration of components, including Istiod and istio-agent behavior See https://istio.io/docs/reference/config/istio.mesh.v1alpha1/ for all available options |
| meshConfig.rootNamespace | string | `nil` | The namespace to treat as the administrative root namespace for Istio configuration. When processing a leaf namespace Istio will search for declarations in that namespace first and if none are found it will search in the root namespace. Any matching declaration found in the root namespace is processed as if it were declared in the leaf namespace. |
| meshConfig.trustDomain | string | `"cluster.local"` | The trust domain corresponds to the trust root of a system Refer to https://github.com/spiffe/spiffe/blob/master/standards/SPIFFE-ID.md#21-trust-domain |

@@ -260,7 +263,7 @@ The command removes all the Kubernetes components associated with the chart and
| pilot.env.PILOT_ENABLE_METADATA_EXCHANGE | string | `"false"` | |
| pilot.env.PILOT_SCOPE_GATEWAY_TO_NAMESPACE | string | `"false"` | |
| pilot.env.VALIDATION_ENABLED | string | `"false"` | |
| pilot.hub | string | `"higress-registry.cn-hangzhou.cr.aliyuncs.com/higress"` | |
| pilot.hub | string | `""` | |
| pilot.image | string | `"pilot"` | Can be a full hub/image:tag |
| pilot.jwksResolverExtraRootCA | string | `""` | You can use jwksResolverExtraRootCA to provide a root certificate in PEM format. This will then be trusted by pilot when resolving JWKS URIs. |
| pilot.keepaliveMaxServerConnectionAge | string | `"30m"` | The following is used to limit how long a sidecar can be connected to a pilot. It balances out load across pilot instances at the cost of increasing system churn. |

@@ -275,7 +278,7 @@ The command removes all the Kubernetes components associated with the chart and
| pilot.serviceAnnotations | object | `{}` | |
| pilot.tag | string | `""` | |
| pilot.traceSampling | float | `1` | |
| pluginServer.hub | string | `"higress-registry.cn-hangzhou.cr.aliyuncs.com/higress"` | |
| pluginServer.hub | string | `""` | |
| pluginServer.image | string | `"plugin-server"` | |
| pluginServer.imagePullSecrets | list | `[]` | |
| pluginServer.labels | object | `{}` | |

@@ -112,6 +112,7 @@ helm delete higress -n higress-system
| gateway.metrics.rawSpec | object | `{}` | Additional metrics spec |
| gateway.metrics.relabelConfigs | list | `[]` | Relabel configs |
| gateway.metrics.relabelings | list | `[]` | Relabelings |
| gateway.metrics.podMonitorSelector | object | `{"release":"kube-prometheus-stack"}` | PodMonitor selector. When using PodMonitor auto-discovery with the Prometheus stack, the selector must match the label "release: kube-prome", which is the kube-prometheus-stack default |
| gateway.metrics.scrapeTimeout | string | `""` | Scrape timeout |
| gateway.name | string | `"higress-gateway"` | Gateway name |
| gateway.networkGateway | string | `""` | Network gateway designation |

Submodule istio/istio updated: 3a661d92b0...017e7d8a4d

@@ -53,7 +53,6 @@ require (
github.com/cockroachdb/errors v1.9.1 // indirect
github.com/cockroachdb/logtags v0.0.0-20211118104740-dabe8e521a4f // indirect
github.com/cockroachdb/redact v1.1.3 // indirect
github.com/davecgh/go-spew v1.1.1 // indirect
github.com/deckarep/golang-set v1.7.1 // indirect
github.com/dlclark/regexp2 v1.11.5 // indirect
github.com/getsentry/sentry-go v0.12.0 // indirect

@@ -185,10 +185,7 @@ github.com/getsentry/sentry-go v0.12.0/go.mod h1:NSap0JBYWzHND8oMbyi0+XZhUalc1TB
github.com/gin-contrib/sse v0.0.0-20190301062529-5545eab6dad3/go.mod h1:VJ0WA2NBN22VlZ2dKZQPAPnyWw5XTlK1KymzLKsr59s=
github.com/gin-gonic/gin v1.4.0/go.mod h1:OW2EZn3DO8Ln9oIKOvM++LBO+5UPHJJDH72/q/3rZdM=
github.com/go-check/check v0.0.0-20180628173108-788fd7840127/go.mod h1:9ES+weclKsC9YodN5RgxqK/VD9HM9JsCSh7rNhMZE98=
github.com/go-errors/errors v1.0.1 h1:LUHzmkK3GUKUrL/1gfBUxAHzcev3apQlezX/+O7ma6w=
github.com/go-errors/errors v1.0.1/go.mod h1:f4zRHt4oKfwPJE5k8C9vpYG+aDHdBFUsgrm6/TyX73Q=
github.com/go-faker/faker/v4 v4.1.0 h1:ffuWmpDrducIUOO0QSKSF5Q2dxAht+dhsT9FvVHhPEI=
github.com/go-faker/faker/v4 v4.1.0/go.mod h1:uuNc0PSRxF8nMgjGrrrU4Nw5cF30Jc6Kd0/FUTTYbhg=
github.com/go-faster/city v1.0.1 h1:4WAxSZ3V2Ws4QRDrscLEDcibJY8uf41H6AhXDrNDcGw=
github.com/go-faster/city v1.0.1/go.mod h1:jKcUJId49qdW3L1qKHH/3wPeUstCVpVSXTM6vO3VcTw=
github.com/go-faster/errors v0.7.1 h1:MkJTnDoEdi9pDabt1dpWf7AA8/BaSYZqibYyhZ20AYg=

@@ -429,7 +426,6 @@ github.com/paulmach/protoscan v0.2.1/go.mod h1:SpcSwydNLrxUGSDvXvO0P7g7AuhJ7lcKf
github.com/pelletier/go-toml v1.2.0/go.mod h1:5z9KED0ma1S8pY6P1sdut58dfprrGBbd/94hg7ilaic=
github.com/pierrec/lz4/v4 v4.1.21 h1:yOVMLb6qSIDP67pl/5F7RepeKYu/VmTyEXvuMI5d9mQ=
github.com/pierrec/lz4/v4 v4.1.21/go.mod h1:gZWDp/Ze/IJXGXf23ltt2EXimqmTUXEy0GFuRQyBid4=
github.com/pingcap/errors v0.11.4 h1:lFuQV/oaUMGcD2tqt+01ROSmJs75VG1ToEOkZIZ4nE4=
github.com/pingcap/errors v0.11.4/go.mod h1:Oi8TUi2kEtXXLMJk9l1cGmz20kV3TaQ0usTwv5KuLY8=
github.com/pkg/diff v0.0.0-20210226163009-20ebb0f2a09e/go.mod h1:pJLUxLENpZxwdsKMEsNbx1VGcRFpLqf3715MtcvvzbA=
github.com/pkg/errors v0.8.0/go.mod h1:bwawxfHBFNV+L2hUp1rHADufV3IMtnDRdf1r5NINEl0=

@@ -8,6 +8,7 @@ import (
_ "github.com/alibaba/higress/plugins/golang-filter/mcp-server/servers/higress/higress-api"
_ "github.com/alibaba/higress/plugins/golang-filter/mcp-server/servers/higress/higress-ops"
_ "github.com/alibaba/higress/plugins/golang-filter/mcp-server/servers/rag"
_ "github.com/alibaba/higress/plugins/golang-filter/mcp-server/servers/tool-search"
mcp_session "github.com/alibaba/higress/plugins/golang-filter/mcp-session"
"github.com/alibaba/higress/plugins/golang-filter/mcp-session/common"
xds "github.com/cncf/xds/go/xds/type/v3"

plugins/golang-filter/mcp-server/servers/tool-search/README.md (Normal file, 144 changes)

@@ -0,0 +1,144 @@
# Tool Search MCP Server

This is an MCP Server built on the Higress Golang Filter that provides semantic search over tools. The current implementation **supports vector semantic search only** (backed by the Milvus vector database) and **does not include full-text or hybrid search**.

## Features

- **Vector semantic search**: converts the user query into a vector with an OpenAI-compatible Embedding API and runs a similarity search in Milvus
- **Tool metadata support**: reads complete tool definitions (JSON) from the database and builds the tool names dynamically
- **Full tool listing**: can return every tool available in the database
- **Configurable embedding model**: supports a custom model, dimensions, and API endpoint (e.g. DashScope)
- **Milvus integration**: connects to the Milvus vector database through its standard gRPC interface

## Database requirements (Milvus)

This service depends on the **Milvus vector database**. A collection must be created in advance, and its schema should contain the following fields:

| Field | Type | Description |
|--------------|-------------------|-------------------------|
| `id` | VarChar(64) | Unique document ID |
| `content` | VarChar(64) | Tool description text |
| `metadata` | JSON | Complete tool definition (must include a `name` field) |
| `vector` | FloatVector(1024) | Embedding vector |
| `metadata` | Int64 | Creation time |

## Configuration parameters

### Root-level configuration

| Parameter | Type | Required | Default | Description |
|--------------|--------|------|-----------------------------------------------------|------|
| `vector` | object | Yes | - | Vector database configuration (see below) |
| `embedding` | object | Yes | - | Embedding API configuration (see below) |
| `description`| string | No | `"Tool search server for semantic similarity search"` | MCP Server description |

### Vector configuration (`vector` object)

| Parameter | Type | Required | Default | Description |
|-------------|--------|------|--------------------|------|
| `type` | string | Yes | - | **Must be `"milvus"`** |
| `host` | string | Yes | - | Milvus server address (e.g. `localhost`) |
| `port` | int | Yes | - | Milvus gRPC port (e.g. `19530`) |
| `database` | string | No | `"default"` | Milvus database name |
| `tableName` | string | No | `"apig_mcp_tools"` | Milvus collection name |
| `username` | string | No | - | Authentication username (optional) |
| `password` | string | No | - | Authentication password (optional) |

### Embedding configuration (`embedding` object)

| Parameter | Type | Required | Default | Description |
|--------------|--------|------|-----------------------------------------------------------|------|
| `apiKey` | string | Yes | - | API key for the embedding service |
| `baseURL` | string | No | `https://dashscope.aliyuncs.com/compatible-mode/v1` | Base URL of the OpenAI-compatible API |
| `model` | string | No | `text-embedding-v4` | Embedding model to use |
| `dimensions` | int | No | `1024` | Vector dimensions |
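
The parameter tables above amount to a typed configuration with required fields and defaults. The Go sketch below shows one way to decode and validate it; the struct names and `parseConfig` are hypothetical, and the actual server may structure this differently. It assumes only the field names, required flags, and defaults listed in the tables. Keys not modeled here (such as `maxTools` or `vectorWeight`) are silently ignored by `encoding/json`.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// Hypothetical Go mirror of the documented tables; not the server's real types.
type VectorConfig struct {
	Type      string `json:"type"`
	Host      string `json:"host"`
	Port      int    `json:"port"`
	Database  string `json:"database"`
	TableName string `json:"tableName"`
	Username  string `json:"username"`
	Password  string `json:"password"`
}

type EmbeddingConfig struct {
	APIKey     string `json:"apiKey"`
	BaseURL    string `json:"baseURL"`
	Model      string `json:"model"`
	Dimensions int    `json:"dimensions"`
}

type ToolSearchConfig struct {
	Vector      VectorConfig    `json:"vector"`
	Embedding   EmbeddingConfig `json:"embedding"`
	Description string          `json:"description"`
}

// parseConfig decodes the JSON, rejects missing required fields, and fills in
// the documented defaults for every optional field left empty.
func parseConfig(raw []byte) (*ToolSearchConfig, error) {
	var cfg ToolSearchConfig
	if err := json.Unmarshal(raw, &cfg); err != nil {
		return nil, err
	}
	if cfg.Vector.Type != "milvus" {
		return nil, fmt.Errorf("vector.type must be \"milvus\", got %q", cfg.Vector.Type)
	}
	if cfg.Vector.Host == "" || cfg.Vector.Port == 0 {
		return nil, errors.New("vector.host and vector.port are required")
	}
	if cfg.Embedding.APIKey == "" {
		return nil, errors.New("embedding.apiKey is required")
	}
	if cfg.Vector.Database == "" {
		cfg.Vector.Database = "default"
	}
	if cfg.Vector.TableName == "" {
		cfg.Vector.TableName = "apig_mcp_tools"
	}
	if cfg.Embedding.BaseURL == "" {
		cfg.Embedding.BaseURL = "https://dashscope.aliyuncs.com/compatible-mode/v1"
	}
	if cfg.Embedding.Model == "" {
		cfg.Embedding.Model = "text-embedding-v4"
	}
	if cfg.Embedding.Dimensions == 0 {
		cfg.Embedding.Dimensions = 1024
	}
	if cfg.Description == "" {
		cfg.Description = "Tool search server for semantic similarity search"
	}
	return &cfg, nil
}

func main() {
	raw := []byte(`{"vector":{"type":"milvus","host":"localhost","port":19530},"embedding":{"apiKey":"your-dashscope-api-key"}}`)
	cfg, err := parseConfig(raw)
	if err != nil {
		panic(err)
	}
	fmt.Println(cfg.Vector.TableName, cfg.Embedding.Model, cfg.Embedding.Dimensions)
}
```

Keeping `dimensions` consistent with the `FloatVector(1024)` field in the collection schema matters: a mismatch between the embedding size and the collection's vector size would make inserts and searches fail on the Milvus side.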

## Configuration example

The Tool Search MCP Server can also be configured as a Higress module. The following example configures Tool Search in the Higress ConfigMap:

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: higress-config
  namespace: higress-system
data:
  higress: |
    mcpServer:
      enable: true
      sse_path_suffix: "/sse"
      redis:
        address: "<Redis IP>:6379"
        username: ""
        password: ""
        db: 0
      match_list:
        - path_rewrite_prefix: ""
          upstream_type: ""
          enable_path_rewrite: false
          match_rule_domain: "*"
          match_rule_path: "/mcp-servers/tool-search"
          match_rule_type: "prefix"
      servers:
        - path: "/mcp-servers/tool-search"
          name: "tool-search"
          type: "tool-search"
          config:
            vector:
              type: "milvus"
              host: "localhost"
              port: 19530
              database: "default"
              tableName: "apig_mcp_tools"
              username: "root"
              password: "Milvus"
              maxTools: 1000
            embedding:
              apiKey: "your-dashscope-api-key"
              baseURL: "https://dashscope.aliyuncs.com/compatible-mode/v1"
              model: "text-embedding-v4"
              dimensions: 1024
            description: "Higress tool semantic search service"
```

## Tool search interface

The Tool Search MCP Server provides the following MCP tool:

### x_higress_tool_search

Searches for the tools most relevant to a query by semantic similarity.

**Input parameters**:

| Parameter | Type | Required | Description |
|---------|--------|------|------|
| `query` | string | Yes | Query text, compared against tool descriptions by semantic similarity |
| `topK` | int | No | Number of tools to return; defaults to the top 10 |

**Output format**:

```
{
  "tools": [
    {
      "name": "server_name___tool_name",
      "title": "Tool Title",
      "description": "Tool description",
      "inputSchema": {...},
      "outputSchema": {...}
    }
  ]
}
```
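
The sample output names tools as `server_name___tool_name`, and the feature list says names are built dynamically from the stored metadata. A minimal sketch, assuming the separator is exactly three underscores and that the server name is known when the result is assembled; `buildToolName` is a hypothetical helper, not the server's actual function:

```go
package main

import (
	"encoding/json"
	"fmt"
)

// toolSeparator is assumed from the "server_name___tool_name" sample output.
const toolSeparator = "___"

// buildToolName reads the required "name" field from a tool's metadata JSON
// and prefixes it with the server name.
func buildToolName(serverName string, metadata []byte) (string, error) {
	var meta struct {
		Name string `json:"name"`
	}
	if err := json.Unmarshal(metadata, &meta); err != nil {
		return "", err
	}
	if meta.Name == "" {
		return "", fmt.Errorf("metadata has no \"name\" field")
	}
	return serverName + toolSeparator + meta.Name, nil
}

func main() {
	name, err := buildToolName("weather", []byte(`{"name":"get_forecast","description":"Get a forecast"}`))
	if err != nil {
		panic(err)
	}
	fmt.Println(name) // weather___get_forecast
}
```

This is also why the schema table insists that `metadata` contain a `name` field: without it, no callable tool name can be produced.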

## Search implementation

Search is performed by vector similarity, with the following index configuration:

- Vectors are indexed with the HNSW algorithm
- Default parameters: M=8, efConstruction=64
- Similarity metric: inner product (IP)
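
To illustrate the ranking produced by the inner-product metric, here is an exact brute-force top-K scan in Go. This is plainly not HNSW: Milvus answers the same query approximately by walking the HNSW graph (built with M=8, efConstruction=64), while this sketch scores every vector, which is only practical for small collections. The `Tool` type and `searchTopK` are hypothetical; the default of 10 mirrors the documented `topK` default.

```go
package main

import (
	"fmt"
	"sort"
)

// Tool is a hypothetical stand-in for one row of the Milvus collection.
type Tool struct {
	Name   string
	Vector []float32
}

type scored struct {
	Name  string
	Score float32
}

// innerProduct is the IP metric: a larger value means more similar.
// Only the shared prefix is used if lengths differ; real vectors should all
// have the configured number of dimensions.
func innerProduct(a, b []float32) float32 {
	n := len(a)
	if len(b) < n {
		n = len(b)
	}
	var s float32
	for i := 0; i < n; i++ {
		s += a[i] * b[i]
	}
	return s
}

// searchTopK scores every tool against the query and keeps the topK highest
// scores (10 when topK <= 0). Exact search, not an approximate index.
func searchTopK(query []float32, tools []Tool, topK int) []scored {
	if topK <= 0 {
		topK = 10
	}
	results := make([]scored, 0, len(tools))
	for _, t := range tools {
		results = append(results, scored{t.Name, innerProduct(query, t.Vector)})
	}
	sort.SliceStable(results, func(i, j int) bool { return results[i].Score > results[j].Score })
	if len(results) > topK {
		results = results[:topK]
	}
	return results
}

func main() {
	tools := []Tool{
		{"weather___get_forecast", []float32{0.9, 0.1, 0.0}},
		{"maps___route", []float32{0.1, 0.9, 0.0}},
		{"mail___send", []float32{0.0, 0.2, 0.9}},
	}
	query := []float32{1, 0, 0}
	for _, r := range searchTopK(query, tools, 2) {
		fmt.Printf("%s %.2f\n", r.Name, r.Score)
	}
	// weather___get_forecast 0.90
	// maps___route 0.10
}
```

Inner product ranks like cosine similarity only when the vectors are normalized; whether the configured embedding model returns normalized vectors is not stated here and is worth checking before relying on absolute scores.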

@@ -0,0 +1,18 @@
{
  "vector": {
    "type": "milvus",
    "vectorWeight": 0.5,
    "tableName": "apig_mcp_tools",
    "host": "localhost",
    "port": 19530,
    "database": "default",
    "username": "root",
    "password": "Milvus"
  },
  "embedding": {
    "apiKey": "your-dashscope-api-key",
    "baseURL": "https://dashscope.aliyuncs.com/compatible-mode/v1",
    "model": "text-embedding-v4",
    "dimensions": 1024
  }
}

@@ -0,0 +1,79 @@
package tool_search

import (
	"context"
	"fmt"
	"time"

	"github.com/envoyproxy/envoy/contrib/golang/common/go/api"
	"github.com/openai/openai-go/v2"
	"github.com/openai/openai-go/v2/option"
)

// EmbeddingClient handles vector embedding generation using OpenAI-compatible APIs
type EmbeddingClient struct {
	client     *openai.Client
	model      string
	dimensions int
}

// NewEmbeddingClient creates a new EmbeddingClient instance for OpenAI-compatible APIs
func NewEmbeddingClient(apiKey, baseURL, model string, dimensions int) *EmbeddingClient {
	api.LogInfof("Creating EmbeddingClient with baseURL: %s, model: %s, dimensions: %d", baseURL, model, dimensions)

	// Create client with a 30s per-request timeout
	client := openai.NewClient(
		option.WithAPIKey(apiKey),
		option.WithBaseURL(baseURL),
		option.WithRequestTimeout(30*time.Second),
	)

	return &EmbeddingClient{
		client:     &client,
		model:      model,
		dimensions: dimensions,
	}
}

// GetEmbedding generates vector embedding for the given text
func (e *EmbeddingClient) GetEmbedding(ctx context.Context, text string) ([]float32, error) {
	api.LogInfof("Generating embedding for text (length: %d)", len(text))
	api.LogDebugf("Using model: %s, dimensions: %d", e.model, e.dimensions)

	// Bound the call to 30s; a shorter deadline already set on ctx still applies
	ctx, cancel := context.WithTimeout(ctx, 30*time.Second)
	defer cancel()

	params := openai.EmbeddingNewParams{
		Model: e.model,
		Input: openai.EmbeddingNewParamsInputUnion{
			OfString: openai.String(text),
		},
		Dimensions:     openai.Int(int64(e.dimensions)),
		EncodingFormat: openai.EmbeddingNewParamsEncodingFormatFloat,
	}

	api.LogDebugf("Calling OpenAI-compatible API for embedding generation")
	embeddingResp, err := e.client.Embeddings.New(ctx, params)
	if err != nil {
		api.LogErrorf("OpenAI-compatible API call failed: %v", err)
		return nil, fmt.Errorf("failed to generate embedding: %w", err)
	}

	if len(embeddingResp.Data) == 0 {
		api.LogErrorf("Empty embedding response from API")
		return nil, fmt.Errorf("empty embedding response")
	}

	api.LogDebugf("Successfully received embedding from API")
	api.LogDebugf("Response data length: %d, embedding dimension: %d", len(embeddingResp.Data), len(embeddingResp.Data[0].Embedding))

	// Convert []float64 to []float32
	embedding := make([]float32, len(embeddingResp.Data[0].Embedding))
	for i, v := range embeddingResp.Data[0].Embedding {
		embedding[i] = float32(v)
	}

	api.LogInfof("Embedding conversion completed, final dimension: %d", len(embedding))
	return embedding, nil
}

204
plugins/golang-filter/mcp-server/servers/tool-search/milvus.go
Normal file
@@ -0,0 +1,204 @@
package tool_search

import (
	"context"
	"encoding/json"
	"fmt"
	"time"

	"github.com/alibaba/higress/plugins/golang-filter/mcp-server/servers/rag/config"
	"github.com/alibaba/higress/plugins/golang-filter/mcp-server/servers/rag/schema"
	"github.com/milvus-io/milvus-sdk-go/v2/client"
	"github.com/milvus-io/milvus-sdk-go/v2/entity"
)

type MilvusVectorStoreProvider struct {
	client     client.Client
	collection string
	dimensions int
}

func NewMilvusVectorStoreProvider(cfg *config.VectorDBConfig, dimensions int) (*MilvusVectorStoreProvider, error) {
	connectParam := client.Config{
		Address: fmt.Sprintf("%s:%d", cfg.Host, cfg.Port),
	}
	connectParam.DBName = cfg.Database

	if cfg.Username != "" && cfg.Password != "" {
		connectParam.Username = cfg.Username
		connectParam.Password = cfg.Password
	}

	milvusClient, err := client.NewClient(context.Background(), connectParam)
	if err != nil {
		return nil, fmt.Errorf("failed to create milvus client: %w", err)
	}

	return &MilvusVectorStoreProvider{
		client:     milvusClient,
		collection: cfg.Collection,
		dimensions: dimensions,
	}, nil
}

func (c *MilvusVectorStoreProvider) ListAllDocs(ctx context.Context, limit int) ([]schema.Document, error) {
	expr := ""

	outputFields := []string{"id", "content", "metadata", "created_at"}

	var queryResult []entity.Column
	var err error

	if limit > 0 {
		queryResult, err = c.client.Query(
			ctx,
			c.collection,
			[]string{}, // partitions
			expr,       // filter condition
			outputFields,
			client.WithLimit(int64(limit)),
		)
	} else {
		queryResult, err = c.client.Query(
			ctx,
			c.collection,
			[]string{}, // partitions
			expr,       // filter condition
			outputFields,
		)
	}

	if err != nil {
		return nil, fmt.Errorf("failed to query all documents: %w", err)
	}

	if len(queryResult) == 0 {
		return []schema.Document{}, nil
	}

	rowCount := queryResult[0].Len()
	documents := make([]schema.Document, 0, rowCount)

	for i := 0; i < rowCount; i++ {
		var (
			id        string
			content   string
			metadata  map[string]interface{}
			createdAt int64
		)

		// Checked type assertions: a column with an unexpected type is skipped instead of panicking
		for _, col := range queryResult {
			switch col.Name() {
			case "id":
				if vc, ok := col.(*entity.ColumnVarChar); ok {
					if v, err := vc.Get(i); err == nil {
						id, _ = v.(string)
					}
				}
			case "content":
				if vc, ok := col.(*entity.ColumnVarChar); ok {
					if v, err := vc.Get(i); err == nil {
						content, _ = v.(string)
					}
				}
			case "metadata":
				if jc, ok := col.(*entity.ColumnJSONBytes); ok {
					if v, err := jc.Get(i); err == nil {
						if b, ok := v.([]byte); ok {
							_ = json.Unmarshal(b, &metadata) // decode errors are ignored; metadata may stay nil
						}
					}
				}
			case "created_at":
				if ic, ok := col.(*entity.ColumnInt64); ok {
					if v, err := ic.Get(i); err == nil {
						createdAt, _ = v.(int64)
					}
				}
			}
		}

		doc := schema.Document{
			ID:        id,
			Content:   content,
			Metadata:  metadata,
			CreatedAt: time.UnixMilli(createdAt),
		}
		documents = append(documents, doc)
	}

	return documents, nil
}

func (c *MilvusVectorStoreProvider) SearchDocs(ctx context.Context, vector []float32, options *schema.SearchOptions) ([]schema.SearchResult, error) {
	topK := 10 // default when options are missing or TopK is not positive
	if options != nil && options.TopK > 0 {
		topK = options.TopK
	}

	// Default HNSW search parameter ef=16; Milvus requires ef >= topK, so raise it when needed
	ef := 16
	if topK > ef {
		ef = topK
	}
	sp, err := entity.NewIndexHNSWSearchParam(ef)
	if err != nil {
		return nil, fmt.Errorf("failed to build search param: %w", err)
	}

	outputFields := []string{"id", "content", "metadata"}
	searchResults, err := c.client.Search(
		ctx,
		c.collection,
		[]string{},   // partition names
		"",           // filter expression
		outputFields, // output fields
		[]entity.Vector{entity.FloatVector(vector)},
		"vector",  // anns_field
		entity.IP, // metric_type
		topK,
		sp,
	)

	if err != nil {
		return nil, fmt.Errorf("failed to search documents: %w", err)
	}

	var results []schema.SearchResult
	for _, result := range searchResults {
		for i := 0; i < result.ResultCount; i++ {
			id, _ := result.IDs.Get(i)
			score := result.Scores[i]

			var content string
			var metadata map[string]interface{}
			for _, field := range result.Fields {
				switch field.Name() {
				case "content":
					if contentCol, ok := field.(*entity.ColumnVarChar); ok {
						if contentVal, err := contentCol.Get(i); err == nil {
							if contentStr, ok := contentVal.(string); ok {
								content = contentStr
							}
						}
					}
				case "metadata":
					if metaCol, ok := field.(*entity.ColumnJSONBytes); ok {
						if metaVal, err := metaCol.Get(i); err == nil {
							if metaBytes, ok := metaVal.([]byte); ok {
								if err := json.Unmarshal(metaBytes, &metadata); err != nil {
									metadata = make(map[string]interface{})
								}
							}
						}
					}
				}
			}

			searchResult := schema.SearchResult{
				Document: schema.Document{
					ID:       fmt.Sprint(id), // works for both VarChar and Int64 primary keys
					Content:  content,
					Metadata: metadata,
				},
				Score: float64(score),
			}
			results = append(results, searchResult)
		}
	}

	return results, nil
}

func (c *MilvusVectorStoreProvider) Close() error {
	if c.client != nil {
		return c.client.Close()
	}
	return nil
}

237
plugins/golang-filter/mcp-server/servers/tool-search/search.go
Normal file
@@ -0,0 +1,237 @@
package tool_search

import (
	"context"
	"fmt"
	"time"

	"github.com/alibaba/higress/plugins/golang-filter/mcp-server/servers/rag/config"
	"github.com/alibaba/higress/plugins/golang-filter/mcp-server/servers/rag/schema"
	"github.com/envoyproxy/envoy/contrib/golang/common/go/api"
)

// SearchService handles tool search operations
type SearchService struct {
	milvusProvider  *MilvusVectorStoreProvider
	config          *config.VectorDBConfig
	tableName       string
	dimensions      int
	maxTools        int // hard-coded maximum number of tools (only meant for unit tests)
	embeddingClient *EmbeddingClient
}

// NewSearchService creates a new SearchService instance.
// It returns nil if the Milvus provider cannot be created, so callers must check the result.
func NewSearchService(host string, port int, database, username, password, tableName string, embeddingClient *EmbeddingClient, dimensions int, maxTools int) *SearchService {
	// Create Milvus configuration
	cfg := &config.VectorDBConfig{
		Provider:   "milvus",
		Host:       host,
		Port:       port,
		Database:   database,
		Collection: tableName,
		Username:   username,
		Password:   password,
	}

	// Create Milvus provider
	provider, err := NewMilvusVectorStoreProvider(cfg, dimensions)
	if err != nil {
		api.LogErrorf("Failed to create Milvus provider: %v", err)
		return nil
	}

	return &SearchService{
		milvusProvider:  provider,
		config:          cfg,
		tableName:       tableName,
		dimensions:      dimensions,
		maxTools:        maxTools, // uses the hard-coded value
		embeddingClient: embeddingClient,
	}
}

// ToolSearchResult represents the result of a tool search
type ToolSearchResult struct {
	Tools []ToolDefinition `json:"tools"`
}

// ToolDefinition represents a tool definition in the search result
type ToolDefinition map[string]interface{}

// SearchTools performs semantic search for tools
func (s *SearchService) SearchTools(ctx context.Context, query string, topK int) (*ToolSearchResult, error) {
	api.LogInfof("Starting tool search for query: '%s', topK: %d", query, topK)

	// Generate vector embedding for the query
	vector, err := s.embeddingClient.GetEmbedding(ctx, query)
	if err != nil {
		api.LogErrorf("Failed to generate embedding for query '%s': %v", query, err)
		return nil, fmt.Errorf("failed to generate embedding: %w", err)
	}

	api.LogInfof("Embedding generated successfully, vector dimension: %d", len(vector))

	// Perform vector search, passing ctx so caller cancellation also stops the Milvus call
	records, err := s.searchToolsInDB(ctx, query, vector, topK)
	if err != nil {
		api.LogErrorf("Failed to search tools: %v", err)
		return nil, fmt.Errorf("failed to search tools: %w", err)
	}

	api.LogInfof("Vector search completed, found %d records", len(records))

	return s.convertRecordsToResult(records), nil
}

// convertRecordsToResult converts database records to tool search result
func (s *SearchService) convertRecordsToResult(records []ToolRecord) *ToolSearchResult {
	api.LogInfof("Converting %d records to tool definitions", len(records))

	tools := make([]ToolDefinition, 0, len(records))
	for i, record := range records {
		var tool ToolDefinition

		// Use metadata if available
		if len(record.Metadata) > 0 {
			tool = record.Metadata
			api.LogDebugf("Successfully parsed metadata for tool %s", record.Name)
		} else {
			api.LogDebugf("No metadata found for tool %s, using basic definition", record.Name)
			// If no metadata, create a basic tool definition
			tool = ToolDefinition{
				"name":        record.Name,
				"description": record.Content,
			}
		}

		// Ensure the name field matches the record name
		tool["name"] = record.Name

		tools = append(tools, tool)

		api.LogDebugf("Tool %d: %s - %s", i+1, tool["name"], record.Content)
	}

	api.LogInfof("Successfully converted %d tools", len(tools))
	return &ToolSearchResult{Tools: tools}
}

// GetAllTools retrieves all available tools
func (s *SearchService) GetAllTools() (*ToolSearchResult, error) {
	api.LogInfo("Retrieving all tools")
	records, err := s.getAllToolsFromDB()
	if err != nil {
		api.LogErrorf("Failed to get all tools: %v", err)
		return nil, fmt.Errorf("failed to get all tools: %w", err)
	}

	api.LogInfof("Found %d tools in database", len(records))

	// Convert records to tool definitions
	tools := make([]ToolDefinition, 0, len(records))
	for _, record := range records {
		var tool ToolDefinition

		// Use metadata if available
		if len(record.Metadata) > 0 {
			tool = record.Metadata
			api.LogDebugf("Successfully parsed metadata for tool %s", record.Name)
		} else {
			api.LogDebugf("No metadata found for tool %s, using basic definition", record.Name)
			// If no metadata, create a basic tool definition
			tool = ToolDefinition{
				"name":        record.Name,
				"description": record.Content,
			}
		}

		// Ensure the name field matches the record name
		tool["name"] = record.Name

		tools = append(tools, tool)
	}

	api.LogInfof("Successfully converted %d tools", len(tools))
	return &ToolSearchResult{Tools: tools}, nil
}

// ToolRecord represents a tool record in the database
type ToolRecord struct {
	ID       string                 `json:"id"`
	Name     string                 `json:"name"`
	Content  string                 `json:"content"`
	Metadata map[string]interface{} `json:"metadata"`
}

func (s *SearchService) searchToolsInDB(ctx context.Context, query string, vector []float32, topK int) ([]ToolRecord, error) {
	api.LogInfof("Performing vector search for query: '%s', topK: %d", query, topK)

	// Bound the Milvus search to 10s; a shorter deadline already set on ctx still applies
	ctx, cancel := context.WithTimeout(ctx, 10*time.Second)
	defer cancel()

	// Perform vector search
	searchOptions := &schema.SearchOptions{
		TopK: topK,
	}

	results, err := s.milvusProvider.SearchDocs(ctx, vector, searchOptions)
	if err != nil {
		api.LogErrorf("Vector search failed: %v", err)
		return nil, fmt.Errorf("failed to perform vector search: %w", err)
	}

	// Convert results to ToolRecords
	var records []ToolRecord
	for _, result := range results {
		doc := result.Document
		tool := ToolRecord{
			ID:       doc.ID,
			Content:  doc.Content,
			Metadata: doc.Metadata,
		}

		if name, ok := doc.Metadata["name"].(string); ok {
			tool.Name = name
		}

		records = append(records, tool)
	}

	api.LogInfof("Vector search completed, found %d results", len(records))
	return records, nil
}

// getAllToolsFromDB retrieves all tools from the database
func (s *SearchService) getAllToolsFromDB() ([]ToolRecord, error) {
	api.LogInfof("Executing GetAllTools query from collection: %s", s.tableName)

	ctx, cancel := context.WithTimeout(context.Background(), 10*time.Second)
	defer cancel()

	// Retrieve all documents, up to maxTools
	docs, err := s.milvusProvider.ListAllDocs(ctx, s.maxTools)
	if err != nil {
		api.LogErrorf("Failed to list documents: %v", err)
		return nil, fmt.Errorf("failed to list documents: %w", err)
	}

	// Convert documents to ToolRecords
	var tools []ToolRecord
	for _, doc := range docs {
		tool := ToolRecord{
			ID:       doc.ID,
			Content:  doc.Content,
			Metadata: doc.Metadata,
		}

		if name, ok := doc.Metadata["name"].(string); ok {
			tool.Name = name
		}

		tools = append(tools, tool)
	}

	api.LogInfof("GetAllTools query completed, found %d tools", len(tools))
	return tools, nil
}
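
The conversion loops in `convertRecordsToResult` and `GetAllTools` are identical. Both assign `record.Metadata` directly to `tool` before setting `tool["name"]`, so they write into the record's own map. The sketch below replaces both loops with a single shared helper that copies the map first. `toToolDefinition` and `toToolDefinitions` are hypothetical names, and the types are redeclared so that the example compiles on its own.

```go
package main

import "fmt"

type ToolDefinition map[string]interface{}

type ToolRecord struct {
	ID       string
	Name     string
	Content  string
	Metadata map[string]interface{}
}

// toToolDefinition converts one record. It copies the metadata so that
// overwriting "name" does not mutate the record's own map.
func toToolDefinition(r ToolRecord) ToolDefinition {
	tool := make(ToolDefinition, len(r.Metadata)+2)
	if len(r.Metadata) > 0 {
		for k, v := range r.Metadata {
			tool[k] = v
		}
	} else {
		// No metadata: fall back to a basic definition.
		tool["description"] = r.Content
	}
	tool["name"] = r.Name
	return tool
}

// toToolDefinitions converts a slice of records, preserving order.
func toToolDefinitions(records []ToolRecord) []ToolDefinition {
	out := make([]ToolDefinition, 0, len(records))
	for _, r := range records {
		out = append(out, toToolDefinition(r))
	}
	return out
}

func main() {
	meta := map[string]interface{}{"name": "weather___get_forecast", "title": "Get Forecast"}
	defs := toToolDefinitions([]ToolRecord{
		{Name: "weather___get_forecast", Metadata: meta},
		{Name: "echo", Content: "Echoes input"},
	})
	fmt.Println(len(defs), defs[1]["description"])
}
```

Both `SearchTools` and `GetAllTools` could then build their results with `&ToolSearchResult{Tools: toToolDefinitions(records)}`. The per-tool debug logging would move into the helper or be dropped.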

196
plugins/golang-filter/mcp-server/servers/tool-search/server.go
Normal file
@@ -0,0 +1,196 @@
package tool_search

import (
	"errors"
	"fmt"

	"github.com/alibaba/higress/plugins/golang-filter/mcp-session/common"
	"github.com/envoyproxy/envoy/contrib/golang/common/go/api"
	"github.com/mark3labs/mcp-go/mcp"
)

const (
	Version = "1.0.0"

	// Default configuration values
	defaultTableName  = "apig_mcp_tools"
	defaultBaseURL    = "https://dashscope.aliyuncs.com/compatible-mode/v1"
	defaultModel      = "text-embedding-v4"
	defaultDimensions = 1024
	// Maximum number of tools is hard-coded to 1000 (only meant for unit tests)
	fixedMaxTools = 1000
)

func init() {
	common.GlobalRegistry.RegisterServer("tool-search", &ToolSearchConfig{})
}

type VectorConfig struct {
	Type         string  `json:"type"`
	VectorWeight float64 `json:"vectorWeight"`
	TableName    string  `json:"tableName"`
	Host         string  `json:"host"`
	Port         int     `json:"port"`
	Database     string  `json:"database"`
	Username     string  `json:"username"`
	Password     string  `json:"password"`
}

type EmbeddingConfig struct {
	APIKey     string `json:"apiKey"`
	BaseURL    string `json:"baseURL"`
	Model      string `json:"model"`
	Dimensions int    `json:"dimensions"`
}

type ToolSearchConfig struct {
	Vector      VectorConfig    `json:"vector"`
	Embedding   EmbeddingConfig `json:"embedding"`
	description string
}

func (c *ToolSearchConfig) ParseConfig(config map[string]any) error {
	// Parse vector configuration
	vectorConfig, ok := config["vector"].(map[string]any)
	if !ok {
		return errors.New("missing vector configuration")
	}

	if err := c.parseVectorConfig(vectorConfig); err != nil {
		return fmt.Errorf("failed to parse vector config: %w", err)
	}

	// Parse embedding configuration
	embeddingConfig, ok := config["embedding"].(map[string]any)
	if !ok {
		return errors.New("missing embedding configuration")
	}

	if err := c.parseEmbeddingConfig(embeddingConfig); err != nil {
		return fmt.Errorf("failed to parse embedding config: %w", err)
	}

	// Optional description
	if description, ok := config["description"].(string); ok {
		c.description = description
	} else {
		c.description = "Tool search server for semantic similarity search"
	}

	// Log only non-secret fields; the raw config contains apiKey and password
	api.LogDebugf("ToolSearchConfig ParseConfig: vector=%s:%d/%s table=%s, embedding model=%s dimensions=%d",
		c.Vector.Host, c.Vector.Port, c.Vector.Database, c.Vector.TableName, c.Embedding.Model, c.Embedding.Dimensions)
	return nil
}

func (c *ToolSearchConfig) parseVectorConfig(config map[string]any) error {
	if vectorType, ok := config["type"].(string); ok {
		c.Vector.Type = vectorType
	} else {
		return errors.New("missing vector.type")
	}

	if c.Vector.Type != "milvus" {
		return fmt.Errorf("unsupported vector.type: %s, only 'milvus' is supported", c.Vector.Type)
	}

	if host, ok := config["host"].(string); ok {
		c.Vector.Host = host
	} else {
		return errors.New("missing vector.host")
	}

	if port, ok := config["port"].(float64); ok {
		c.Vector.Port = int(port)
	} else if port, ok := config["port"].(int); ok {
		c.Vector.Port = port
	} else {
		return errors.New("missing vector.port")
	}

	if database, ok := config["database"].(string); ok {
		c.Vector.Database = database
	} else {
		c.Vector.Database = "default" // default database
	}

	if tableName, ok := config["tableName"].(string); ok {
		c.Vector.TableName = tableName
	} else {
		c.Vector.TableName = defaultTableName
	}

	if username, ok := config["username"].(string); ok {
		c.Vector.Username = username
	}

	if password, ok := config["password"].(string); ok {
		c.Vector.Password = password
	}

	// maxTools is intentionally not parsed here; fixedMaxTools is used instead

	return nil
}

func (c *ToolSearchConfig) parseEmbeddingConfig(config map[string]any) error {
	// Parse API key (required)
	if apiKey, ok := config["apiKey"].(string); ok {
		c.Embedding.APIKey = apiKey
	} else {
		return errors.New("missing embedding.apiKey")
	}

	// Parse optional fields with defaults
	if baseURL, ok := config["baseURL"].(string); ok {
		c.Embedding.BaseURL = baseURL
	} else {
		c.Embedding.BaseURL = defaultBaseURL
	}

	if model, ok := config["model"].(string); ok {
		c.Embedding.Model = model
	} else {
		c.Embedding.Model = defaultModel
	}

	if dimensions, ok := config["dimensions"].(float64); ok {
		c.Embedding.Dimensions = int(dimensions)
	} else if dimensions, ok := config["dimensions"].(int); ok {
		c.Embedding.Dimensions = dimensions
	} else {
		c.Embedding.Dimensions = defaultDimensions
	}

	return nil
}

func (c *ToolSearchConfig) NewServer(serverName string) (*common.MCPServer, error) {
	mcpServer := common.NewMCPServer(
		serverName,
		Version,
		common.WithInstructions(c.description),
	)

	// Create embedding client
	embeddingClient := NewEmbeddingClient(c.Embedding.APIKey, c.Embedding.BaseURL, c.Embedding.Model, c.Embedding.Dimensions)

	// Create search service using the hard-coded fixedMaxTools value
	searchService := NewSearchService(
		c.Vector.Host,
		c.Vector.Port,
		c.Vector.Database,
		c.Vector.Username,
		c.Vector.Password,
		c.Vector.TableName,